
Preparing a MySQL or MariaDB Server for Production – Part Two

In the previous blog, we covered some tips and tricks to prepare a MySQL server for production usage from a system administrator's perspective. This blog post continues from there.

Use a Database Backup Tool

Every backup tool has its own advantages and disadvantages. For example, Percona Xtrabackup (or MariaDB Backup for MariaDB) can perform a physical hot backup without locking the databases, but the backup can only be restored to the same version on another instance. mysqldump, on the other hand, is compatible across MySQL major versions and much simpler for partial backups, although restoring it is relatively slow compared to Percona Xtrabackup on big databases. MySQL 5.7 also introduced mysqlpump, which is similar to mysqldump but adds parallel processing to speed up the dump.

Don't forget to configure all of these backup tools on your MySQL server, as they are freely available and critical for data recovery. Since mysqldump and mysqlpump are already included in MySQL 5.7 and later, we only need to install Percona Xtrabackup (or MariaDB Backup for MariaDB), which requires some preparation, as shown in the following steps:

Step One

Make sure the backup tool and its dependencies are installed:

$ yum install -y epel-release

$ yum install -y https://repo.percona.com/yum/percona-release-latest.noarch.rpm

$ yum install -y socat pv percona-xtrabackup

For MariaDB servers, use MariaDB Backup instead:

$ yum install -y socat pv MariaDB-backup

Step Two

Create the user ‘xtrabackup’ on the master if it doesn’t exist:

mysql> CREATE USER 'xtrabackup'@'localhost' IDENTIFIED BY 'Km4z9^sT2X';

mysql> GRANT RELOAD, LOCK TABLES, PROCESS, REPLICATION CLIENT ON *.* TO 'xtrabackup'@'localhost';

Step Three

Create another user called ‘mysqldump’ on the master if it doesn’t exist. This user will be used by both ‘mysqldump’ and ‘mysqlpump’:

mysql> CREATE USER 'mysqldump'@'localhost' IDENTIFIED BY 'Km4z9^sT2X';

mysql> GRANT SELECT, SHOW VIEW, EVENT, TRIGGER, LOCK TABLES, RELOAD, REPLICATION CLIENT ON *.* TO 'mysqldump'@'localhost';

Step Four

Add the backup users’ credentials to the MySQL configuration file under the [xtrabackup], [mysqldump] and [mysqlpump] option groups:

$ cat /etc/my.cnf

...

[xtrabackup]

user=xtrabackup

password='Km4z9^sT2X'

[mysqldump]

user=mysqldump

password='Km4z9^sT2X'

[mysqlpump]

user=mysqldump

password='Km4z9^sT2X'

With these lines in place, we don’t need to specify the username and password in the backup commands, since each backup tool automatically loads its option group from the main configuration file.
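To confirm which credentials a tool will actually pick up, MySQL ships the my_print_defaults utility (for example, `my_print_defaults xtrabackup`). As a rough stand-in for a quick look at a single file, the sketch below prints the options of one option group in the same `--key=value` form. The function name print_cnf_group is hypothetical, and unlike my_print_defaults it reads only the file you give it and ignores !include/!includedir directives.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of MySQL): print the options from one
# [group] of a my.cnf-style file as "--key=value", the format that
# MySQL's my_print_defaults utility uses. Unlike my_print_defaults, it
# reads a single file and ignores !include/!includedir directives.
print_cnf_group() {
  local cnf_file="$1" group="$2"
  awk -v want="[$group]" -v q="'" '
    # Strip leading and trailing whitespace (and a stray CR).
    function trim(s) { sub(/^[ \t]+/, "", s); sub(/[ \t\r]+$/, "", s); return s }
    {
      line = trim($0)
      if (line == "" || line ~ /^[#;]/) next            # blank line or comment
      if (line ~ /^\[.*\]$/) { in_group = (line == want); next }
      if (!in_group) next
      eq = index(line, "=")
      if (eq == 0) { print "--" line; next }             # flag without a value
      key = trim(substr(line, 1, eq - 1))
      val = trim(substr(line, eq + 1))
      # Remove one pair of matching surrounding quotes.
      first = substr(val, 1, 1); last = substr(val, length(val), 1)
      if (length(val) >= 2 && first == last && (first == "\"" || first == q))
        val = substr(val, 2, length(val) - 2)
      print "--" key "=" val
    }' "$cnf_file"
}
```

For example, `print_cnf_group /etc/my.cnf xtrabackup` should print the user and password lines from the [xtrabackup] group above. Keep in mind that the output contains the password in clear text.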

Make sure the backup tools are properly tested beforehand. Xtrabackup supports streaming backups over the network, so test this first to make sure the source and destination servers can communicate. On the destination server, run the following command so that socat listens on port 9999, ready to accept the incoming stream:

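$ socat -u tcp-listen:9999,reuseaddr stdout 2>/tmp/netcat.log | xbstream -x -C /var/lib/mysql

This extracts the incoming backup straight into /var/lib/mysql, so on the destination that directory should be empty and MySQL should be stopped. Then, create a backup on the source server and stream it to port 9999 on the destination server: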

$ innobackupex --socket=/var/lib/mysql/mysql.sock --stream=xbstream /var/lib/mysql/ | socat - TCP4:192.168.0.202:9999

You should get a continuous stream of output after executing the backup command. Wait until you see the ‘Completed OK’ line indicating a successful backup.

With pv, we can throttle the bandwidth usage or watch the progress of the data piped through it. Without throttling, the streaming process will commonly saturate the network, which can disrupt traffic between other servers on the same network segment. With pv, we can throttle the stream before passing it to a network tool such as socat or netcat. In the following example, the backup stream is throttled to around 80 MB/s:

$ innobackupex --slave-info --socket=/var/lib/mysql/mysql.sock --stream=xbstream /var/lib/mysql/ | pv -q -L 80m | socat - TCP4:192.168.0.202:9999

Streaming a backup is commonly used to stage a slave or to store the backup remotely on another server.
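Since pv caps the stream at a fixed rate, the transfer time has a simple lower bound: data size divided by the limit. pv reads the "m" in `-L 80m` as mebibytes (1024 × 1024 bytes), so the sketch below works in GiB and MiB/s. The helper names stream_seconds and as_hms are hypothetical, and the backup size is assumed to be roughly the size of the data directory.

```shell
#!/usr/bin/env bash
# Rough lower bound on how long a pv-throttled stream takes: size / rate.
# pv treats "-L 80m" as 80 MiB/s, so sizes are in GiB and rates in MiB/s.
# stream_seconds and as_hms are hypothetical helper names.
stream_seconds() {
  local size_gib="$1" limit_mib_per_s="$2"
  local size_mib=$(( size_gib * 1024 ))
  # Integer ceiling division: round up to whole seconds.
  echo $(( (size_mib + limit_mib_per_s - 1) / limit_mib_per_s ))
}

# Pretty-print a number of seconds as h:mm:ss.
as_hms() {
  local s="$1"
  printf '%d:%02d:%02d\n' $(( s / 3600 )) $(( s % 3600 / 60 )) $(( s % 60 ))
}
```

For instance, `as_hms "$(stream_seconds 100 80)"` prints 0:21:20 for a 100 GiB backup at 80 MiB/s. The real transfer can take longer if the disks or innobackupex itself cannot keep up with the limit.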

For mysqldump and mysqlpump, we can test with the following commands:

$ mysqldump --set-gtid-purged=OFF --all-databases

$ mysqlpump --set-gtid-purged=OFF --all-databases

Make sure the output consists of SQL statements rather than error messages.
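Rather than eyeballing the output, a test dump can be written to a file and checked. With mysqldump's default comment output, a finished dump normally ends with a "-- Dump completed" line, so a missing trailer suggests the dump stopped part-way. The function name dump_looks_complete and the file path in the usage line are hypothetical, and the check applies to mysqldump only, not mysqlpump.

```shell
#!/usr/bin/env bash
# Hypothetical sanity check for a mysqldump output file. With mysqldump's
# default --comments behaviour, the last line of a finished dump is a
# "-- Dump completed" trailer; if it is missing, the dump was likely cut short.
# (mysqldump only; mysqlpump output is not covered.)
dump_looks_complete() {
  local dump_file="$1"
  [[ -s "$dump_file" ]] || return 1            # missing or empty file
  tail -n 1 "$dump_file" | grep -q '^-- Dump completed'
}
```

Usage: `mysqldump --set-gtid-purged=OFF --all-databases > /tmp/test-dump.sql && dump_looks_complete /tmp/test-dump.sql && echo "mysqldump OK"`. The `&&` chain also requires mysqldump itself to exit with status 0.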

Stress Test the Server

Stress testing the database server is important to understand the maximum capacity we can expect from that particular server. This becomes useful later on, when you approach thresholds or bottlenecks. There are many benchmarking tools available, such as mysqlslap, DBT2 and sysbench.

In this example, we use sysbench to measure the server’s peak performance and saturation level, and to observe the components’ temperature under a heavy database workload. This gives you a baseline understanding of how capable the server is, and helps you anticipate the workload it can handle for our application in production.

To install sysbench, you can compile it from source or install the package from the Percona repository:

$ yum install -y https://repo.percona.com/yum/percona-release-latest.noarch.rpm

$ yum install -y sysbench

Create the database schema and user on the MySQL server:

mysql> CREATE DATABASE sbtest;

mysql> CREATE USER 'sbtest'@'localhost' IDENTIFIED BY 'sysbenchP4ss';

mysql> GRANT ALL PRIVILEGES ON sbtest.* TO 'sbtest'@'localhost';

Generate the test data:

$ sysbench \
  /usr/share/sysbench/oltp_common.lua \
  --db-driver=mysql \
  --mysql-host=localhost \
  --mysql-user=sbtest \
  --mysql-password=sysbenchP4ss \
  --tables=50 \
  --table-size=100000 \
  prepare

Then run the benchmark for 1 hour (3600 seconds):

$ sysbench \
  /usr/share/sysbench/oltp_read_write.lua \
  --report-interval=2 \
  --threads=64 \
  --max-requests=0 \
  --db-driver=mysql \
  --time=3600 \
  --db-ps-mode=disable \
  --mysql-host=localhost \
  --mysql-user=sbtest \
  --mysql-password=sysbenchP4ss \
  --tables=50 \
  --table-size=100000 \
  run

While the test is running, use iostat (available in the sysstat package) in another terminal to monitor the disk utilization, bandwidth, IOPS and I/O wait:

$ yum install -y sysstat

$ iostat -x 60

avg-cpu:  %user   %nice %system %iowait  %steal   %idle
          40.55    0.00   55.27    4.18    0.00    0.00

Device:         rrqm/s   wrqm/s     r/s     w/s    rkB/s    wkB/s avgrq-sz avgqu-sz   await r_await w_await  svctm  %util
sda               0.19     6.18 1236.23  816.92 61283.83 14112.44    73.44     4.00    1.96    2.83    0.65   0.34  69.29

A new report like the above is printed every 60 seconds (the very first report shows averages since boot, so ignore it). Wait until the test finishes and take the average of r/s (reads per second), w/s (writes per second), %iowait, %util, rkB/s and wkB/s (bandwidth). If you see relatively low utilization of disk, CPU, RAM or network, you probably need to increase the "--threads" value further so that the test pushes all resources to their limit.
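Averaging a full hour of reports by hand is tedious, so here is a minimal sketch that does it with awk, assuming the `iostat -x 60` output was saved to a file. It locates columns by their header names (so both the older "Device:" layout shown above and newer sysstat layouts should work), skips the first since-boot report, and also reports IOPS as r/s + w/s. The function name iostat_avg and the log path in the usage line are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: average the per-device columns of `iostat -x <interval>`
# output for one device, plus %iowait from the avg-cpu section.
# Columns are found by header name; the first report (since boot) is skipped.
iostat_avg() {
  local device="$1"
  awk -v dev="$device" '
    /^avg-cpu:/ {
      report++
      # The header starts with "avg-cpu:" but the value line does not,
      # so value column = header column - 1.
      for (i = 2; i <= NF; i++) cpu_col[$i] = i - 1
      if ((getline) > 0 && report > 1) { iowait += $(cpu_col["%iowait"]); cpu_n++ }
      next
    }
    /^Device/ { for (i = 2; i <= NF; i++) col[$i] = i; next }
    $1 == dev && report > 1 {
      n++
      rs += $(col["r/s"]); ws += $(col["w/s"])
      rkb += $(col["rkB/s"]); wkb += $(col["wkB/s"]); util += $(col["%util"])
    }
    END {
      if (n == 0) { print "no samples for " dev > "/dev/stderr"; exit 1 }
      printf "r/s=%.2f w/s=%.2f iops=%.2f rkB/s=%.2f wkB/s=%.2f %%util=%.2f %%iowait=%.2f\n",
        rs / n, ws / n, (rs + ws) / n, rkb / n, wkb / n, util / n, (cpu_n ? iowait / cpu_n : 0)
    }'
}
```

Usage: run `iostat -x 60 > /tmp/iostat.log` alongside the benchmark, then `iostat_avg sda < /tmp/iostat.log` once sysbench finishes.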

Consider measuring the following:

- Queries per second = sysbench summary once the test completes, under SQL statistics -> queries -> per sec.

- Query latency = sysbench summary once the test completes, under Latency (ms) -> 95th percentile.

- Disk IOPS = average of r/s + average of w/s

- Disk utilization = average of %util

- Disk bandwidth (read/write) = average of rkB/s and average of wkB/s, respectively

- Disk I/O wait = average of %iowait

- Average server load = average of the load average reported by the top command

- MySQL CPU usage = average CPU utilization of the mysqld process as reported by the top command
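The first two figures in the list come from the summary sysbench prints when the run ends. A minimal sketch for extracting them, assuming the run's output was saved to a file and follows the sysbench 1.0 summary layout (a "queries:" line ending in "(N per sec.)" and a "95th percentile:" line under "Latency (ms):"); other sysbench versions may format these lines differently, and the function name sysbench_summary is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: pull queries per second and the 95th percentile
# latency out of a sysbench 1.0-style end-of-run summary read from stdin.
sysbench_summary() {
  awk '
    # "    queries:   <total>  (<qps> per sec.)" -> the number right after "("
    /^[ \t]*queries:/ { split($0, a, "[(]"); split(a[2], b, " "); qps = b[1] }
    # "         95th percentile:   <ms>" -> last field
    /95th percentile:/ { p95 = $NF }
    END {
      if (qps == "" || p95 == "") { print "summary not found" > "/dev/stderr"; exit 1 }
      printf "qps=%s p95_ms=%s\n", qps, p95
    }'
}
```

Usage: add `| tee /tmp/sysbench-run.log` to the run command above, then `sysbench_summary < /tmp/sysbench-run.log`. The "queries performed:" header line is deliberately not matched, because the pattern requires a colon directly after "queries".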

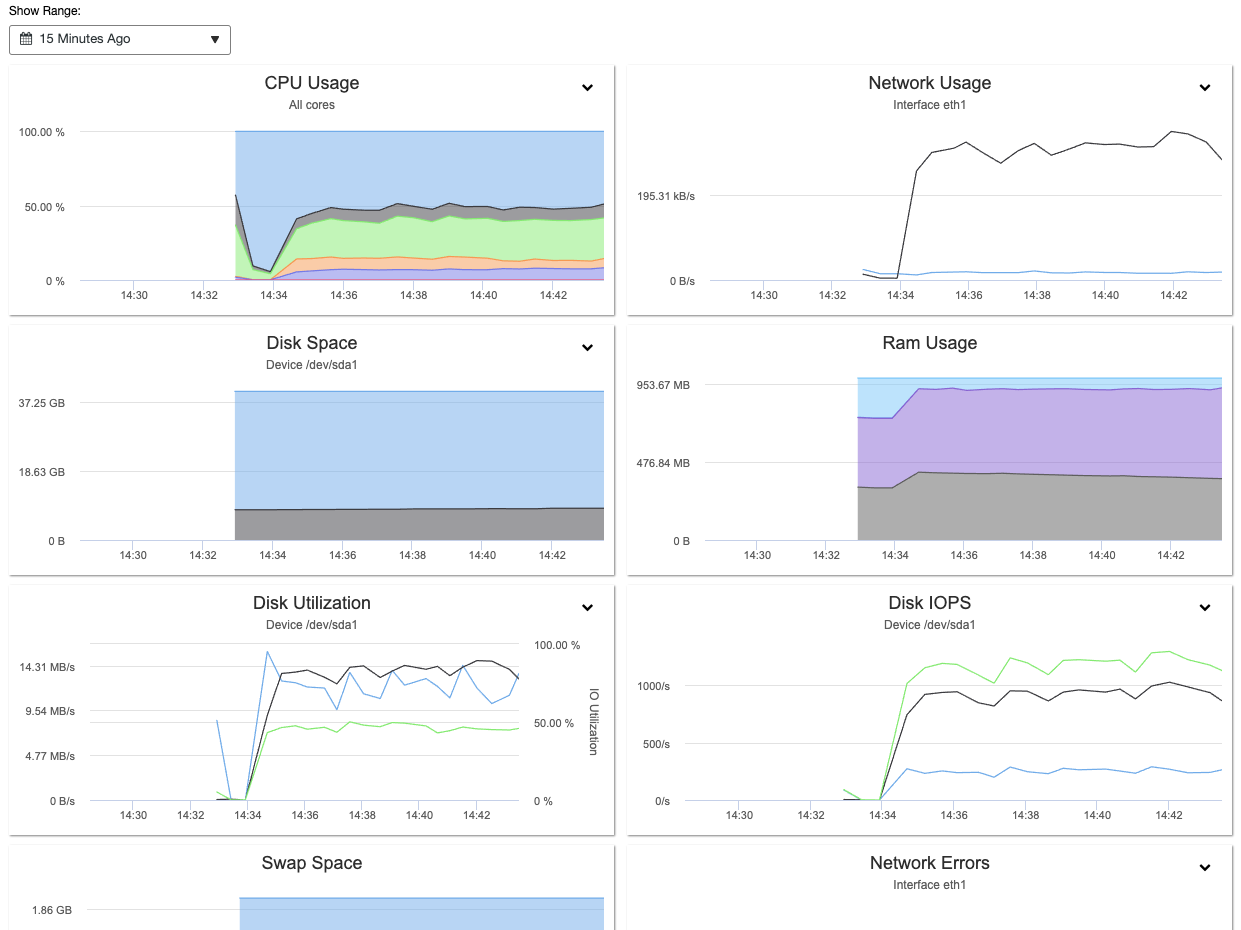

With ClusterControl, you can easily observe and get the above information via Nodes Overview panel, as shown in the following screenshot:

Furthermore, the information gathered during the stress test can be used to tune MySQL and InnoDB variables accordingly, such as innodb_buffer_pool_size, innodb_io_capacity, innodb_io_capacity_max, innodb_write_io_threads, innodb_read_io_threads and max_connections.

To learn more about MySQL performance benchmark using sysbench, check out this blog post, How to Benchmark Performance of MySQL & MariaDB Using SysBench.

Use an Online Schema Change Tool

Schema changes are inevitable in relational databases. As the application grows and becomes more demanding over time, the database structure will certainly need to change. Some DDL operations block concurrent DML statements while they run (often because they rebuild the table), which can hurt your database availability if you are changing the structure of a huge table. To see the list of blocking DDL operations, check out this MySQL documentation page and look for operations that have “Permits Concurrent DML” = No.

If you can’t afford downtime on the production servers when performing schema changes, it’s a good idea to configure an online schema change tool at an early stage. In this example, we install and configure gh-ost, an online schema change tool built by GitHub. gh-ost uses the binary log stream to capture table changes and asynchronously applies them to the ghost table.

To install gh-ost on a CentOS box, follow these steps:

Step One

Download the gh-ost package from the project’s GitHub releases page (v1.0.48 at the time of writing):

$ wget https://github.com/github/gh-ost/releases/download/v1.0.48/gh-ost-1.0.48-1.x86_64.rpm

Step Two

Install the package:

$ yum localinstall gh-ost-1.0.48-1.x86_64.rpm

Step Three

Create a database user for gh-ost if it does not exist, and grant it the proper privileges:

mysql> CREATE USER 'gh-ost'@'{host}' IDENTIFIED BY 'ghostP455';

mysql> GRANT ALTER, CREATE, DELETE, DROP, INDEX, INSERT, LOCK TABLES, SELECT, TRIGGER, UPDATE ON {db_name}.* TO 'gh-ost'@'{host}';

mysql> GRANT SUPER, REPLICATION SLAVE ON *.* TO 'gh-ost'@'{host}';

** Replace {host} and {db_name} with the appropriate values. Ideally, {host} is one of the slave hosts that will perform the online schema change. Refer to the gh-ost documentation for details.

Step Four

Create a gh-ost configuration file under /root/.gh-ost.cnf to store the username and password:

[client]

user=gh-ost

password=ghostP455

Similarly, you can have Percona Toolkit’s pt-online-schema-change (pt-osc) configured on the database server. The idea is to make sure this tool is ready on the database server that is likely to run such operations in the future.
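Because /root/.gh-ost.cnf holds a plain-text password, it is worth creating it so that only its owner can read it. A minimal sketch, assuming a bash shell run as root; the function name write_ghost_cnf is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create the gh-ost credentials file ([client] group)
# so that only its owner can read the plain-text password.
write_ghost_cnf() {
  local cnf_path="$1" db_user="$2" db_pass="$3"
  (
    umask 077                                   # a newly created file gets mode 600
    printf '[client]\nuser=%s\npassword=%s\n' "$db_user" "$db_pass" > "$cnf_path"
  )
  chmod 600 "$cnf_path"                         # also tighten a pre-existing file
}
```

For example, `write_ghost_cnf /root/.gh-ost.cnf gh-ost 'ghostP455'`, after which the file is passed to gh-ost with `--conf=/root/.gh-ost.cnf`. Without `--execute`, gh-ost runs in noop mode (it performs its checks and exits), which is a reasonable first test of the credentials and privileges. The umask is set inside a subshell so the caller’s umask is left unchanged.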

Utilize the Percona Toolkit

Percona Toolkit is a collection of advanced open source command-line tools, developed by Percona, that are engineered to perform a variety of MySQL, MongoDB and PostgreSQL server and system tasks that are too difficult or complex to perform manually. These tools have become the ultimate saviour, used by DBAs around the world to address or solve technical issues found in MySQL and MariaDB servers.

To install Percona Toolkit, simply run the following command:

$ yum install https://repo.percona.com/yum/percona-release-latest.noarch.rpm

$ yum install percona-toolkit

There are over 30 tools in this package, some of which are specifically designed for MongoDB and PostgreSQL. Some of the most popular tools for MySQL troubleshooting and performance tuning are pt-stalk, pt-mysql-summary, pt-query-digest, pt-table-checksum, pt-table-sync and pt-archiver. This toolkit can help DBAs verify MySQL replication integrity by checking master and replica data consistency, efficiently archive rows, find duplicate indexes, analyze MySQL queries from logs and tcpdump, and much more.

The following example shows the output of one of the tools, pt-table-checksum, which performs an online replication consistency check by executing checksum queries on the master; these queries produce different results on replicas that are inconsistent with the master:

$ pt-table-checksum --no-check-binlog-format --replicate-check-only

Checking if all tables can be checksummed ...
Starting checksum ...
Differences on mysql2.local
TABLE             CHUNK CNT_DIFF CRC_DIFF CHUNK_INDEX LOWER_BOUNDARY UPPER_BOUNDARY
mysql.proc            1        0        1
mysql.tables_priv     1        0        1
mysql.user            1        1        1

The above output shows that 3 tables on the slave (mysql2.local) are inconsistent with the master. We can then use the pt-table-sync tool to patch up the missing data from the master, or simply resync the slave.
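When several replicas or many tables are involved, it helps to reduce that report to a plain list before deciding what to sync. A minimal sketch, assuming the output layout shown above (a "Differences on <host>" line, a TABLE header, one row per differing chunk, and a blank line ending each block); the function name checksum_diff_tables is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: turn pt-table-checksum --replicate-check-only output
# (read from stdin) into "replica table" pairs, one per inconsistent table.
checksum_diff_tables() {
  awk '
    /^Differences on / { host = $3; next }      # start of a replica block
    NF == 0            { host = ""; next }      # blank line ends the block
    /^TABLE[ \t]/      { next }                 # column header
    # Data rows start with db.table; a table with several bad chunks is listed once.
    host != "" && $1 ~ /\./ && !seen[host SUBSEP $1]++ { print host, $1 }
  '
}
```

Usage: `pt-table-checksum --no-check-binlog-format --replicate-check-only | checksum_diff_tables`. Before letting pt-table-sync change anything, its `--print` option shows the statements it would run instead of executing them.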

Lock Down the Server

Finally, after the configuration and preparation stage is complete, we can isolate the database node from the public network and restrict server access to known hosts and networks. You can use a firewall (iptables, firewalld, ufw), security groups, hosts.allow and/or hosts.deny, or simply disable the internet-facing network interface if the server has more than one.

For iptables, it’s important to specify a comment for every rule using the ‘-m comment --comment’ flag:

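$ iptables -A INPUT -i lo -m comment --comment 'Allow loopback traffic' -j ACCEPT

$ iptables -A INPUT -m conntrack --ctstate ESTABLISHED,RELATED -m comment --comment 'Allow replies to outgoing connections' -j ACCEPT

$ iptables -A INPUT -p tcp -s 192.168.0.0/24 --dport 22 -m comment --comment 'Allow local net to SSH port' -j ACCEPT

$ iptables -A INPUT -p tcp -s 192.168.0.0/24 --dport 3306 -m comment --comment 'Allow local net to MySQL port' -j ACCEPT

$ iptables -A INPUT -p tcp -s 192.168.0.0/24 --dport 9999 -m comment --comment 'Allow local net to backup streaming port' -j ACCEPT

$ iptables -A INPUT -p tcp -s 0.0.0.0/0 -m comment --comment 'Drop everything apart from the above' -j DROP

The first two rules keep loopback traffic and replies to connections initiated by the server itself (for example, a replica’s connection to its master) working once the final DROP rule is in place.

Ubuntu’s Uncomplicated Firewall (ufw) already accepts loopback traffic and replies to established connections by default, so we only need to define the default policies first and then create similar rules for MySQL/MariaDB: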

$ sudo ufw default deny incoming

$ sudo ufw default allow outgoing

$ sudo ufw allow from 192.168.0.0/24 to any port 22 comment 'Allow local net to SSH port'

$ sudo ufw allow from 192.168.0.0/24 to any port 3306 comment 'Allow local net to MySQL port'

$ sudo ufw allow from 192.168.0.0/24 to any port 9999 comment 'Allow local net to backup streaming port'

Enable the firewall:

$ sudo ufw enable

Then, verify that the rules are loaded correctly:

$ sudo ufw status verbose

Status: active
Logging: on (low)
Default: deny (incoming), allow (outgoing), disabled (routed)
New profiles: skip

To                         Action      From
--                         ------      ----
22                         ALLOW IN    192.168.0.0/24             # Allow local net to SSH port
3306                       ALLOW IN    192.168.0.0/24             # Allow local net to MySQL port
9999                       ALLOW IN    192.168.0.0/24             # Allow local net to backup streaming port

Again, it’s very important to specify comments on every rule to help us understand the rule better.
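Since rules tend to accumulate over time, a periodic check for iptables rules that lack a comment can help keep the rule set self-documenting. A minimal sketch, assuming `iptables -S` output (which lists appended rules as "-A ..." lines and shows comments as `--comment "..."`); the function name uncommented_rules is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical audit helper: read `iptables -S` output on stdin and print
# every appended rule (-A) that has no --comment. Policy (-P) and chain (-N)
# lines are ignored. Exit status is 1 if any uncommented rule was found.
uncommented_rules() {
  awk '/^-A / && index($0, "--comment") == 0 { print; found = 1 }
       END { exit (found ? 1 : 0) }'
}
```

Usage: `sudo iptables -S INPUT | uncommented_rules || echo "some rules have no comment"`.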

For remote database access restriction, we can also use a VPN server, as shown in this blog post, Using OpenVPN to Secure Access to Your Database Cluster in the Cloud.

Conclusion

Preparing a production server is obviously not an easy task, as this blog series has shown. If you are worried about getting it wrong, why not use ClusterControl to deploy your database cluster? ClusterControl has a very good track record in database deployment and has enabled more than 70,000 MySQL and MariaDB deployments across all environments to date.