blog

How to Deploy the Open edX MySQL Database for High Availability

Open edX is a platform for online educational activities. Given the situation the world is in, all such platforms are encountering higher loads and their importance has significantly increased. Those are not just the “helper” platforms but they often become the main way in which educational activities are performed. This leads to the higher requirements regarding the load they can handle or the availability of the platform.

Open edX is a complex product that consists of multiple elements. One of them is the MySQL database. In this short blog we would like to discuss how you can improve the high availability of the Open edX platform.

Obviously, the single MySQL database is a single point of failure and, as such, it is not an approach suitable for the production deployments. There are several ways in which you can improve the availability of MySQL database.

For starters, you can run the master – slave setup using asynchronous or semi-synchronous replication. The advantage of it is that, when the master is unavailable, you can promote one of the slaves and proceed with the operation. The main downside of such a setup is that the failover has to be performed either manually, which increases the downtime or you have to use some external software (for example ClusterControl) but then again it still may take a bit of time. Finally, any kind of asynchronous or semi-synchronous replication is subject to replication lag. This may seriously impact the read-after-write scenarios in which the application executes a write on the master and then immediately attempts to read that data from the slave.

Another approach would be to use a Galera Cluster to store the data from Open edX platform. We can start with three node clusters – such clusters can automatically handle the failure of a single node. The remaining two nodes will continue to work and be responsive for queries coming from the application. Another advantage of Galera is that, even though it is “virtually” synchronous (which means pretty much that it is almost synchronous), there is a way to enforce causality checks and force clusters into the synchronous mode (even if you pay for it with reduced performance).

Both scenarios would require some sort of a load balancer in front of them, which would handle the traffic and redirect it to a proper destination.

Let’s see how ClusterControl can help you to deploy a Galera Cluster with a set of load balancers that you can use for your Open edX platform.

Deploying MariaDB Cluster

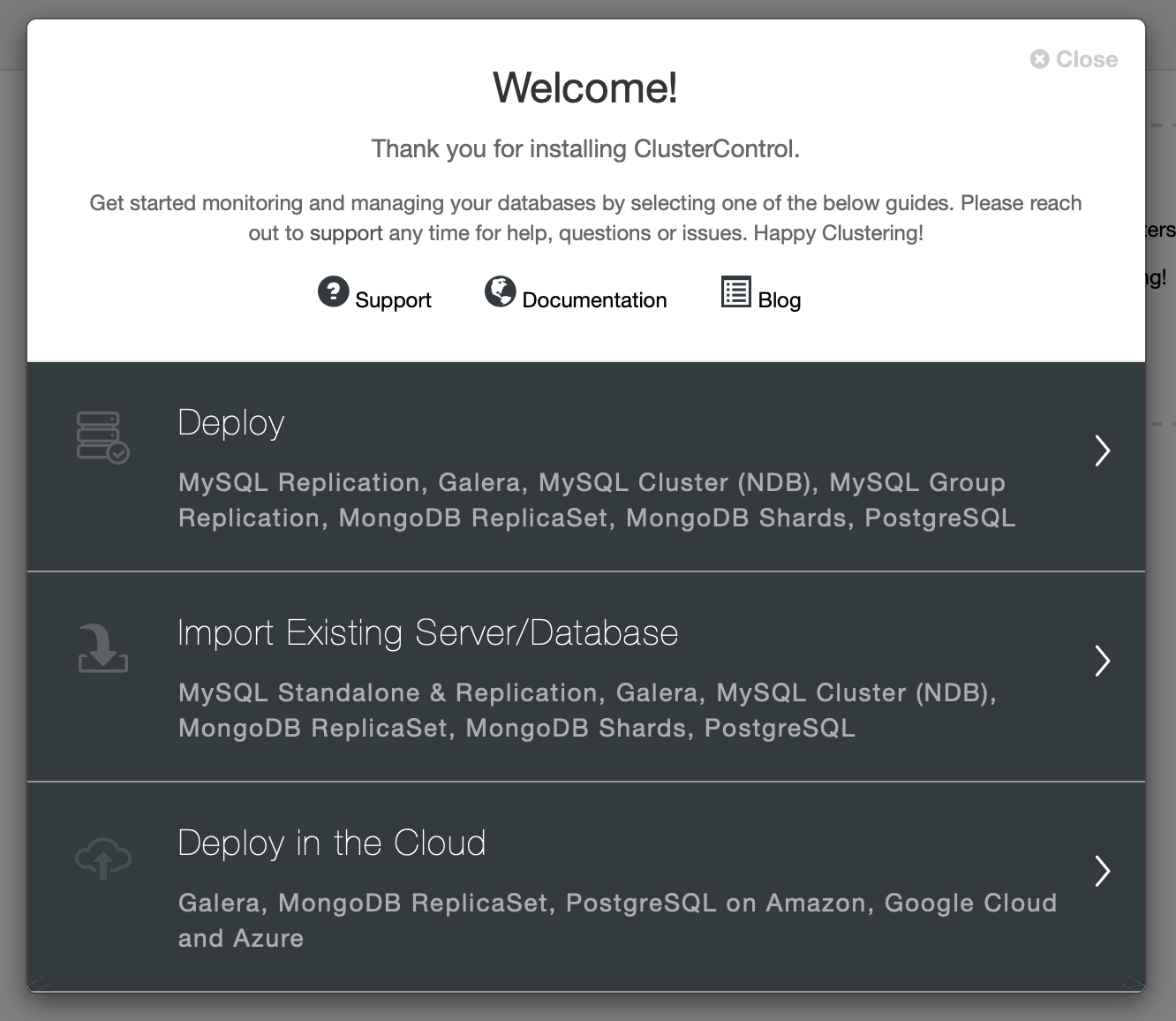

This time we’ll try to use MariaDB Cluster as our backend. Again, the first step is the same, we need to pick “Deploy” from the wizard:

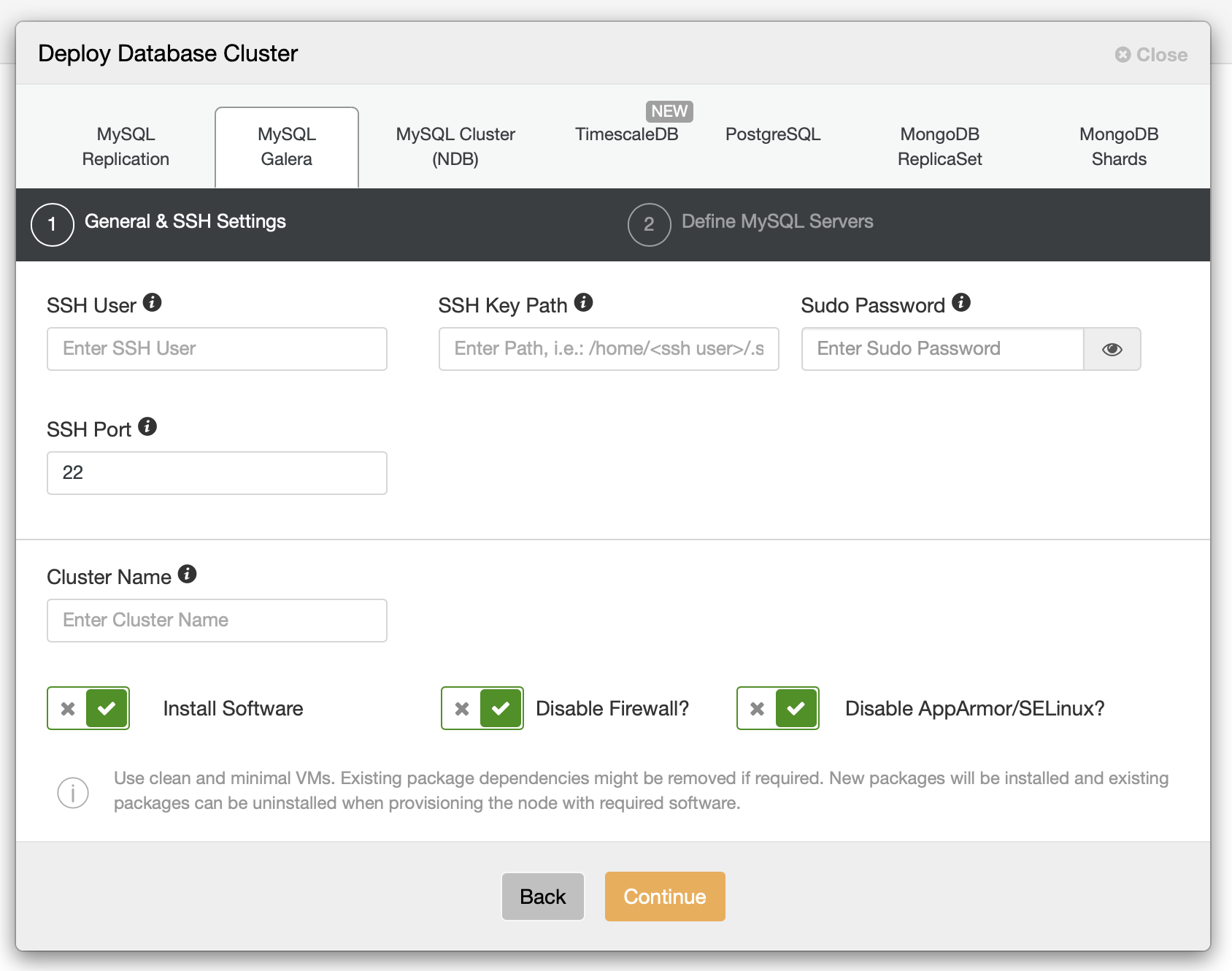

Once you do that, we have to define SSH connectivity, passwordless, key-based SSH access is a requirement for ClusterControl, otherwise it won’t be able to manage database infrastructure.

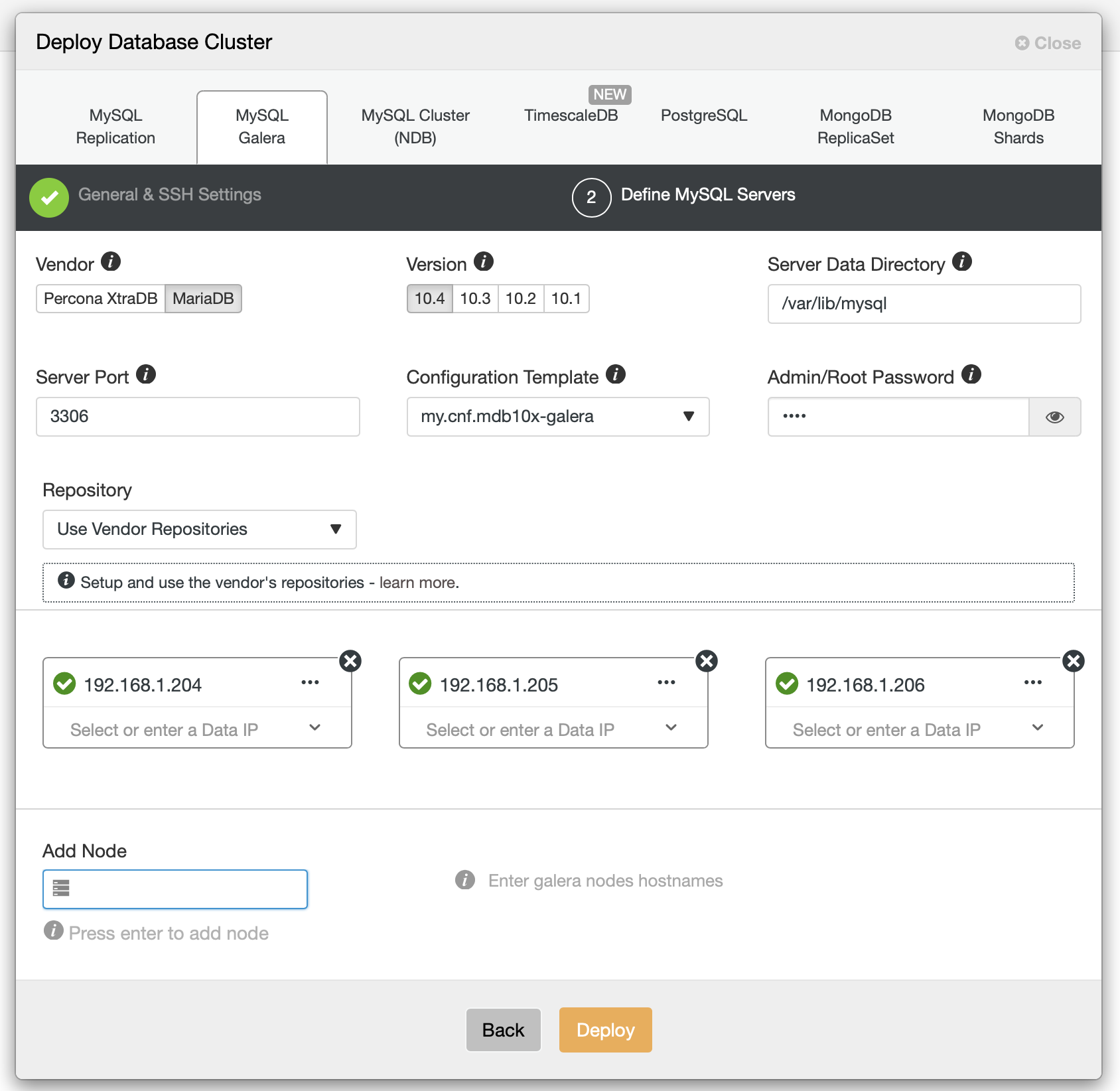

Then we should decide on the vendor, version, password, hosts and some additional settings:

With all those details filled we are good to proceed with deployment.

Deploying ProxySQL

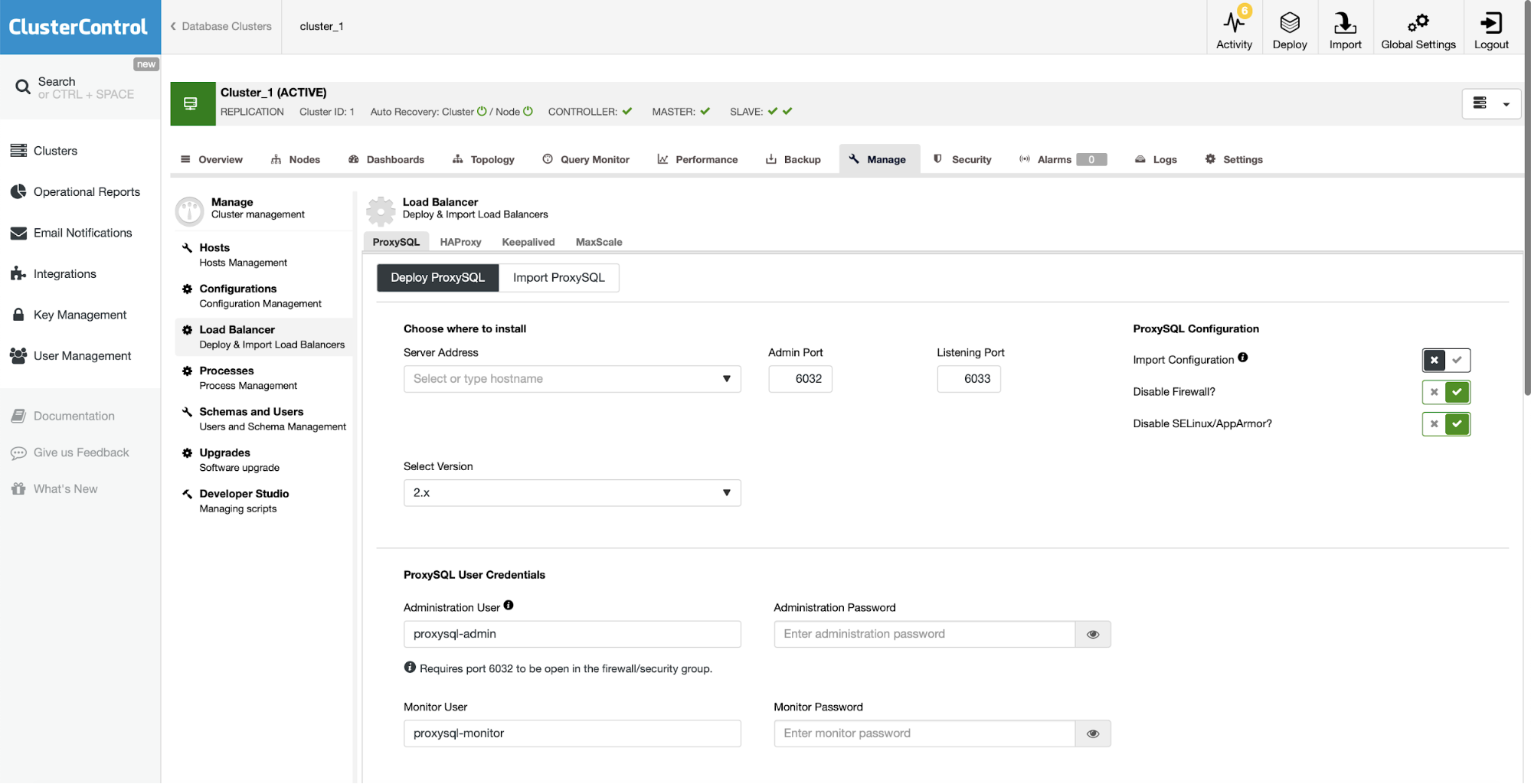

Database itself is not the only element that we want to deploy. We also need a load balancer which we will use to direct the traffic to the nodes that are available in the given moment. We will also use it to provide read/write split, directing all of the writes to a single MariaDB Galera node. This will help us avoid conflicts between writes executed on different Galera nodes.

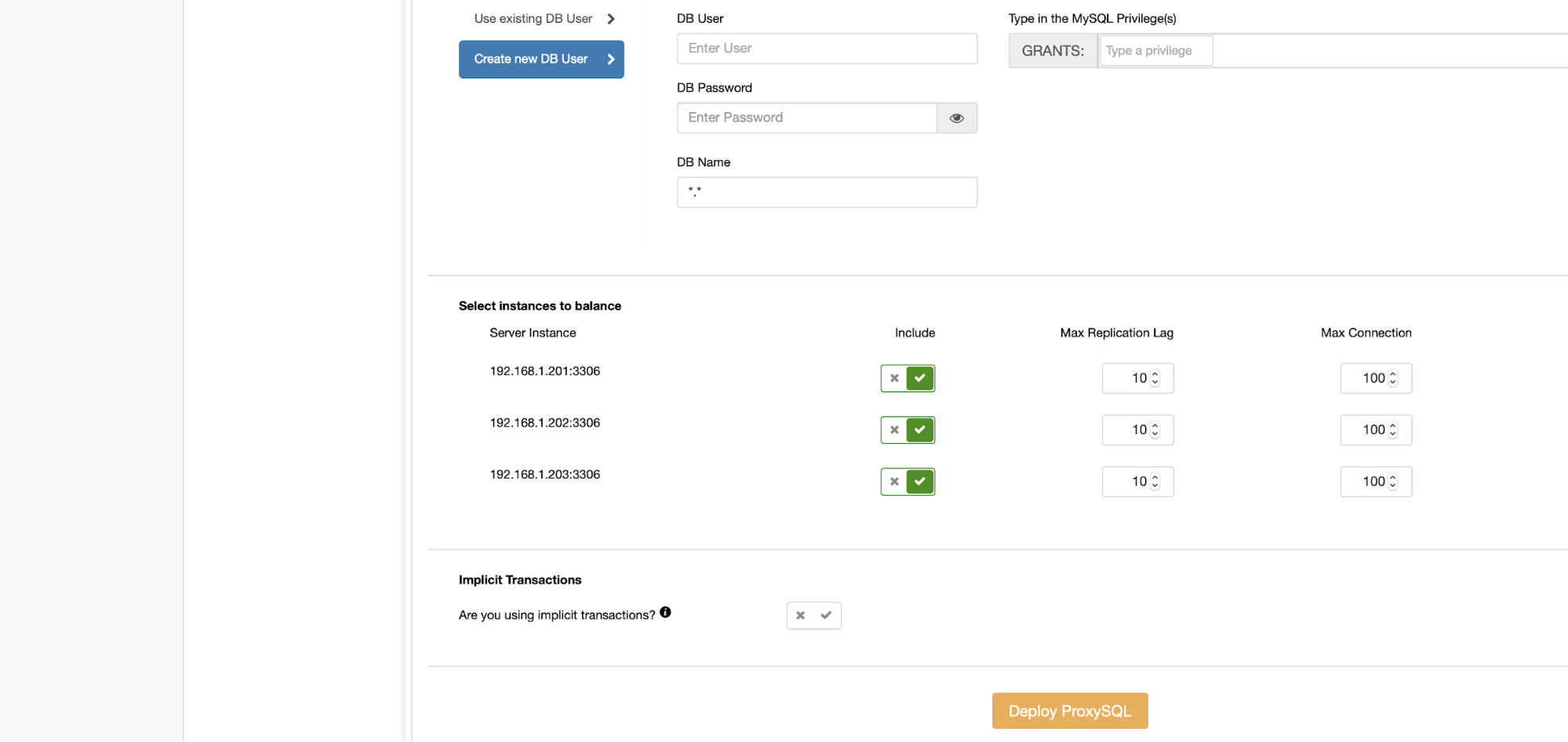

For ProxySQL ClusterControl also requires filling in some information – you have to pick the host to install it on, decide on ProxySQL version, credentials for the administrative and monitoring users. Those users will be used to manage ProxySQL and monitor the state of your Galera cluster. You should also import existing database users or create a new one for your application. Finally, it is up to you to decide which database nodes you want to use with ProxySQL and decide if you use implicit transactions.

Deploying Keepalived

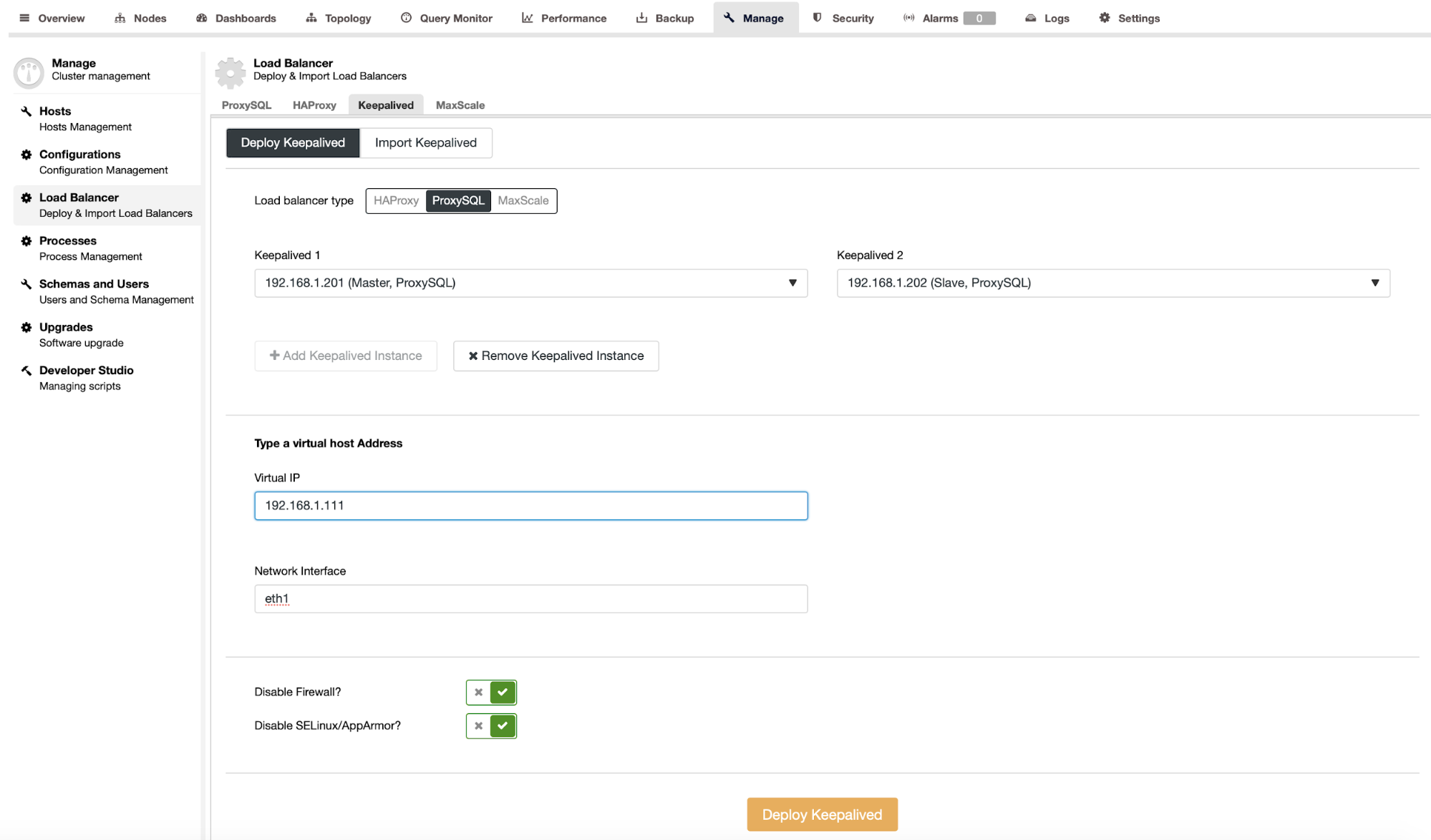

As the next step we will deploy Keepalived. The idea here is to have a virtual IP that will point to the working ProxySQL instance. Such VIP can then be used in the application as the endpoint for the MySQL database connectivity.

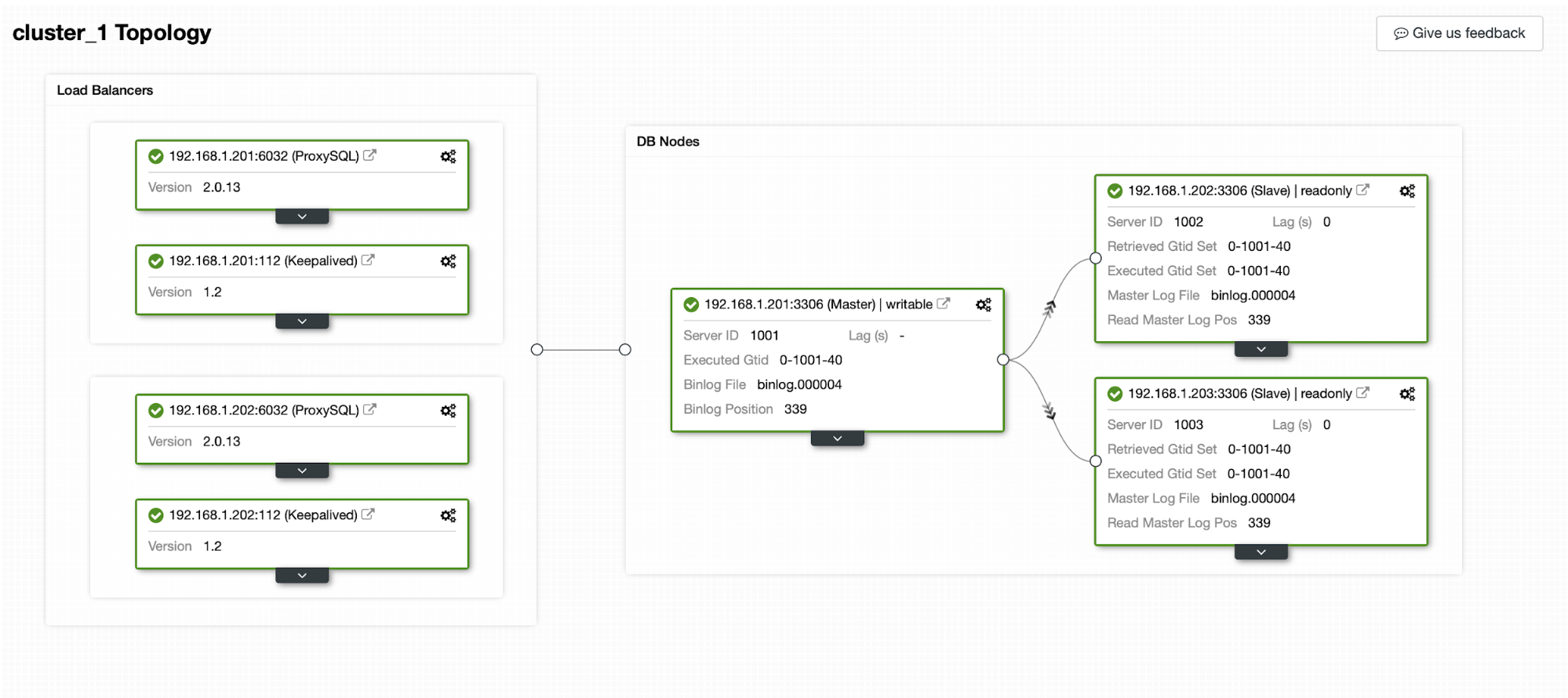

After passing details like ProxySQL instances that should be monitored, Virtual IP and the interface VIP should bind to we are ready to deploy. After couple of minutes everything should be ready and the topology should look like below:

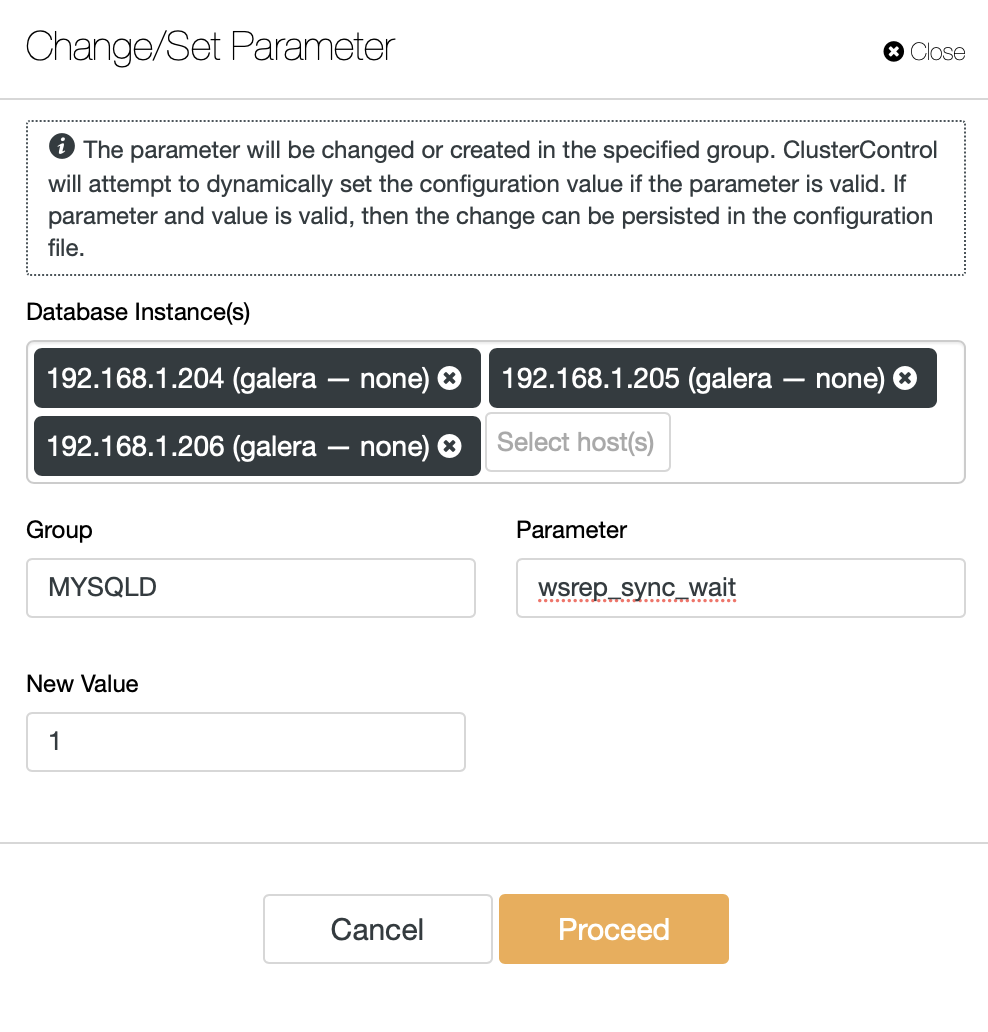

That’s pretty much it when it comes to the deployment. You can point your Open edX platform towards the VIP and port 6033, this should be enough to get the connectivity to your backend database. The last remaining bit, should you find it necessary, would be to configure causality checks. There is a wsrep_sync_wait variable that can do just that. It can enable tests on several access patterns: reads, updates, inserts, deletes, replaces and SHOW commands. If we are interested only in the SELECT queries, we will set this variable to ‘1’ using ClusterControl configuration management.

This will perform this change on all of the MariaDB Cluster nodes.

That’s pretty much it. If you would like to share some of your experience with Open edX, you are welcome to leave us a comment.