blog

Building Your Own Private DBaaS with ClusterControl

As we explained in one of our blogs, DBaaS are powerful tools for the users that require a database for their purposes. This can be a developer that spins up database clusters for their applications, an user that automatically deploys applications in a highly available fashion, including the database cluster for it. The requirement is that the deployment process is fast, automated and unattended.

There are numerous ways you can approach the problem of creating DBaaS service. After all there are many ways in which you can deploy databases, starting from scripts through infrastructure orchestration tools. It also depends on the environment in which you want to accomplish it as some cloud providers make available infrastructure management tools that can be used to automate deployments in their cloud infrastructure.

The main common part across those methods is that you have to write the code that will accomplish the deployment or find someone else’s code that does what you want and modify it to your liking. No matter how you do that, you will have to maintain that code from now on – new versions of the software will deprecate some functionality and add new features, you may be forced to rewrite parts of the code to follow the changes and handle incompatibilities. But what if you could use a tested tool, managed and supported by a well-established company, that can be used to deliver the same functionality?

Let’s take a look at the options available in ClusterControl.

Using ClusterControl as the Heart of Your DBaaS

ClusterControl can be used to deploy clusters of open source databases – MySQL, MariaDB, PostgreSQL, MongoDB.

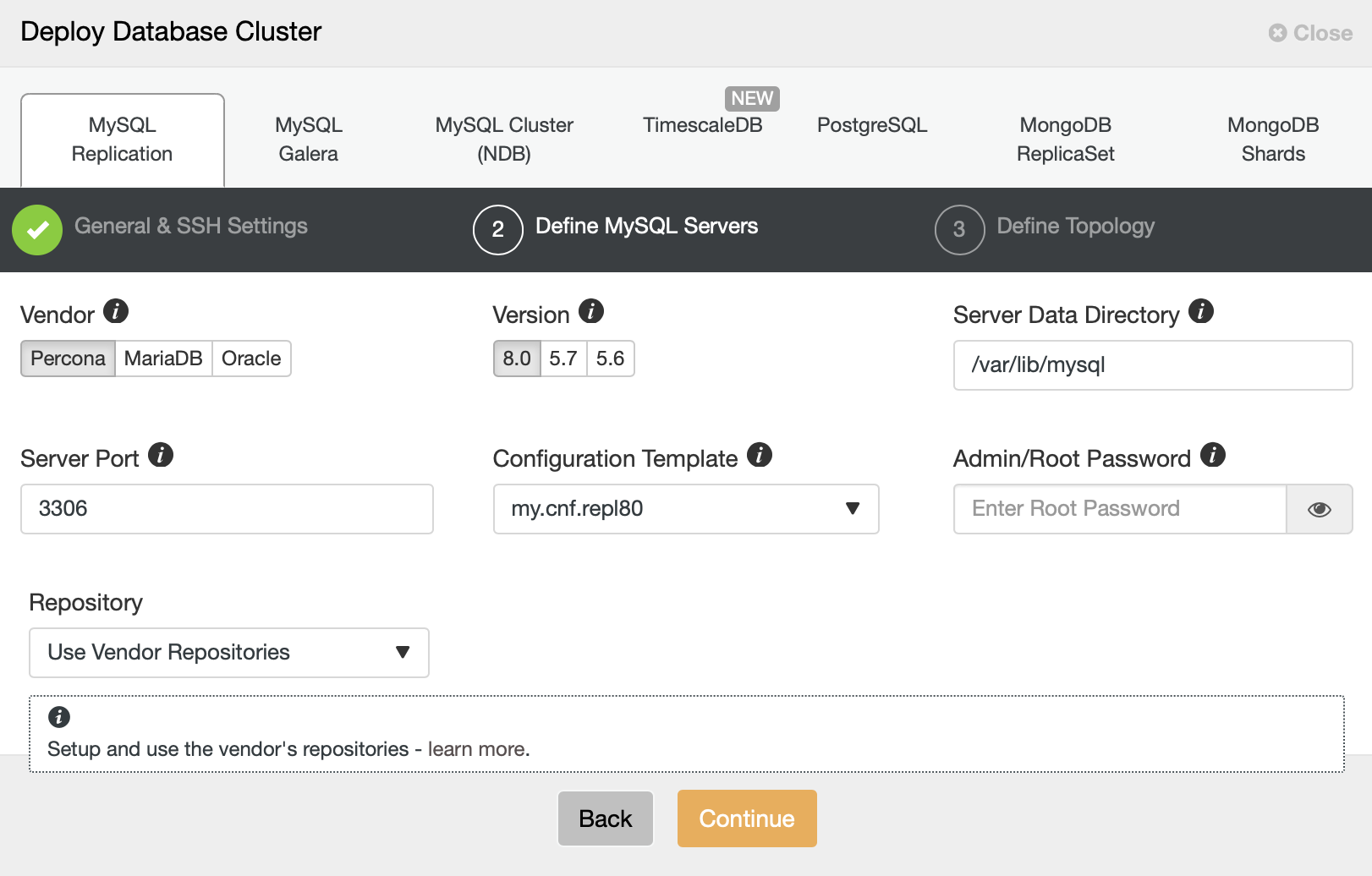

With a couple of clicks in the UI you can deploy one of the supported setups, passing just a handful of information and settings. While a UI may not be the best way to automate actions, ClusterControl still has you covered through the use of CLI and RPC.

Using the Command Line Interface

ClusterControl comes with a CLI that allows you to manage your databases through the use of commands executed from the shell. For example, we can create a replication cluster by running following command:

root@vagrant:~# s9s cluster --create --cluster-type=mysqlreplication --nodes="10.0.0.101?master;10.0.0.102?slave" --vendor=mariadb --cluster-name=MariaDB_replication --provider-version=10.4 --os-user=root --os-key-file=/root/.ssh/id_rsa

Job with ID 1 registered.We can see that the job has been registered:

root@vagrant:~# s9s jobs --list --long

----------------------------------------------------------------------------------------------------------

Create MySQL Replication Cluster

Installing MySQL on 10.0.0.101 [████▎ ]

42.42%

Created : 2020-07-30 08:56:40 ID : 1 Status : RUNNING

Started : 2020-07-30 08:56:40 User : admin Host : 127.0.0.1

Ended : Group: admins Cluster: 0

Tags : -

RPC : 2.0

----------------------------------------------------------------------------------------------------------

Total: 1We can, as well, see the job log and where we are at right now:

root@vagrant:~# s9s jobs --job-id=1 --log

Cluster will be created on 2 data node(s).

Verifying job parameters.

Checking ssh/sudo on 2 hosts with credentials ssh_cred_job_6656.

All 2 hosts are accessible by SSH.

10.0.0.101: Checking if host already exists in another cluster.

10.0.0.102: Checking if host already exists in another cluster.

Found replication link 0: 10.0.0.101 -> 10.0.0.102.

Setting up server 10.0.0.101.

10.0.0.101: Checking OS information.

10.0.0.101: Vendor is 'ubuntu'.

10.0.0.101: Release is 'bionic'.

10.0.0.101: Type is 'debian'.

10.0.0.101: Check and disable AppArmor.

10.0.0.101: Checking SELinux status (enabled = false).

10.0.0.101: Checking firewall.

10.0.0.101: Disabling ufw.

10.0.0.101: Flushing iptables.

10.0.0.101: Tuning OS parameters.

10.0.0.101: Setting vm.swappiness = 1.

10.0.0.101: Installing helper packages.

10.0.0.101: Waiting for dpkg lock.

10.0.0.101: Proceeding.

10.0.0.101: Installing psmisc.

10.0.0.101: Installing rsync.

10.0.0.101: Installing libaio1.

10.0.0.101: Installing netcat.

10.0.0.101: Installing netcat-openbsd.

10.0.0.101: Installing socat.

10.0.0.101: Installing lsb-release.

10.0.0.101: Installing wget.

10.0.0.101: Installing curl.

10.0.0.101: Installing pigz.

10.0.0.101: Installing libnuma1.

10.0.0.101: Installing libmecab2.

10.0.0.101: Installing bzip2.

10.0.0.101: Installing iproute2.

10.0.0.101: Installing tar.

10.0.0.101: Installing libatomic1.

10.0.0.101: Installing openssl.

10.0.0.101: Installing apt-transport-https.

10.0.0.101: Installing libssl1.0.0.

10.0.0.101: Installing augeas-tools.

10.0.0.101: Finished with helper packages.

10.0.0.101: Verifying helper packages (checking 'socat').

Auto-generating MySQL root password.

Using skip_name_resolve as all servers are defined by IP.

Cluster type is 'replication'.

10.0.0.101:3306: Using template 'my.cnf.mdb10x-replication'.

Using config template '/usr/share/cmon/templates/my.cnf.mdb10x-replication'.

Loaded template '/usr/share/cmon/templates/my.cnf.mdb10x-replication'.

10.0.0.101:3306: Detecting total memory.

10.0.0.101: Detected memory: 733MB.

10.0.0.101:3306: Detected memory: 733.16 MiB.

10.0.0.101:3306: The memory size might be insufficient.

10.0.0.101:3306: Checking free-disk space of '/var/lib/mysql'.

10.0.0.101:3306: Free disk space: 57.05 GiB.

10.0.0.101:3306: Total disk space: 61.80 GiB.

10.0.0.101: Using external repositories.

10.0.0.101: Setting up repository 'mariadb'

Using repository template file 'mariadb.list'.

10.0.0.101: Setting up repository 'percona'

Using repository template file 'percona.list'.

10.0.0.101: Uninstalling existing MySQL packages.

10.0.0.101: Checking OS user mysql.

10.0.0.101:3306: Cleaning old MySQL packages:

10.0.0.101:3306: Prepare MySQL environment (user/group).

10.0.0.101: Checking OS user mysql.

10.0.0.101: OS user mysql already exists.

10.0.0.101: mysqld: no process found

mysqld_safe: no process found

mysql: no process found

mysql_chk: no process found

cmon: no process found

10.0.0.101:3306: Installing the MySQL packages.When the job is finished we can perform additional actions like adding a ProxySQL load balancer to the cluster:

root@vagrant:~# s9s jobs --list

ID CID STATE OWNER GROUP CREATED RDY TITLE

1 0 FINISHED admin admins 08:56:40 100% Create MySQL Replication Cluster

Total: 1

root@vagrant:~# s9s cluster --add-node --cluster-id=1 --nodes="proxysql://10.0.0.101"

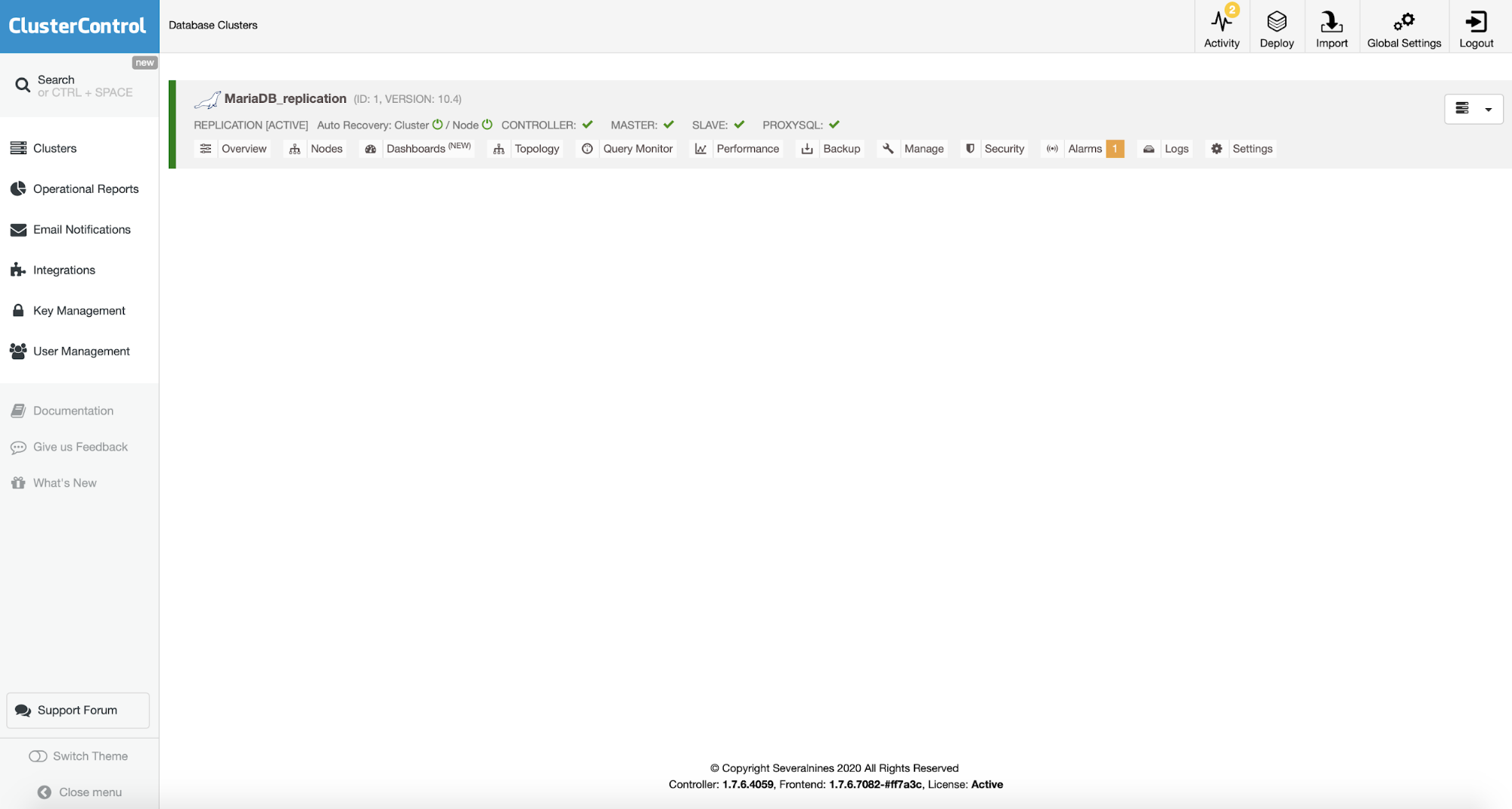

Job with ID 2 registered.All of those actions are, of course, represented in the UI as well:

Given that we have a cluster now, we can take a backup using CLI:

root@vagrant:~# s9s backup --create --nodes=10.0.0.101:3306 --cluster-id=1 --backup-method=mariabackupfullPlease keep in mind that this is, by no means, full functionality of the ClusterControl CLI. It is just a list of examples that are intended to show you what can be accomplished by using CLI. The full documentation is available on our website.

RPC

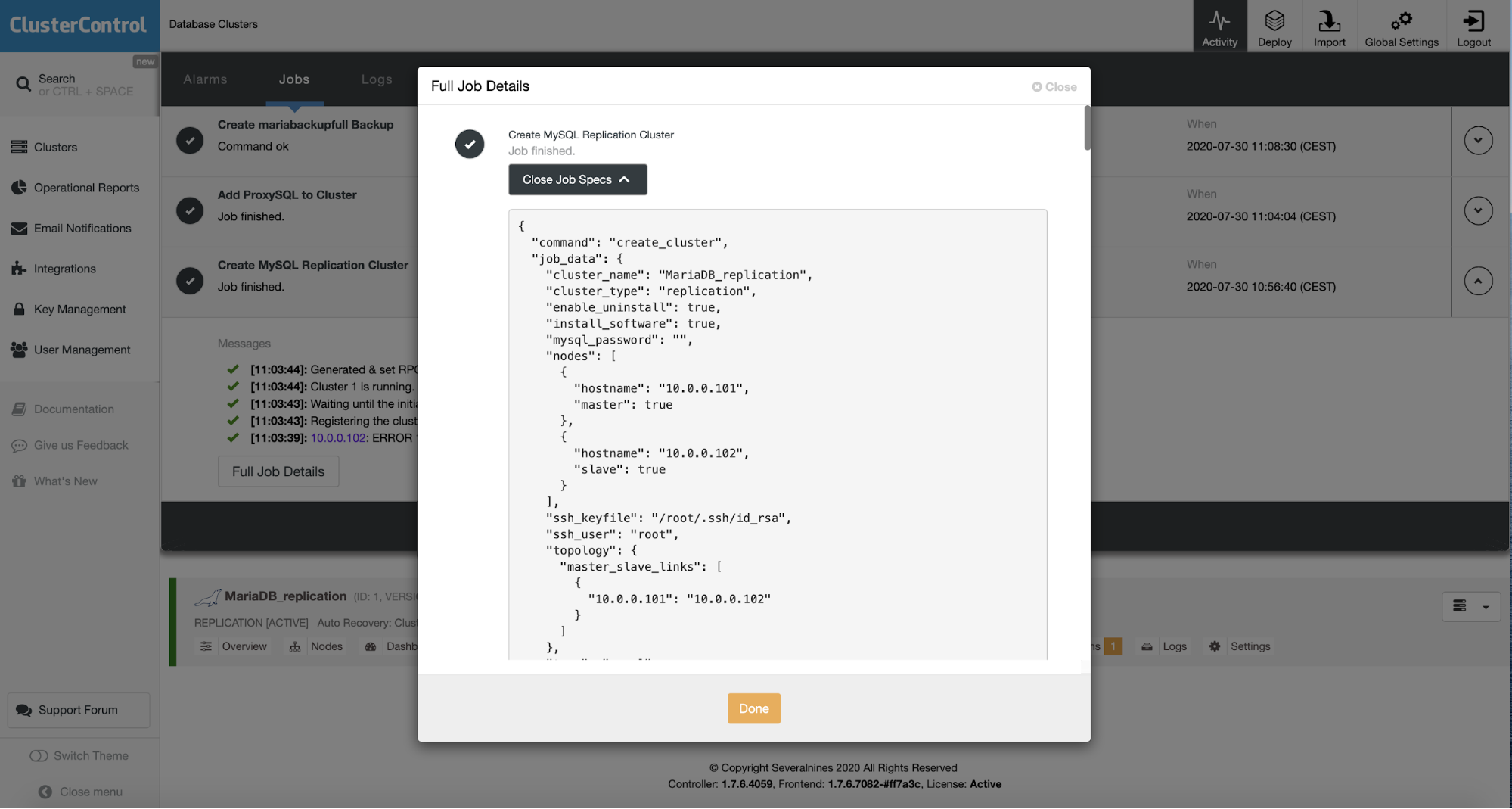

Another option to interact with ClusterControl is to use RPC. ClusterControl itself uses RPC for the communication between UI and the backend (cmon) therefore pretty much every job that you can start in the UI can be executed through direct use of the RPC. Let’s take a look at the jobs that we executed via CLI and see how they can be executed through the RPC. One of the ways to see that is in the UI. You can go to the “Activity” tab, and then “Jobs”, pick the job that you want to see, click on “Full Job Details” and, finally, “Expand Job Specs”:

The JSON that you see is the RPC call used to pass all required information for the given job to be executed by the backend service. More details about how to construct the calls can be found in the documentation.

Designing a DBaaS with ClusterControl

Of course, there are many ways in which you can build the DBaaS solution based on ClusterControl. Here we would like to describe some of the challenges that you will encounter while designing DBaaS solutions.

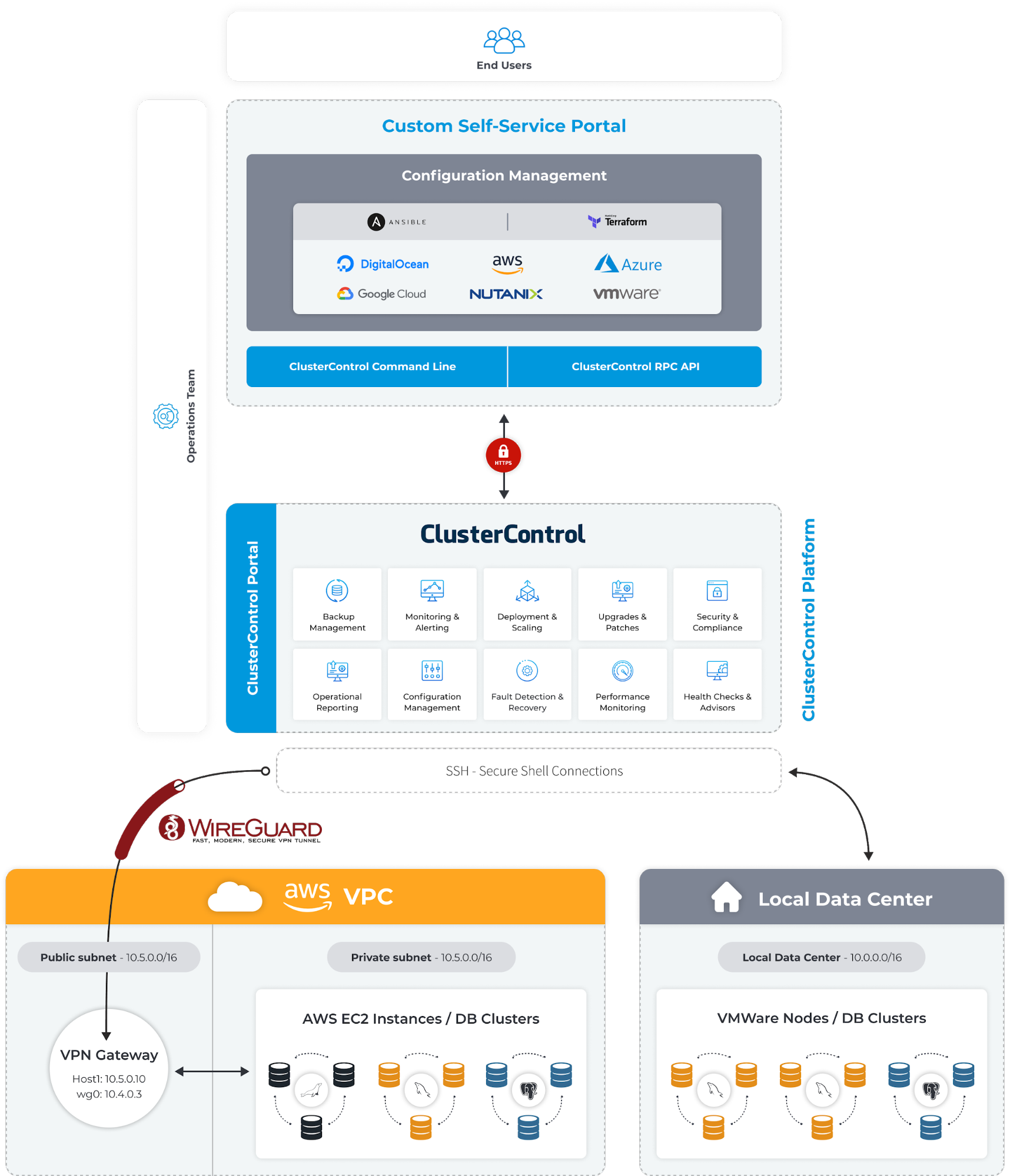

First, provisioning of the instances. ClusterControl can do that to some extent for the most popular cloud providers, but if you would like to have a better control over this process, you may have to figure out a solution on your own. Such a solution can be based on the tools like Terraform that can be used to define the infrastructure and deploy it.

If you want to stick to your on-prem data center, there are solutions like Nutanix that can help you build cloud solutions using the local infrastructure. Whatever you’ll choose, that’s the first building block. Next step is to integrate with ClusterControl – you can use CLI made available by ClusterControl, you can use RPC calls – it is up to you. As we mentioned, integration can be accomplished through tools like Ansible, Terraform or straight up code.

Depending on the purpose, it might be a good idea to cover the cogs and wheels of the integration under the hood of a nice UI. Such UI can be built in a way that allows users to easily deploy their database clusters wherever they need to do so using just a handful of clicks. An example solution may look like on the diagram below.

As you can see, the whole setup is not very complex and it mostly depends on the environment that you work with. What’s great, ClusterControl does not really care what environment you have as long as it can connect to the nodes using SSH connectivity. This allows you to mix and match whatever solutions you use. Mixed cloud and on-prem architecture will work just as fine as single or multi-cloud deployments. The only complexity related to the mix of environments would be in the configuration management stage where you would have to define methods to provision instances and ensure they can be reliably reached by ClusterControl.

We hope this very short introduction to the subject of building your own DBaaS using ClusterControl will give you some ideas how it can be accomplished. In a future blog post, we will discuss such a setup in a bit more detail.