
Multi-Cloud Full Database Cluster Failover Options for MariaDB Cluster

High availability is paramount in today’s business reality. But every service provider comes with an inherited risk of disruption — and cloud service providers are no different. If they go down, even temporarily, then so does your database.

A multi-cloud approach can help to alleviate the risk by providing additional redundancy. But if you’re dealing with a MariaDB cluster, you may still run into one particular issue: latency.

Now, you’re faced with two options that will address the need for both high availability and low latency.

Let’s explore what they are, why that is, and how you can keep your clusters up and available when they’re deployed in a multi-cloud setup.

MariaDB Database Clustering in Multi-Cloud Environments

If the SLA proposed by one cloud service provider is not enough, there’s always an option to create a disaster recovery site outside of that provider.

Thanks to this approach, whenever one of the cloud providers experiences service degradation, you can always switch to another provider and keep your database up and available.

However, this opens the door to another problem if you’re dealing with a MariaDB cluster.

The most common problem for MariaDB clusters in multi-cloud setups is network latency. This is unavoidable if we are talking about larger distances or, in general, multiple geographically separated locations.

The speed of light is high, but it is finite. Every hop and every router also adds some latency to the network path.

This is an issue because a MariaDB cluster works best on low-latency networks. It is a quorum-based cluster where prompt communication between all nodes is required to keep the operations smooth. Increases in network latency will impact cluster operations, especially performance of the writes.
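To make the effect on writes concrete, here is a rough back-of-the-envelope sketch. It assumes that each commit has to wait for about one network round trip to the farthest node and ignores every other cost, so treat the numbers as an optimistic ceiling rather than a benchmark. The function name is made up for illustration.

```python
def max_commits_per_second(rtt_ms: float) -> float:
    """Rough upper bound on sequential commits from a single connection.

    Assumption: every commit waits for roughly one round trip to the
    farthest node, and all other processing time is ignored.
    """
    if rtt_ms <= 0:
        raise ValueError("rtt_ms must be positive")
    return 1000.0 / rtt_ms


# Compare a local network, a metro-area link, and a long-distance link.
for rtt in (1, 10, 100):
    rate = max_commits_per_second(rtt)
    print(f"RTT {rtt:>3} ms: at most about {rate:.0f} commits/s per connection")
```

Many connections committing in parallel can still reach a higher total throughput; the bound applies to each connection's sequential commits.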

How to Address High Latency Issues for MariaDB Clusters in Multi-Cloud Environments

Let’s say you are experiencing latency issues and it’s causing performance degradation. There are two main ways this problem can be addressed.

Both come with their own unique considerations to take into account, but will help you to address latency-related failures in your MariaDB clusters.

1. Asynchronous Replication Between MariaDB Clusters

First we have an option to use separate clusters connected using asynchronous replication links. This allows us to almost forget about latency because asynchronous replication is significantly better suited to work in high latency environments.

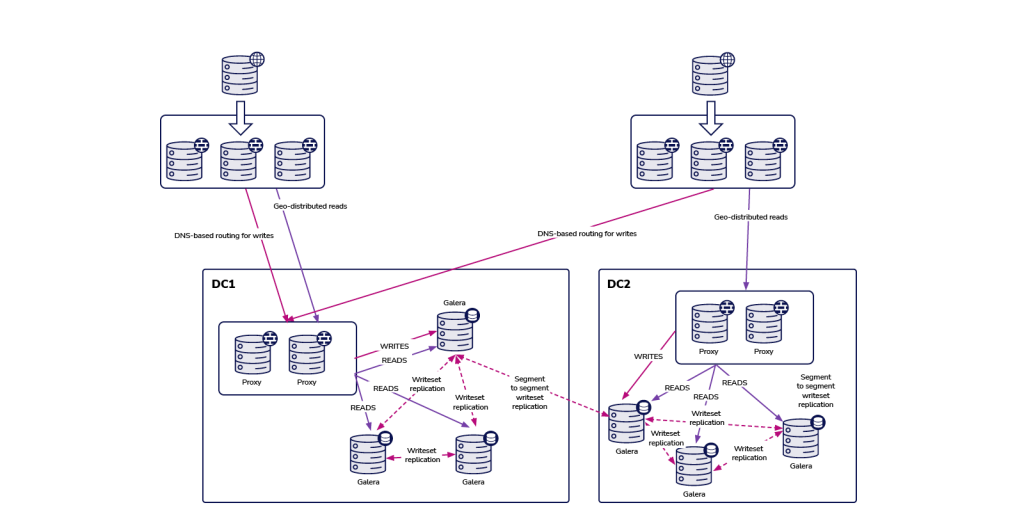

Let’s take a quick look at the asynchronous approach. The idea is simple – two clusters connected with each other using asynchronous replication.

With this approach, you need to keep several things in mind.

For starters, you have to decide whether to use multi-master or send all traffic to one datacenter only. We recommend staying away from writing to both datacenters with master-master replication. This may lead to serious issues if you do not exercise caution.

If you decide to use the active-passive setup, you will probably want to implement some sort of DNS-based routing for writes to make sure that your application servers always connect to a set of proxies located in the active datacenter.

This is achieved by creating a DNS entry that is changed when failover is required. Alternatively, it can be done through some sort of a service discovery solution like Consul or etcd.
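As a minimal sketch of the application side of this idea, the snippet below picks the proxies to write to based on the name of the active datacenter. The datacenter names and proxy addresses are hypothetical, and the lookup of the active datacenter itself (a DNS record, or a key in Consul or etcd) is deliberately left out.

```python
from typing import Dict, List

# Hypothetical proxy addresses per datacenter; replace with your own.
PROXIES: Dict[str, List[str]] = {
    "dc-a": ["10.0.1.10:3306", "10.0.1.11:3306"],
    "dc-b": ["10.1.1.10:3306", "10.1.1.11:3306"],
}


def write_endpoints(active_dc: str,
                    proxies: Dict[str, List[str]] = PROXIES) -> List[str]:
    """Return the proxies the application should use for writes.

    active_dc is the value read from DNS or service discovery; changing
    that single value is what redirects writes during a failover.
    """
    if active_dc not in proxies:
        raise KeyError(f"unknown datacenter: {active_dc}")
    return list(proxies[active_dc])


print(write_endpoints("dc-a"))
```

Keeping the decision in one externally managed value means a failover only has to change that value, not the configuration of every application server.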

The main downside of an environment built using asynchronous replication is the lack of ability to deal with network splits between datacenters.

This limitation is inherited from replication itself: no matter what you link with replication (single nodes or MariaDB Clusters), there is no way around the fact that replication is not quorum-aware. There is no mechanism to track the state of the nodes and understand the high-level picture of the whole topology.

As a result, whenever the link between two datacenters goes down, you end up with two separate MariaDB clusters that are not connected and that are both ready to accept traffic.

It will be up to you to define what to do in such a case.

It is possible to implement additional tools that monitor the state of the databases from outside (for example, from a third datacenter) and then take action, or deliberately take none, based on that information.

It is also possible to colocate tools that share the infrastructure with the databases but are cluster-aware. They can track the state of the datacenter connectivity and serve as the source of truth for the scripts that manage the environment.

For example, ClusterControl can be deployed as a three-node cluster, one node per datacenter, with the Raft protocol in place to ensure quorum. In this situation, if a node loses connectivity with the rest of the cluster, it is assumed that its datacenter has experienced network partitioning.
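The decision rule behind such a setup can be sketched in a few lines. This is not ClusterControl's API or its Raft implementation, only an illustration of the majority check a controller node in each datacenter could apply; the function name and parameters are assumptions.

```python
def local_dc_partitioned(peers_reachable: int, cluster_size: int = 3) -> bool:
    """Return True if this controller node should assume its own
    datacenter is cut off from the rest of the topology.

    The node counts itself plus the peers it can still reach. If that
    group is not a strict majority of the controller cluster, the node
    treats its datacenter as partitioned.
    """
    if not 0 <= peers_reachable < cluster_size:
        raise ValueError("peers_reachable must be between 0 and cluster_size - 1")
    visible = peers_reachable + 1  # include the local node
    return visible * 2 <= cluster_size


# One controller node per datacenter, three datacenters in total.
print(local_dc_partitioned(peers_reachable=0))  # node sees only itself
print(local_dc_partitioned(peers_reachable=1))  # node sees one peer
```

A management script could use the result to decide, for instance, that a database cluster in a partitioned datacenter must not be promoted to accept writes.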

2. Multi-Data Center MariaDB Clusters

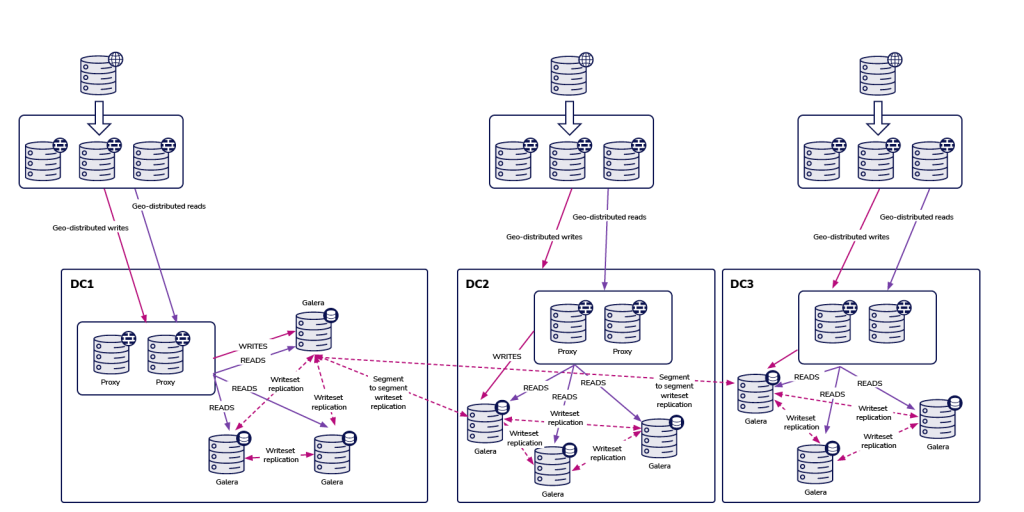

The second option relies upon the presence of low latency networks between the datacenters you use. If these are in place, you should be perfectly able to run a MariaDB Cluster spanning across several data centers.

After all, multiple datacenters are not always spread across vast distances geographically.

You can also use multiple providers located within the same metropolitan area, connected with fast, low-latency networks. Then we’ll be talking about latency increases to tens of milliseconds at most, definitely not hundreds. It all depends on the application but such an increase may be acceptable.

Here’s how this could work.

MariaDB Cluster, just like every Galera-based cluster, will be impacted by the high latency that’s likely to occur in a multi-datacenter setup. Having said that, it is perfectly acceptable to run it in “not-so-high” latency environments and expect it to behave properly, delivering acceptable performance.

It all depends on the network throughput and design, the distance between datacenters, and application requirements. Such an approach works particularly well if we use segments to differentiate separate data centers. It allows the MariaDB Cluster to optimize its intra-cluster connectivity and reduce cross-DC traffic to a minimum.
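In Galera, the segment of a node is set through the `gmcast.segment` provider option. The sketch below only generates the matching configuration value for each datacenter; the datacenter names are hypothetical, and a real `wsrep_provider_options` line usually carries other options that you would need to merge in.

```python
from typing import Dict, List


def segment_options(datacenters: List[str]) -> Dict[str, str]:
    """Assign each datacenter its own Galera segment number.

    The first datacenter keeps segment 0 (the default) and each
    following one gets the next integer.
    """
    return {dc: f"gmcast.segment={idx}" for idx, dc in enumerate(datacenters)}


# Print the setting to place in the configuration of the nodes in each datacenter.
for dc, opts in segment_options(["dc-a", "dc-b", "dc-c"]).items():
    print(f'# nodes in {dc}\nwsrep_provider_options="{opts}"')
```

All nodes in the same datacenter should share one segment number, so the cluster can treat them as a group when it relays traffic between datacenters.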

The main advantage of this setup is that it relies on the cluster itself to handle failures. If you use three data centers, you are pretty much covered against the split-brain situation – as long as there is a majority, it will continue to operate.

It is not required to have a full-blown node in the third datacenter. You could use Galera Arbitrator, a daemon that acts as a part of the cluster but does not have to handle any database operations.

It connects to the nodes, takes part in the quorum calculation and may be used to relay the traffic should the direct connection between the two data centers not work.
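The quorum arithmetic can be checked with a short sketch. It assumes that every cluster member, including the arbitrator, carries an equal vote and that a whole datacenter becomes unreachable at once; the datacenter names and member counts are made up for illustration.

```python
from typing import Dict


def survives_loss_of(lost_dc: str, votes_by_dc: Dict[str, int]) -> bool:
    """Return True if the remaining members still form a strict majority
    after every member in lost_dc becomes unreachable."""
    total = sum(votes_by_dc.values())
    remaining = total - votes_by_dc[lost_dc]
    return remaining * 2 > total


# Three database nodes in each of two datacenters, arbitrator in a third.
with_arbitrator = {"dc-a": 3, "dc-b": 3, "dc-c": 1}
# The same database nodes without a third site.
without_arbitrator = {"dc-a": 3, "dc-b": 3}

print(survives_loss_of("dc-a", with_arbitrator))
print(survives_loss_of("dc-a", without_arbitrator))
```

With the arbitrator, losing one database datacenter leaves four of seven votes, which is a majority. Without it, three of six is exactly half, so neither side can claim quorum.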

In that case, the whole failover process comes down to defining all nodes in the load balancers, and that is pretty much it. If the data centers are not close to each other, you may want to give priority to the nodes located closer to each load balancer.

MariaDB Cluster nodes that form the majority will be reachable through any proxy.

Deploying a Multi-Cloud MariaDB Cluster Using ClusterControl

Let’s take a look at two options you can use to deploy multi-cloud MariaDB Clusters using ClusterControl.

Keep in mind that ClusterControl requires SSH connectivity to all of the nodes it will manage so it would be up to you to ensure network connectivity across multiple datacenters or cloud providers.

As long as the connectivity is there, we can proceed with two methods.

1. Deploying MariaDB Clusters Using Asynchronous Replication

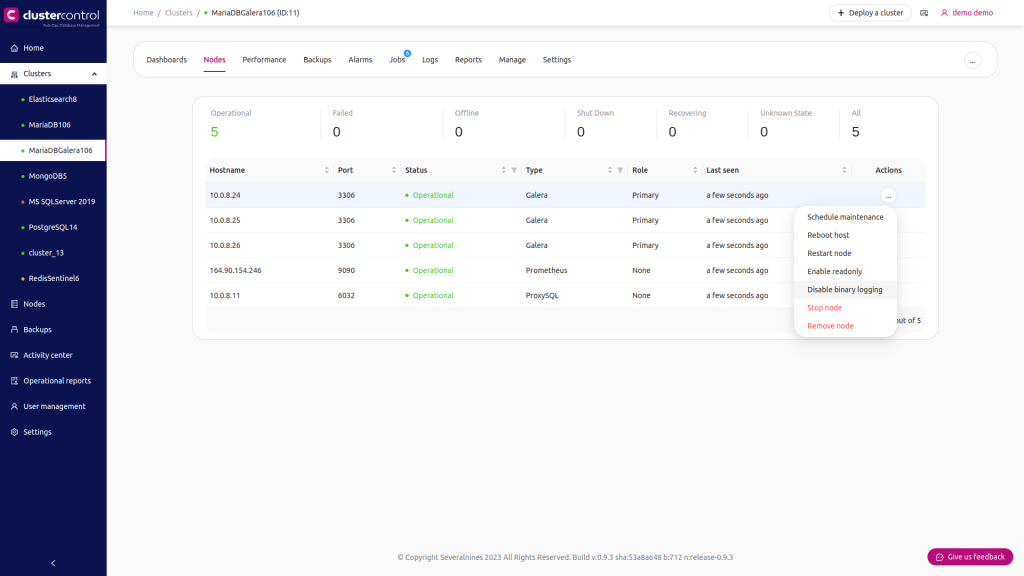

ClusterControl can help you to deploy two clusters connected using asynchronous replication. When you have a single MariaDB Cluster deployed, you want to ensure that one of the nodes has binary logs enabled. This will allow you to use that node as a master for the second cluster.

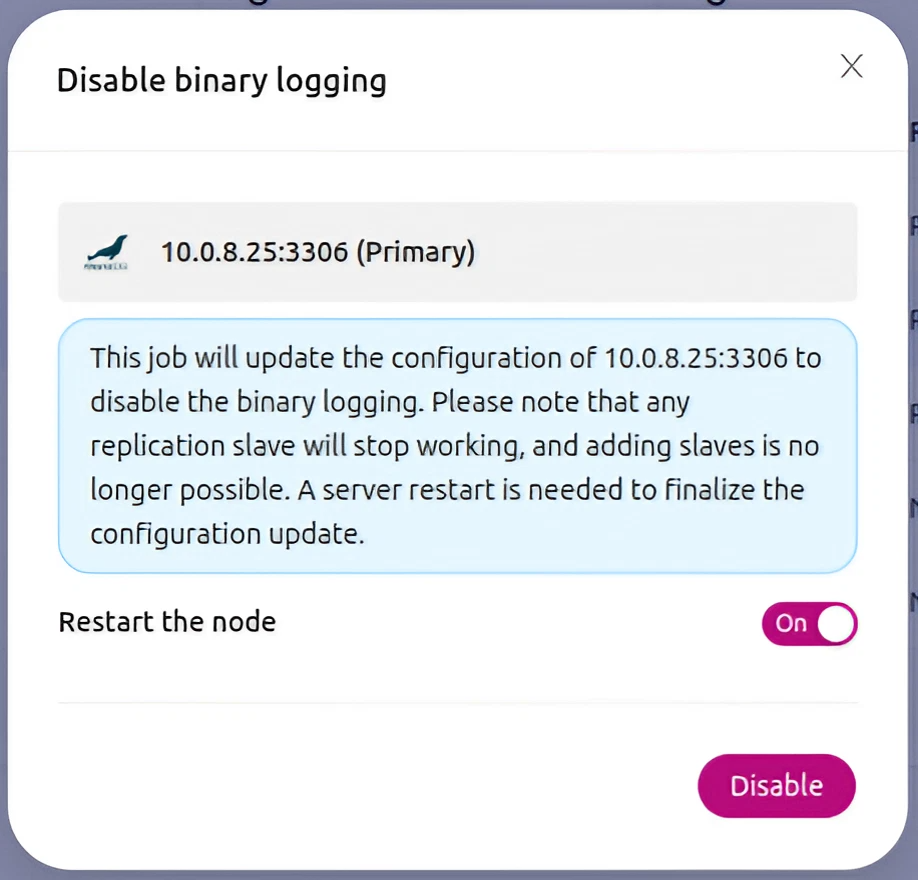

Currently, ClusterControl always deploys MariaDB Galera Cluster with binary logs enabled. If, for whatever reason, some of the nodes do not have binlogs enabled, you can always enable them:

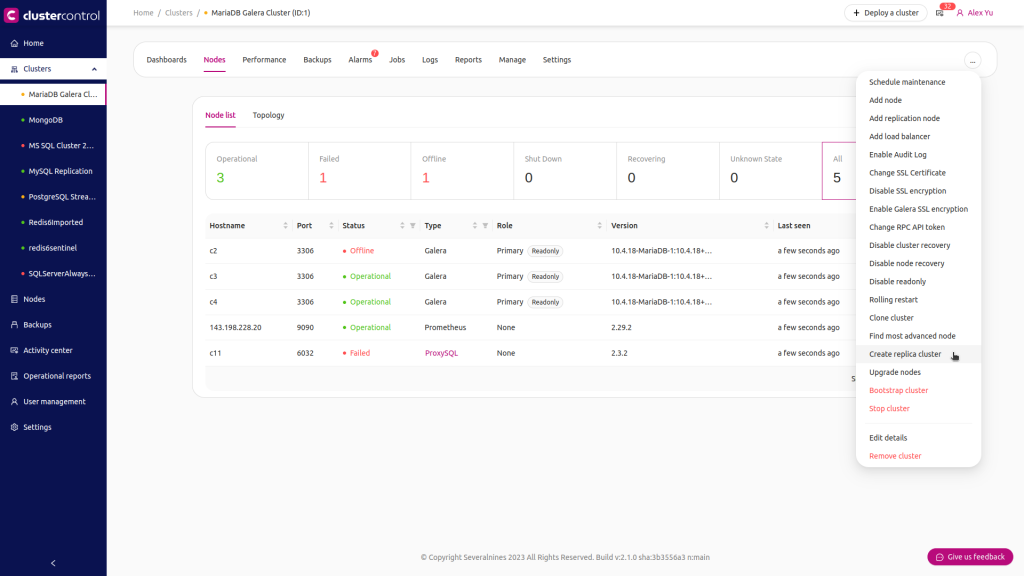

Once you have ensured the binary log is enabled, we can use the ‘Create Replica Cluster’ job to start the deployment wizard.

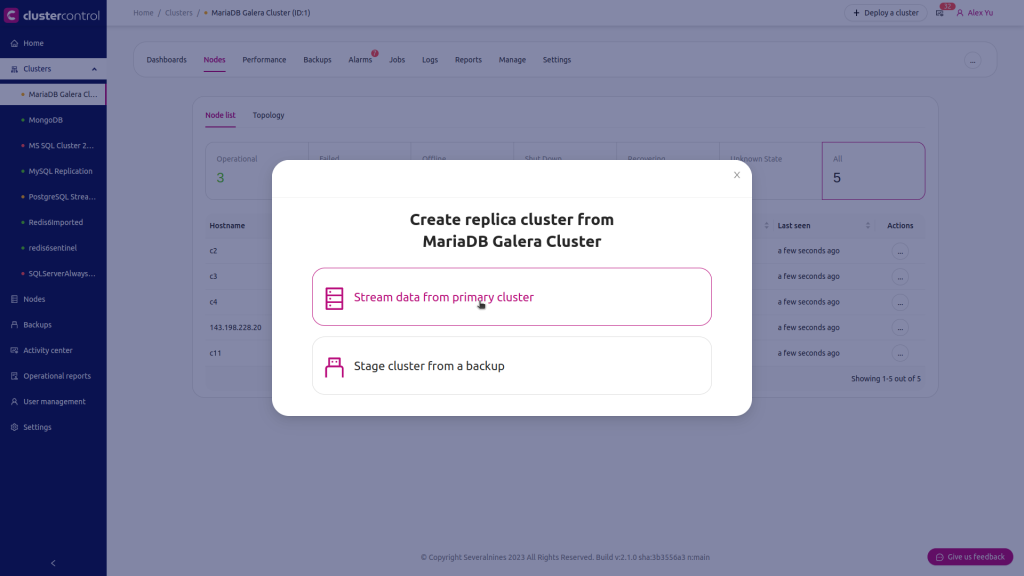

You can either stream the data directly from the primary node or use one of the backups to provision the data.

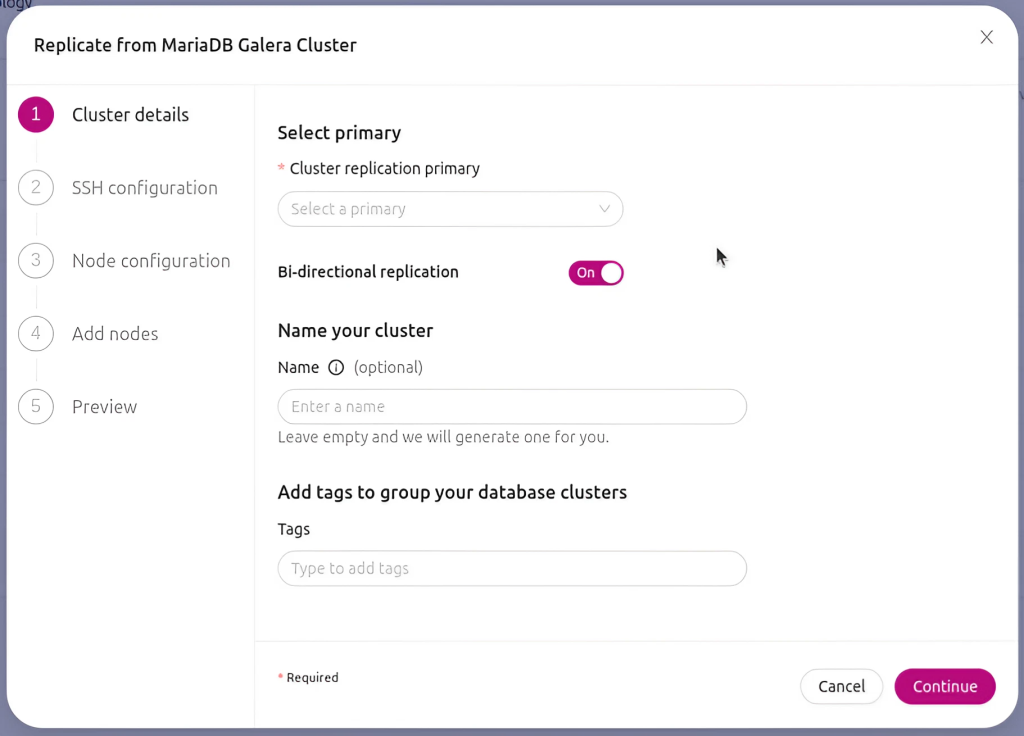

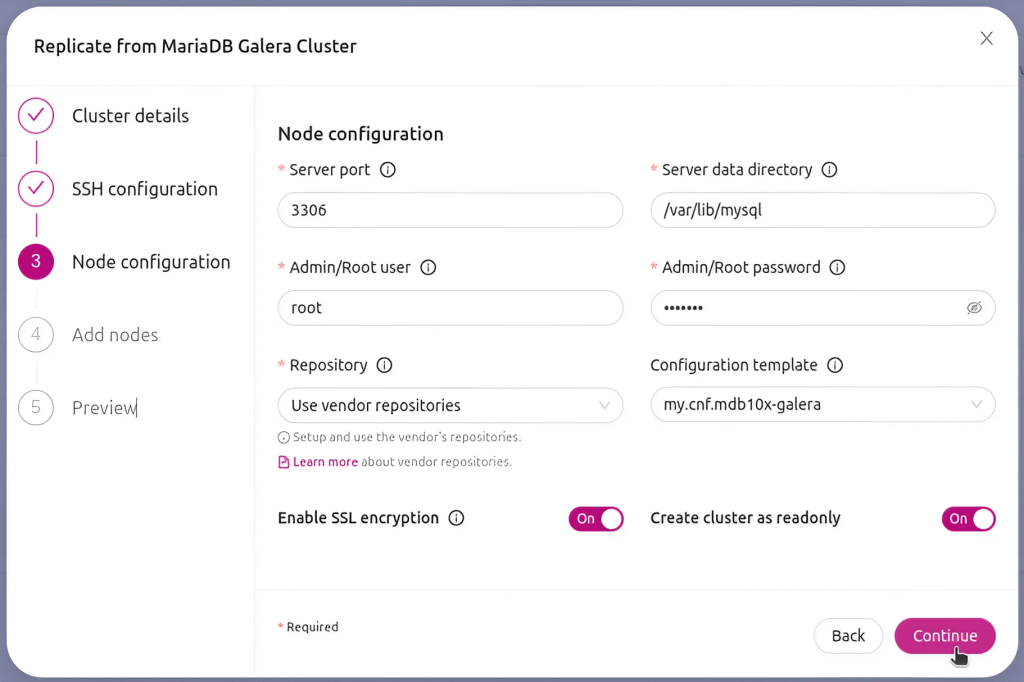

Then you are presented with a standard cluster deployment wizard where you have to provide a few basic details about your cluster, as well as SSH configuration.

You will be asked to pick the vendor and version of the databases and to provide the password for the root user.

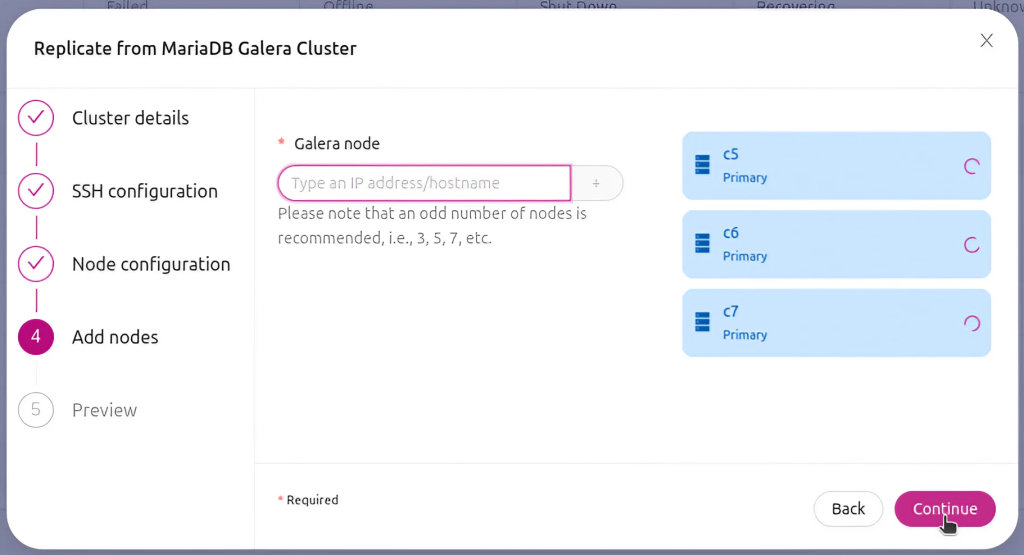

Finally, you are asked to define nodes you would like to add to the cluster and you are all set.

Once the cluster has been successfully deployed, you will see it in the list of the clusters in the ClusterControl UI.

2. Deploying a Multi-Cloud MariaDB Cluster

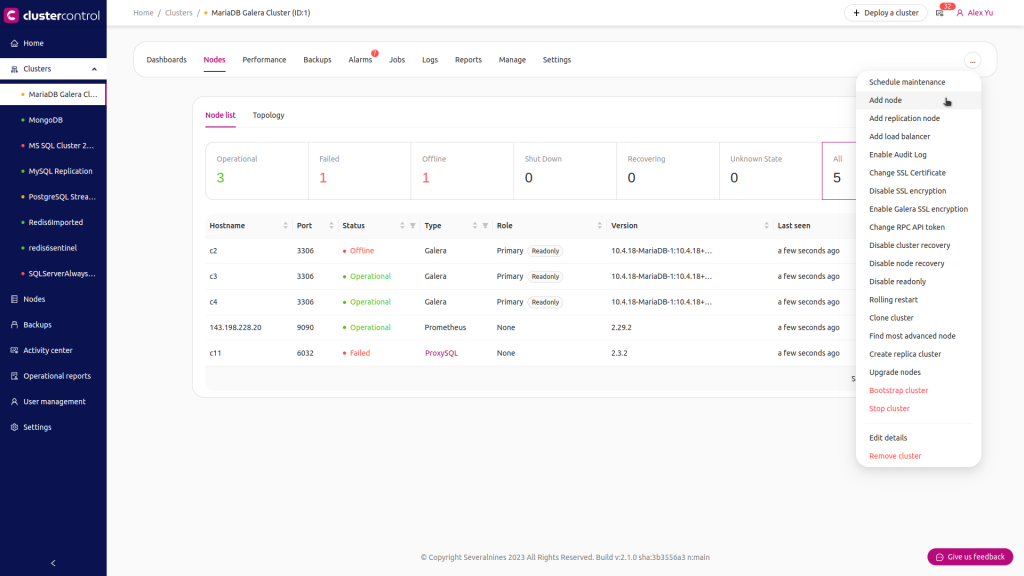

As we mentioned earlier, another option to deploy MariaDB Cluster would be to use separate segments when adding nodes to the cluster. In the ClusterControl UI you will find an option to “Add Node”:

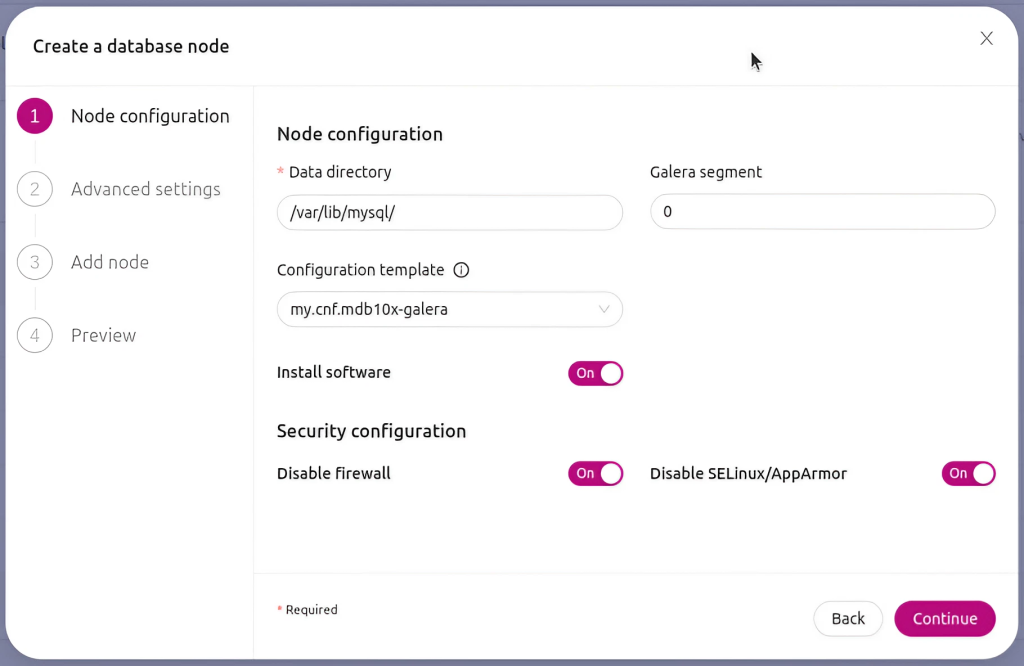

When you use it, you will be presented with a screen where you can set the segment for the new node.

The default segment is 0, so change it to a different value for nodes located in another datacenter.

After nodes have been added you can check in which segment they are located by looking at the Overview tab.

Conclusion

Multi-cloud environments are a great way to alleviate the risk of service disruption, which will always pose a bigger threat to your application if your database availability is tied to a single provider.

When taking this approach with MariaDB clusters, you need to pay particular attention to network latency. You can use asynchronous replication or a multi-data center approach to address the latency issue, but each of these solutions comes with its own risks and considerations.

Using a tool like ClusterControl allows you to automate the deployment of your MariaDB clusters, whether you choose to go with asynchronous replication or a multi-datacenter setup. You can also configure those clusters to ensure that a robust and fully-tested failover process takes place automatically, whenever it is required.

Want a more in-depth look at multi-cloud setups? Read our complete multi-cloud guide, which covers everything from what it means and why you might use the approach, to practical steps on deploying and maintaining a multi-cloud environment.
