
How to Avoid PostgreSQL Cloud Vendor Lock-in

Vendor lock-in is a well-known concept for database technologies. With cloud usage increasing, this lock-in has also expanded to include cloud providers. We can define vendor lock-in as a proprietary lock-in that makes a customer dependent on a vendor for their products or services. Lock-in doesn’t always mean that you can’t change vendor or provider, but it can make the change an expensive or time-consuming task.

PostgreSQL, an open source database technology, doesn’t have the vendor lock-in problem in itself, but if you’re running your systems in the cloud, you’ll likely need to cope with that issue at some point.

In this blog, we’ll share some tips about how to avoid PostgreSQL cloud lock-in and also look at how ClusterControl can help in avoiding it.

Tip #1: Check for Cloud Provider Limitations or Restrictions

Cloud providers generally offer a simple and friendly way (sometimes even a dedicated tool) to migrate your data to the cloud. The problem comes when you want to leave: it can be hard to find an easy way to migrate the data to another provider or to an on-prem setup, and the move usually has a high cost (often based on the amount of outbound traffic).

To avoid surprises, always check the cloud provider’s documentation and limitations first, so you know which restrictions you may be unable to avoid when leaving.

Tip #2: Pre-Plan for a Cloud Provider Exit

The best recommendation we can give you is not to wait until the last minute to figure out how to leave your cloud provider. Plan it well in advance so you know the best, fastest, and least expensive way to make your exit.

This plan will most likely depend on your specific business requirements: it will look different depending on whether you can schedule maintenance windows and whether the company will accept any downtime. By planning it beforehand, you’ll avoid a headache later on.
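To make the downtime question concrete, it helps to estimate how long a one-off copy of your data would take. The Python sketch below is a back-of-the-envelope calculation only: the data size, link speed, and the `efficiency` factor (the share of nominal bandwidth you actually get) are hypothetical inputs you’d replace with your own measurements.

```python
# Back-of-the-envelope estimate of how long a one-off data copy would take,
# to help size a maintenance window. All inputs are assumptions you supply;
# real throughput is usually lower than the nominal link speed.


def estimate_transfer_hours(data_gb: float, bandwidth_mbps: float,
                            efficiency: float = 0.7) -> float:
    """Return the estimated hours needed to move `data_gb` gigabytes.

    data_gb        -- amount of data to copy, in GB (1 GB = 10**9 bytes)
    bandwidth_mbps -- nominal link speed in megabits per second
    efficiency     -- hypothetical fraction of the link you actually get (0, 1]
    """
    if data_gb < 0 or bandwidth_mbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("invalid input")
    bits = data_gb * 8e9                             # gigabytes -> bits
    effective_bps = bandwidth_mbps * 1e6 * efficiency
    return bits / effective_bps / 3600               # seconds -> hours


if __name__ == "__main__":
    # Hypothetical example: 500 GB over a 1 Gbps link at 70% efficiency.
    hours = estimate_transfer_hours(500, 1000)
    print(f"~{hours:.1f} hours")
```

With those hypothetical numbers the copy takes roughly an hour and a half, which you can compare against the maintenance window the business is willing to accept.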

Tip #3: Avoid Using Any Exclusive Cloud Provider Products

A cloud provider’s own product will often run better on that provider than an open source alternative, because it was designed and tested to run on the provider’s infrastructure. The performance difference can be considerable.

The catch is that if you need to migrate your databases to another provider, you’ll face a technology lock-in problem, as the provider’s product is only available in that provider’s environment. This means you won’t be able to migrate easily. You can probably find a way to do it by generating a dump file (or using another backup method), but you’ll likely face a long downtime period, depending on the amount of data and the technologies you want to use.

If you are using Amazon RDS or Aurora, Azure Database for PostgreSQL, or Google Cloud SQL (to focus on the most widely used cloud providers), you should look into the alternatives for migrating to a self-managed, open source PostgreSQL deployment. We’re not saying that you should migrate, but you should definitely have an option to do it if needed.

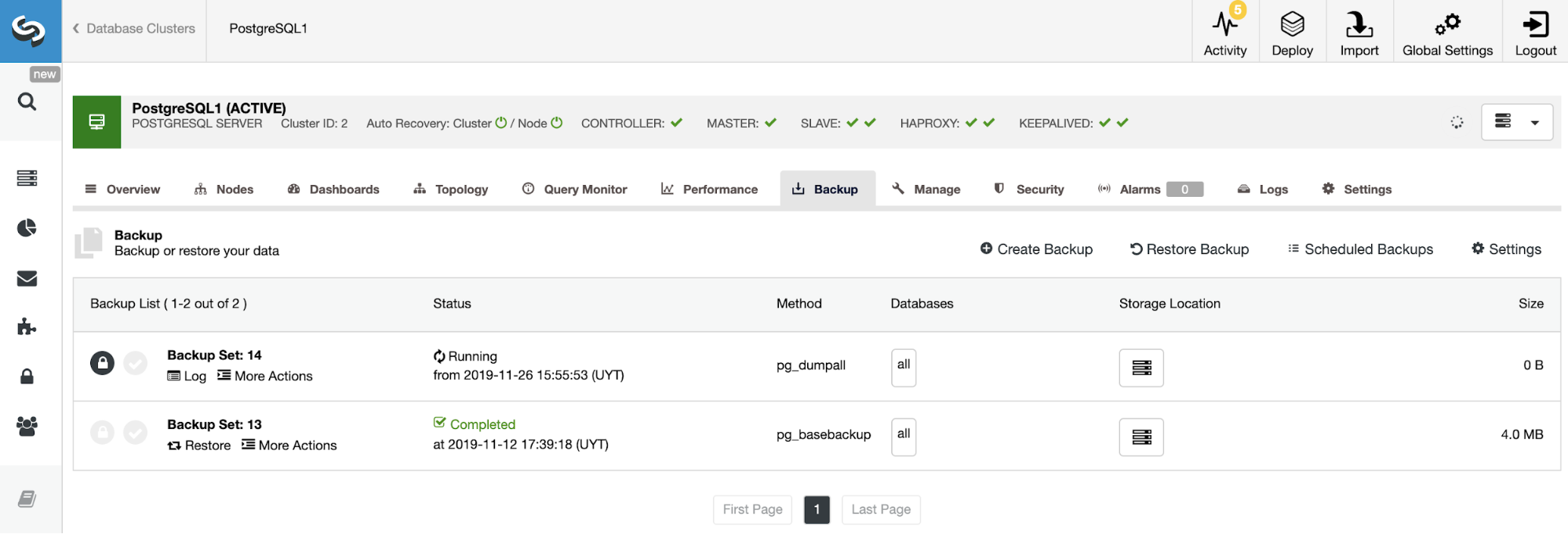

Tip #4: Store Your Backups in Another Cloud Provider

A good practice to decrease downtime, whether for a migration or for disaster recovery, is to store backups not only in the same place (for faster recovery) but also with a different cloud provider or even on-prem.

If you follow this practice, when you need to restore or migrate your data you only need to copy the data that changed after the latest backup was taken. The amount of traffic and time required will be considerably lower than copying all the data, uncompressed, during the migration or failure event.
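As an illustration, here’s a minimal Python sketch that builds a `pg_dump` command producing a compressed, custom-format archive you could then copy to a second provider or on-prem. The database name, host, user, and paths are hypothetical, and the upload step is left to whatever tool your second provider offers. Keep in mind that a logical dump is a full snapshot; copying only the changes made since the last backup requires a physical base backup (for example, taken with `pg_basebackup`) plus continuous WAL archiving.

```python
import shlex
import subprocess


def build_pg_dump_cmd(dbname: str, outfile: str, host: str = "localhost",
                      port: int = 5432, user: str = "postgres") -> list[str]:
    """Return a pg_dump argument list for a compressed custom-format archive."""
    return [
        "pg_dump",
        "--host", host,
        "--port", str(port),
        "--username", user,
        "--format=custom",  # archive that pg_restore can restore selectively
        "--compress=9",     # maximum compression to reduce transfer traffic
        "--file", outfile,
        dbname,
    ]


def run_backup(cmd: list[str]) -> None:
    """Run the dump; raises CalledProcessError if pg_dump exits non-zero."""
    subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical database, host, and path; adjust to your environment.
    cmd = build_pg_dump_cmd("appdb", "/backups/appdb.dump", host="db.internal")
    print(shlex.join(cmd))
    # run_backup(cmd)  # then upload /backups/appdb.dump to the second provider
```

The custom format is a reasonable choice here because `pg_restore` can restore it selectively and in parallel, which helps keep the restore window short.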

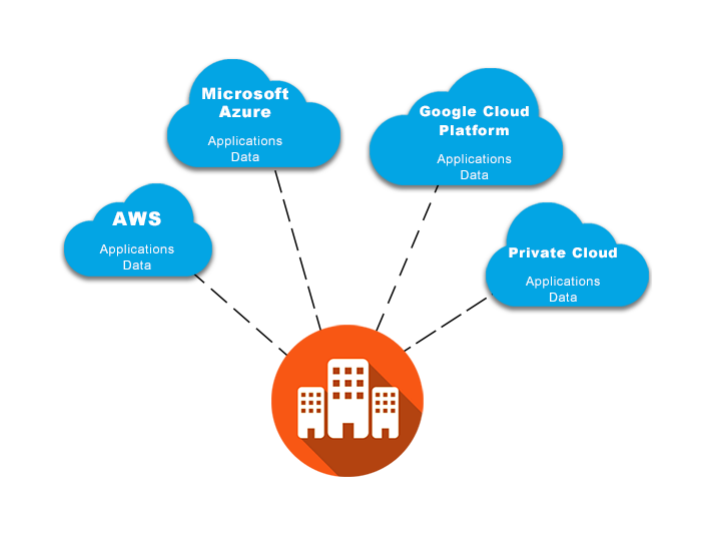

Tip #5: Use a Multi-Cloud or Hybrid Model

This is probably the best option if you want to avoid cloud lock-in. Storing the data in two or more places in real time (or as close to real time as you can get) lets you migrate quickly and with the least downtime possible. If you have a PostgreSQL cluster in one cloud provider and a PostgreSQL standby node in another, and you need to change providers, you can simply promote the standby node and send the traffic to this new primary node.
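The two steps involved can be sketched as follows, assuming PostgreSQL 12 or later: seeding the standby in the second cloud with `pg_basebackup`, and promoting it at cutover with `pg_promote()`. The host names, replication role, and data directory below are hypothetical, and the primary’s `pg_hba.conf` must allow replication connections from the standby’s address.

```python
import shlex

# Run on the standby (PostgreSQL 12+) only when you decide to cut over.
PROMOTE_SQL = "SELECT pg_promote();"


def build_standby_clone_cmd(primary_host: str, repl_user: str, data_dir: str,
                            port: int = 5432) -> list[str]:
    """Return a pg_basebackup argument list that clones the primary into
    `data_dir` on the standby host and configures it as a streaming standby."""
    return [
        "pg_basebackup",
        "--host", primary_host,
        "--port", str(port),
        "--username", repl_user,   # role with the REPLICATION attribute
        "--pgdata", data_dir,      # must be empty or not yet exist
        "--wal-method=stream",     # stream WAL during the copy
        "--write-recovery-conf",   # creates standby.signal and sets primary_conninfo
        "--progress",
    ]


if __name__ == "__main__":
    # Hypothetical host, role, and data directory.
    cmd = build_standby_clone_cmd("primary.cloud-a.example", "replicator",
                                  "/var/lib/postgresql/data")
    print(shlex.join(cmd))  # run this on the standby host in the other cloud
    print(PROMOTE_SQL)      # run this on the standby at cutover
```

After promotion, the remaining work is redirecting application traffic (DNS, connection strings, or a proxy) to the new primary.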

The same concept applies to the hybrid model. You can keep your production cluster in the cloud and create a standby cluster or database node on-prem, which gives you a hybrid (cloud/on-prem) topology. In case of a failure or a migration, you can promote the on-prem standby node without any cloud lock-in, as you’re using your own environment.

Keep in mind that the cloud provider will probably charge you for outbound traffic, so under heavy traffic, keeping this setup running could generate a significant cost for the company.
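A quick way to size that cost is to multiply the data leaving the provider by its egress price. Replication traffic roughly tracks the WAL volume your primary generates, which you can measure by sampling `pg_current_wal_lsn()` at two points in time and taking the difference with `pg_wal_lsn_diff()`. In the sketch below, the price and free allowance are hypothetical placeholders; check your provider’s current pricing.

```python
def monthly_egress_cost(gb_per_day: float, price_per_gb: float,
                        free_gb_per_month: float = 0.0, days: int = 30) -> float:
    """Estimate the monthly outbound-transfer cost of cross-cloud replication.

    gb_per_day        -- GB leaving the provider per day (roughly the WAL volume)
    price_per_gb      -- hypothetical egress price per GB; use your provider's rate
    free_gb_per_month -- hypothetical free allowance, if your provider has one
    days              -- billing period length in days
    """
    billable_gb = max(gb_per_day * days - free_gb_per_month, 0.0)
    return billable_gb * price_per_gb


if __name__ == "__main__":
    # Hypothetical inputs: 20 GB of WAL per day at 0.09 per GB.
    print(f"{monthly_egress_cost(20, 0.09):.2f}")
```

Because the cost scales linearly with write volume, a workload that doubles its daily WAL will roughly double this bill.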

How ClusterControl Can Help Avoid PostgreSQL Lock-in

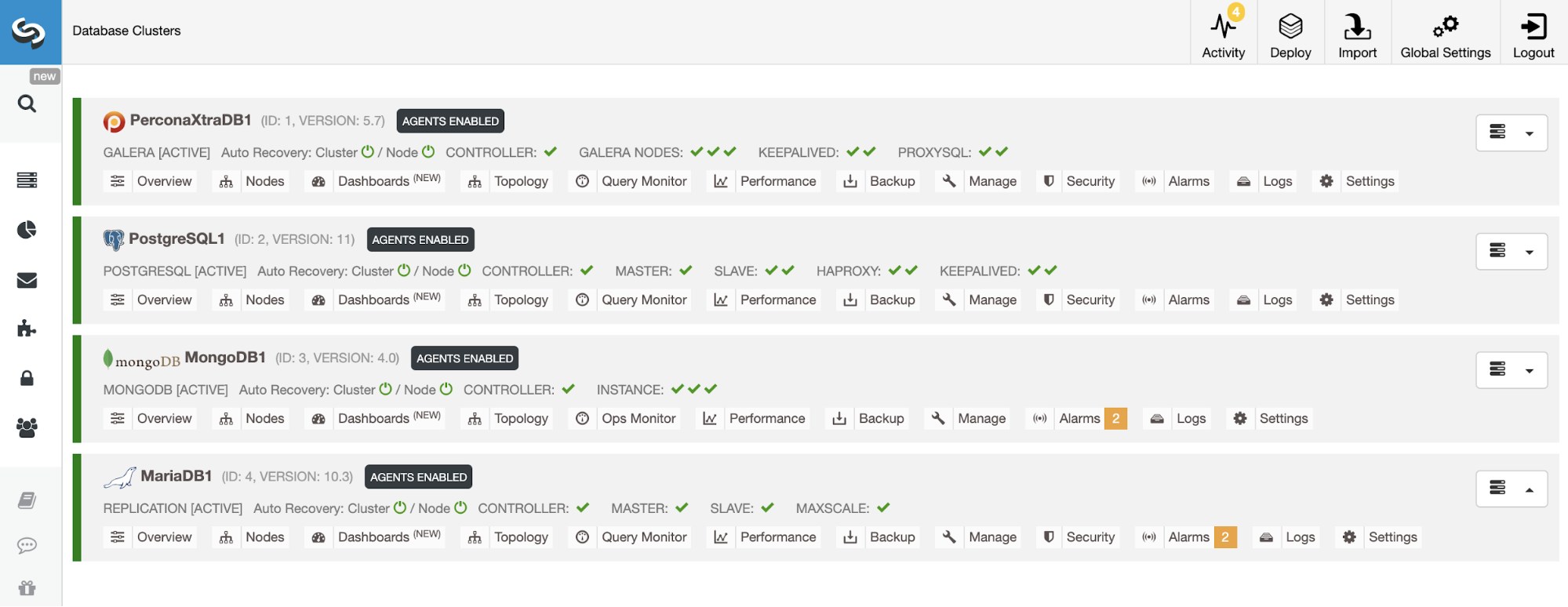

In order to avoid PostgreSQL lock-in, you can also use ClusterControl to deploy (or import), manage, and monitor your database clusters. This way you won’t depend on a specific technology or provider to keep your systems up and running.

ClusterControl has a friendly, easy-to-use UI, so you don’t need a cloud provider’s management console to manage your databases. You just need to log in, and you’ll have an overview of all your database clusters in the same system.

It comes in three different versions, including a free community version. If your license expires, you can still use ClusterControl (without some paid features), and it won’t affect your database performance.

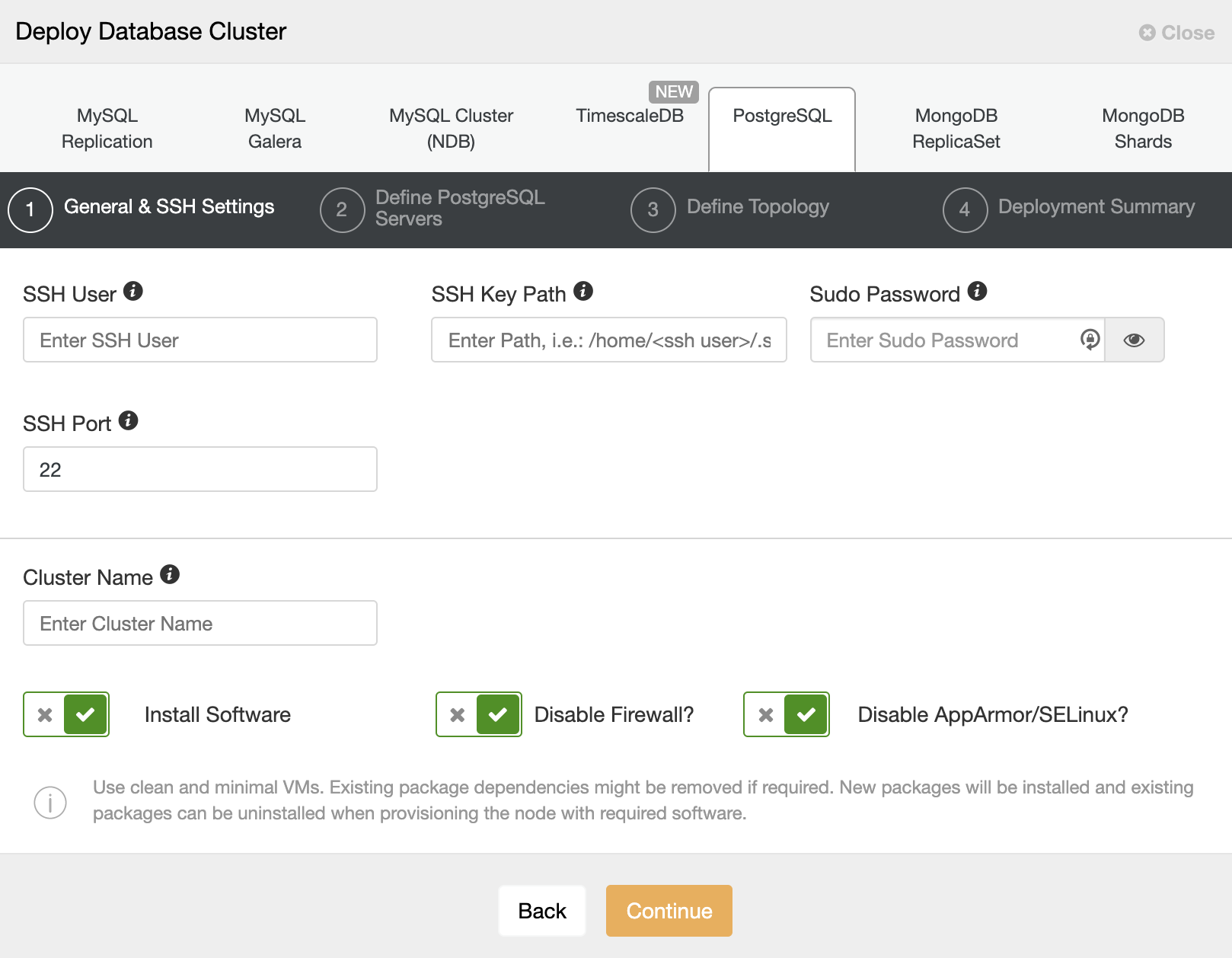

You can deploy different open source database engines from the same system; only SSH access and a privileged user are required.

ClusterControl can also help you manage your backup system. From here, you can schedule new backups using different backup methods (depending on the database engine), compress and encrypt them, and verify them by restoring them on a different node. You can also store them in multiple locations at the same time (including the cloud).

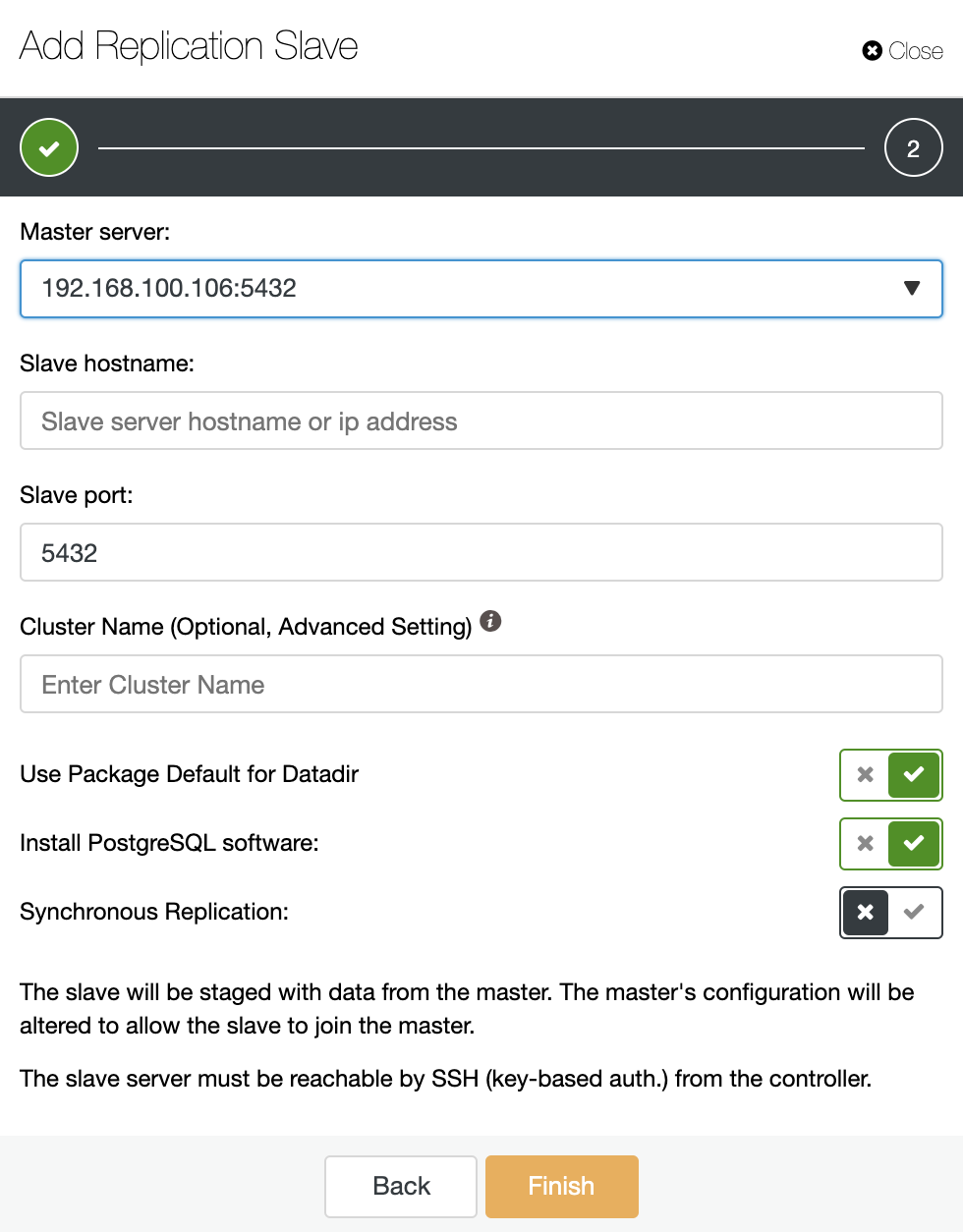

The multi-cloud or hybrid implementation is easily doable with ClusterControl by using the Cluster-to-Cluster Replication or the Add Replication Slave feature. You only need to follow a simple wizard to deploy a new database node or cluster in a different place.

Conclusion

As data is probably the company’s most important asset, you’ll most likely want to keep it as controlled as possible, and cloud lock-in doesn’t help with this. If you’re in a cloud lock-in scenario, you can’t manage your data as you wish, and that could be a problem.

However, cloud lock-in is not always a problem. You might be running your whole system (databases, applications, etc.) in the same cloud provider using its own products (Amazon RDS or Aurora, Azure Database for PostgreSQL, or Google Cloud SQL), with no plans to migrate anything and taking advantage of all the benefits the provider offers. Whether avoiding cloud lock-in is a must depends on each case.

We hope you enjoyed this overview of the most common ways to avoid PostgreSQL cloud lock-in and how ClusterControl can help.