blog

Cloud Backup Options for PostgreSQL

This blog was updated on 11/27/18 and 11/29/18 to make changes as recommended by our awesome commentors!

As with any other component of a business, databases are extremely important its inner workings.

Whether it’s the core of the business or just another component, databases should be backed up regularly, and stored in safe locations for possible future recovery.

Should I Backup To The Cloud?

A general rule is to have at least 3 copies of anything of value and to store those backups in different locations. Backups on the same drive are useless if the drive itself dies, same host backups are also at risk if the host goes down, and same building backups are also in danger if the building burns down (drastic and unlikely, but possible).

Cloud backups offer an easy solution for the need of off-site backups without having to spin up new hardware in a secondary location. There are many different cloud services that offer backup storage, and choosing the right one will depend on backup needs, size requirements, cost, and security.

The benefits of having cloud backups are many, but mainly revolve around having these backups stored in a different location than the main database, allowing us to have a safety net in the case of a disaster recovery. While we won’t go into detail about how to set up each of these backup options, we will explore some different ideas and configurations for backups.

There are some downsides to storing backups in the cloud, starting with the transfer. If the backups for the database are extremely large, it could take a long time to do the actual upload, and could even have increased costs if the cloud service charges for bandwidth transfer. Compression is highly suggested to keep time and costs low.

Security could be another concern with hosting backups in the cloud, while some companies have strict guidelines for where their data is stored and exists. If security is a concern, any backups can be encrypted before exporting them to a cloud hosting service.

Cloud Backup Options

There are several different ways to create database backups for PostgreSQL, and depending on the type of backup, recovery time, size, and infrastructure options will vary. Since many of the cloud storage solutions are simply storage with different API front ends, any clever backup solution can be created with a bit of scripting.

Snapshot Backups

Snapshots are backups that have a copy of the PostgreSQL database at a specific point in time. These backups are created either by using pg_dump, which simply dumps the database to a single file, or by copying the base data directory for PostgreSQL. Either of these can be compressed, copied to other drives and servers, and copied to the desired cloud storage option.

Using pg_dump with compression

pg_dump -Fc severalnines > severalnines.dmpData directory backup using pg_basebackup

The program pg_basebackup can be used to create a base backup very easily. For more information on all the features and how to set it up, visit the official documentation for the version of PostgreSQL being used.

pg_basebackup --format=tar -z -D severalnines_basebackupAmazon S3

With Amazon’s AWS platform, S3 is a data storage service that can be used to store database backups. While backups can be uploaded through the web interface, the Amazon CLI (Command Line Interface) can be used to do it from the command line and through backup automation scripts. Information about the AWS CLI can be found here. If backups are to be kept for a very long time, and recovery time isn’t a concern, backups can be transferred to Amazon’s Glacier service, providing much cheaper long term storage.

aws s3 cp severalnines.dmp s3://severalninesbucket/backupsAmazon also has different regions for their services all around the world. Even though they have a good uptime history, spreading copies of backups across multiple regions increases disaster recovery options, and lowers chances of losing valuable data.

Microsoft Azure Storage

Microsoft’s cloud platform, Azure, has storage options with their own command line interface, information can be found here.

az storage blob upload --container-name severalnines --file severalnines.dmp --name severalnines_backupAny other modern cloud storage services should offer similar tools to copy backups to their cloud servers, consult their documentation for details.

Standby Backups

Sometimes backups themselves can be extremely large even if compressed, and uploading a daily or weekly backup to a cloud service could be out of the question due to bandwidth speeds and/or costs. So getting a backup into the cloud for safekeeping is much harder.

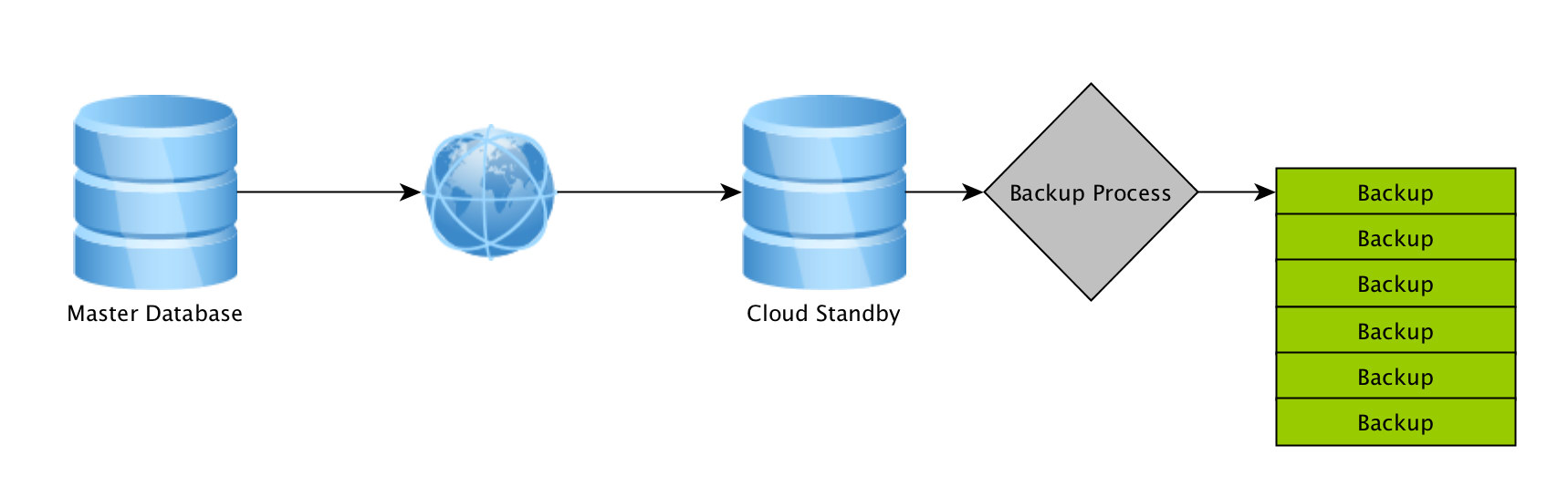

One way to do this is to have a warm or hot standby running in a cloud based Virtual Machine, such as an Amazon’s EC2 instance, where it’s an exact copy of the main master database, and the only data that is sent to the cloud instance is any changes, rather than another copy of the whole database. This would require transferring the whole database at once, but after that, only the changes need to go be transferred.

But is a standby server actually a backup? If the master database goes down, the standby can be turned into the master and applications redirected to it, however, if the goal is to have backups for a certain point in time over the past week / months, this won’t work out.

To fix this, several things can be done. The standby itself can be forced to have a delay, ingesting data only once it’s a day old for example. Another is to create backups in one of the traditional ways (pg_dump, data directory copy) on the cloud standby, meaning these backups won’t need to be transferred over the network since they are being created on the cloud machine itself. In-network transfers are usually quicker and cheaper.

ClusterControl Backups And The Cloud

Severalnines created ClusterControl, a database management system that helps manage many different databases including PostgreSQL. It’s an ultimate toolbox for any Database or System Administrator to have complete control and visibility of their databases, and includes very handy backup features.

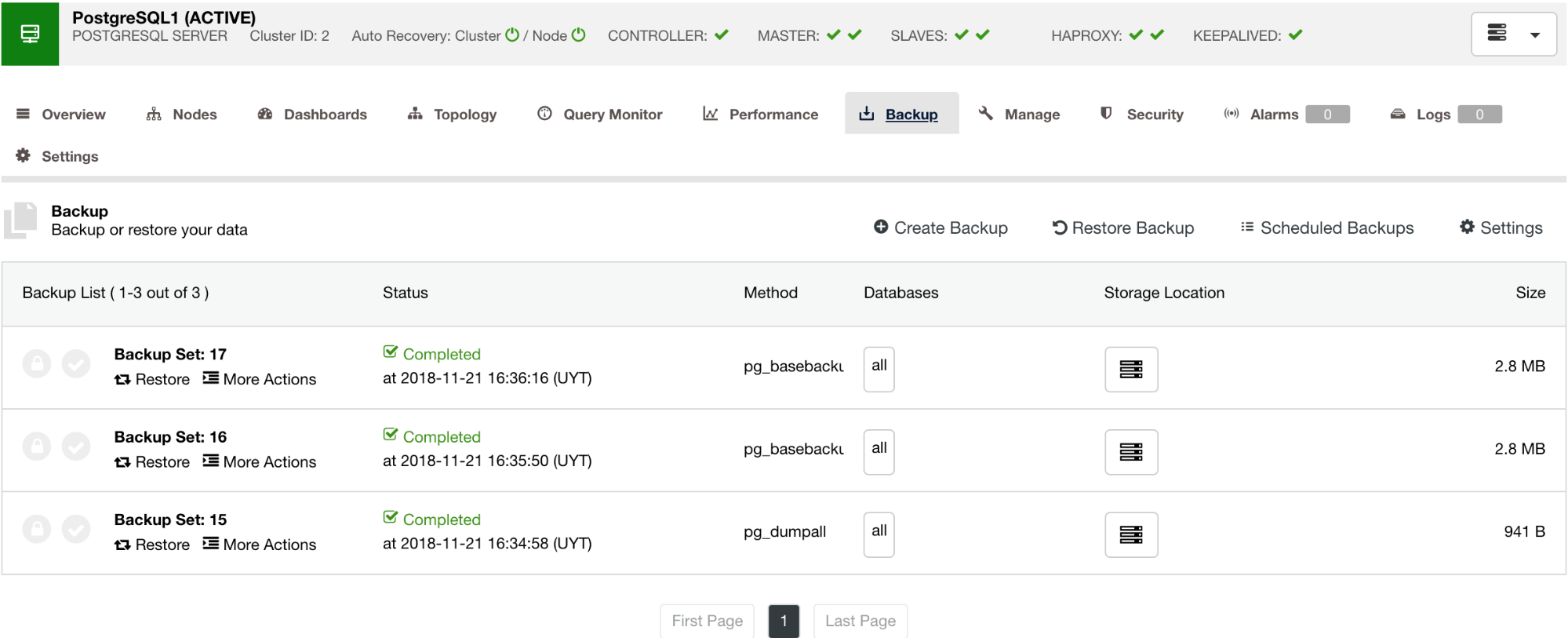

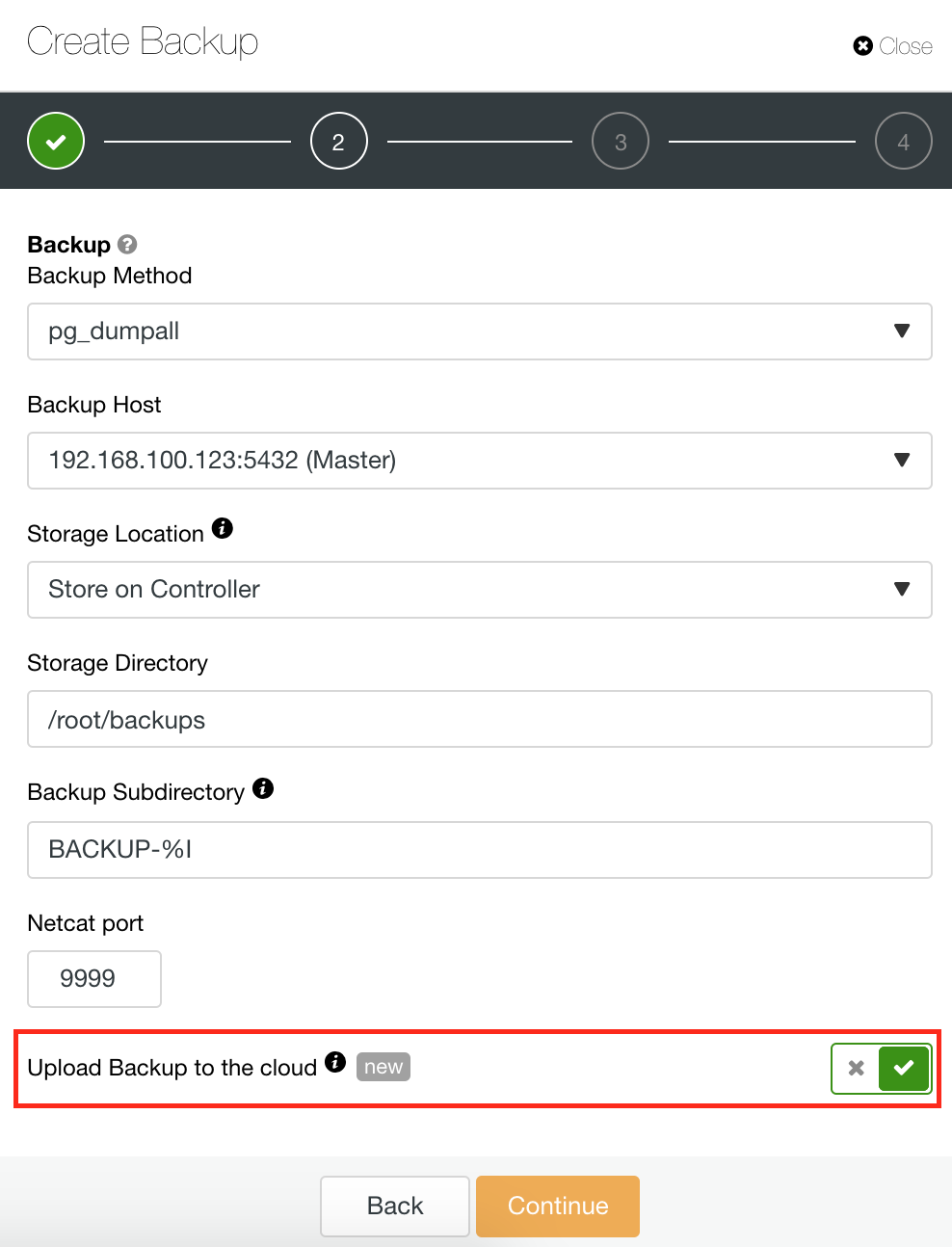

With ClusterControl, backups of PostgreSQL databases can be easily managed, scheduled, and set up to automatically copy the backups made to “cloud storage” services, including Amazon S3, Microsoft Azure, and Google Cloud. This makes it not needed to script up custom tools to upload backups to the cloud, as well as gives a nice user interface for the backups in general.

Backing our databases up should always happen, and storing them in second, third, and fourth locations is a very good and common practice. Throwing in a cloud option increases disaster recovery options, and put yet another layer of back end stability for a business, where in many cases if the database disappears, the company disappears. Exploring cloud backup options today can eliminate disaster tomorrow.