blog

Scaling Out the Moodle Database

Moodle is a very popular platform to run online courses. With the situation we see in 2020, Moodle, along with communicators like Zoom forms the backbone of the services that allow online learning and stay-at-home education. The demand put on Moodle platforms significantly increased compared to previous years. New platforms have been built, additional load has been put on the platforms that, historically, only acted as a helper tool and now they are intended to drive the whole educational effort. How to scale out the Moodle? We have a blog on this topic. How to scale the database backend for Moodle? Well, that’s another story. Let’s take a look at it as scaling out databases is not the easiest thing to do, especially if the Moodle adds its own little twist.

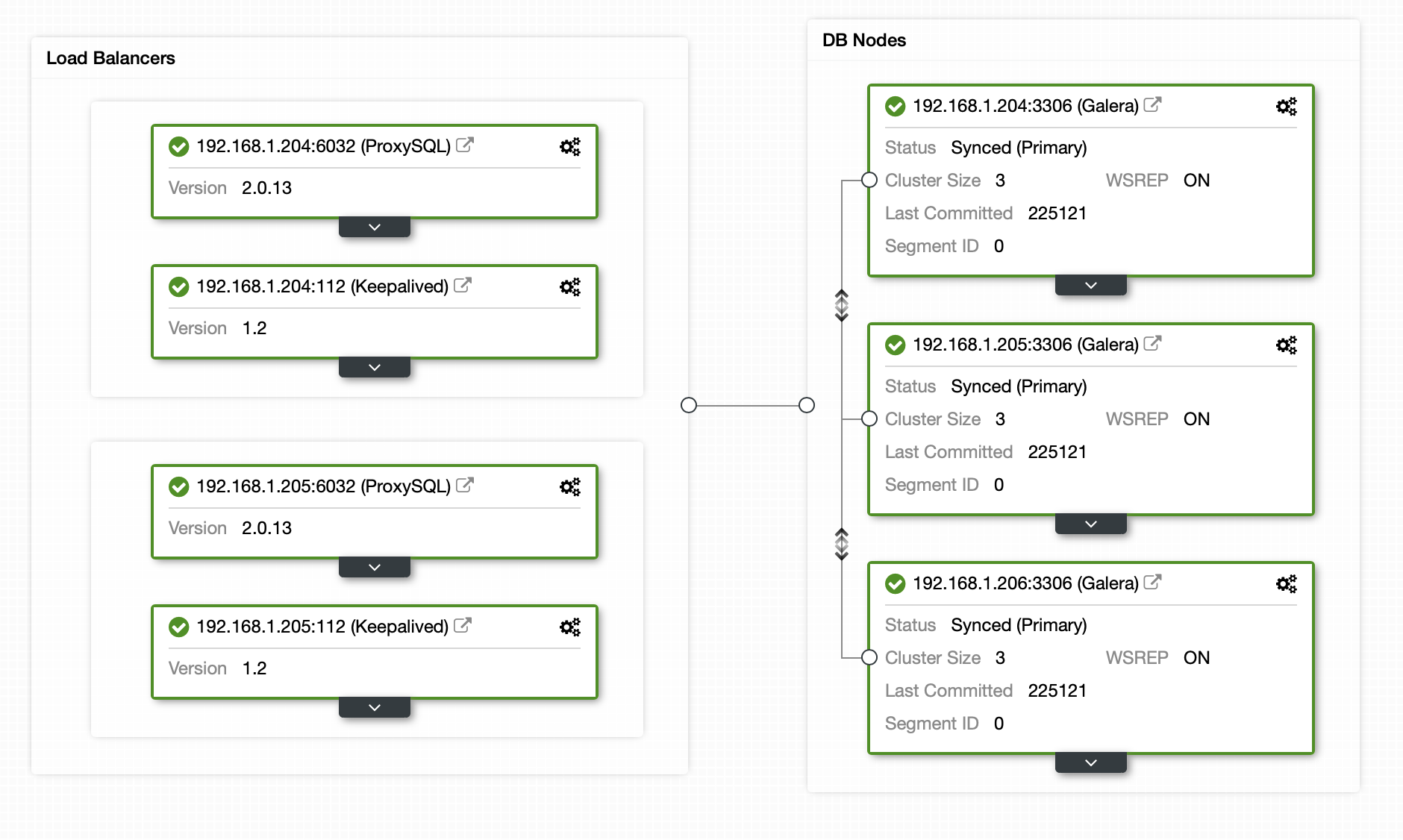

As the entry point we will use the architecture described in one of our earlier posts. MariaDB Cluster with ProxySQL and Keepalived on top of things.

As you can see, we have a three node MariaDB Cluster with ProxySQL that splits safe reads from the rest of the traffic based on the user.

dbtype = 'mysqli';

$CFG->dblibrary = 'native';

$CFG->dbhost = '192.168.1.222';

$CFG->dbname = 'moodle';

$CFG->dbuser = 'moodle';

$CFG->dbpass = 'pass';

$CFG->prefix = 'mdl_';

$CFG->dboptions = array (

'dbpersist' => 0,

'dbport' => 6033,

'dbsocket' => '',

'dbcollation' => 'utf8mb4_general_ci',

'readonly' => [

'instance' => [

'dbhost' => '192.168.1.222',

'dbport' => 6033,

'dbuser' => 'moodle_safereads',

'dbpass' => 'pass'

]

]

);

$CFG->wwwroot = 'http://192.168.1.200/moodle';

$CFG->dataroot = '/var/www/moodledata';

$CFG->admin = 'admin';

$CFG->directorypermissions = 0777;

require_once(__DIR__ . '/lib/setup.php');

// There is no php closing tag in this file,

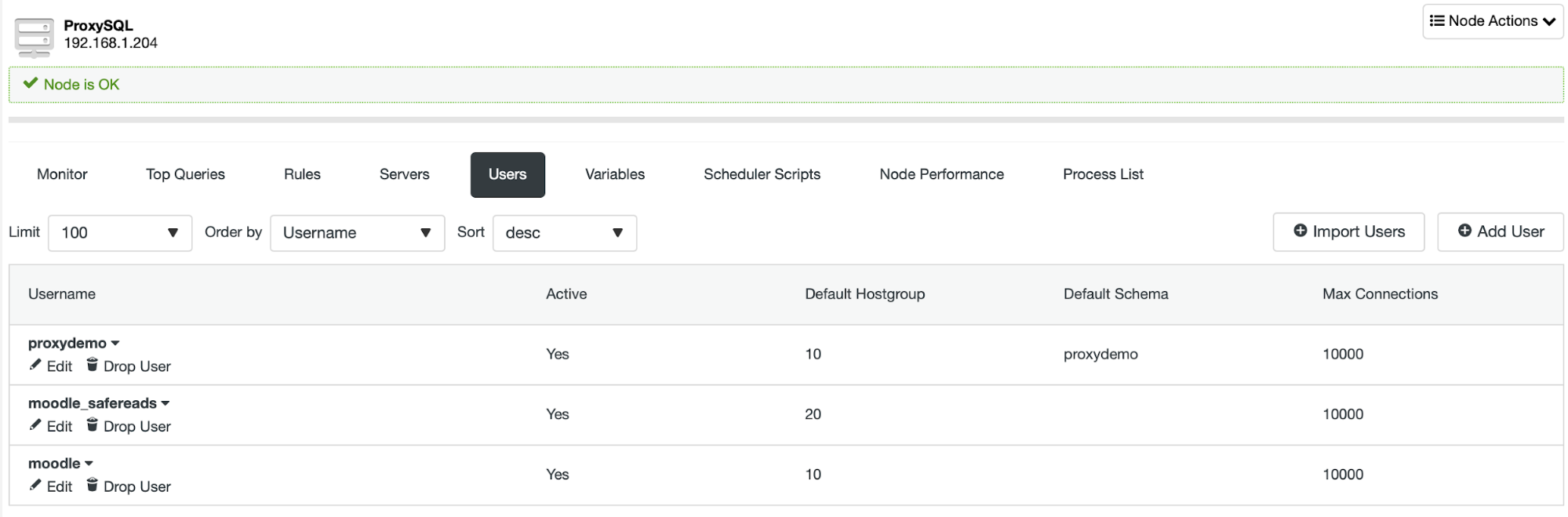

// it is intentional because it prevents trailing whitespace problems!User, as shown above, is defined in the Moodle configuration file. This allows us to automatically and safely send writes and all SELECT statements that require data consistency to the writer node while still sending some of the SELECTs to the remaining nodes in the MariaDB Cluster.

Let’s assume that this particular setup is not enough for us. What are the options that we have? We have two main elements in the setup – MariaDB Cluster and ProxySQL. We’ll consider issues on both sides:

- What can be done if the ProxySQL instance cannot cope with traffic?

- What can be done if MariaDB Cluster cannot cope with traffic?

Let’s start with the first scenario.

ProxySQL Instance is Overloaded

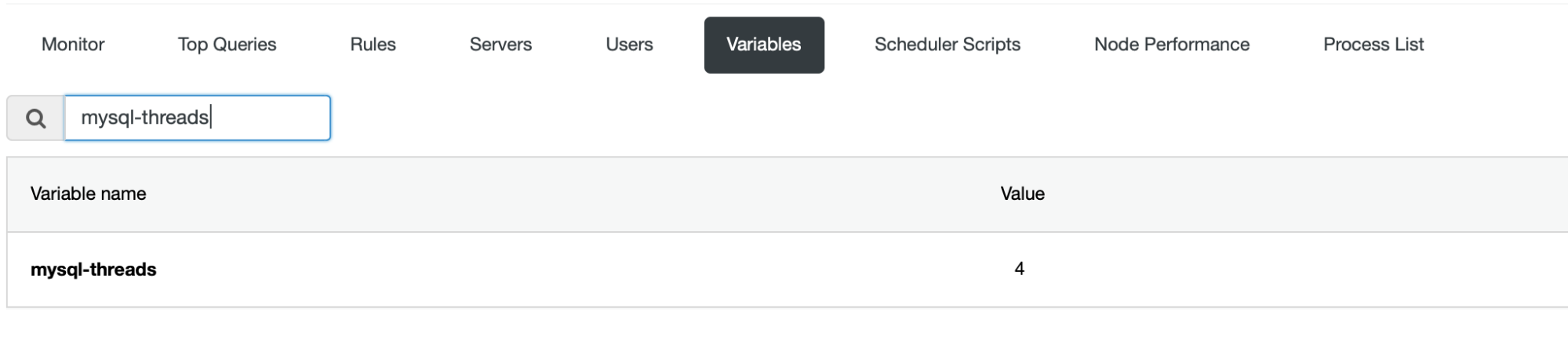

In the current environment only one ProxySQL instance can be handling the traffic – the one that Virtual IP points to. This leaves us with a ProxySQL instance that is acting as a standby – up and running but not used for anything. If the active ProxySQL instance is getting close to CPU saturation, there are a couple of things you may want to do. First, obviously, you can scale vertically – increasing the size of a ProxySQL instance might be the easiest way to let it handle higher traffic. Please keep in mind that ProxySQL, by default, is configured to use 4 threads.

If you want to be able to utilize more CPU cores, this is the setting you need to change as well.

Alternatively, you can attempt to scale out horizontally. Instead of using two ProxySQL instances with VIP you can collocate ProxySQL with Moodle hosts. Then you want to reconfigure Moodle to connect to ProxySQL on the local host, ideally through the Unix socket – it is the most efficient way of connecting to ProxySQL. There is not much of a configuration that we use with ProxySQL therefore using multiple instances of ProxySQL should not add too much of the overhead. If you want, you can always setup ProxySQL Cluster to help you to keep the ProxySQL instances in sync regarding the configuration.

MariaDB Cluster is Overloaded

Now we are talking about a more serious issue. Of course, increasing the size of the instances will help, as usual. On the other hand, horizontal scale out is somewhat limited because of the “safe reads” limitation. Sure, you can add more nodes to the cluster but you can use them only for the safe reads. To what extent this lets you scale out, it depends on the workload. For pure read-only workload (browsing through the contents, forums etc) it looks quite nice:

MySQL [(none)]> SELECT hostgroup, srv_host, srv_port, status, queries FROM stats_mysql_connection_pool WHERE hostgroup IN (20, 10) AND status='ONLINE';

+-----------+---------------+----------+--------+---------+

| hostgroup | srv_host | srv_port | status | Queries |

+-----------+---------------+----------+--------+---------+

| 20 | 192.168.1.204 | 3306 | ONLINE | 5683 |

| 20 | 192.168.1.205 | 3306 | ONLINE | 5543 |

| 10 | 192.168.1.206 | 3306 | ONLINE | 553 |

+-----------+---------------+----------+--------+---------+

3 rows in set (0.002 sec)This is pretty much a ratio of 1:20 – for one query that hits the writer we have 20 “safe reads” that can be spread across the remaining nodes. On the other hand, when we start to modify the data, the ratio quickly changes.

MySQL [(none)]> SELECT hostgroup, srv_host, srv_port, status, queries FROM stats_mysql_connection_pool WHERE hostgroup IN (20, 10) AND status='ONLINE';

+-----------+---------------+----------+--------+---------+

| hostgroup | srv_host | srv_port | status | Queries |

+-----------+---------------+----------+--------+---------+

| 20 | 192.168.1.204 | 3306 | ONLINE | 3117 |

| 20 | 192.168.1.205 | 3306 | ONLINE | 3010 |

| 10 | 192.168.1.206 | 3306 | ONLINE | 6807 |

+-----------+---------------+----------+--------+---------+

3 rows in set (0.003 sec)This is an output after issuing several grades, creating forum topics and adding some course content. As you can see, with such a safe/unsafe queries ratio the writer will be saturated earlier than the readers therefore scaling out by adding more nodes is not suitable.

What can be done about it? There is a setting called “latency”. As per the configuration file, it determines when it is safe to read the table after the write. When write happens, the table is marked as modified and for the “latency” time all SELECTs will be sent to the writer node. Once the time longer than “latency” passed, SELECTs from that table may again be sent to read nodes. Please keep in mind that with MariaDB Cluster, time required for writeset to be applied across all of the nodes is typically very low, counted in milliseconds. This would allow us to set the latency quite low in the Moodle configuration file, for example the value like 0.1s (100 milliseconds) should be quite ok. Of course, should you run into any problems, you can always increase this value even further.

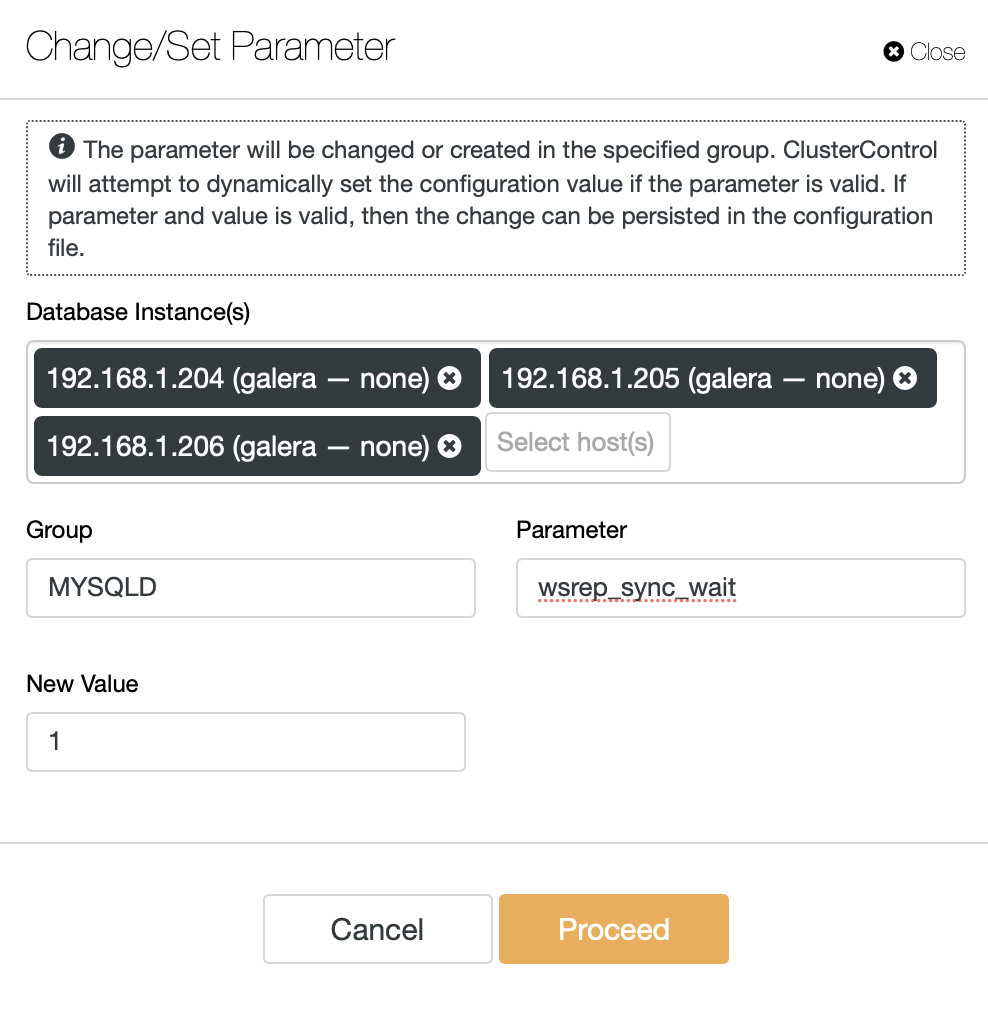

Another option to test would be to rely purely on MariaDB Cluster to tell when the read is safe and when it is not. There is a wsrep_sync_wait variable that can be configured to force causality checks on several access patterns (reads, updates, inserts, deletes, replaces and SHOW commands). For our purpose it would be enough to ensure that reads are executed with the causality enforced thus we shall set this variable to ‘1’.

We are going to make this change on all of the MariaDB Cluster nodes. We will also need to reconfigure ProxySQL for read/write split based on the query rules, not just the users, as we had previously. We will also remove the ‘moodle_safereads’ user as it is not needed anymore in this setup.

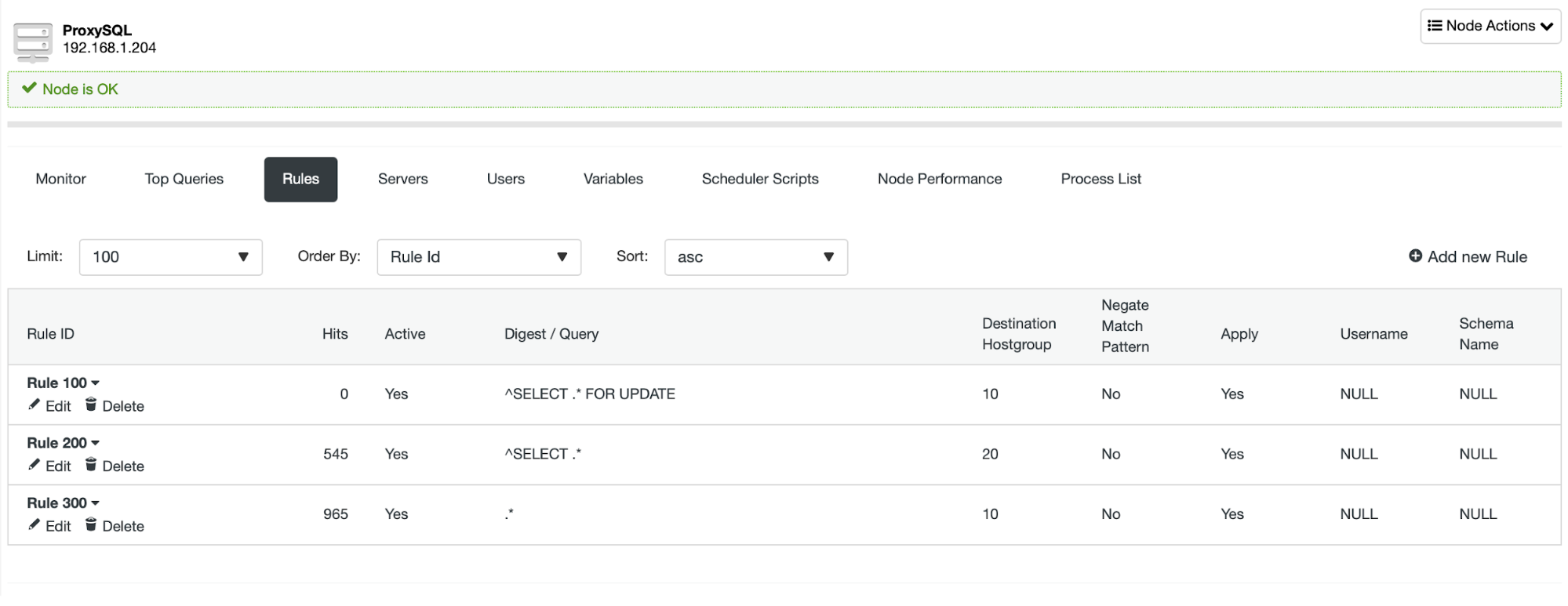

We set up three query rules that distribute the traffic based on the query. SELECT … FOR UPDATE is sent to the writer node, all SELECT queries are sent to readers and everything else (INSERT, DELETE, REPLACE, UPDATE, BEGIN, COMMIT and so on) is sent to the writer node as well.

This allows us to ensure that all the reads can be spread across the reader nodes thus allowing horizontal scale out through adding more nodes to the MariaDB Cluster.

We hope with those couple of tips you will be able to scale out your Moodle database backend much easier and to a greater extent