blog

What's New in MariaDB Cluster 10.4

In one of the previous blogs, we covered new features which are coming out in MariaDB 10.4. We mentioned there that included in this version will be a new Galera Cluster release. In this blog post we will go over the features of Galera Cluster 26.4.0 (or Galera 4), take a quick look at them, and explore how they will affect your setup when working with MariaDB Galera Cluster.

Streaming Replication

Galera Cluster is by no means a drop-in replacement for standalone MySQL. The way in which the writeset certification works introduced several limitations and edge cases which may seriously limit the ability to migrate into Galera Cluster. The three most common limitations are…

- Problems with long transactions

- Problems with large transactions

- Problems with hot-spots in tables

What’s great to see is that Galera 4 introduces Streaming Replication, which may help in reducing these limitations. Let’s review the current state in a little more detail.

Long Running Transactions

In this case we are talking timewise, which are definitely problematic in Galera. The main thing to understand is that Galera replicates transactions as writesets. Those writesets are certified on the members of the cluster, ensuring that all nodes can apply given writeset. The problem is, locks are created on the local node, they are not replicated across the cluster therefore if your transaction takes several minutes to complete and if you are writing to more than one Galera node, with time it is more and more likely that on one of the remaining nodes some transactions will modify some of the rows updated in your long-running transaction. This will cause certification to fail and long running transaction will have to be rolled back. In short, given you send writes to more than one node in the cluster, longer the transaction, the more likely it is to fail certification due to some conflict.

Hotspots

By that we mean rows, which are frequently updated. Typically it’s some sort of a counter that’s being updated over and over again. The culprit of the problem is the same as in long transactions – rows are locked only locally. Again, if you send writes to more than one node, it is likely that the same counter will be modified at the same time on more than one node, causing conflicts and making certification fail.

For both those problems there is one solution – you can send your writes to just one node instead of distributing them across the whole cluster. You can use proxies for that – ClusterControl deploys HAProxy and ProxySQL, both can be configured so that writes will be sent to only one node. If you cannot send writes to one node only, you have to accept you will be seeing certification conflicts and rollbacks from time to time. In general, application has to be able to handle rollbacks from the database – there is no way around that, but it is even more important when application works with Galera Cluster.

Still, sending the traffic to one node is not enough to handle third problem.

Large Transactions

What is important to keep in mind is that the writeset is sent for certification only when the transaction completes. Then, the writeset is sent to all nodes and the certification process takes place. This induces limits on how big the single transaction can be as Galera, when preparing writeset, stores it in in-memory buffer. Too large transactions will reduce the cluster performance. Therefore two variables has been introduced: wsrep_max_ws_rows, which limits the number of rows per transaction (although it can be set to 0 – unlimited) and, more important: wsrep_max_ws_size, which can be set up to 2 GB. So, the largest transaction you can run with Galera Cluster is up to 2GB in size. Also, you have to keep in mind that certification and applying of the large transaction also takes time, creating “lag” – read after write, that hit node other than where you initially committed the transaction, will most likely result in incorrect data as the transaction is still being applied.

Galera 4 comes with Streaming Replication, which can be used to mitigate all those problems. The main difference will be that the writeset now can be split into parts – no longer it will be needed to wait for the whole transaction to finish before data will be replicated. This may make you wonder – how the certification look like in such case? In short, certification is on the fly – each fragment is certified and all involved rows are locked on all of the nodes in the cluster. This is a serious change in how Galera works – until now locks were created locally, with streaming replication locks will be created on all of the nodes. This helps in the cases we discussed above – locking rows as transaction fragments come in, helps to reduce the probability that transaction will have to be rolled back. Conflicting transactions executed locally will not be able to get the locks they need and will have to wait for the replicating transaction to complete and release the row locks.

In the case of hotspots, with streaming replication it is possible to get the locks on all of the nodes when updating the row. Other queries which want to update the same row will have to wait for the lock to be released before they will execute their changes.

Large transactions will benefit from the streaming replication because it will no longer be needed to wait for the whole transaction to finish nor they will be limited by the transaction size – large transaction will be split into fragments. It also helps to utilize network better – instead of sending 2GB of data at once the same 2GB of data can be split into fragments and sent over a longer period of time.

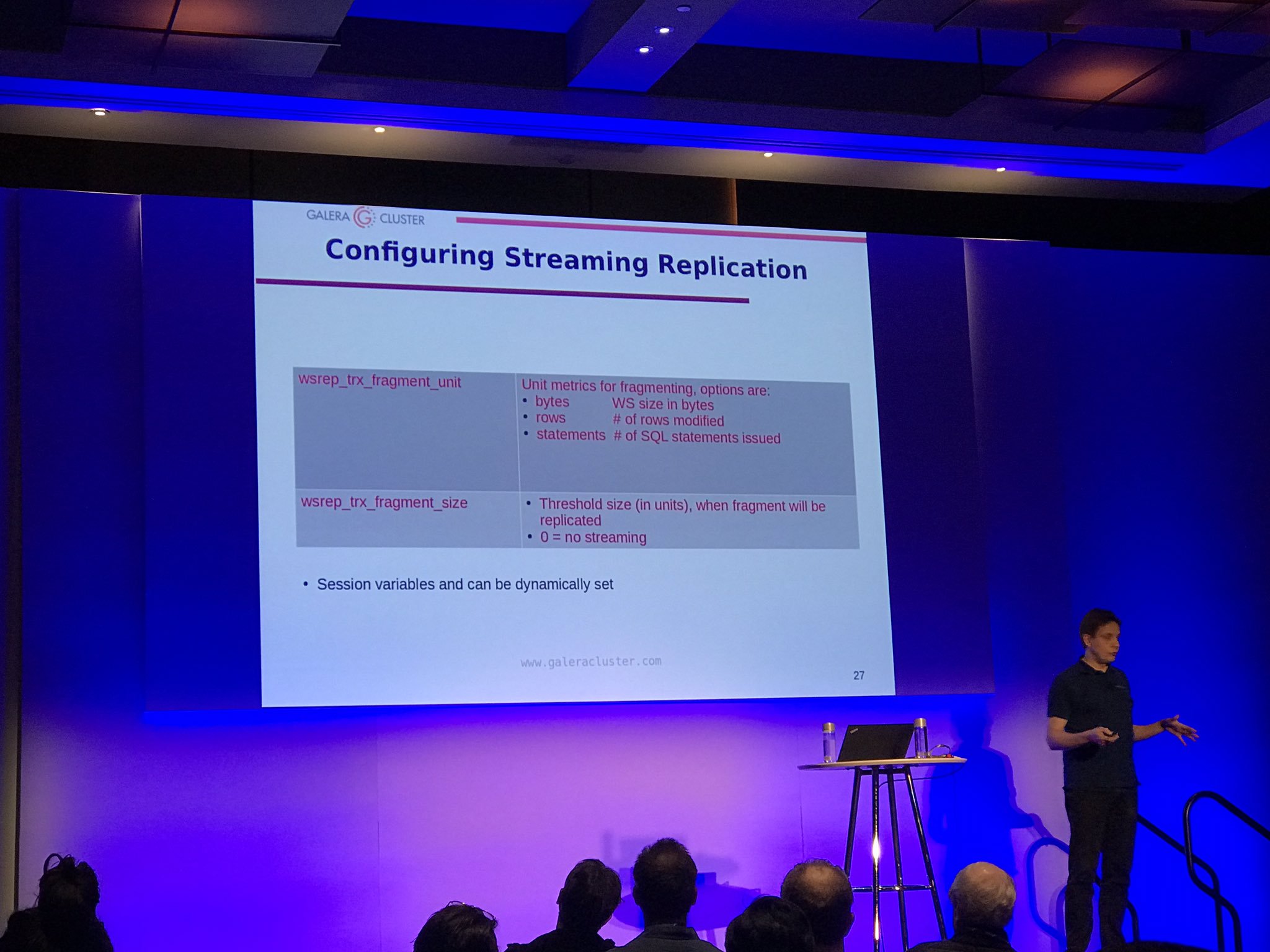

There are two configuration options for streaming replication: wsrep_trx_fragment_size, which tells how big a fragment should be (by default it is set to 0, which means that the streaming replication is disabled) and wsrep_trx_fragment_unit, which tells what the fragment really is. By default it is bytes, but it can also be a ‘statements’ or ‘rows’. Those variables can (and should) be set on a session level, making it possible for user to decide which particular query should be replicated using streaming replication. Setting unit to ‘statements’ and size to 1 allow, for example, to use streaming replication just for a single query which, for example, updates a hotspot.

Of course, there are drawbacks of running the streaming replication, mainly due to the fact that locks are now taken on all nodes in the cluster. If you have seen large transaction rolling back for ages, now such transaction will have to roll back on all of the nodes. Obviously, the best practice is to reduce the size of a transaction as much as possible to avoid rollbacks taking hours to complete. Another drawback is that, for the crash recovery reasons, writesets created from each fragment are stored in wsrep_schema.SR table on all nodes, which, sort of, implements double-write buffer, increasing the load on the cluster. Therefore you should carefully decide which transaction should be replicated using the streaming replication and, as long as it is feasible, you should still stick to the best practices of having small, short transactions or splitting the large transaction into smaller batches.

Backup Locks

Finally, MariaDB users will be able to benefit from backup locks for SST. The idea behind SST executed using (for MariaDB) mariabackup is that the whole dataset has to be transferred, on the fly, with redo logs being collected in the background. Then, a global lock has to be acquired, ensuring that no write will happen, final position of the redo log has to be collected and stored. Historically, for MariaDB, the locking part was performed using FLUSH TABLES WITH READ LOCK which did its job but under heavy load it was quite hard to acquire. It is also pretty heavy – not only transactions have to wait for the lock to be released but also the data has to be flushed to disk. Now, with MariaDB 10.4, it will be possible to use less intrusive BACKUP LOCK, which will not require data to be flushed, only commits will be blocked for the duration of the lock. This should mean less intrusive SST operations, which is definitely great to hear. Everyone who had to run their Galera Cluster in emergency mode, on one node, keeping fingers crossed that SST will not impact cluster operations should be more than happy to hear about this improvement.

Causal Reads From the Application

Galera 4 introduced three new functions which are intended to help add support for causal reads in the applications – WSREP_LAST_WRITTEN_GTID(), which returns GTID of the last write made by the client, WSREP_LAST_SEEN_GTID(), which returns the GTID of the last write transaction observed by the client and WSREP_SYNC_WAIT_UPTO_GTID(), which will block the client until the GTID passed to the function will be committed on the node. Sure, you can enforce causal reads in Galera even now, but by utilizing those functions it will be possible to implement safe read after write in those parts of the application where it is needed, without having a need to make changes in Galera configuration.

Upgrading to MariaDB Galera 10.4

If you would like to try Galera 4, it is available in the latest release candidate for MariaDB 10.4. As per MariaDB documentation, at this moment there is no way to do a live upgrade of 10.3 Galera to 10.4. You have to stop the whole 10.3 cluster, upgrade it to 10.4 and then start it back. This is a serious blocker and we hope this limitation will be removed in one of the next versions. It is of utmost importance to have the option for a live upgrade and for that both MariaDB 10.3 and MariaDB 10.4 will have to coexist in the same Galera Cluster. Another option, which also may be suitable, is to set up asynchronous replication between old and new Galera Cluster.

We really hope you enjoyed this short review of the features of MariaDB 10.4 Galera Cluster, we are looking forward to see streaming replication in real live production environments. We also hope those changes will help to increase Galera adoption even further. After all, streaming replication solves many issues which can prevent people from migrating into Galera.