Deploying a Hybrid Infrastructure Environment for Percona XtraDB Cluster

A hybrid infrastructure environment is a mix of active hosts residing on both on-premises and cloud infrastructures in a single operational or distributed system. Percona XtraDB Cluster, one of the Galera Cluster variants, can run on a hybrid infrastructure environment with a bit of tuning so that it tolerates the latency and connectivity problems that come with spanning multiple sites.

In this blog post, we will look into how to deploy Percona XtraDB Cluster on a hybrid infrastructure environment, split between an on-premises datacenter and cloud infrastructure running on AWS. This setup allows us to bring the database closer to the clients and applications residing in a cloud environment while keeping a copy of the database on-premises for disaster recovery and live backup purposes.

Considerations for a Hybrid Environment

In a hybrid environment, it is better to use an easily distinguishable fully-qualified domain name (FQDN) for every database node in a cluster, instead of using the IP address as an identifier. The simplest way to achieve this is host mapping, where we define all the hosts inside /etc/hosts on all nodes in the cluster:

# content of /etc/hosts on every node
54.129.6.197 cc.pxc-hybrid.mycompany.com cc
18.131.73.6 db1.pxc-hybrid.mycompany.com db1
18.116.111.116 db2.pxc-hybrid.mycompany.com db2
202.86.57.37 db3.pxc-hybrid.mycompany.com db3

Make sure the firewalls are configured to allow communication on these ports: 3306, 4444, 4567, and 4568. If you use ClusterControl to manage the cluster, also allow port 9999 and SSH (default is 22).
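As a rough sketch of the firewall step, the helper below prints one rule per peer address for the four database ports listed above. It assumes the hosts use ufw, which this post does not otherwise require, and the function name print_galera_ufw_rules is made up for illustration. It only prints the commands so they can be reviewed before being run with sudo. On AWS, the same ports would typically also need to be opened in the instance's security group.

```shell
#!/usr/bin/env bash
# Hypothetical helper (assumes ufw is the host firewall): print, but do not
# run, rules that open the database ports to each peer address.

# 3306 = MySQL, 4444 = SST, 4567 = group communication, 4568 = IST
GALERA_TCP_PORTS="3306,4444,4567,4568"

print_galera_ufw_rules() {
    local peer
    for peer in "$@"; do
        echo "ufw allow from ${peer} to any port ${GALERA_TCP_PORTS} proto tcp"
    done
}
```

Calling print_galera_ufw_rules with the peer addresses from /etc/hosts prints one line per peer, which can then be executed with sudo on the node being configured.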

Note that Galera Cluster is sensitive to network conditions due to its virtually synchronous nature. The farther apart the Galera nodes in a cluster are, the higher the latency, and the longer it takes to distribute and certify writesets, which limits write throughput. Also, if the connectivity is not stable, cluster partitioning can easily happen, which could trigger cluster synchronization on the joiner nodes. In some cases, this can introduce instability to the cluster.

One recommendation is to increase Galera's heartbeat interval and health check timeout parameters to tolerate transient network connectivity failures between geographical locations. The following parameters can tolerate 30-second connectivity outages:

wsrep_provider_options = "{any existing_configurations};evs.keepalive_period = PT3S;evs.suspect_timeout = PT30S;evs.inactive_timeout = PT1M; evs.install_timeout = PT1M"

When configuring these parameters, consider the following:

- Set evs.keepalive_period (default is PT1S) higher than the highest round trip time (RTT) between nodes. The default value of 1 second is good for 10-100 milliseconds latency. A simple rule of thumb is 5 to 10 times the maximum RTT reported by ping.

- Set evs.suspect_timeout (default is PT5S) as high as possible to avoid partitions. Partitions cause state transfers, which can affect performance.

- Set evs.inactive_timeout (default is PT15S) to a value higher than that of evs.suspect_timeout.

- Set evs.install_timeout (default is PT15S) to a value higher than that of evs.inactive_timeout.

By increasing the above parameters, we reduce the risk of cluster partitioning, which helps to stabilize the cluster on an unstable network.
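To make the rule of thumb for the keepalive period concrete, here is a minimal sketch that turns a measured maximum RTT into a candidate value. The function name suggest_keepalive_period and the choice to round up to whole seconds are assumptions made for this example, not something Galera prescribes. The maximum RTT can be read from the "max" field of the summary line that ping prints, rounded up to whole milliseconds.

```shell
#!/usr/bin/env bash
# Hypothetical helper: suggest evs.keepalive_period from the worst observed
# round trip time, using the "5 to 10 times max RTT" rule of thumb.
#   $1 = maximum RTT in whole milliseconds
#   $2 = multiplier between 5 and 10 (defaults to 10)
suggest_keepalive_period() {
    local max_rtt_ms="$1" factor="${2:-10}"
    # Round up to whole seconds.
    local seconds=$(( (max_rtt_ms * factor + 999) / 1000 ))
    # Never suggest less than the 1-second default.
    if (( seconds < 1 )); then
        seconds=1
    fi
    echo "PT${seconds}S"
}
```

For instance, a maximum RTT of 300 milliseconds with the default multiplier of 10 yields PT3S, while a 20-millisecond RTT stays at the PT1S default.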

Also, consider setting a different gmcast.segment value on the database node that resides on the secondary site. In this example, we are going to set gmcast.segment=1 on db3, while the rest of the nodes will use the default value 0. With this configuration, Galera optimizes communication to minimize the amount of traffic between network segments, including writeset relaying and IST and SST donor selection.

Deploying a Hybrid Percona XtraDB Cluster

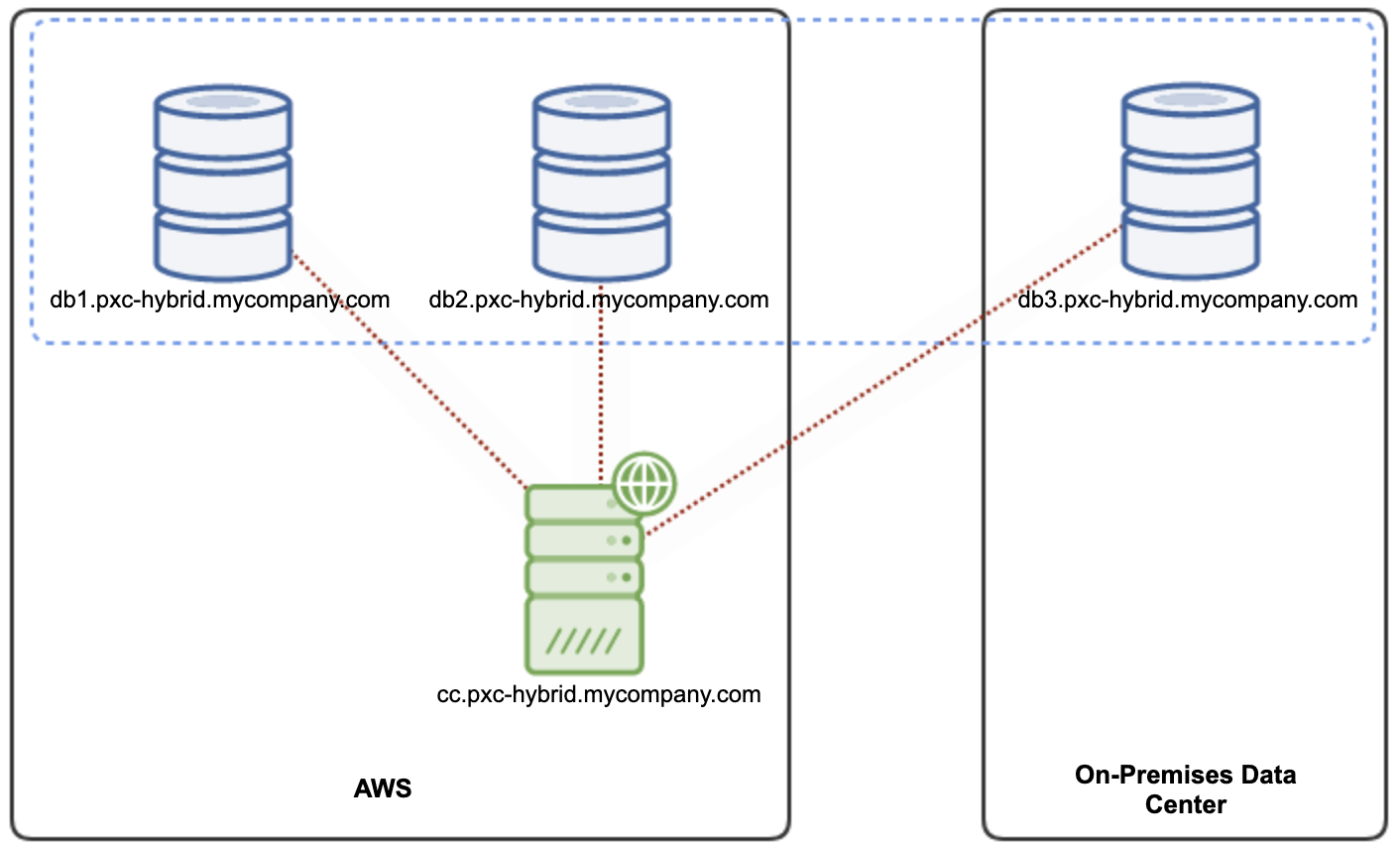

Our deployment consists of 4 nodes – One node for ClusterControl and 3 nodes Percona XtraDB Cluster, where 2 of the database nodes are located in the AWS data center as the primary datacenter (as well as ClusterControl), while the third node is located in the on-premises datacenter. The following diagram illustrates our final architecture:

All instructions provided here are based on Ubuntu Server 18.04 machines. We will deploy our cluster using ClusterControl Community Edition, where the deployment and monitoring features are free forever.

First of all, we need to modify the MySQL configuration template that will be used for the deployment. Some amendments are required to tune for our hybrid infrastructure setup, as discussed in the previous section.

Start by creating the custom template directory under the /etc/cmon directory:

$ sudo mkdir -p /etc/cmon/templates

Then, copy the default template file for our target deployment, Percona XtraDB Cluster 8.0, which is called my.cnf.80-pxc and lives under /usr/share/cmon/templates. We are going to name the copy my.cnf.80-pxc-hybrid:

$ sudo cp /usr/share/cmon/templates/my.cnf.80-pxc /etc/cmon/templates/my.cnf.80-pxc-hybrid

Then modify the content using your favorite text editor:

$ sudo vim /etc/cmon/templates/my.cnf.80-pxc-hybrid

From:

wsrep_provider_options="base_port=4567; gcache.size=@GCACHE_SIZE@; gmcast.segment=@SEGMENTID@;socket.ssl_key=server-key.pem;socket.ssl_cert=server-cert.pem;socket.ssl_ca=ca.pem @WSREP_EXTRA_OPTS@"

To:

wsrep_provider_options="evs.keepalive_period = PT3S;evs.suspect_timeout = PT30S;evs.inactive_timeout = PT1M; evs.install_timeout = PT1M;base_port=4567; gcache.size=@GCACHE_SIZE@; gmcast.segment=@SEGMENTID@;socket.ssl_key=server-key.pem;socket.ssl_cert=server-cert.pem;socket.ssl_ca=ca.pem @WSREP_EXTRA_OPTS@"

Now, our configuration template is ready to be used for deployment. Next, set up passwordless SSH from the ClusterControl node to all database nodes:

$ whoami
ubuntu
$ ssh-keygen -t rsa # press Enter on all prompts

Retrieve the public key content on the ClusterControl host:

$ cat /home/ubuntu/.ssh/id_rsa.pub

Paste the output of the above command inside /home/ubuntu/.ssh/authorized_keys on all database nodes. If the authorized_keys file does not exist, create it beforehand as the corresponding sudo user. In this example, the user is "ubuntu":

$ whoami
ubuntu
$ mkdir -p /home/ubuntu/.ssh/
$ touch /home/ubuntu/.ssh/authorized_keys
$ chmod 600 /home/ubuntu/.ssh/authorized_keys

We can verify that passwordless SSH is configured correctly by running the following command on the ClusterControl host:

$ ssh -i /home/ubuntu/.ssh/id_rsa [email protected] "sudo ls -al /root"

Make sure you see a directory listing for the root user without being prompted for a password. This means our passwordless configuration is correct.
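The same check can be repeated for every database node in one pass. The following is a minimal sketch, not part of ClusterControl: the function name check_passwordless_ssh is hypothetical, and it assumes the default key generated earlier is picked up automatically by ssh. It relies on two standard options: BatchMode=yes makes ssh fail instead of prompting for a password, and sudo -n makes sudo fail instead of prompting.

```shell
#!/usr/bin/env bash
# Hypothetical helper: confirm key-based SSH and passwordless sudo on each node.
#   $1 = SSH user, remaining arguments = database node host names
check_passwordless_ssh() {
    local user="$1" host failed=0
    shift
    for host in "$@"; do
        # Both ssh and sudo are told to fail rather than ask for a password.
        if ssh -o BatchMode=yes -o ConnectTimeout=10 "${user}@${host}" "sudo -n true"; then
            echo "OK   ${host}"
        else
            echo "FAIL ${host}"
            failed=1
        fi
    done
    # Non-zero exit status if any node failed the check.
    return "$failed"
}
```

Run it on the ClusterControl host with the SSH user followed by the FQDNs of the database nodes. Any node reported as FAIL needs its authorized_keys file or sudo configuration revisited before starting the deployment.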

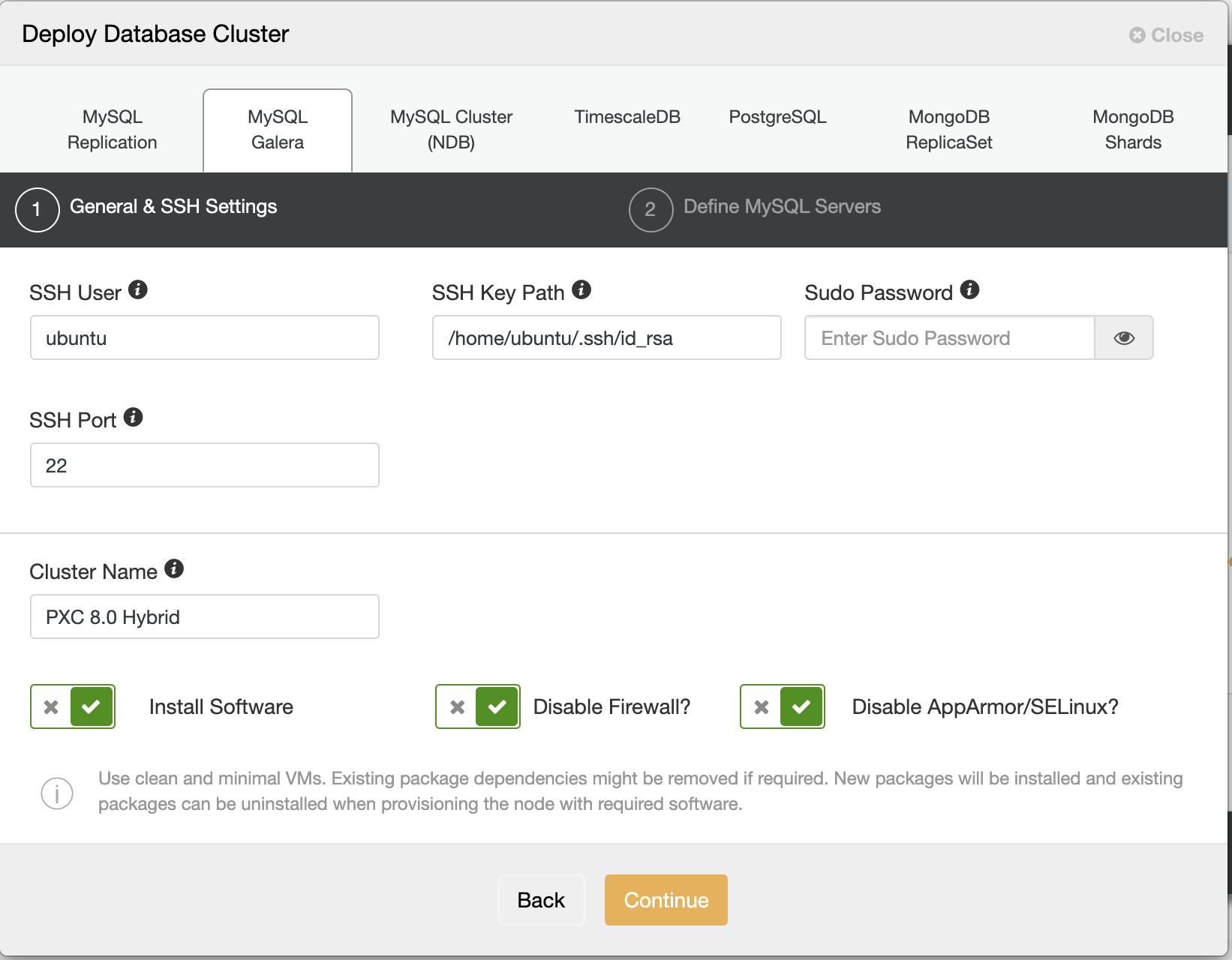

Go to ClusterControl -> Deploy -> MySQL Galera and specify the SSH credentials as follows:

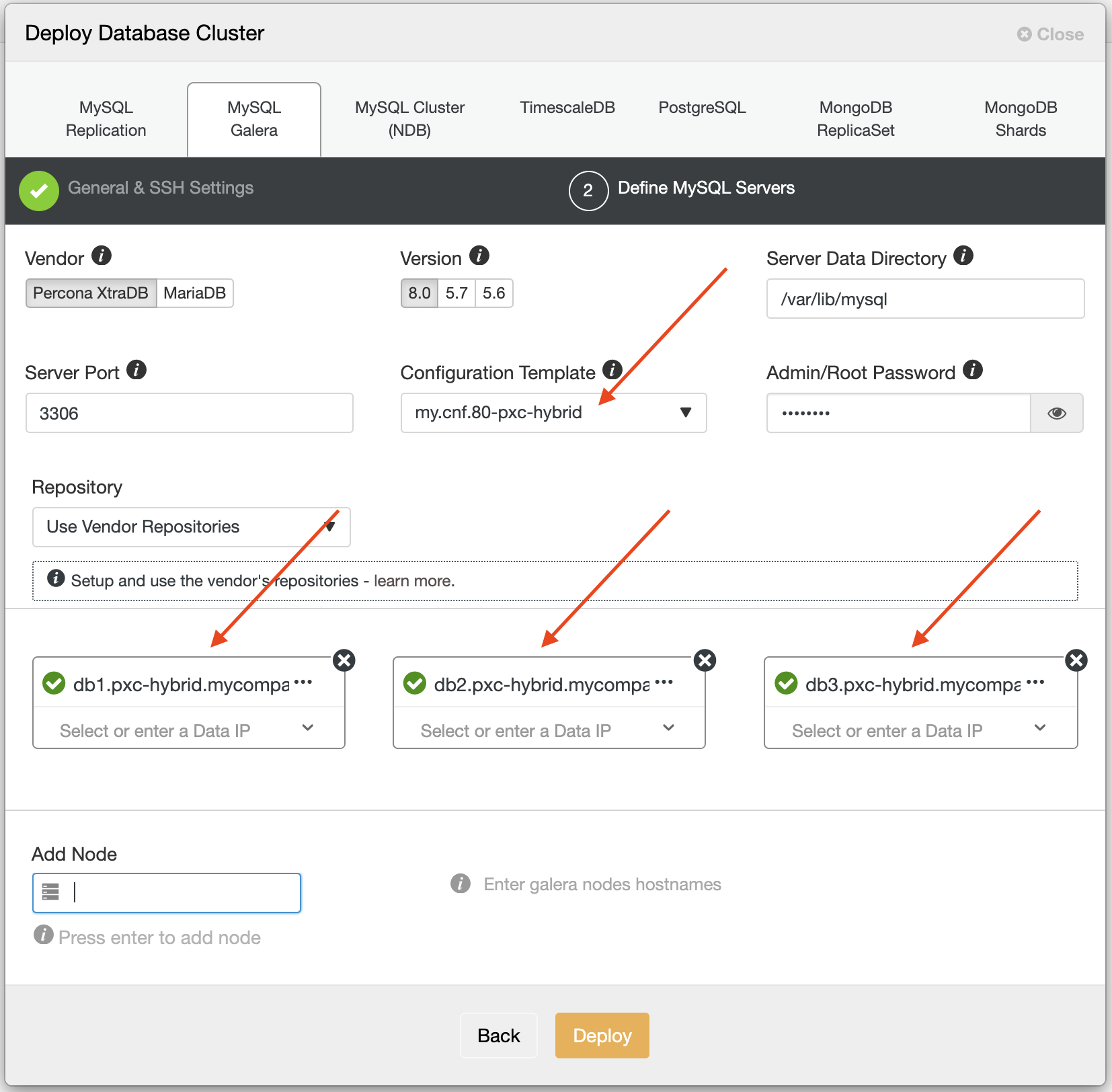

On the second step, “Define MySQL Servers”, specify the template that we have prepared under /etc/cmon/templates/my.cnf.80-pxc-hybrid, and specify the FQDN of all database nodes, as highlighted in the following screenshot:

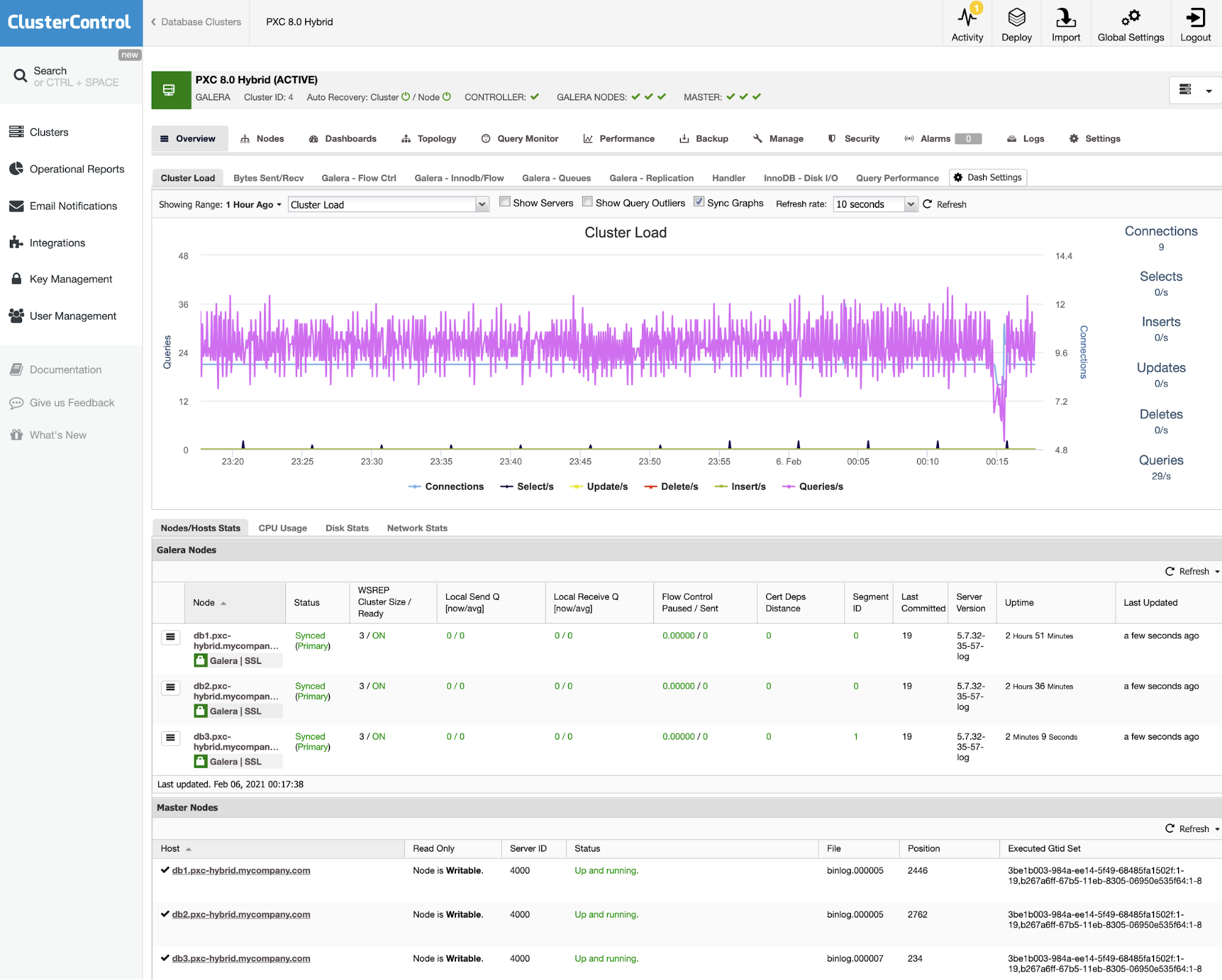

Make sure you get a green tick icon next to the database FQDN name, indicating ClusterControl is able to connect to the database nodes to perform the deployment. Click on the “Deploy” button to start the deployment and you can monitor the deployment progress under Activity -> Jobs -> Create Cluster. After the deployment is finished, you should see the database cluster is listed in the ClusterControl dashboard:

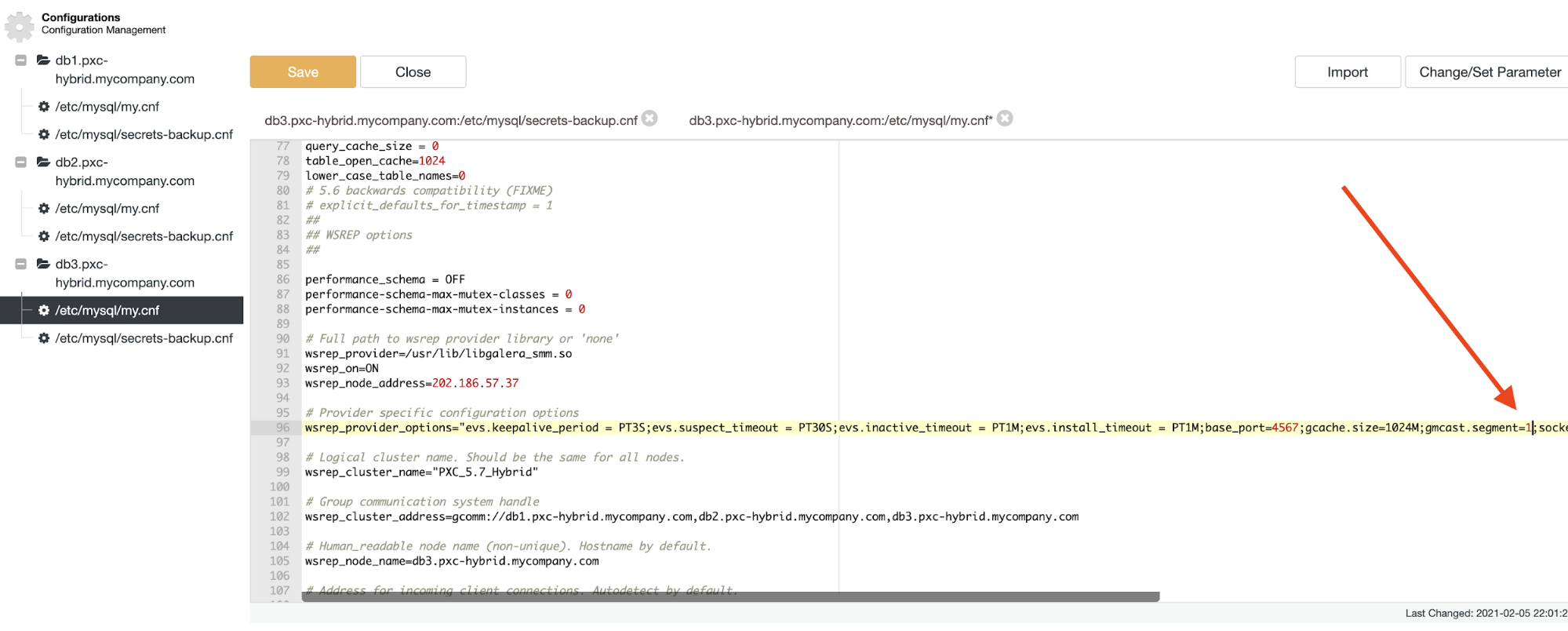

As the final step, we have to define a different segment ID for db3 since it is located away from the majority of the nodes. To achieve this, we need to append “gmcast.segment=1” setting to the wsrep_provider_options on db3. You can perform the modification manually inside the MySQL configuration file, or you can use ClusterControl Configuration Manager under ClusterControl -> Manage -> Configurations:

Do not forget to restart the database node to apply the changes. In ClusterControl, go to Nodes -> choose db3 -> Node Actions -> Restart Node -> Proceed to restart the node gracefully.
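Once db3 is back in the cluster, it can be worth confirming that the new segment value is actually in effect. The sketch below is one possible way to do that from the shell. The function name get_provider_option is hypothetical, and the example invocation assumes the mysql client on db3 can log in without extra arguments, for instance through credentials in ~/.my.cnf. The function simply splits the semicolon-separated wsrep_provider_options string and prints the value of the requested key.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value of one key from a wsrep_provider_options
# string, which is a list of "key = value" pairs separated by semicolons.
#   $1 = option string, $2 = key (for example gmcast.segment)
get_provider_option() {
    printf '%s\n' "$1" | tr ';' '\n' | awk -F'=' -v key="$2" '
        {
            k = $1
            gsub(/^[ \t]+|[ \t]+$/, "", k)       # trim spaces around the key
            if (k == key) {
                v = $0
                sub(/^[^=]*=/, "", v)            # keep what follows the first "="
                gsub(/^[ \t]+|[ \t]+$/, "", v)   # trim spaces around the value
                print v
            }
        }'
}

# Example on db3 (assumes the mysql client can authenticate on its own):
#   opts="$(mysql -N -B -e 'SELECT @@GLOBAL.wsrep_provider_options')"
#   get_provider_option "$opts" gmcast.segment
```

On db3 the example should report 1, while the nodes in the primary site should report 0.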

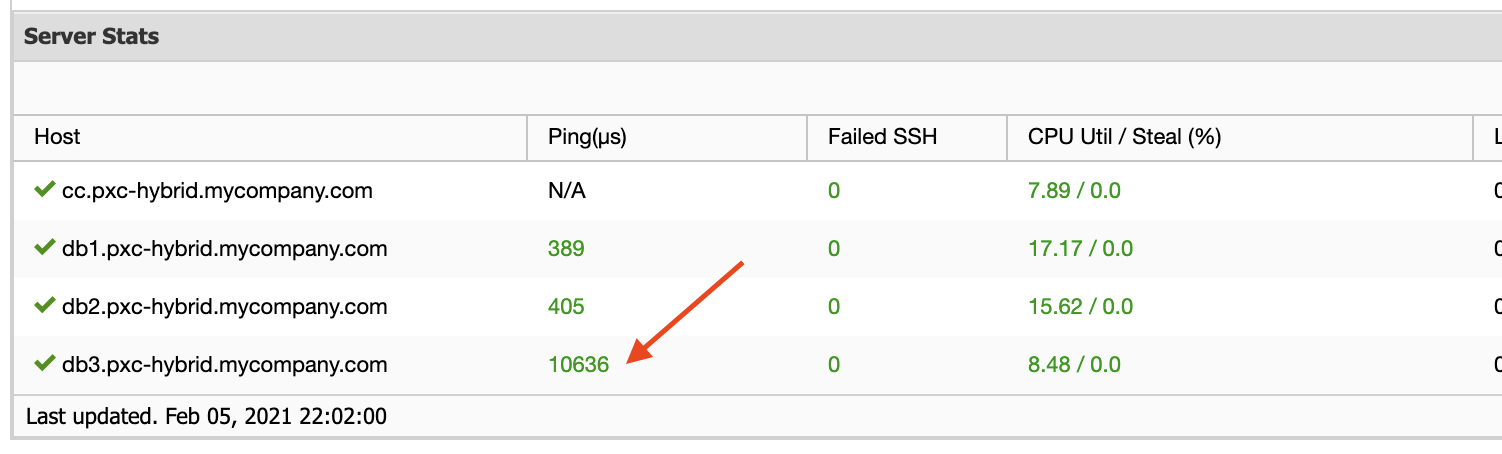

At a glance, we can tell that db3 is located in a different network segment, indicated by a higher ping RTT value:

With ClusterControl, our database cluster on a hybrid environment can be easily deployed with full visibility on the whole topology. The applications/clients can now connect to both Percona XtraDB Cluster nodes in the primary site (AWS), while the third node in the secondary site (on-premises datacenter) can also serve as the recovery site when necessary.