
Clustering Moodle on Multiple Servers for High Availability and Scalability

Moodle is an open-source e-learning platform (also known as a Learning Management System, or LMS) that is widely adopted by educational institutions to create and administer online courses. For larger student bodies and higher volumes of instruction, Moodle must be robust enough to serve thousands of learners, administrators, content builders and instructors simultaneously.

Availability and scalability are key requirements as Moodle becomes a critical application for course providers. In this blog post, we will show you how to deploy and cluster the Moodle web, database and file system components on multiple servers to achieve both high availability and scalability.

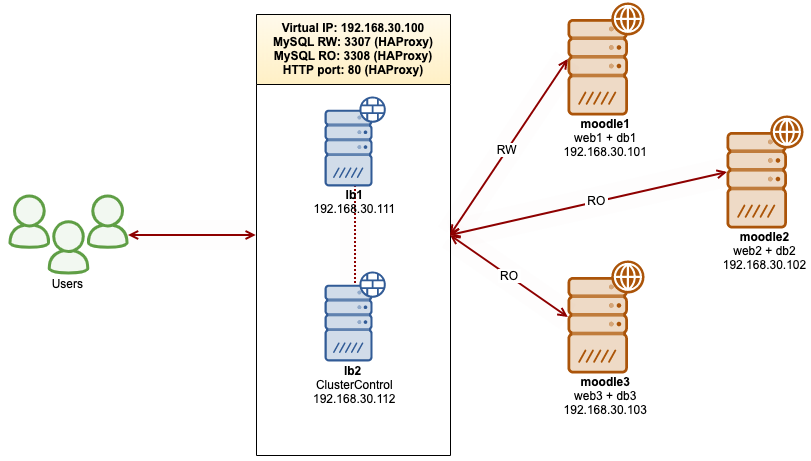

We are going to deploy Moodle 3.9 LTS on top of the GlusterFS clustered file system and MariaDB Cluster 10.4. To eliminate any single point of failure, we will use three nodes to serve the application and database while the remaining two are used for load balancers and the ClusterControl management server. This is illustrated in the following diagram:

Prerequisites

All hosts run CentOS 7.6 64-bit with SELinux and iptables disabled. The following is the host definition inside /etc/hosts:

```
192.168.30.100 moodle web db virtual-ip mysql
192.168.30.101 moodle1 web1 db1
192.168.30.102 moodle2 web2 db2
192.168.30.103 moodle3 web3 db3
192.168.30.111 lb1
192.168.30.112 lb2 clustercontrol
```

Creating Gluster replicated volumes on the root partition is not recommended. We will use another disk, /dev/sdb1, on every web node (web1, web2 and web3) for the GlusterFS storage backend, mounted as /storage. This is shown by the following mount command:

```
$ mount | grep storage
/dev/sdb1 on /storage type ext4 (rw,relatime,seclabel,data=ordered)
```

Moodle Database Cluster Deployment

We will start by deploying our three-node MariaDB Cluster with ClusterControl. Install ClusterControl on lb2:

```
$ wget https://severalnines.com/downloads/cmon/install-cc
$ chmod 755 install-cc
$ ./install-cc
```

Then set up passwordless SSH to all nodes that are going to be managed by ClusterControl (3 MariaDB Cluster + 2 HAProxy nodes). On the ClusterControl node, run:

```
$ whoami
root
$ ssh-keygen -t rsa # Press Enter on all prompts
$ ssh-copy-id 192.168.30.111 # lb1
$ ssh-copy-id 192.168.30.112 # lb2
$ ssh-copy-id 192.168.30.101 # moodle1
$ ssh-copy-id 192.168.30.102 # moodle2
$ ssh-copy-id 192.168.30.103 # moodle3
```

Enter the root password for the respective host when prompted.
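If you would rather not type each ssh-copy-id command by hand, the same key distribution can be wrapped in a loop. This is a minimal sketch that assumes root logins on every host, as above; `copy_keys` and the `DRY_RUN` switch are our own illustrative names, not part of ClusterControl or OpenSSH.

```sh
#!/bin/sh
# Sketch: push the ClusterControl node's public key to every managed host.
# copy_keys and DRY_RUN are hypothetical names used for illustration only.
# With DRY_RUN=1 the commands are printed instead of executed.
copy_keys() {
  local host
  for host in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "ssh-copy-id root@${host}"
    else
      # Prompts for the root password of each host, as in the manual steps
      ssh-copy-id "root@${host}" || return 1
    fi
  done
}

# Usage on lb2 (lb1, lb2, moodle1, moodle2, moodle3):
#   copy_keys 192.168.30.111 192.168.30.112 192.168.30.101 192.168.30.102 192.168.30.103
```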

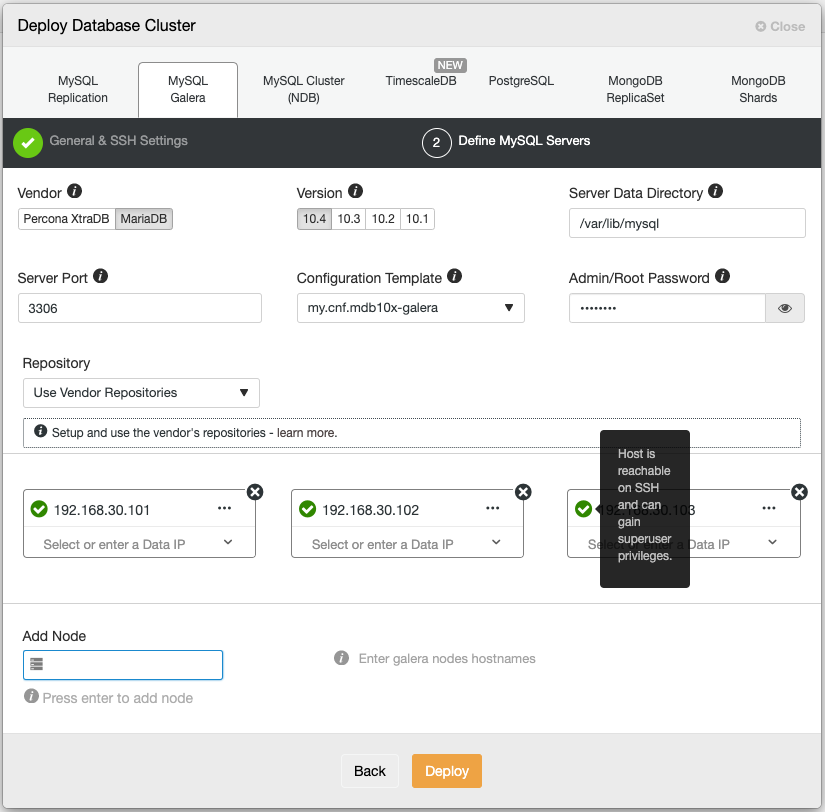

Open a web browser, go to https://192.168.30.112/clustercontrol and create an admin user. Then go to Deploy -> MySQL Galera and follow the deployment wizard. At the second stage, ‘Define MySQL Servers’, pick MariaDB 10.4 and specify the IP address of every database node. Make sure you get a green tick after entering the database node details, as shown below:

Click “Deploy” to start the deployment. The database cluster will be ready in about 15 to 20 minutes. You can follow the deployment progress at Activity -> Jobs -> Create Cluster -> Full Job Details. Once deployed, the cluster will be listed on the Database Cluster dashboard.

We can now proceed to database load balancer deployment.

Load Balancers and Virtual IP for Moodle

Since HAProxy and ClusterControl are co-located on the same server (lb2), we need to move Apache from its default port to another port, for example 8080, so that it won’t conflict with port 80, which HAProxy will use for Moodle web load balancing.

On lb2, open the Apache configuration file at /etc/httpd/conf/httpd.conf and make the following change:

```
Listen 8080
```

We also need to change ClusterControl’s virtual host definition by modifying the VirtualHost line inside /etc/httpd/conf.d/s9s.conf:

```
<VirtualHost *:8080>
```

Restart the Apache web server to apply the changes:

```
$ systemctl restart httpd
```

At this point, ClusterControl is accessible over HTTP on port 8080 at http://192.168.30.112:8080/clustercontrol, or through the default HTTPS port at https://192.168.30.112/clustercontrol.
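The two Apache edits above can also be made non-interactively. The sketch below assumes the stock `Listen 80` directive in httpd.conf and a `<VirtualHost *:80>` line in s9s.conf (check both files first); `set_apache_port` is a hypothetical helper name, and `sed -i.bak` leaves a backup copy of each edited file.

```sh
#!/bin/sh
# Sketch: move Apache's plain-HTTP listener from one port to another (80 -> 8080 on lb2).
# set_apache_port is a hypothetical helper; sed keeps a .bak copy of the edited file.
set_apache_port() {
  local file="$1" old="$2" new="$3"
  # Rewrite only "Listen <old>" and "<VirtualHost *:<old>>"; other ports such as 443
  # are left alone, and running it twice does not change 8080 again.
  sed -i.bak -E \
    -e "s/^([[:space:]]*Listen[[:space:]]+)${old}\$/\\1${new}/" \
    -e "s/<VirtualHost \\*:${old}>/<VirtualHost *:${new}>/" \
    "$file"
}

# Usage on lb2:
#   set_apache_port /etc/httpd/conf/httpd.conf 80 8080
#   set_apache_port /etc/httpd/conf.d/s9s.conf 80 8080
#   apachectl configtest && systemctl restart httpd
```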

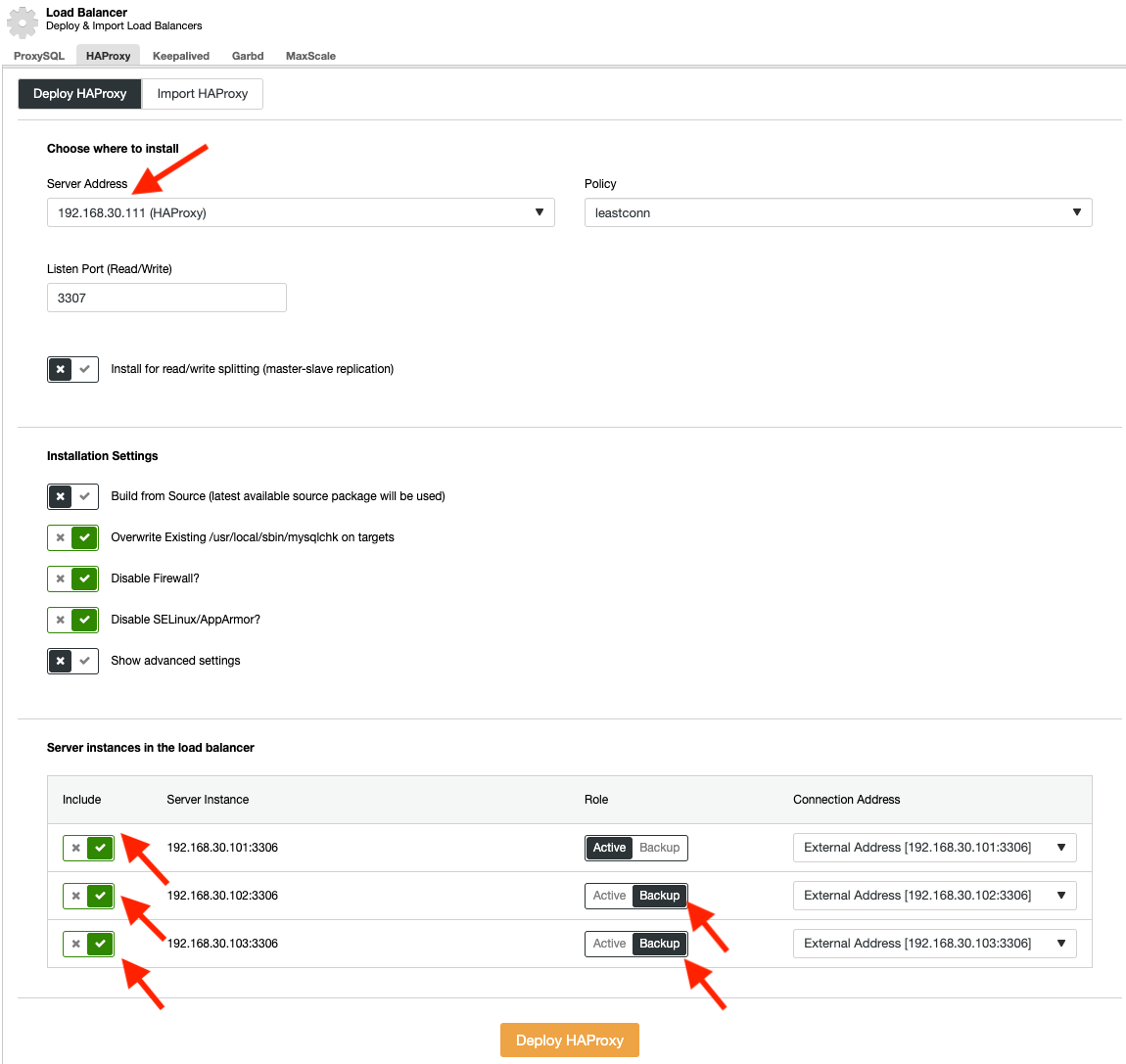

Log in to ClusterControl, drill down to the database cluster and go to Add Load Balancer -> HAProxy -> Deploy HAProxy. Deploy HAProxy on lb1 (192.168.30.111) first, similar to the screenshots below:

Note that we are going to set up three listening ports on HAProxy: 3307 for the database single-writer (deployed via ClusterControl), 3308 for the database multi-writer/reader (customized later) and 80 for Moodle web load balancing (also customized later).

Repeat the same deployment step for lb2 (192.168.30.112).

Modify the HAProxy configuration files on both HAProxy instances (lb1 and lb2) by adding the following lines for database multi-writer (3308) and Moodle load balancing (80):

listen haproxy_192.168.30.112_3308_ro

bind *:3308

mode tcp

tcp-check connect port 9200

timeout client 10800s

timeout server 10800s

balance leastconn

option httpchk

default-server port 9200 inter 2s downinter 5s rise 3 fall 2 slowstart 60s maxconn 64 maxqueue 128 weight 100

server 192.168.30.101 192.168.30.101:3306 check

server 192.168.30.102 192.168.30.102:3306 check

server 192.168.30.103 192.168.30.103:3306 check

frontend http-in

bind *:80

default_backend web_farm

backend web_farm

server moodle1 192.168.30.101:80 maxconn 32 check

server moodle2 192.168.30.102:80 maxconn 32 check

server moodle3 192.168.30.103:80 maxconn 32 checkRestart the HAProxy service to load the changes:

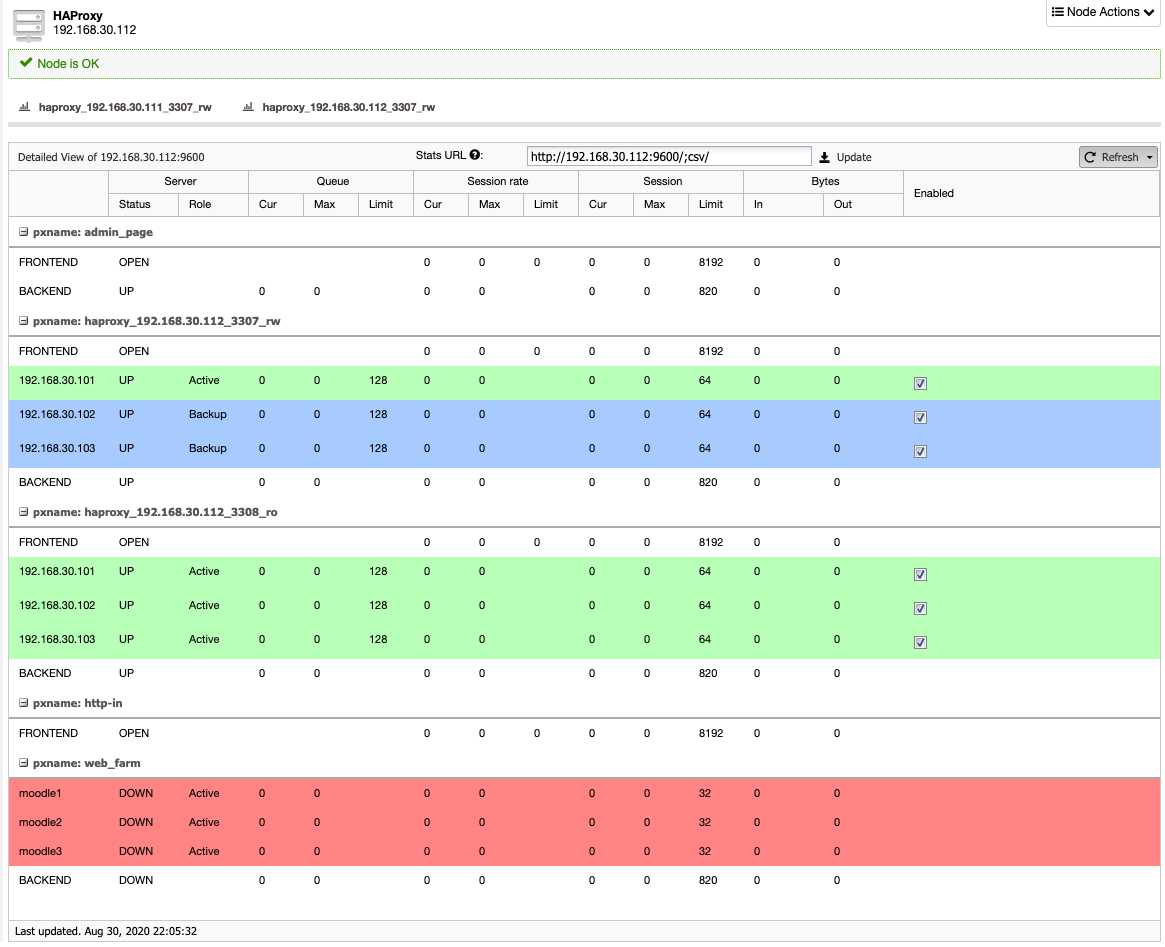

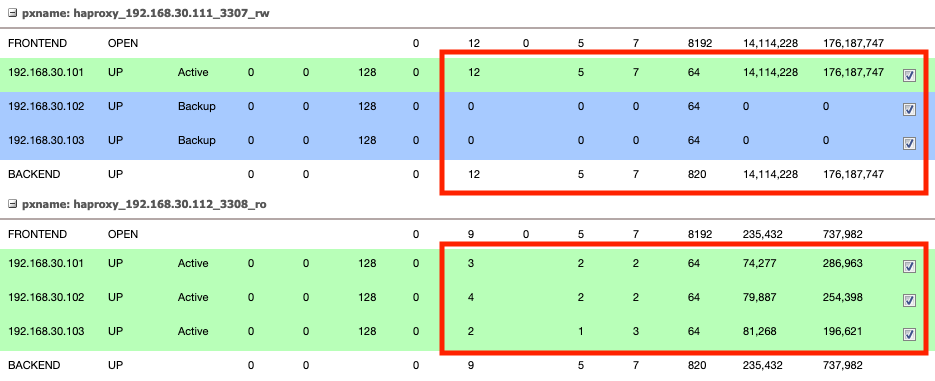

$ systemctl restart haproxyAt this point, we should be able to see the HAProxy stats page (accessible under ClusterControl -> Nodes -> pick the HAProxy nodes) reporting as below:

The first section (with two blue lines) represents the single-writer configuration on port 3307. All database connections coming to this port will be forwarded to only one database server (the green line). The second section represents the multi-writer configuration on port 3308, where database connections will be forwarded to multiple available nodes using the leastconn balancing algorithm. The last section, in red, represents the Moodle web load balancing that we have yet to set up.
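To make the leastconn behaviour on port 3308 concrete, here is a toy illustration: each new connection goes to the server with the fewest active connections. It is not HAProxy code; `pick_leastconn` is a hypothetical function that ignores server weights and, on a tie, keeps the first server listed, whereas HAProxy also weighs servers and round-robins among equally loaded ones.

```sh
#!/bin/sh
# Illustration only (not HAProxy code): pick the backend with the fewest active connections.
# Arguments are server=active_connections pairs. Ties keep the first server listed.
pick_leastconn() {
  local best="" best_count="" pair name count
  for pair in "$@"; do
    name="${pair%%=*}"
    count="${pair#*=}"
    if [ -z "$best" ] || [ "$count" -lt "$best_count" ]; then
      best="$name"
      best_count="$count"
    fi
  done
  echo "$best"
}

# Example: three Galera nodes with 5, 2 and 7 open connections on port 3308
#   pick_leastconn 192.168.30.101=5 192.168.30.102=2 192.168.30.103=7
```

By contrast, the single-writer section on 3307 keeps sending every connection to one node, which avoids write conflicts between Galera nodes at the cost of not spreading the write load.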

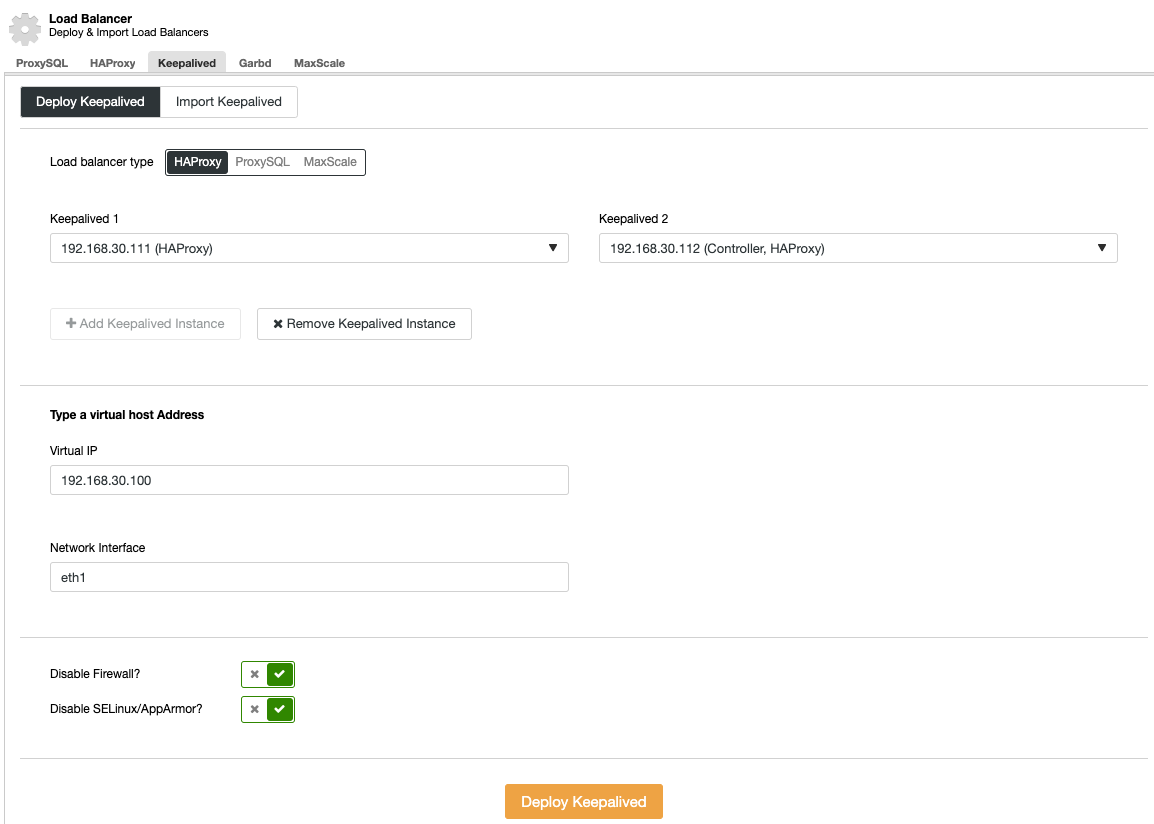

Go to ClusterControl -> Manage -> Load Balancer -> Keepalived -> Deploy Keepalived -> HAProxy and pick both HAProxy instances, which will be tied together with a virtual IP address (192.168.30.100):

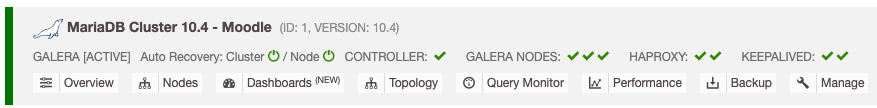

The load balancer nodes have now been installed and are integrated with ClusterControl. You can verify this in ClusterControl’s summary bar.
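Keepalived assigns the virtual IP to only one load balancer at a time. A quick way to see which node currently holds it is to look for the address in the output of `ip -o -4 addr show`. The sketch below assumes the VIP 192.168.30.100 from our /etc/hosts; `has_vip` is a hypothetical helper name.

```sh
#!/bin/sh
# Sketch: succeed if the given IPv4 address appears in `ip -o -4 addr show` output (stdin).
# has_vip is a hypothetical helper name.
has_vip() {
  awk -v vip="$1" '
    # Field 4 looks like 192.168.30.100/32, so compare only the address part
    { split($4, addr, "/"); if (addr[1] == vip) found = 1 }
    END { exit (found ? 0 : 1) }'
}

# Usage on lb1 or lb2:
#   ip -o -4 addr show | has_vip 192.168.30.100 && echo "this node holds the VIP"
```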

Deploying GlusterFS

*The following steps should be performed on moodle1, moodle2 and moodle3 unless specified otherwise.
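Because the bricks should not live on the root partition, it can be worth confirming on each node that /storage really is a separate filesystem before going further. This minimal sketch compares device numbers with GNU `stat`, as shipped with CentOS 7; `on_separate_fs` is a hypothetical helper name.

```sh
#!/bin/sh
# Sketch: is DIR on a different filesystem than / ?
# Returns 0 if yes, 1 if DIR sits on the root filesystem, 2 if stat fails.
# on_separate_fs is a hypothetical helper; stat -c is GNU coreutils syntax.
on_separate_fs() {
  local dir_dev root_dev
  dir_dev="$(stat -c %d "$1")" || return 2
  root_dev="$(stat -c %d /)" || return 2
  [ "$dir_dev" != "$root_dev" ]
}

# Usage on moodle1, moodle2 and moodle3:
#   on_separate_fs /storage || echo "WARNING: /storage is not a separate filesystem"
```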

Install the GlusterFS repository for CentOS 7, then install the GlusterFS server and all of its dependencies:

```
$ yum install centos-release-gluster -y
$ yum install glusterfs-server -y
```

Create a directory called brick on the /storage partition:

```
$ mkdir /storage/brick
```

Start the GlusterFS service and enable it on boot:

```
$ systemctl start glusterd
$ systemctl enable glusterd
```

On moodle1, probe the other nodes (moodle2 and moodle3):

```
$ gluster peer probe 192.168.30.102
peer probe: success.
$ gluster peer probe 192.168.30.103
peer probe: success.
```

You can verify the peer status with the following command:

```
$ gluster peer status
Number of Peers: 2

Hostname: 192.168.30.102
Uuid: dd976496-fda4-4ace-801e-7ee55461b458
State: Peer in Cluster (Connected)

Hostname: 192.168.30.103
Uuid: 27a81fba-c529-4c2c-9cf7-2dcaf5880cef
State: Peer in Cluster (Connected)
```

On moodle1, create a replicated volume on the probed nodes:

```
$ gluster volume create rep-volume replica 3 192.168.30.101:/storage/brick 192.168.30.102:/storage/brick 192.168.30.103:/storage/brick
volume create: rep-volume: success: please start the volume to access data
```

Start the replicated volume on moodle1:

```
$ gluster volume start rep-volume
volume start: rep-volume: success
```

Ensure the replicated volume and processes are online:

```
$ gluster volume status
Status of volume: rep-volume
Gluster process                             TCP Port  RDMA Port  Online  Pid
------------------------------------------------------------------------------
Brick 192.168.30.101:/storage/brick         49152     0          Y       15059
Brick 192.168.30.102:/storage/brick         49152     0          Y       24916
Brick 192.168.30.103:/storage/brick         49152     0          Y       23182
Self-heal Daemon on localhost               N/A       N/A        Y       15080
Self-heal Daemon on 192.168.30.102          N/A       N/A        Y       24944
Self-heal Daemon on 192.168.30.103          N/A       N/A        Y       23203

Task Status of Volume rep-volume
------------------------------------------------------------------------------
There are no active volume tasks
```

Moodle requires its data directory (moodledata) to be outside the webroot (/var/www/html), so we’ll mount the replicated volume on /var/www/moodledata. Create the path:

```
$ mkdir -p /var/www/moodledata
```

Add the following line to /etc/fstab so the volume is mounted automatically at boot:

```
localhost:/rep-volume /var/www/moodledata glusterfs defaults,_netdev 0 0
```

Mount the GlusterFS volume on /var/www/moodledata:

```
$ mount -a
```

Apache and PHP Setup for Moodle

*The following steps should be performed on moodle1, moodle2 and moodle3.

Moodle 3.9 requires PHP 7.2 or later, and we will use PHP 7.4. To simplify the installation, install the Remi YUM repository:

```
$ yum -y install http://rpms.famillecollet.com/enterprise/remi-release-7.rpm
```

Install all required packages with the Remi repository enabled:

```
$ yum install -y yum-utils   # provides yum-config-manager
$ yum-config-manager --enable remi-php74
$ yum install -y httpd php php-xml php-gd php-mbstring php-soap php-intl php-mysqlnd php-pecl-zendopcache php-xmlrpc php-pecl-zip
```

Start the Apache web server and enable it on boot:

```
$ systemctl start httpd
$ systemctl enable httpd
```

Installing Moodle

*The following steps should be performed on moodle1.
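Before downloading Moodle, you may want to confirm that the PHP extensions installed in the previous section are actually loaded; run this check on all three web nodes, not only moodle1. The module names in the usage line are our mapping of the packages installed above, not an official Moodle requirement list, and `missing_php_modules` is a hypothetical helper.

```sh
#!/bin/sh
# Sketch: print each requested PHP module that is NOT listed in `php -m` output (stdin).
# Names are compared case-insensitively against whole lines.
# missing_php_modules is a hypothetical helper name.
missing_php_modules() {
  local loaded mod wanted
  loaded="$(tr '[:upper:]' '[:lower:]')"
  for mod in "$@"; do
    wanted="$(printf '%s' "$mod" | tr '[:upper:]' '[:lower:]')"
    if ! printf '%s\n' "$loaded" | grep -qxF -- "$wanted"; then
      echo "$mod"
    fi
  done
}

# Usage on each web node (no output means nothing is missing):
#   php -m | missing_php_modules mysqli gd mbstring soap intl xml zip xmlrpc "Zend OPcache"
```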

Download Moodle 3.9 from download.moodle.org and extract it under /var/www/html:

```
$ wget http://download.moodle.org/download.php/direct/stable39/moodle-latest-39.tgz
$ tar -xzf moodle-latest-39.tgz -C /var/www/html
```

Moodle requires a data directory outside /var/www/html. We already created /var/www/moodledata and mounted the GlusterFS volume on it, so we only need to assign the correct ownership:

```
$ chown -R apache:apache /var/www/html /var/www/moodledata
```

Before we proceed with the Moodle installation, we need to prepare the Moodle database. From ClusterControl, go to Manage -> Schema and Users -> Create Database and create a database called ‘moodle’.

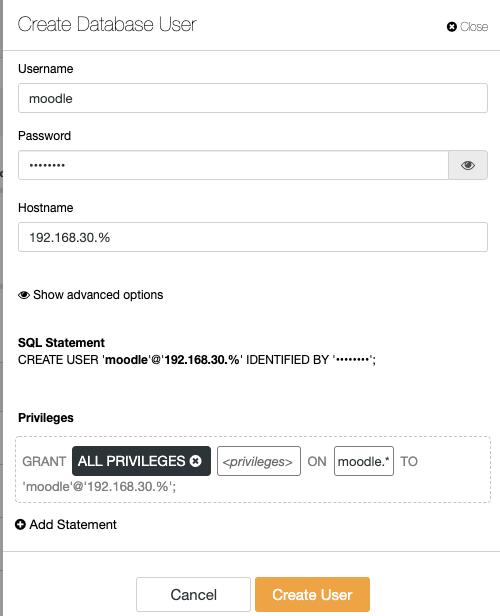

Create a new database user called “moodle” under the Users tab. Allow this user to be accessed from the 192.168.30.0/24 IP address range, with ALL PRIVILEGES on the moodle database:

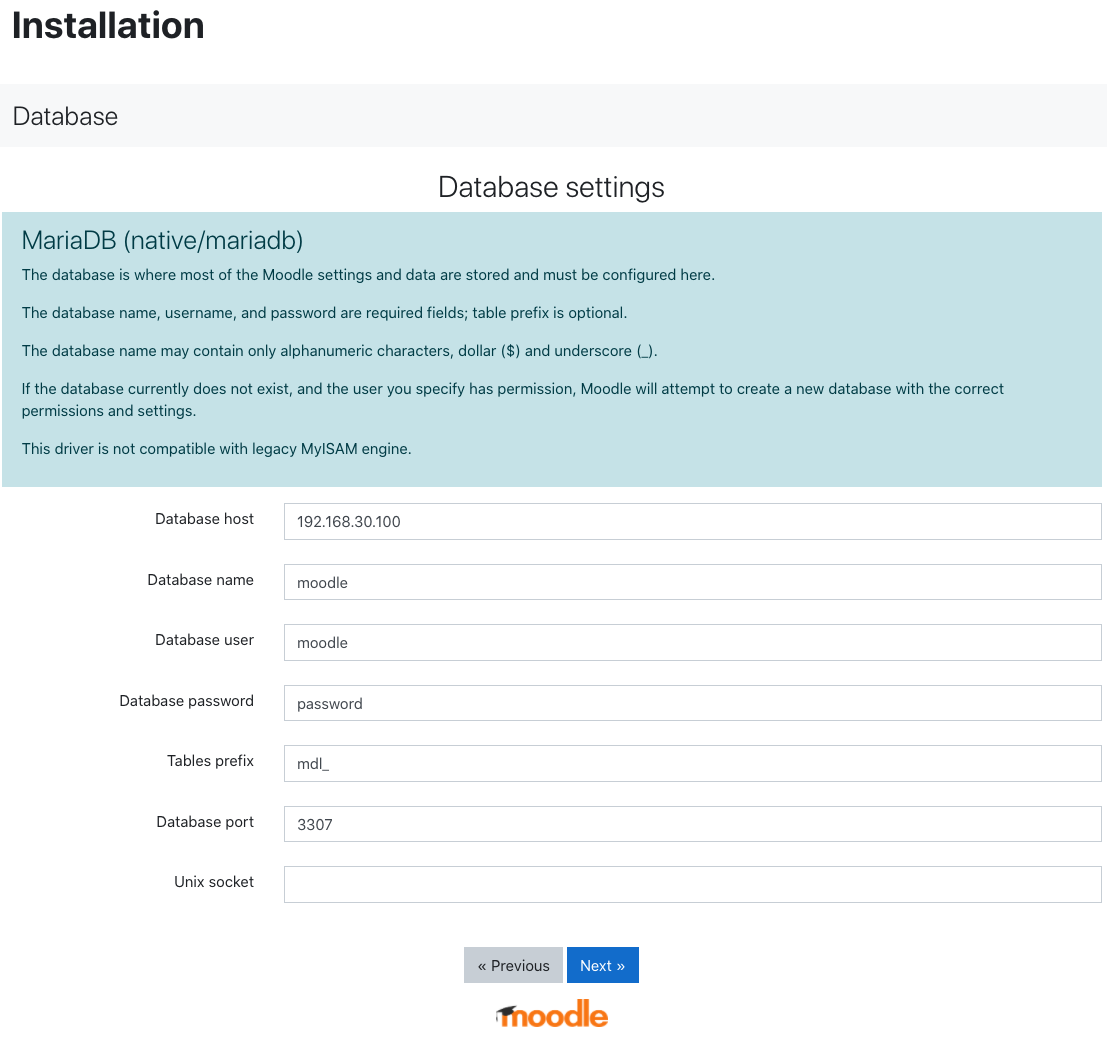
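If you prefer the command line to the ClusterControl UI, the database and user can be created with plain SQL. This sketch only prints the statements, for you to pipe into the mysql client on one database node; Galera replicates them to the other nodes. `moodle_db_sql` is a hypothetical helper, `'192.168.30.%'` is the MariaDB host pattern matching 192.168.30.0/24, and the utf8mb4_general_ci collation mirrors the dbcollation value used in config.php.

```sh
#!/bin/sh
# Sketch: print SQL equivalent to the ClusterControl database and user steps.
# moodle_db_sql is a hypothetical helper. The password is inserted verbatim,
# so avoid single quotes in it (or escape them) before running the output.
moodle_db_sql() {
  local db="$1" user="$2" pass="$3" host_pattern="$4"
  cat <<EOF
CREATE DATABASE IF NOT EXISTS \`${db}\` DEFAULT CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci;
CREATE USER IF NOT EXISTS '${user}'@'${host_pattern}' IDENTIFIED BY '${pass}';
GRANT ALL PRIVILEGES ON \`${db}\`.* TO '${user}'@'${host_pattern}';
EOF
}

# Usage on one MariaDB node:
#   moodle_db_sql moodle moodle 'password' '192.168.30.%' | mysql -uroot -p
```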

Open a browser and navigate to http://192.168.30.101/moodle/. You should see Moodle’s installation page. Accept all default values and choose “MariaDB (native/mariadb)” in the database driver dropdown. Then, proceed to the “Database settings” page and enter the configuration details as below:

The “Database host” value is the virtual IP address that we created with Keepalived (192.168.30.100), while the database port is the single-writer MySQL port load-balanced by HAProxy (3307).
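Before moving on, you can check that the virtual IP actually answers on both database ports. This is a minimal sketch that relies on bash's /dev/tcp redirection and the coreutils `timeout` command; `port_open` is a hypothetical helper name.

```sh
#!/bin/sh
# Sketch: succeed if a TCP connection to HOST:PORT opens within 3 seconds.
# Uses bash's /dev/tcp pseudo-device; port_open is a hypothetical helper name.
port_open() {
  timeout 3 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# Usage from moodle1 (3307 = single-writer, 3308 = multi-writer/reader):
#   for port in 3307 3308; do
#     if port_open 192.168.30.100 "$port"; then echo "$port open"; else echo "$port closed"; fi
#   done
```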

Proceed to the last page and let the Moodle installation process complete. After completion, you should see the Moodle admin dashboard.

Moodle 3.9 supports read-only database instances, so we can use the multi-writer load-balanced port 3308 to distribute read-only connections from Moodle across multiple servers. Add the “readonly” array to $CFG->dboptions inside config.php, as in the following example:

```
<?php  // Moodle configuration file

unset($CFG);
global $CFG;
$CFG = new stdClass();

$CFG->dbtype    = 'mariadb';
$CFG->dblibrary = 'native';
$CFG->dbhost    = '192.168.30.100';   // Keepalived virtual IP
$CFG->dbname    = 'moodle';
$CFG->dbuser    = 'moodle';
$CFG->dbpass    = 'password';
$CFG->prefix    = 'mdl_';
$CFG->dboptions = array (
  'dbpersist' => 0,
  'dbport' => 3307,                  // Single-writer port: primary connection, all writes
  'dbsocket' => '',
  'dbcollation' => 'utf8mb4_general_ci',
  'readonly' => [
    'instance' => [
      'dbhost' => '192.168.30.100',
      'dbport' => 3308,              // Multi-writer/reader port: eligible read-only queries
      'dbuser' => 'moodle',
      'dbpass' => 'password'
    ]
  ]
);

$CFG->wwwroot   = 'http://192.168.30.100/moodle';
$CFG->dataroot  = '/var/www/moodledata';
$CFG->admin     = 'admin';

$CFG->directorypermissions = 0777;

require_once(__DIR__ . '/lib/setup.php');
```

In the configuration above, we also modified the $CFG->wwwroot line, changing the IP address to the virtual IP address, which is load-balanced by HAProxy on port 80.
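Since every web node must point at the virtual IP rather than its own address, a quick way to double-check a node's config.php is to read the values back. The sketch below only handles single-quoted, one-line assignments such as `$CFG->wwwroot = '...';`; `get_cfg_value` is a hypothetical helper, not a Moodle tool.

```sh
#!/bin/sh
# Sketch: print the single-quoted value of $CFG-><key> from a Moodle config.php.
# Arguments: FILE KEY. Prints nothing if the key is not found.
# get_cfg_value is a hypothetical helper name.
get_cfg_value() {
  awk -F"'" -v k="$2" '$1 ~ ("^[ \t]*[$]CFG->" k "[ \t]*=") { print $2; exit }' "$1"
}

# Usage on each web node:
#   get_cfg_value /var/www/html/moodle/config.php wwwroot
#   get_cfg_value /var/www/html/moodle/config.php dbhost
```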

Once the above is configured, we can copy the content of /var/www/html/moodle from moodle1 to moodle2 and moodle3, so Moodle can be served by the other web servers as well. Once done, you should be able to access the load-balanced Moodle at http://192.168.30.100/moodle.
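One way to do the copy is rsync over SSH from moodle1. Only the code directory needs copying, since moodledata already lives on the shared GlusterFS volume. This sketch assumes rsync is installed on all three nodes and that root can SSH from moodle1 to moodle2 and moodle3 (the passwordless SSH set up earlier was only from lb2); `sync_moodle_code` and `DRY_RUN` are hypothetical names.

```sh
#!/bin/sh
# Sketch: copy /var/www/html/moodle from this node to the given web nodes.
# sync_moodle_code and DRY_RUN are hypothetical; DRY_RUN=1 only prints the commands.
sync_moodle_code() {
  local src="/var/www/html/moodle/" host
  for host in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "rsync -a ${src} root@${host}:${src}"
    else
      # -a keeps permissions and ownership (apache:apache) when run as root
      rsync -a "$src" "root@${host}:${src}" || return 1
    fi
  done
}

# Usage on moodle1:
#   sync_moodle_code 192.168.30.102 192.168.30.103
```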

Check the HAProxy statistics by logging into ClusterControl -> Nodes and picking the primary HAProxy node. You should see bytes in and out on both the 3307_rw and 3308_ro sections.

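This indicates that Moodle's database workload is being spread over the backend MariaDB Cluster: writes go through the single-writer port, while read-only queries are distributed across the nodes behind the multi-writer/reader port.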
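The same check can be done from a shell on the load balancer by reading HAProxy's CSV statistics. This sketch assumes the standard CSV column order (pxname, svname, ..., bin, bout, ..., status) and that an admin stats socket is enabled in haproxy.cfg; the socket path in the usage line is an assumption, so check the `stats socket` line on your nodes. `summarize_haproxy_stats` is a hypothetical helper name.

```sh
#!/bin/sh
# Sketch: one line per backend server from HAProxy CSV stats on stdin.
# Columns used: 1=pxname, 2=svname, 9=bin, 10=bout, 18=status.
# The header and the FRONTEND/BACKEND aggregate rows are skipped.
summarize_haproxy_stats() {
  awk -F, '
    /^#/ { next }
    $2 != "FRONTEND" && $2 != "BACKEND" && NF >= 18 {
      printf "%s %s in=%s out=%s %s\n", $1, $2, $9, $10, $18
    }'
}

# Usage on lb1 or lb2 (socket path is an assumption):
#   echo "show stat" | socat stdio unix-connect:/var/run/haproxy.socket | summarize_haproxy_stats
```

Running `mysql -h 192.168.30.100 -P 3308 -u moodle -p -e 'SELECT @@hostname'` a few times is another quick way to see which backend node answers on the reader port.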

Congratulations, you have now deployed a scalable Moodle infrastructure with clustering on the web, database and file system layers.