
An Overview of ProxySQL Clustering in ClusterControl

ProxySQL is a well-known load balancer in the MySQL world. It comes with a rich set of features that let you take control of your traffic and shape it however you see fit. It can be deployed in many different ways (on dedicated nodes, collocated with application hosts, or in a silo approach), depending on the exact environment and business requirements. The common challenge is that, in most cases, you want all of your ProxySQL nodes to contain the same configuration. If you scale out your cluster and add a new server to ProxySQL, you want that server to be visible on all ProxySQL instances, not just on the active one. This leads to the question: how do you keep the configuration in sync across all ProxySQL nodes?

You can try to update all nodes by hand, which is definitely not efficient. You can also use an infrastructure orchestration tool such as Ansible or Chef to keep the configuration across the nodes in a known state, making modifications not on ProxySQL directly but through the tool you use to manage your environment.

If you happen to use ClusterControl, it comes with a set of features that allow you to synchronize the configuration between ProxySQL instances, but this solution has its cons: it is a manual action, and you have to remember to execute it after every configuration change. If you forget to do that, you may be in for a nasty surprise if, for example, keepalived moves the Virtual IP to the non-updated ProxySQL instance.

None of those methods is simple or 100% reliable, and a situation in which the ProxySQL nodes have different configurations is potentially dangerous.

Luckily, ProxySQL comes with a solution for this problem: ProxySQL Cluster. The idea is fairly simple. You define a list of ProxySQL instances that talk to each other and inform one another about the version of the configuration that each of them holds. The configuration is versioned, so any modification of any setting on any node increases the configuration version. This triggers configuration synchronization, and the new version of the configuration is distributed and applied across all nodes that form the ProxySQL cluster.
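To make the idea more concrete, here is a minimal Python sketch of how a node might decide whether to pull a newer configuration from a peer. It is a simplified model for illustration, not ProxySQL's actual code: the `ModuleState` class, the `should_sync` function and the `0xAAAA` checksum are hypothetical, and the threshold of 3 consecutive differences is an assumption based on what we believe is the default of the `admin-cluster_mysql_servers_diffs_before_sync` variable.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModuleState:
    """What one node reports for a configuration module such as mysql_servers."""
    version: int   # increases when the configuration changes on that node
    epoch: int     # Unix time at which that version was created
    checksum: str  # hash of the module's contents


def should_sync(own: ModuleState, peer: ModuleState,
                diff_check: int, diffs_before_sync: int = 3) -> bool:
    """Return True when this node should pull the module from the peer."""
    if peer.checksum == own.checksum:
        return False  # identical contents, nothing to do
    if peer.epoch <= own.epoch:
        return False  # our copy is at least as recent as the peer's
    # Wait until the difference has been observed enough times in a row,
    # so that a change still in progress is not copied prematurely.
    return diff_check >= diffs_before_sync


# Placeholder values; the own checksum is made up for the example.
own = ModuleState(version=9, epoch=1606398022, checksum="0xAAAA")
peer = ModuleState(version=12, epoch=1606398060, checksum="0x441378E48BB01C61")

print(should_sync(own, peer, diff_check=1))  # False
print(should_sync(own, peer, diff_check=3))  # True
```

The first call returns `False` because the difference has only been seen once, and the second returns `True` once the assumed threshold is reached.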

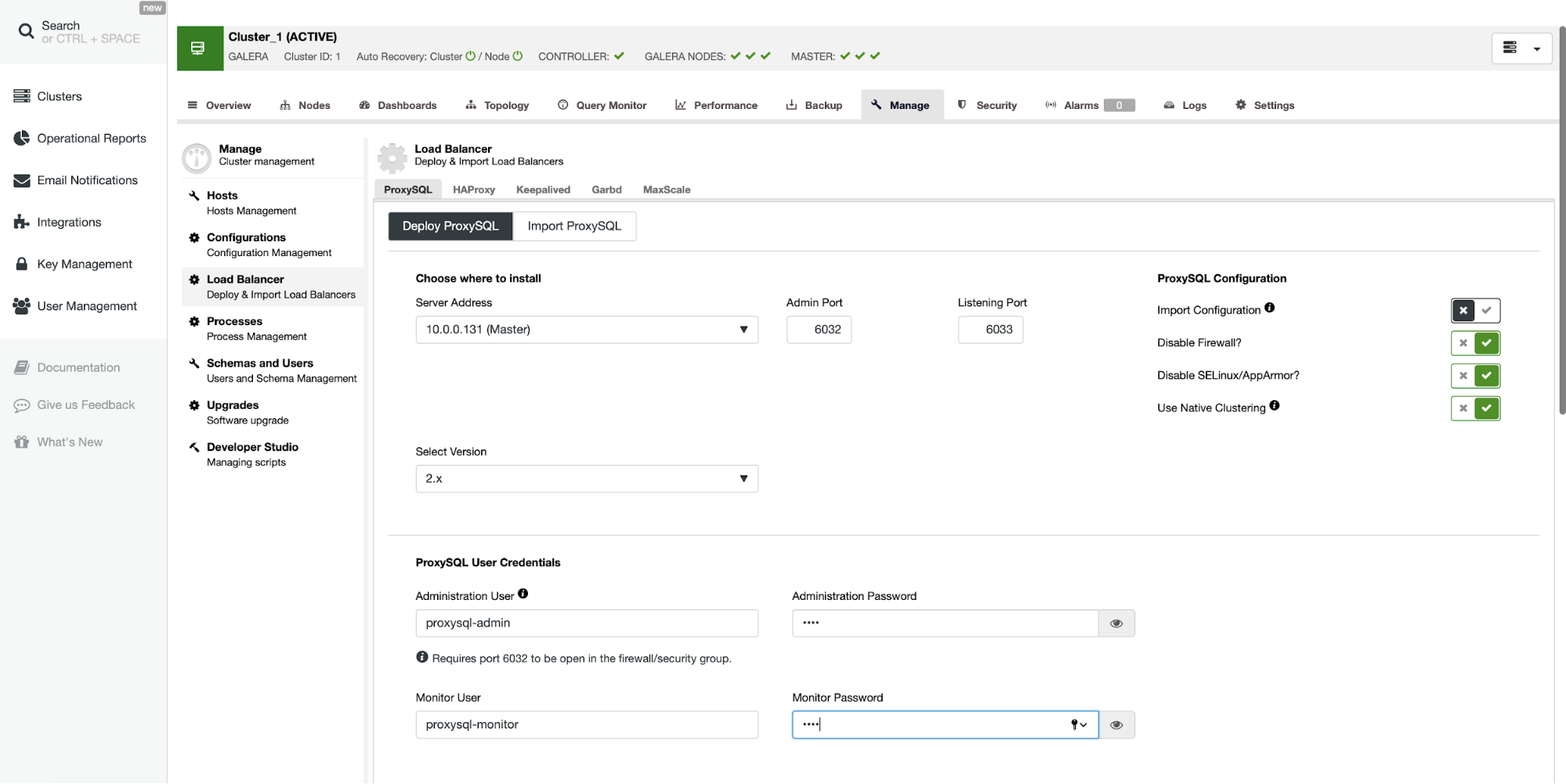

The recent version of ClusterControl allows you to set up ProxySQL clusters effortlessly. When deploying ProxySQL, tick the “Use Native Clustering” option for every node you want to be part of the cluster.

Once you do that, you are pretty much done – the rest happens under the hood.

    MySQL [(none)]> select * from proxysql_servers;
    +------------+------+--------+----------------+
    | hostname   | port | weight | comment        |
    +------------+------+--------+----------------+
    | 10.0.0.131 | 6032 | 0      | proxysql_group |
    | 10.0.0.132 | 6032 | 0      | proxysql_group |
    +------------+------+--------+----------------+
    2 rows in set (0.001 sec)

On both of the servers the proxysql_servers table was set properly with the hostnames of the nodes that form the cluster. We can also verify that the configuration changes are properly propagated across the cluster:

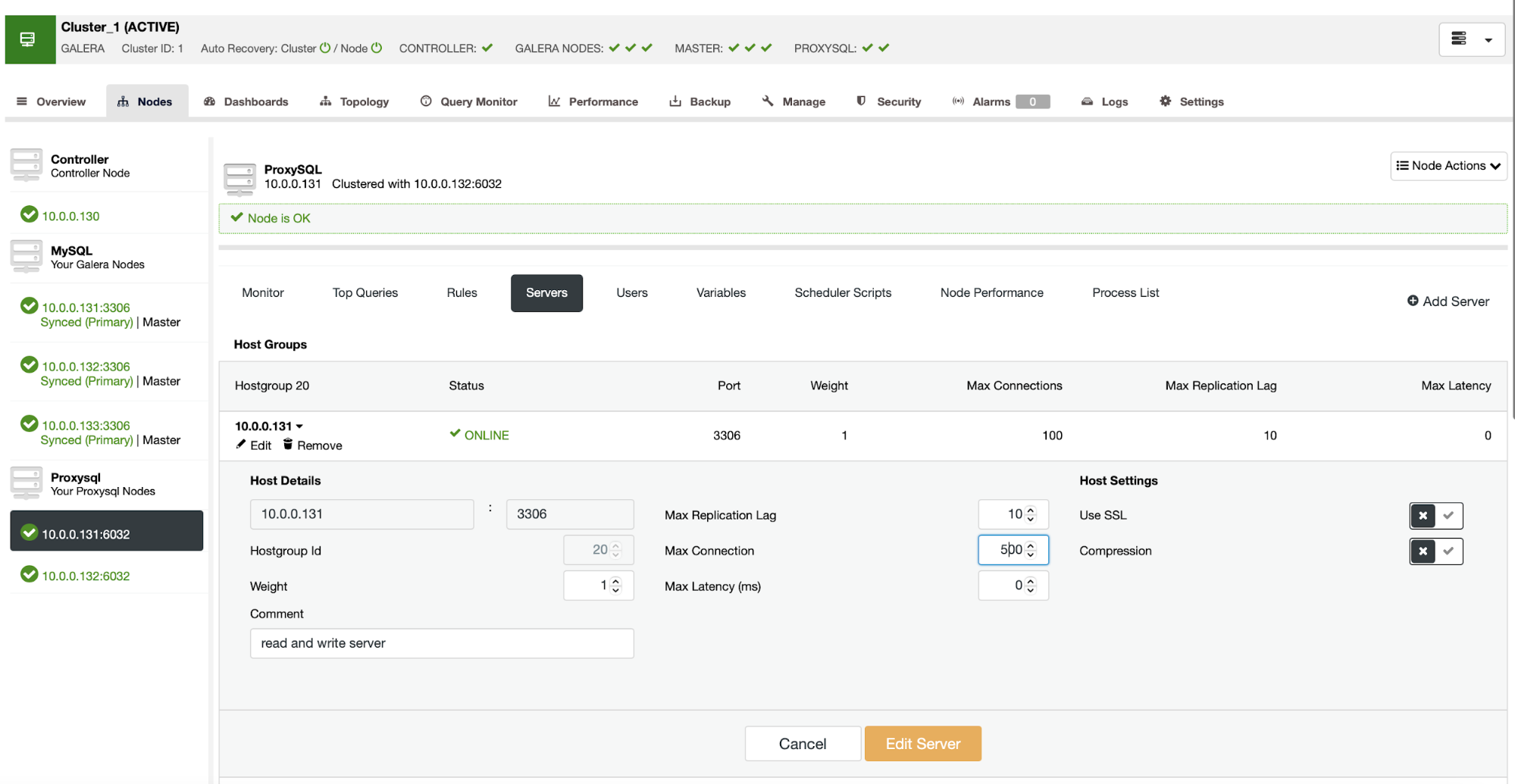

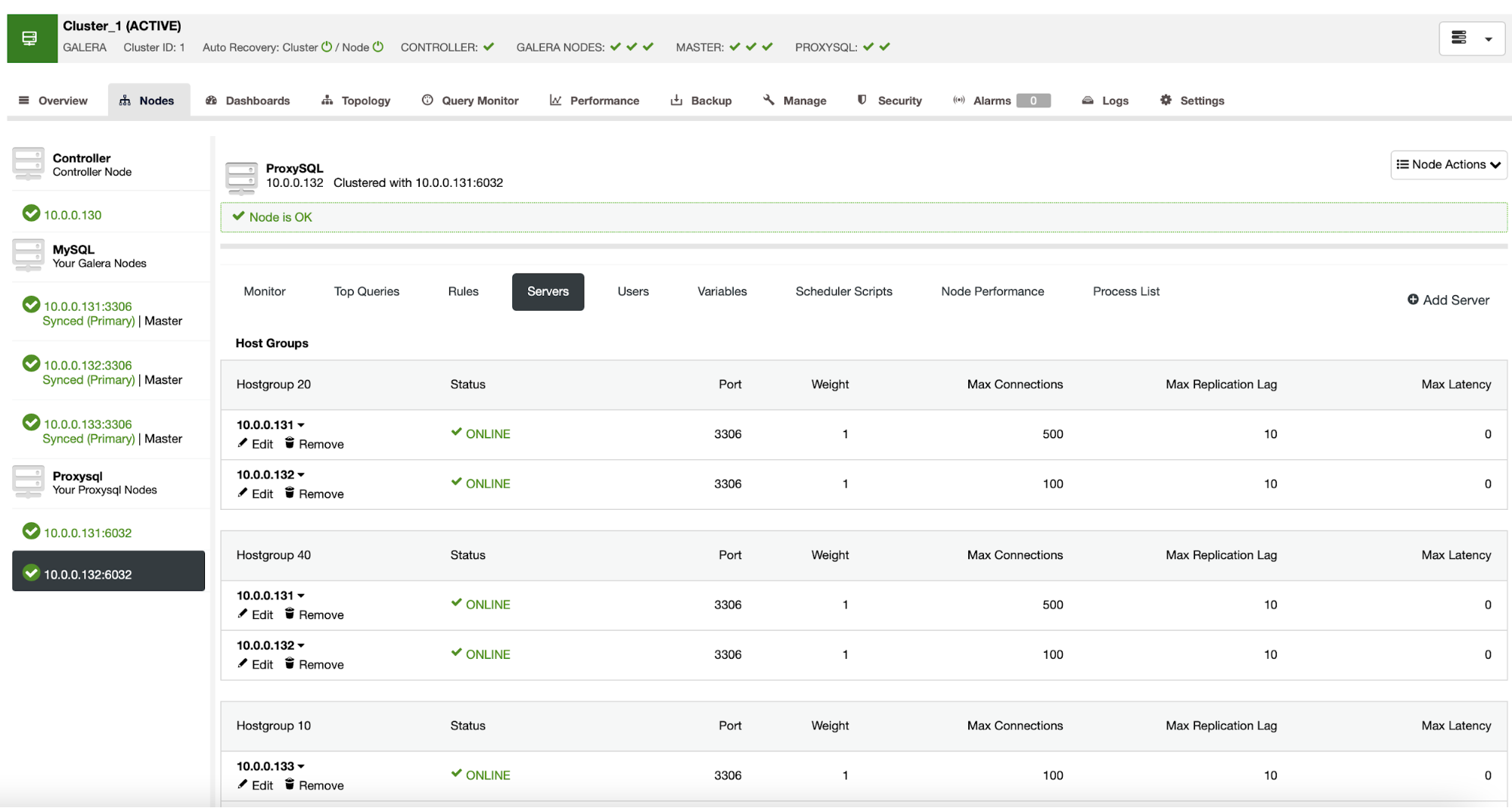

In the screenshots above, we increased the Max Connections setting on one of the ProxySQL nodes (10.0.0.131), and we can verify that the other node (10.0.0.132) sees the same configuration.
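The same comparison can also be scripted. Below is a minimal Python sketch, assuming the rows of runtime_mysql_servers have already been fetched from each node's admin interface with a MySQL client of your choice. The `find_mismatches` function, the hostgroup number and the backend address 10.0.0.141 are hypothetical and used purely for illustration.

```python
from typing import Dict, Optional, Tuple

# A backend is identified by (hostgroup_id, hostname, port).
Backend = Tuple[int, str, int]
# For one ProxySQL node: backend -> max_connections.
ServerRows = Dict[Backend, int]


def find_mismatches(
    rows_by_node: Dict[str, ServerRows],
) -> Dict[Backend, Dict[str, Optional[int]]]:
    """Return backends whose max_connections differs between ProxySQL nodes.

    A backend that is missing on a node is reported with the value None.
    """
    backends = set()
    for rows in rows_by_node.values():
        backends.update(rows)

    mismatches = {}
    for backend in sorted(backends):
        seen = {node: rows.get(backend) for node, rows in rows_by_node.items()}
        if len(set(seen.values())) > 1:
            mismatches[backend] = seen
    return mismatches


backend = (10, "10.0.0.141", 3306)  # hypothetical backend server

in_sync = {
    "10.0.0.131": {backend: 200},
    "10.0.0.132": {backend: 200},
}
out_of_sync = {
    "10.0.0.131": {backend: 200},
    "10.0.0.132": {backend: 100},
}

print(find_mismatches(in_sync))      # {}
print(find_mismatches(out_of_sync))
```

An empty result means every node reports the same value for every backend, while a non-empty result lists the values seen on each node.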

If we need to debug the process, we can always look at the ProxySQL log (typically located at /var/lib/proxysql/proxysql.log), where we will see entries like this:

    2020-11-26 13:40:47 [INFO] Cluster: detected a new checksum for mysql_servers from peer 10.0.0.131:6032, version 11, epoch 1606398059, checksum 0x441378E48BB01C61 . Not syncing yet ...
    2020-11-26 13:40:49 [INFO] Cluster: detected a peer 10.0.0.131:6032 with mysql_servers version 12, epoch 1606398060, diff_check 3. Own version: 9, epoch: 1606398022. Proceeding with remote sync
    2020-11-26 13:40:50 [INFO] Cluster: detected a peer 10.0.0.131:6032 with mysql_servers version 12, epoch 1606398060, diff_check 4. Own version: 9, epoch: 1606398022. Proceeding with remote sync
    2020-11-26 13:40:50 [INFO] Cluster: detected peer 10.0.0.131:6032 with mysql_servers version 12, epoch 1606398060
    2020-11-26 13:40:50 [INFO] Cluster: Fetching MySQL Servers from peer 10.0.0.131:6032 started. Expected checksum 0x441378E48BB01C61
    2020-11-26 13:40:50 [INFO] Cluster: Fetching MySQL Servers from peer 10.0.0.131:6032 completed
    2020-11-26 13:40:50 [INFO] Cluster: Fetching checksum for MySQL Servers from peer 10.0.0.131:6032 before proceessing
    2020-11-26 13:40:50 [INFO] Cluster: Fetching checksum for MySQL Servers from peer 10.0.0.131:6032 successful. Checksum: 0x441378E48BB01C61
    2020-11-26 13:40:50 [INFO] Cluster: Writing mysql_servers table
    2020-11-26 13:40:50 [INFO] Cluster: Writing mysql_replication_hostgroups table
    2020-11-26 13:40:50 [INFO] Cluster: Loading to runtime MySQL Servers from peer 10.0.0.131:6032

This is the log from 10.0.0.132, where we can clearly see that a configuration change for the mysql_servers table was detected on 10.0.0.131 and was then synced and applied on 10.0.0.132, bringing it in sync with the other node in the cluster.
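When debugging a larger cluster, it can help to pull these sync events out of the log programmatically. The following is a minimal Python sketch that extracts the peer, module, versions and diff_check counter from lines in the "detected a peer" format. The regular expression is derived only from log lines like the ones in this post, so treat it as an assumption that may need adjusting for other ProxySQL versions; `PEER_RE` and `parse_sync_events` are names we made up for the example.

```python
import re
from typing import Dict, Iterable, List, Union

# Matches lines such as:
#   ... Cluster: detected a peer HOST:PORT with MODULE version V, epoch E,
#   diff_check N. Own version: V, epoch: E. Proceeding with remote sync
PEER_RE = re.compile(
    r"detected a peer (?P<host>[\d.]+):(?P<port>\d+) with (?P<module>\w+) "
    r"version (?P<version>\d+), epoch (?P<epoch>\d+), "
    r"diff_check (?P<diff_check>\d+)\. "
    r"Own version: (?P<own_version>\d+), epoch: (?P<own_epoch>\d+)\."
)

INT_FIELDS = ("port", "version", "epoch", "diff_check", "own_version", "own_epoch")


def parse_sync_events(lines: Iterable[str]) -> List[Dict[str, Union[str, int]]]:
    """Return one dict per log line that reports a peer with a newer module."""
    events = []
    for line in lines:
        match = PEER_RE.search(line)
        if match is None:
            continue  # not a "detected a peer" line
        event = dict(match.groupdict())
        for key in INT_FIELDS:
            event[key] = int(event[key])
        events.append(event)
    return events


sample = [
    "2020-11-26 13:40:47 [INFO] Cluster: detected a new checksum for "
    "mysql_servers from peer 10.0.0.131:6032, version 11, epoch 1606398059, "
    "checksum 0x441378E48BB01C61 . Not syncing yet ...",
    "2020-11-26 13:40:49 [INFO] Cluster: detected a peer 10.0.0.131:6032 with "
    "mysql_servers version 12, epoch 1606398060, diff_check 3. "
    "Own version: 9, epoch: 1606398022. Proceeding with remote sync",
    "2020-11-26 13:40:50 [INFO] Cluster: detected a peer 10.0.0.131:6032 with "
    "mysql_servers version 12, epoch 1606398060, diff_check 4. "
    "Own version: 9, epoch: 1606398022. Proceeding with remote sync",
]

for event in parse_sync_events(sample):
    print(event["module"], "from", event["host"],
          "peer version", event["version"],
          "own version", event["own_version"],
          "diff_check", event["diff_check"])
```

Only the second and third sample lines match. The first one reports a new checksum but uses a different wording, so the sketch skips it.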

As you can see, clustering ProxySQL is an easy yet efficient way to ensure its configuration stays in sync, and it significantly helps when running larger ProxySQL deployments. Let us know in the comments what your experience with ProxySQL clustering has been.