blog

Asynchronous Replication Automatic Failover in MySQL 8.0.22

Oracle recently released MySQL 8.0.22, and this new version came with a new asynchronous connection failover mechanism. It allows a replica to automatically establish an asynchronous replication connection to a new source, in case its existing one fails.

In this blog, we’ll look at this connection failover mechanism.

Overview

The asynchronous failover mechanism can be used to keep a replica synchronized with a group of servers that share data (Multisource slave). It will move the replication connection to a new source when the existing source connection fails.

Working Principle

When the existing connection source fails, the replica first retries the same connection for the number of times specified by the MASTER_RETRY_COUNT. The interval between attempts is set by the MASTER_CONNECT_RETRY option. When these attempts are exhausted, the asynchronous connection failover mechanism takes over the failover process.

Note that by default the MASTER_RETRY_COUNT is 86400 (1 day –> 24 hours) and the MASTER_CONNECT_RETRY default value is 60.

To ensure that the asynchronous connection failover mechanism can be activated promptly, set MASTER_RETRY_COUNT to a minimal number that just allows a few retry attempts with the same source, in case the connection failure is caused by a transient network outage.

How to Activate Asynchronous Connection Failover

- To activate asynchronous connection failover for a replication channel, set SOURCE_CONNECTION_AUTO_FAILOVER=1 on the CHANGE MASTER TO statement for the channel.

- We have two new functions, which will help to add and delete the server entries from the source list.

- asynchronous_connection_failover_add_source (add the server entries from the source list)

- asynchronous_connection_failover_delete_source (delete the server entries from the source list)

While using these functions, you need to specify the arguments like (‘channel’,’host’,port,’network_namespace’,weight).

Example

mysql> select asynchronous_connection_failover_add_source('testing', '192.168.33.12', 3306, '', 100);

+----------------------------------------------------------------------------------------+

| asynchronous_connection_failover_add_source('testing', '192.168.33.12', 3306, '', 100) |

+----------------------------------------------------------------------------------------+

| The UDF asynchronous_connection_failover_add_source() executed successfully. |

+----------------------------------------------------------------------------------------+

1 row in set (0.00 sec)The source servers need to be configured in the table “mysql.replication_asynchronous_connection_failover”. We can also use the table “performance_schema.replication_asynchronous_connection_failover” to view the available servers in the source list.

Note: If you are not using any channel based replication, this failover mechanism will work. While running the change master statement, there is no need to mention any channel name. But make sure GTID is enabled on all the servers.

Production Use Cases

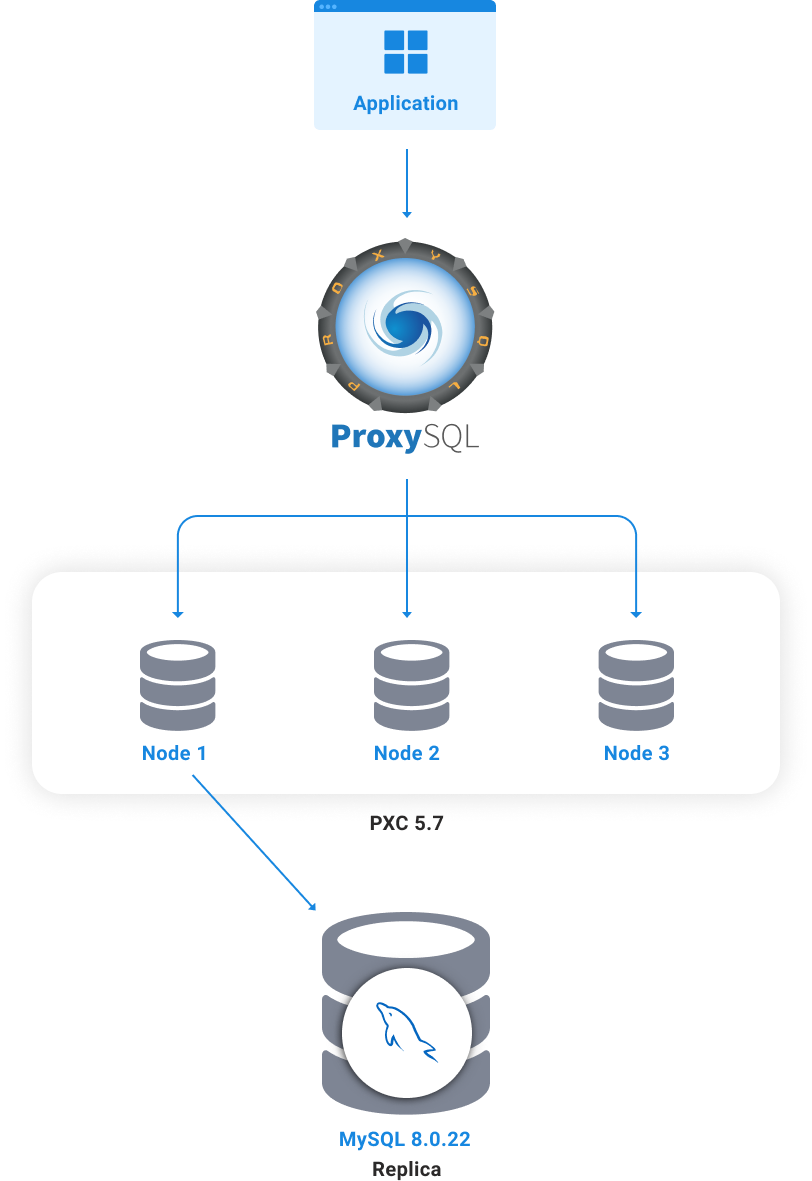

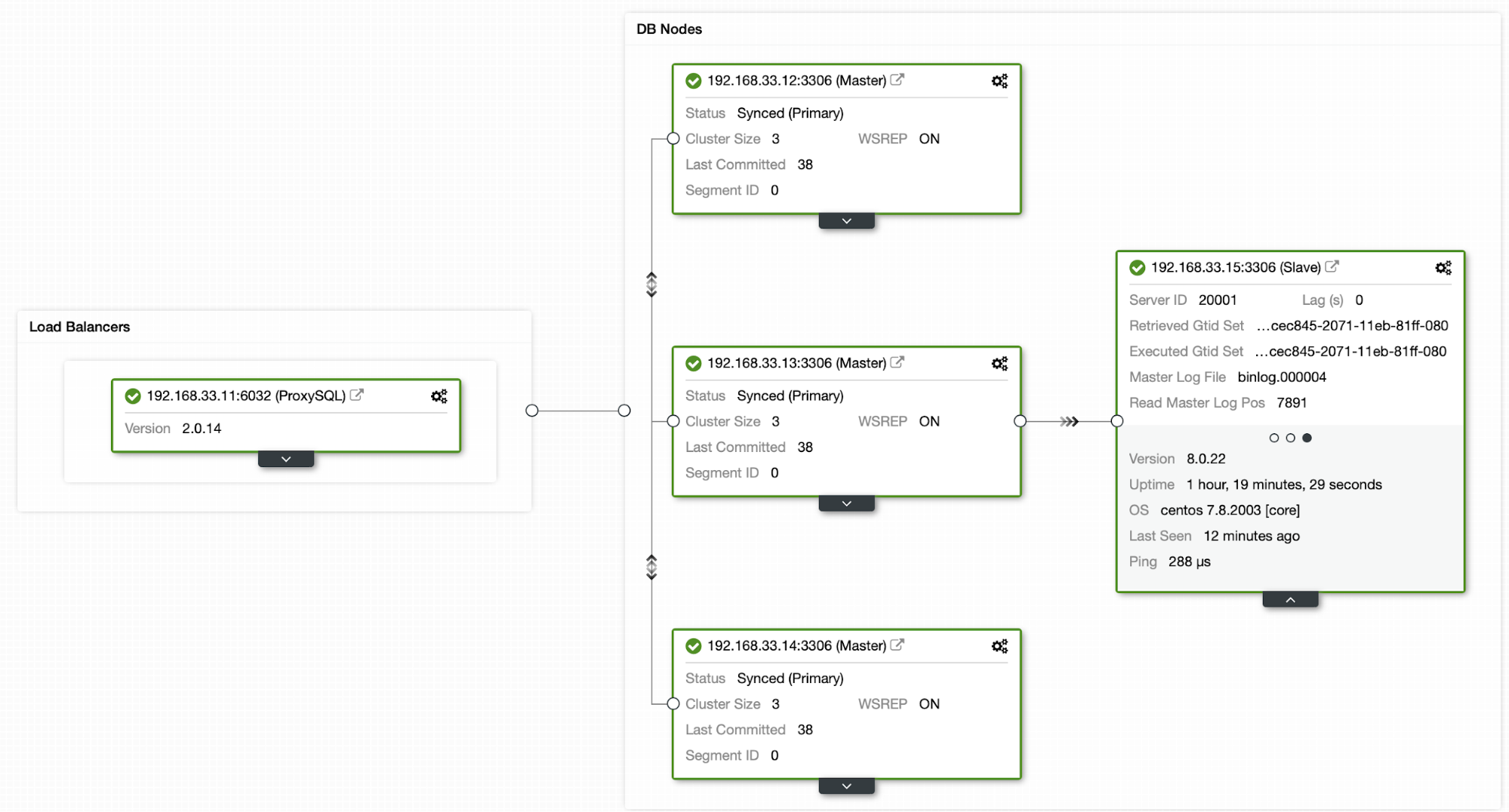

Say you have three PXC-5.7 node with production data, running behind ProxySQL. Now, we will going to configure the channel based asynchronous replication under node 1 (192.168.33.12).

- node 1 – 192.168.33.12

- node 2 – 192.168.33.13

- node 3 – 192.168.33.14

- Read Replica – 192.168.33.15

mysql> change master to master_user='repl',master_password='Repl@123',master_host='192.168.33.12',master_auto_position=1,source_connection_auto_failover=1,master_retry_count=3,master_connect_retry=6 for channel "prod_replica";

Query OK, 0 rows affected, 2 warnings (0.01 sec)

mysql> start replica for channel 'prod_replica';

Query OK, 0 rows affected (0.00 sec)Architecture Diagram

Test Case 1

We will going to add the failover settings:

mysql> select asynchronous_connection_failover_add_source('prod_replica', '192.168.33.12', 3306, '', 100);

+---------------------------------------------------------------------------------------------+

| asynchronous_connection_failover_add_source('prod_replica', '192.168.33.12', 3306, '', 100) |

+---------------------------------------------------------------------------------------------+

| The UDF asynchronous_connection_failover_add_source() executed successfully. |

+---------------------------------------------------------------------------------------------+

1 row in set (0.00 sec)

mysql> select asynchronous_connection_failover_add_source('prod_replica', '192.168.33.13', 3306, '', 80);

+--------------------------------------------------------------------------------------------+

| asynchronous_connection_failover_add_source('prod_replica', '192.168.33.13', 3306, '', 80) |

+--------------------------------------------------------------------------------------------+

| The UDF asynchronous_connection_failover_add_source() executed successfully. |

+--------------------------------------------------------------------------------------------+

1 row in set (0.01 sec)

mysql> select asynchronous_connection_failover_add_source('prod_replica', '192.168.33.14', 3306, '', 60);

+--------------------------------------------------------------------------------------------+

| asynchronous_connection_failover_add_source('prod_replica', '192.168.33.14', 3306, '', 60) |

+--------------------------------------------------------------------------------------------+

| The UDF asynchronous_connection_failover_add_source() executed successfully. |

+--------------------------------------------------------------------------------------------+

1 row in set (0.00 sec)

mysql> select * from mysql.replication_asynchronous_connection_failover;

+-------------------+---------------+------+-------------------+--------+

| Channel_name | Host | Port | Network_namespace | Weight |

+-------------------+---------------+------+-------------------+--------+

| prod_replica | 192.168.33.12 | 3306 | | 100 |

| prod_replica | 192.168.33.13 | 3306 | | 80 |

| prod_replica | 192.168.33.14 | 3306 | | 60 |

+-------------------+---------------+------+-------------------+--------+

3 rows in set (0.00 sec)Ok all good, I can activate the auto_failover. Let’s stop node 1 (192.168.33.12) MySQL. ProxySQL will promote the next suitable master.

[root@centos12 lib]# service mysqld stop

Redirecting to /bin/systemctl stop mysqld.serviceIn the Replica Server

mysql> show replica statusG

*************************** 1. row ***************************

Slave_IO_State: Reconnecting after a failed master event read

Master_Host: 192.168.33.12

Master_User: repl

Master_Port: 3306

Connect_Retry: 6

Master_Log_File: binlog.000004

Read_Master_Log_Pos: 1143

Relay_Log_File: relay-bin-testing.000006

Relay_Log_Pos: 1352

Relay_Master_Log_File: binlog.000004

Slave_IO_Running: Connecting

Slave_SQL_Running: Yes

Replicate_Do_DB:

Last_IO_Error: error reconnecting to master '[email protected]:3306' - retry-time: 10 retries: 2 message: Can't connect to MySQL server on '192.168.33.12' (111)The IO thread is in “connecting” state. This means it is trying to establish the connection from the existing source (node 1) based on the master_retry_count and master_connect_retry settings.

After a few seconds, you can see the source_host was changed to node 2 (192.168.33.13). Now the failover is done.

mysql> show replica statusG

*************************** 1. row ***************************

Slave_IO_State: Waiting for master to send event

Master_Host: 192.168.33.13

Master_User: repl

Master_Port: 3306

Connect_Retry: 6

Master_Log_File: binlog.000004

Read_Master_Log_Pos: 1162

Relay_Log_File: relay-bin-testing.000007

Relay_Log_Pos: 487

Relay_Master_Log_File: binlog.000004

Slave_IO_Running: Yes

Slave_SQL_Running: Yes

Last_IO_Error:From the Error Log

2020-10-29T22:22:05.679951Z 54 [ERROR] [MY-010584] [Repl] Slave I/O for channel 'prod_replica': error reconnecting to master '[email protected]:3306' - retry-time: 10 retries: 3 message: Can't connect to MySQL server on '192.168.33.12' (111), Error_code: MY-002003

2020-10-29T22:22:05.681121Z 58 [Warning] [MY-010897] [Repl] Storing MySQL user name or password information in the master info repository is not secure and is therefore not recommended. Please consider using the USER and PASSWORD connection options for START SLAVE; see the 'START SLAVE Syntax' in the MySQL Manual for more information.

2020-10-29T22:22:05.682830Z 58 [System] [MY-010562] [Repl] Slave I/O thread for channel 'prod_replica': connected to master '[email protected]:3306',replication started in log 'FIRST' at position 2660

2020-10-29T22:22:05.685175Z 58 [Warning] [MY-010549] [Repl] The master's UUID has changed, although this should not happen unless you have changed it manually. The old UUID was 31b5b7d0-1a25-11eb-8076-080027090068.

(END)Test Case 2

While running the change master statement, there is no need to mention any channel name, whether you are using channel based replication or not.

Example

mysql> change master to master_user='repl',master_password='Repl@123',master_host='192.168.33.12',master_auto_position=1,source_connection_auto_failover=1,master_retry_count=3,master_connect_retry=10;

Query OK, 0 rows affected, 2 warnings (0.01 sec)

mysql> start replica;

Query OK, 0 rows affected (0.00 sec)Then add the failover settings like below,

select asynchronous_connection_failover_add_source('', '192.168.33.12', 3306, '', 100);

select asynchronous_connection_failover_add_source('', '192.168.33.13', 3306, '', 80);

select asynchronous_connection_failover_add_source('', '192.168.33.14', 3306, '', 60);

mysql> select * from mysql.replication_asynchronous_connection_failover;

+--------------+---------------+------+-------------------+--------+

| Channel_name | Host | Port | Network_namespace | Weight |

+--------------+---------------+------+-------------------+--------+

| | 192.168.33.12 | 3306 | | 100 |

| | 192.168.33.13 | 3306 | | 80 |

| | 192.168.33.14 | 3306 | | 60 |

+--------------+---------------+------+-------------------+--------+

3 rows in set (0.00 sec)Now, I’m going to stop node 1 (192.168.33.12).

Replication Error

Last_IO_Error: error connecting to master '[email protected]:3306' - retry-time: 10 retries: 2 message: Can't connect to MySQL server on '192.168.33.12' (111)From the Error Log

2020-10-30T00:38:03.471482Z 27 [ERROR] [MY-010584] [Repl] Slave I/O for channel '': error connecting to master '[email protected]:3306' - retry-time: 10 retries: 3 message: Can't connect to MySQL server on '192.168.33.12' (111), Error_code: MY-002003

2020-10-30T00:38:03.472002Z 29 [Warning] [MY-010897] [Repl] Storing MySQL user name or password information in the master info repository is not secure and is therefore not recommended. Please consider using the USER and PASSWORD connection options for START SLAVE; see the 'START SLAVE Syntax' in the MySQL Manual for more information.

2020-10-30T00:38:03.473493Z 29 [System] [MY-010562] [Repl] Slave I/O thread for channel '': connected to master '[email protected]:3306',replication started in log 'FIRST' at position 234

2020-10-30T00:38:03.475471Z 29 [Warning] [MY-010549] [Repl] The master's UUID has changed, although this should not happen unless you have changed it manually. The old UUID was 1ff8a919-1a39-11eb-a27a-080027090068.Using ClusterControl

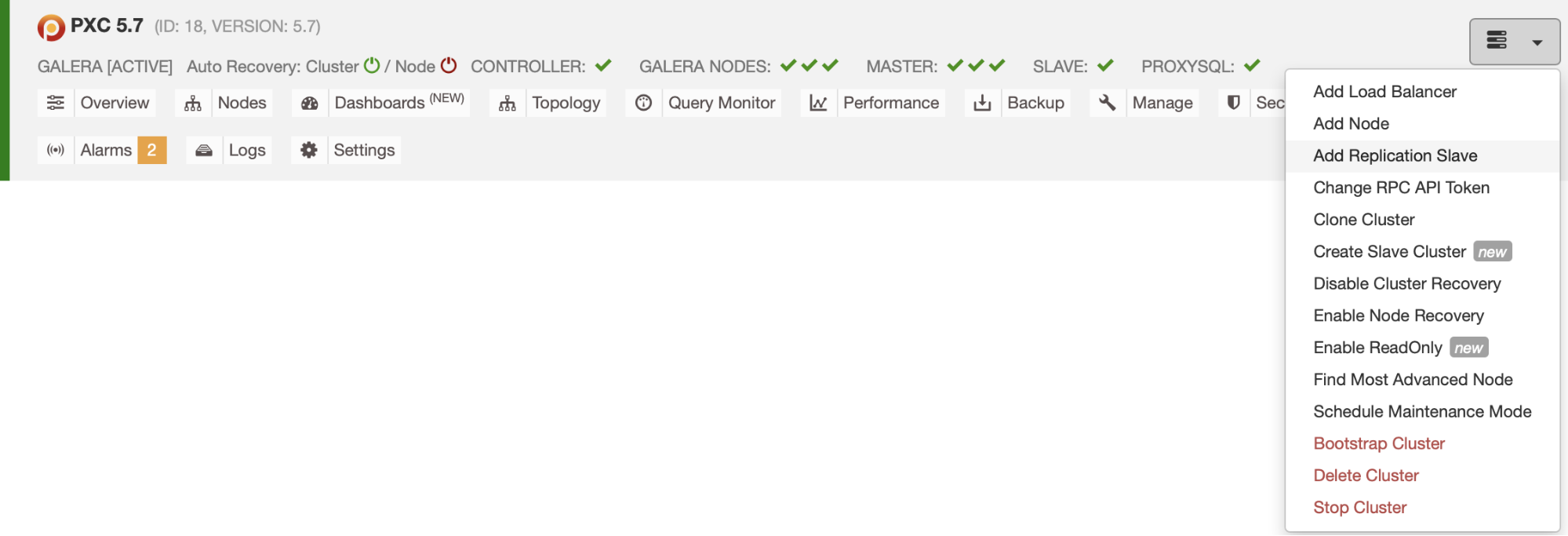

Now we will use ClusterControl to repeat this automatic failover process. I have three nodes pxc (5.7) deployed by ClusterControl. I have an 8.0.22 replication slave under my PXC node2 and we will going to add this read replica using ClusterControl.

Step 1

Setup the passwordless SSH login from ClusterControl node to read replica node.

$ ssh-copy-id -i ~/.ssh/id_rsa 192.168.33.15Step 2

Go to ClusterControl and click the drop down icon and select Add Replication slave option.

Step 3

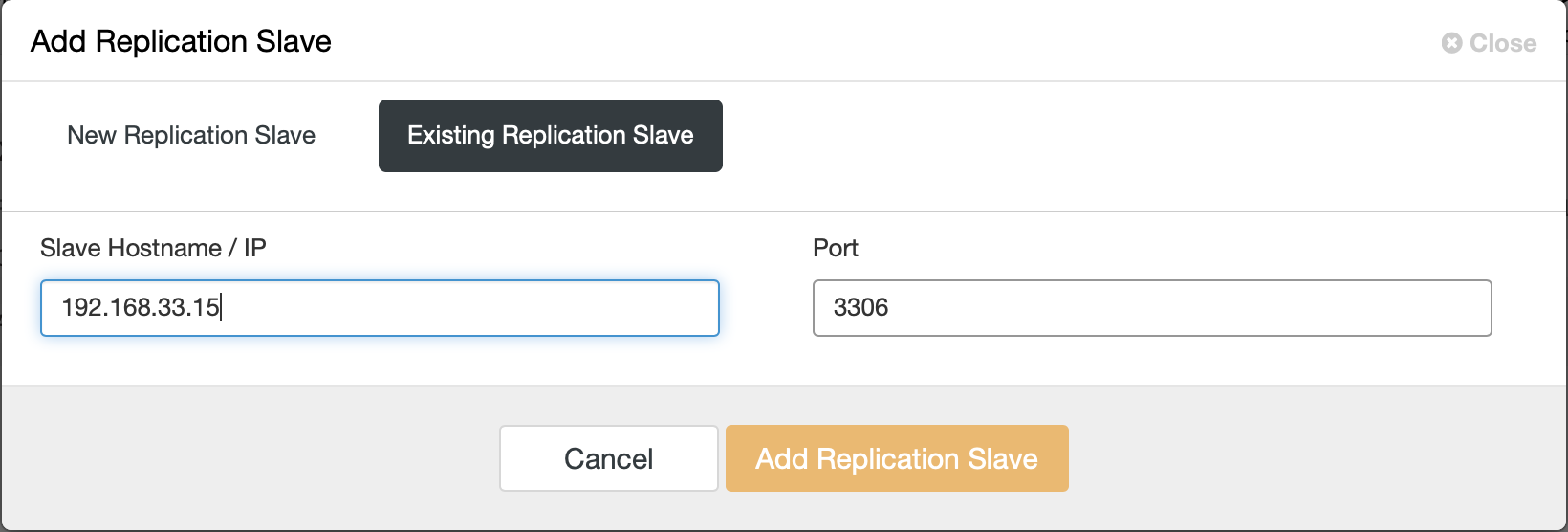

Then choose the “Existing Replication Slave” option and enter the read replica IP then click “Add Replication Slave”.

Step 4

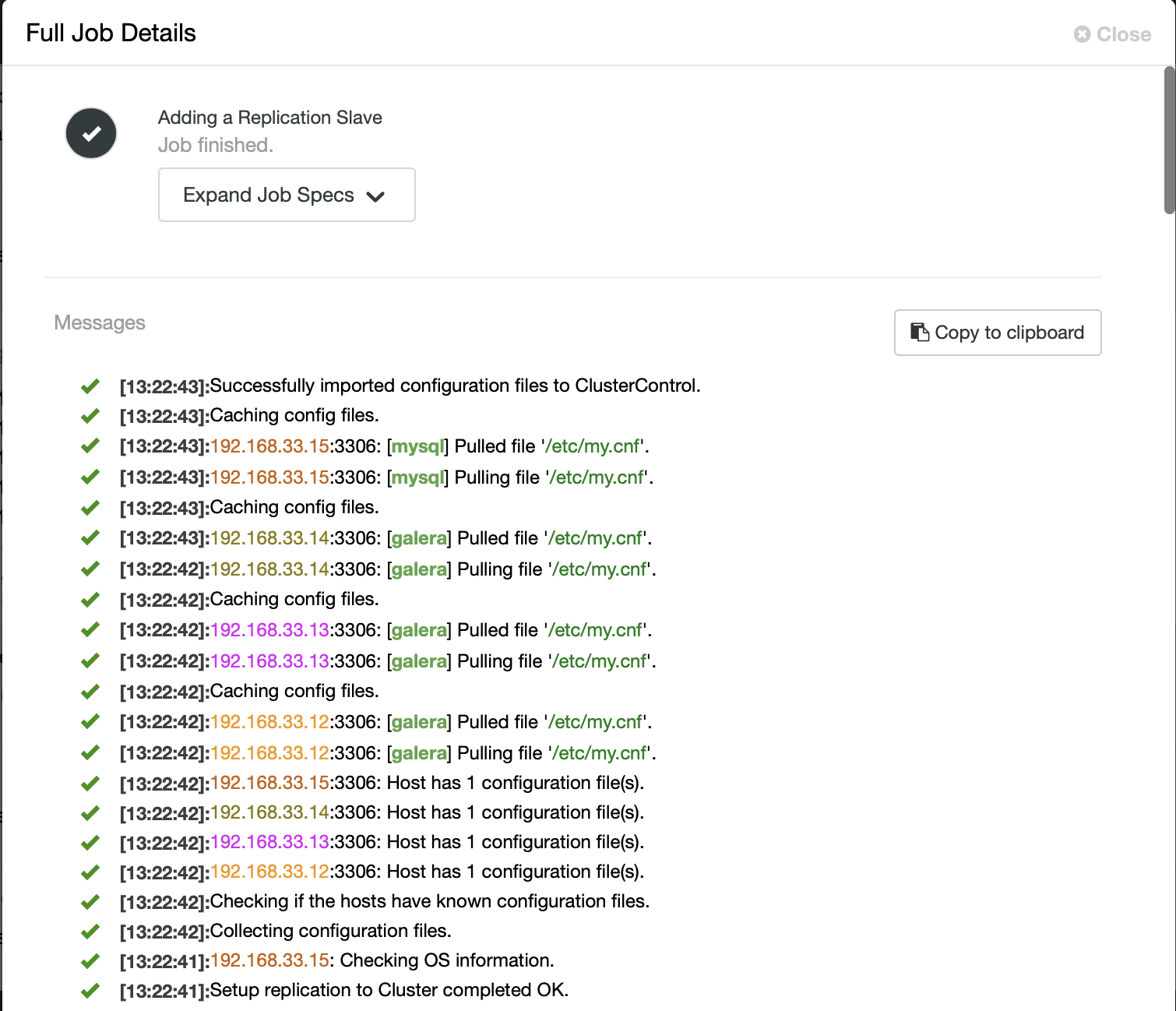

A job will be triggered and you can monitor the progress at ClusterControl > Logs > Jobs. Once the process is complete, the slave will show up in your Overview page as highlighted in the following screenshot.

Now you can check the current topology at ClusterControl > Topology

Replica Auto Failover Process

Now I’m going to do failover testing and added the below settings to this function (asynchronous_connection_failover_add_source) in my read replica.

select asynchronous_connection_failover_add_source('prod_replica', '192.168.33.12', 3306, '', 100);

select asynchronous_connection_failover_add_source('prod_replica', '192.168.33.13', 3306, '', 80);

select asynchronous_connection_failover_add_source('prod_replica', '192.168.33.14', 3306, '', 60);

mysql> select * from mysql.replication_asynchronous_connection_failover;

+--------------+---------------+------+-------------------+--------+

| Channel_name | Host | Port | Network_namespace | Weight |

+--------------+---------------+------+-------------------+--------+

| prod_replica | 192.168.33.12 | 3306 | | 100 |

| prod_replica | 192.168.33.13 | 3306 | | 80 |

| prod_replica | 192.168.33.14 | 3306 | | 60 |

+--------------+---------------+------+-------------------+--------+

3 rows in set (0.00 sec)

mysql> select CONNECTION_RETRY_INTERVAL,CONNECTION_RETRY_COUNT,SOURCE_CONNECTION_AUTO_FAILOVER from performance_schema.replication_connection_conf

iguration;

+---------------------------+------------------------+---------------------------------+

| CONNECTION_RETRY_INTERVAL | CONNECTION_RETRY_COUNT | SOURCE_CONNECTION_AUTO_FAILOVER |

+---------------------------+------------------------+---------------------------------+

| 6 | 3 | 1 |

+---------------------------+------------------------+---------------------------------+

1 row in set (0.00 sec)

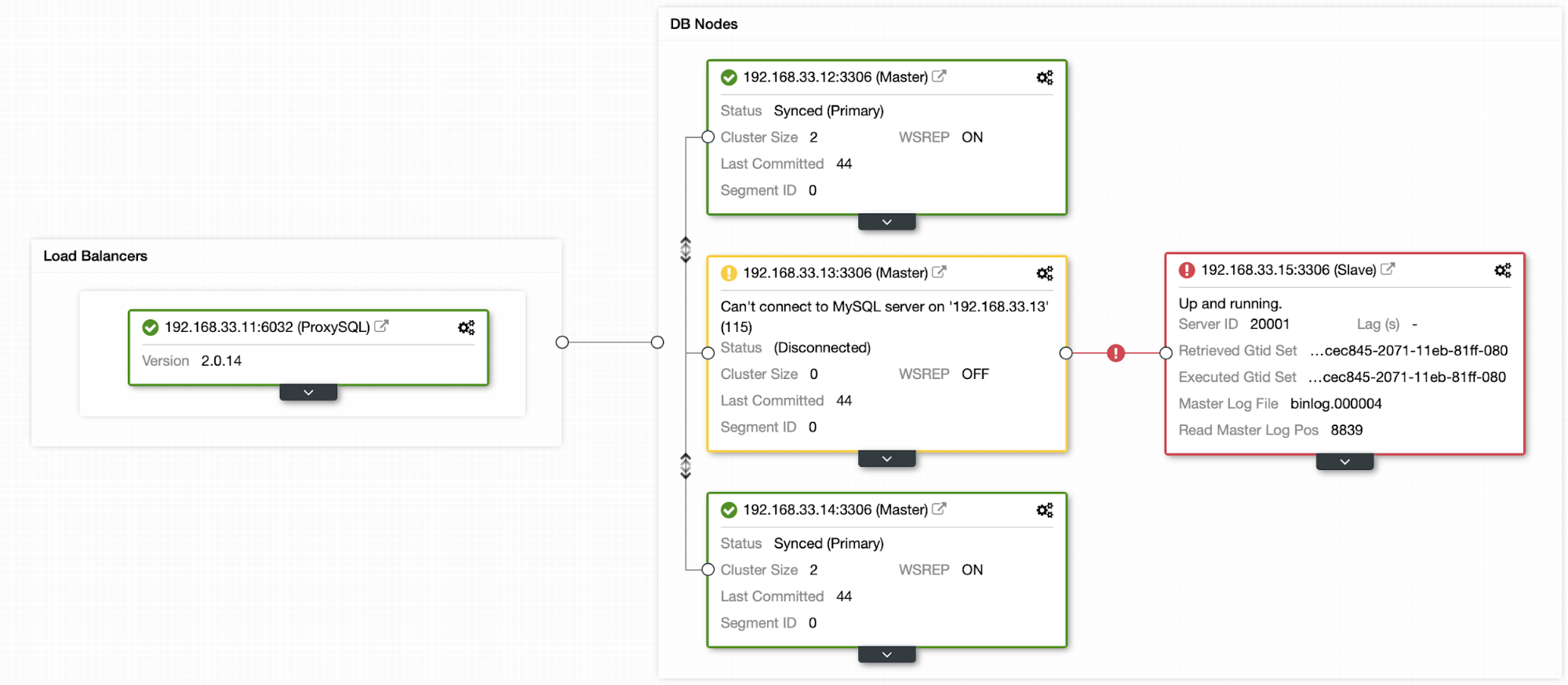

I’m going to stop node 2 (192.168.33.13). In ClusterControl, the (enable_cluster_autorecovery) parameter is enabled so it will promote the next suitable master.

Now my current master is down, so the read replica is retrying to connect the master.

Replication Error From Read Replica

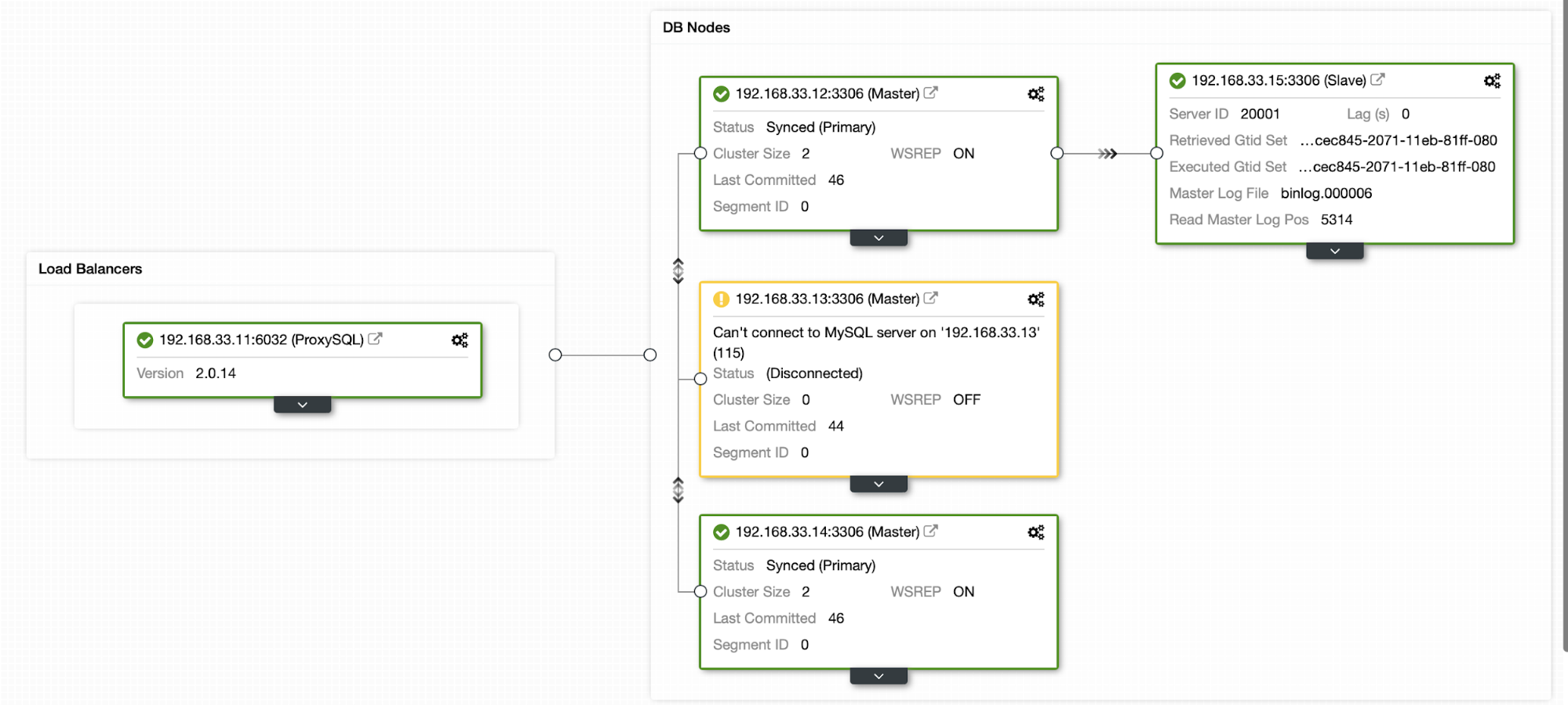

Last_IO_Error: error connecting to master '[email protected]:3306' - retry-time: 6 retries: 2 message: Can't connect to MySQL server on '192.168.33.13' (111)Once the ClusterControl promotes the next suitable master, my read replica will connect to any one of the available cluster nodes.

The automatic failover process is completed and my read replica joined back to node 1 (192.168.33.13) server.

Conclusion

This is one of the great features in MySQL, there is no manual intervention needed. This auto failover process can save you some time. And it reduces the replica server outage. Worth noting, when my old master came back to rotation, the replication connection would not switch back to the old master.