blog

Monitoring Percona Distribution for PostgreSQL – Key Metrics

Monitoring is the base to know if your systems are working properly, and it allows you to fix or prevent any issue before affecting your business. Even in a robust technology like PostgreSQL, monitoring is a must, and the main goal is to know what to monitor, otherwise, it couldn’t make sense or not be useful in case you need to use it. In this blog, we will see what Percona Distribution for PostgreSQL is and what key metrics to monitor on it.

Percona Distribution for PostgreSQL

It is a collection of tools to assist you in managing your PostgreSQL database system. It installs PostgreSQL and complements it by a selection of extensions that enable solving essential practical tasks efficiently, including:

- pg_repack: It rebuilds PostgreSQL database objects.

- pgaudit: It provides detailed session or object audit logging via the standard PostgreSQL logging facility.

- pgBackRest: It is a backup and restore solution for PostgreSQL.

- Patroni: It is a High Availability solution for PostgreSQL.

- pg_stat_monitor: It collects and aggregates statistics for PostgreSQL and provides histogram information.

- A collection of additional PostgreSQL contrib extensions.

Percona Distribution for PostgreSQL is also shipped with the libpq library. It contains a set of library functions that allow client programs to pass queries to the PostgreSQL backend server and to receive the results of these queries.

What to Monitor in Percona Distribution for PostgreSQL

When monitoring a database cluster, there are two main things to take into account: the operating system and the database itself. You will need to define which metrics you are going to monitor from both sides and how you are going to do it.

Keep in mind that when one of your metrics is affected, it can also affect others, making troubleshooting of the issue more complex. Having a good monitoring and alerting system is important to make this task as simple as possible.

Operating System Monitoring

One important thing is to monitor the Operating System behavior. Let’s see some points to check here.

CPU Usage

An excessive percentage of CPU usage could be a problem if it is not usual behavior. In this case, it is important to identify the processes that are generating this issue. If the problem is the database process, you will need to check what is happening inside the database.

RAM Memory or SWAP Usage

If you are seeing a high value for this metric and nothing has changed in your system, you probably need to check your database configuration. Parameters like shared_buffers and work_mem can affect this directly as they define the amount of memory to be able to use for the PostgreSQL database.

Disk Usage

An abnormal increase in the use of disk space or an excessive disk access consumption are important things to monitor as you could have a high number of errors logged in the PostgreSQL log file or a bad cache configuration that could generate an important disk access consumption instead of using memory to process the queries.

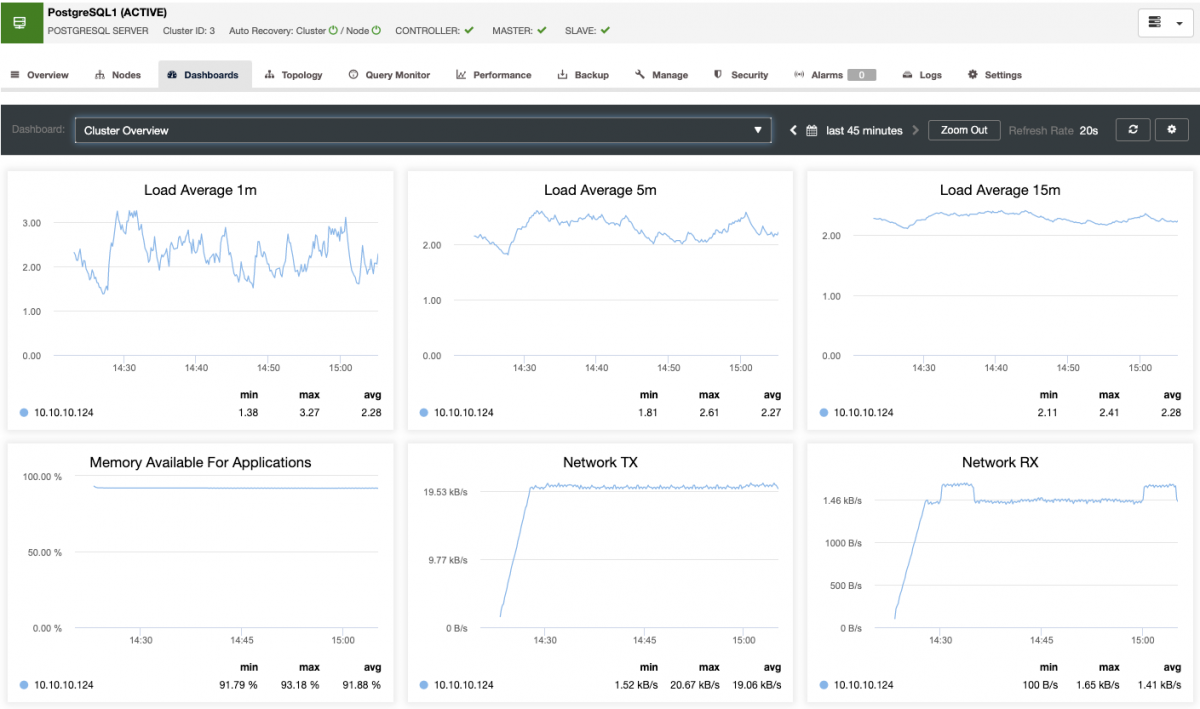

Load Average

It is related to the three points mentioned above. A high load average could be generated by an excessive CPU, RAM, or disk usage.

Network

A network issue can affect all the systems as the application can’t connect (or connect losing packages) to the database, so this is an important metric to monitor indeed. You can monitor latency or packet loss, and the main issue could be a network saturation, a hardware issue, or just a bad network configuration.

PostgreSQL Database Monitoring

Monitoring your PostgreSQL database is not only important to see if you are having an issue, but also to know if you need to change something to improve your database performance, that is probably one of the most important things to monitor in a database. Let’s see some metrics that are important for this.

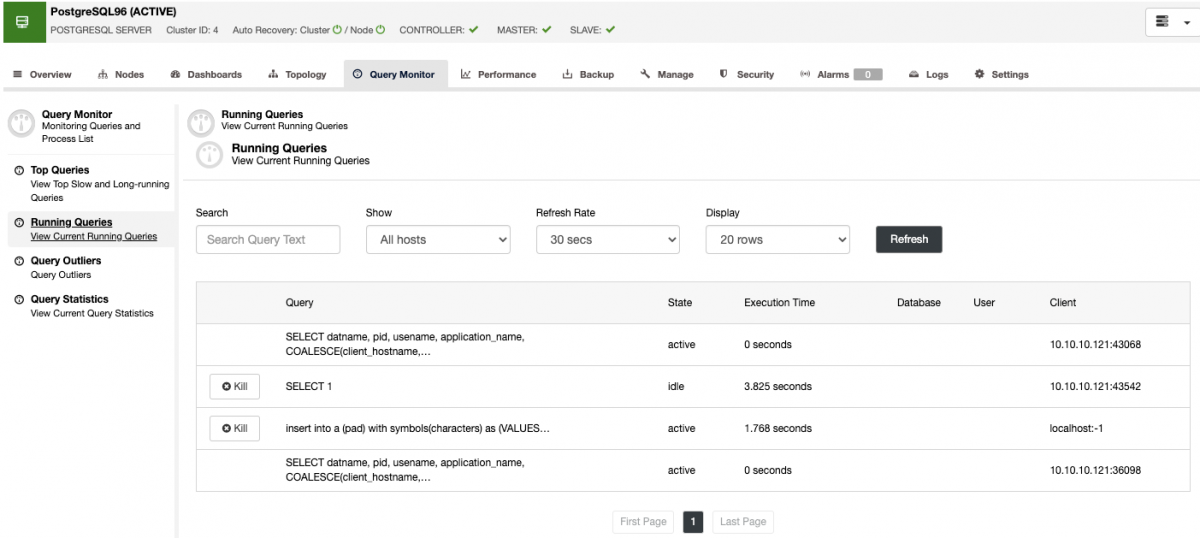

Query Monitoring

By default, PostgreSQL is configured with compatibility and stability in mind, so you need to know your queries and his pattern, and configure your databases depending on the traffic that you have. Here, you can use the EXPLAIN command to check the query plan for a specific query, and you can also monitor the amount of SELECT, INSERT, UPDATE, or DELETEs on each node. If you have a long query or a high number of queries running at the same time, that could be a problem for all the systems.

Monitoring Active Sessions

You should also monitor the number of active sessions. If you are near the limit, you need to check if something is wrong or if you just need to increment the max_connections value. The difference in the number can be an increase or decrease of connections. Bad usage of connection pooling, locking, or a network issue are the most common problems related to the number of connections.

Database Locks

If you have a query waiting for another query, you need to check if that another query is a normal process or something new. In some cases, if somebody is making an update on a big table, for example, this action can be affecting the normal behavior of your database, generating a high number of locks.

Monitoring Replication

The key metrics to monitor for replication are the lag and the replication state. The most common issues are networking issues, hardware resource issues, or under dimensioning issues. If you are facing a replication issue you will need to know this asap as you will need to fix it to ensure the high availability environment.

Monitoring Backups

Avoiding data loss is one of the basic DBA tasks, so you don’t only need to take the backup, you should know if the backup was completed, and if it is usable. Usually, this last point is not taken into account, but it is probably the most important check in a backup process.

Monitoring Database Logs

You should monitor your database log for errors like FATAL or deadlock, or even for common errors like authentication issues or long-running queries. Most of the errors are written in the log file with detailed useful information to fix it.

Dashboards

Visibility is useful for fast issue detection. It is definitely a more time-consuming task to read a command output than just watch a graph. So, the usage of a dashboard could be the difference between detecting a problem now or in the next 15 minutes, most sure that time could be really important for the company.

Alerting

Just monitoring a system doesn’t make sense if you don’t receive a notification about each issue. Without an alerting system, you should go to the monitoring tool to see if everything is fine, and it could be possible that you were having a big issue since many hours ago.

Monitoring Your PostgreSQL Database with ClusterControl

It is really difficult to find a tool to monitor all the necessary metrics for PostgreSQL, in general, you will need to use more than one and even some scripting will need to be made. One way to centralize the monitoring and alerting task is by using ClusterControl, which provides you with features like backup management, monitoring and alerting, deployment and scaling, automatic recovery, and more important features to help you to manage your databases. All these features on the same system.

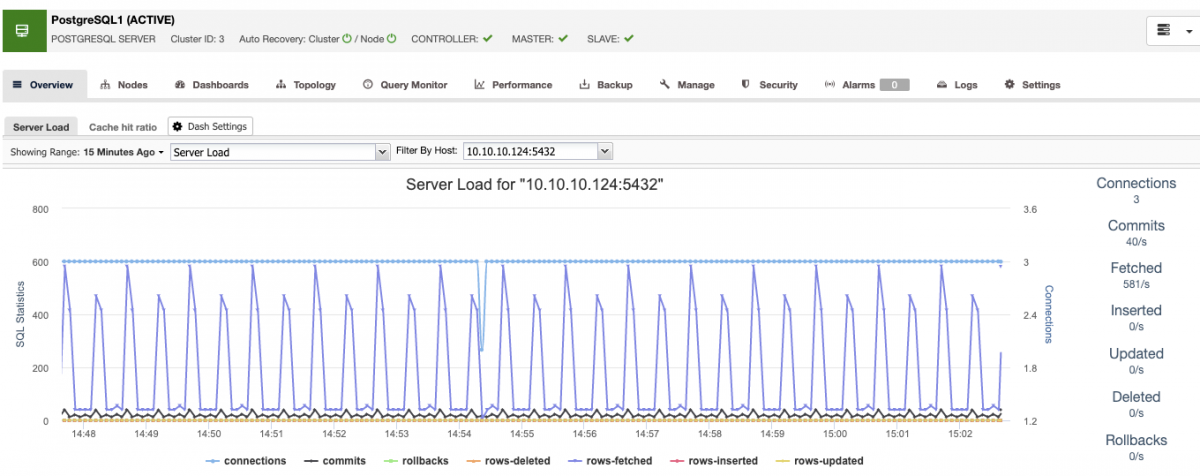

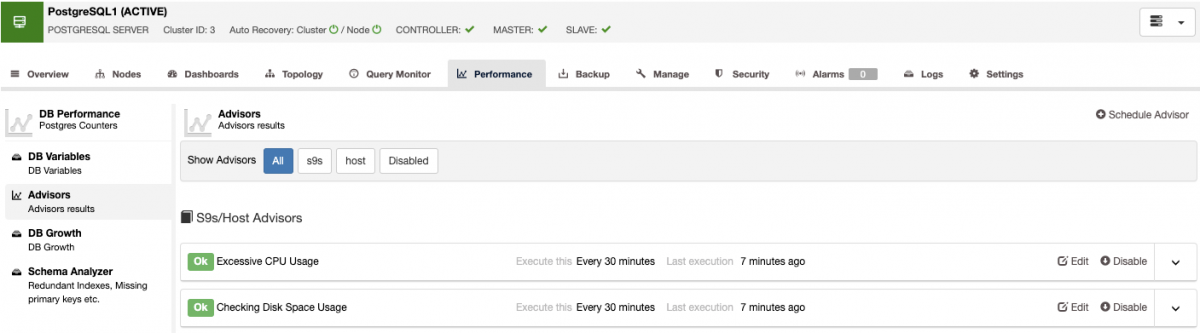

ClusterControl has a predefined set of dashboards for you, to analyze some of the most common metrics.

It allows you to customize the graphs available in the cluster, and you can enable the agent-based monitoring to generate more detailed dashboards.

You can also create alerts, which inform you of events in your cluster, or integrate with different services such as PagerDuty or Slack.

Also, you can check the query monitor section, where you can find the top queries, the running queries, queries outliers, and the queries statistics.

With these features, you can see how your PostgreSQL database is going.

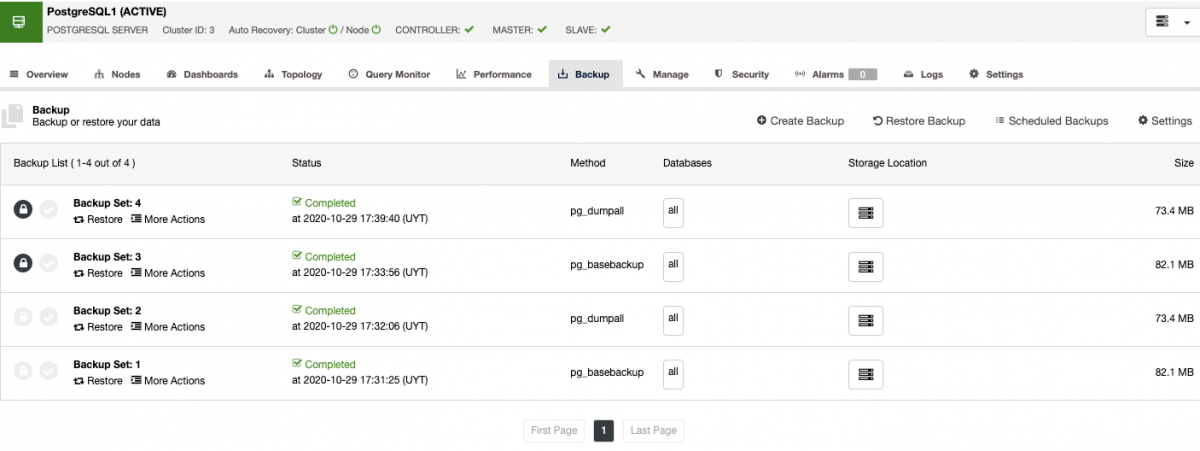

For backup management, ClusterControl centralizes it to protect, secure, and recover your data, and with the verification backup feature, you can confirm if the backup is good to go.

This verification backup job will restore the backup in a separate standalone host, so you can make sure that the backup is working.

Monitoring with the ClusterControl Command Line

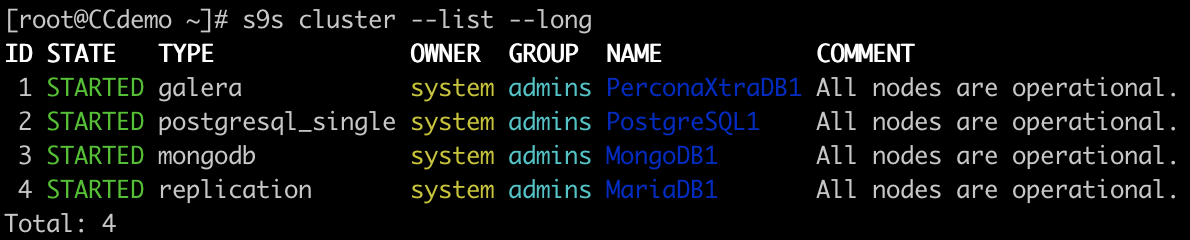

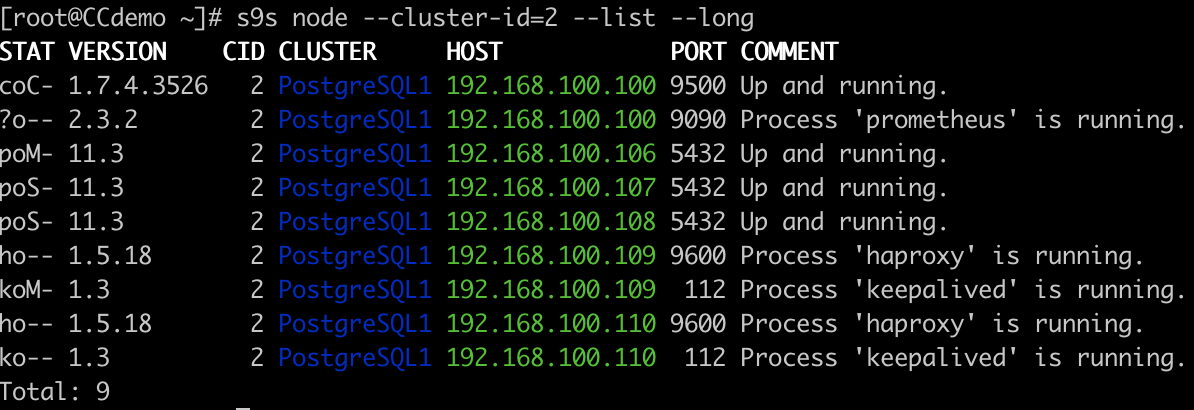

For scripting and automating tasks, or even if you just prefer the command line, ClusterControl has the s9s tool. It is a command-line tool for managing your database cluster.

Cluster List

Node List

Conclusion

Monitoring is absolutely necessary, and the best way on how to do it depends on the infrastructure and the system itself. In this blog, we introduce you to Percona Distribution for PostgreSQL, and we mentioned some important metrics to monitor in your PostgreSQL environment. We also showed you how ClusterControl is useful for this task.