
How to Scale Joomla on Multiple Servers

Joomla! is estimated to be the second most used CMS on the internet after WordPress, with users like eBay, IKEA, Sony, McDonald’s and Pizza Hut. In this post, we will describe how to scale Joomla on multiple servers. This architecture not only allows the CMS to handle more users by load-balancing traffic across multiple servers, it also brings high availability by providing failover between servers.

This post is similar to our previous posts on web application scalability and high availability:

- Drupal – Apache, MySQL Galera Cluster, csync2 with lsyncd

- WordPress – Apache, Percona XtraDB Cluster, GlusterFS

- Magento – nginx, MySQL Galera Cluster, OCFS2

In this post, we will focus on setting up Joomla 3.1 on Apache and MariaDB Galera Cluster with GFS2 (Global File System) using RedHat Cluster Suite on CentOS 6.3 64bit. We will use one server for shared storage and ClusterControl. Please note that you need to set up redundancy for the shared storage yourself. Alternatively, you could look at replicating the file systems of node1, node2 and node3 using csync2 and lsyncd, as we covered in a previous post.

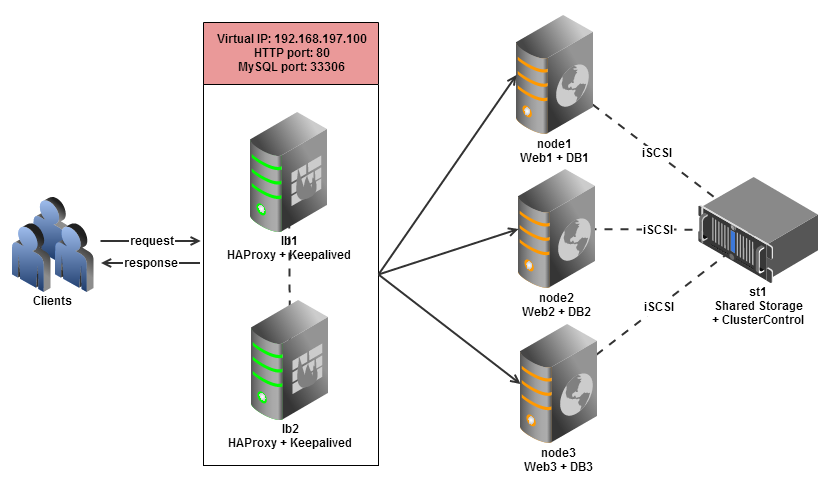

The following diagram illustrates our setup:

Our setup consists of 6 servers or nodes:

- node1: web server + database server

- node2: web server + database server

- node3: web server + database server

- lb1: load balancer (master) + keepalived

- lb2: load balancer (backup) + keepalived

- st1: shared storage + ClusterControl

The major steps are:

- Prepare 6 hosts

- Deploy MariaDB Cluster onto node1, node2 and node3 from st1

- Configure iSCSI target on st1

- Configure GFS2 and mount the shared disk onto node1, node2 and node3

- Configure Apache on node1, node2 and node3

- Configure Keepalived and HAProxy for web and database load balancing with auto failover

- Install Joomla and connect it to the Web/DB cluster via the load balancer

Prepare Hosts

Add the following host definitions to /etc/hosts:

    192.168.197.100 mytestjoomla.com www.mytestjoomla.com mysql.mytestjoomla.com #virtual IP
    192.168.197.101 node1 web1 db1
    192.168.197.102 node2 web2 db2
    192.168.197.103 node3 web3 db3
    192.168.197.111 lb1
    192.168.197.112 lb2
    192.168.197.121 st1
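Since every later step refers to these names, it can be worth confirming that each host's file defines all of them. The sketch below is one possible check. The function name `check_hosts_file` is our own illustration and not part of any tool used in this post:

```shell
# check_hosts_file FILE NAME...
# Prints each NAME that does not appear as a hostname or alias in FILE
# (hosts format: IP first, then names, '#' starts a comment).
# Returns 0 only when every NAME is defined.
check_hosts_file() {
    local file="$1" name missing=0
    shift
    for name in "$@"; do
        if ! awk -v n="$name" '
                { sub(/#.*/, "") }    # strip trailing comments
                { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                END { exit !found }' "$file"; then
            echo "missing: $name"
            missing=1
        fi
    done
    return "$missing"
}

# Example (run on each host):
#   check_hosts_file /etc/hosts node1 node2 node3 lb1 lb2 st1 www.mytestjoomla.com
```

The function only reads the file, so it is safe to run repeatedly on any of the six hosts.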

To simplify the deployment process, we will turn off SELinux and iptables on all hosts:

    $ setenforce 0
    $ service iptables stop
    $ sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config
    $ chkconfig iptables off

Deploy MariaDB Cluster

** The deployment of the database cluster will be done from st1

1. To set up MariaDB Cluster, go to the Galera Configurator to generate a deployment package. In the wizard, we used the following values when configuring our database cluster:

- Vendor: MariaDB Cluster

- Infrastructure: none/on-premises

- Operating System: RHEL6 – Redhat 6.3/Fedora/Centos 6.3/OLN 6.3/Amazon AMI

- Number of Galera Servers: 3+1

- OS user: root

- ClusterControl Server: 192.168.197.121

- Database Servers: 192.168.197.101 192.168.197.102 192.168.197.103

At the end of the wizard, a deployment package will be generated and emailed to you.

2. Download the deployment package and run deploy.sh:

    $ wget https://severalnines.com/galera-configurator/tmp/5p7q2amjqf7pioummp2hgo6iu2/s9s-galera-mariadb-2.4.0-rpm.tar.gz
    $ tar xvfz s9s-galera-mariadb-2.4.0-rpm.tar.gz
    $ cd s9s-galera-mariadb-2.4.0-rpm/mysql/scripts/install
    $ bash ./deploy.sh 2>&1 | tee cc.log

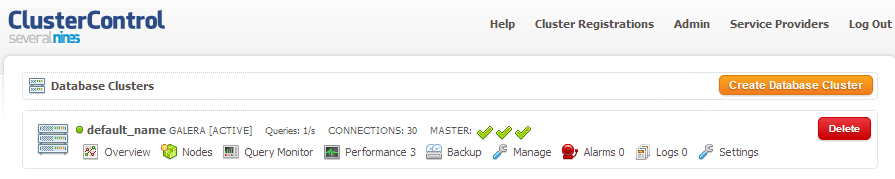

3. The deployment takes about 15 minutes, and once it is completed, note your API key. Use it to register the cluster with the ClusterControl UI by going to http://192.168.197.121/cmonapi . You will now see your MariaDB Cluster in the UI.
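The cluster state can also be checked from the command line by asking each node for the Galera status variable `wsrep_cluster_size`. This is a minimal sketch, not part of the deployment package. The function name `galera_cluster_size` is hypothetical, and we assume the `mysql` client on st1 can log in to the nodes without prompting, for example through credentials in ~/.my.cnf:

```shell
# galera_cluster_size HOST
# Prints the number of nodes HOST currently sees in the Galera cluster.
# Assumption: the mysql client authenticates without prompting
# (for example via ~/.my.cnf).
galera_cluster_size() {
    mysql -h "$1" -N -B -e "SHOW STATUS LIKE 'wsrep_cluster_size'" |
        awk '{ print $2 }'
}

# Example: in this setup every node should report 3.
#   for h in node1 node2 node3; do
#       echo "$h: $(galera_cluster_size "$h")"
#   done
```

A node that reports a smaller number than the others has most likely dropped out of the cluster or formed its own partition.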

Configure iSCSI Target

1. The storage server (st1) needs to export a disk through iSCSI so it can be mounted on all three web servers (node1, node2 and node3). iSCSI basically tells your kernel you have a SCSI disk, and it transports that access over IP. The “server” is called the “target” and the “client” that uses that iSCSI device is the “initiator”. Install the iSCSI target package on st1:

$ yum install -y scsi-target-utils

2. Node st1 has a second disk attached as /dev/sdb, which will be our shared (iSCSI) disk. Create one primary partition on /dev/sdb:

$ fdisk /dev/sdb

Key sequence: n > p > 1 > Enter > Enter > w

3. Define the iSCSI target by adding the following lines to its configuration file:

    # /etc/tgt/targets.conf
    <target iqn.2013-06.storage:shared_disk1>
        backing-store /dev/sdb1
        initiator-address 192.168.197.0/24
    </target>

4. Start the iSCSI target service and enable the service to start on boot:

    $ service tgtd start
    $ chkconfig tgtd on

Create Cluster

1. Install required packages in node1, enable luci and ricci on boot and start them:

    $ yum groupinstall -y "High Availability" "High Availability Management" "Resilient Storage"
    $ chkconfig luci on
    $ chkconfig ricci on
    $ service luci start
    $ service ricci start

2. Install required packages in node2 and node3, enable ricci on boot and start the service:

    $ yum groupinstall -y "High Availability" "Resilient Storage"
    $ chkconfig ricci on
    $ service ricci start

3. Set the password for the ricci user. It is recommended to use the same password across all nodes:

$ passwd ricci

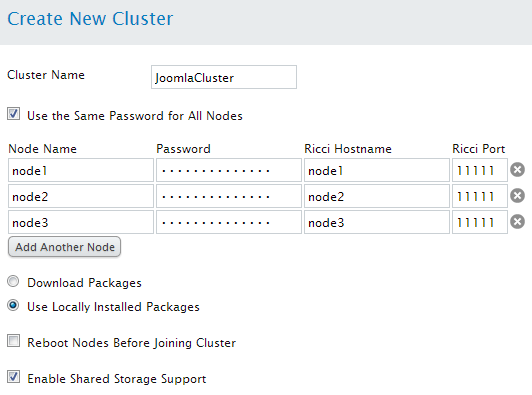

4. Open the management page at https://192.168.197.101:8084/. Login using root user and password and go to Manage Clusters > Create and enter following details:

Configure iSCSI Initiator and Mount Point

** The following steps should be performed on node1, node2 and node3 unless specified.

1. Install iSCSI initiator on respective hosts:

$ yum install -y iscsi-initiator-utils

2. Discover the iSCSI target that we set up earlier:

    $ iscsiadm -m discovery -t sendtargets -p st1
    192.168.197.121:3260,1 iqn.2013-06.storage:shared_disk1

3. If you are able to see the above output, it means we can see and are able to connect to the iSCSI target. Set the iSCSI initiator to automatically start and restart the iSCSI initiator service to connect to the iSCSI disk:

    $ chkconfig iscsi on
    $ service iscsi restart
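Restarting the service logs in to every discovered target. If you would rather log in to a target explicitly, `iscsiadm` can do so in node mode with `--login`. The helper below is a sketch of that idea. `discovery_to_login` is a name we made up, and it only prints the commands so they can be reviewed before running:

```shell
# discovery_to_login
# Reads `iscsiadm -m discovery` output on stdin (lines of the form
# "IP:PORT,TPGT IQN") and prints one explicit login command per target.
# Nothing is executed; the commands are only printed.
discovery_to_login() {
    local portal iqn
    while read -r portal iqn; do
        [ -n "$iqn" ] || continue    # skip blank or malformed lines
        portal=${portal%%,*}         # drop the ",TPGT" suffix
        echo "iscsiadm -m node -T $iqn -p $portal --login"
    done
}

# Example:
#   iscsiadm -m discovery -t sendtargets -p st1 | discovery_to_login
```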

4. List out all disks and make sure you can see the new disk, /dev/sdb:

$ fdisk -l

5. On node1 only, create a partition on /dev/sdb, the disk that is attached via iSCSI:

$ fdisk /dev/sdb

6. Format the partition with GFS2 from node1. Take note that our cluster name is JoomlaCluster and the iSCSI partition is /dev/sdb1:

$ mkfs.gfs2 -p lock_dlm -t JoomlaCluster:data -j 4 /dev/sdb1

7. Once finished, you will see some information on the newly created GFS2 partition. Get the UUID value and add it into /etc/fstab:

UUID=fbf71d17-2742-2758-d917-9bd104685194 /home/joomla/public_html gfs2 noatime,nodiratime 0 0
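Because the same entry has to be added on node1, node2 and node3, a small helper can keep the edit repeatable. This is only a sketch: `add_fstab_entry` is a hypothetical name, and the example call assumes that `blkid` on your system reports the UUID of a GFS2 partition:

```shell
# add_fstab_entry FSTAB UUID MOUNTPOINT
# Appends a GFS2 entry to FSTAB unless MOUNTPOINT is already listed
# in a non-comment line, so running it twice does not duplicate it.
add_fstab_entry() {
    local fstab="$1" uuid="$2" mnt="$3"
    if awk -v m="$mnt" '$1 !~ /^#/ && $2 == m { found = 1 }
                        END { exit !found }' "$fstab"; then
        echo "already present: $mnt"
        return 0
    fi
    printf 'UUID=%s %s gfs2 noatime,nodiratime 0 0\n' "$uuid" "$mnt" >> "$fstab"
}

# Example (assumes blkid can read the GFS2 UUID):
#   add_fstab_entry /etc/fstab "$(blkid -s UUID -o value /dev/sdb1)" /home/joomla/public_html
```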

8. Create a user named “joomla” and the web directory which we will use as the GFS2 mount point:

    $ useradd joomla
    $ mkdir -p /home/joomla/public_html
    $ chmod 755 /home/joomla

9. Restart the iSCSI service again to get the updated partition on the iSCSI disk:

$ service iscsi restart

10. Enable GFS2 on boot and start the service. This will mount GFS2 into /home/joomla/public_html:

    $ chkconfig gfs2 on
    $ service gfs2 start
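Before installing Apache, you may want to confirm on each node that the shared file system is really mounted, since an unmounted directory would silently become a local one. A minimal check, assuming the mount point used in this post (`is_gfs2_mounted` is an illustrative name of our own):

```shell
# is_gfs2_mounted MOUNTPOINT [MOUNTS_FILE]
# Succeeds when MOUNTPOINT is listed with filesystem type gfs2.
# MOUNTS_FILE defaults to /proc/mounts.
is_gfs2_mounted() {
    local mnt="$1" mounts="${2:-/proc/mounts}"
    awk -v m="$mnt" '$2 == m && $3 == "gfs2" { found = 1 }
                     END { exit !found }' "$mounts"
}

# Example:
#   is_gfs2_mounted /home/joomla/public_html && echo "GFS2 is mounted"
```

A file created in the directory on one node should then be visible on the other two nodes as well.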

Configure Apache and PHP

** The following steps should be performed on node1, node2 and node3.

1. Install Apache and PHP and all related packages:

$ yum install httpd php php-pdo php-gd php-xml php-mbstring php-mysql ImageMagick unzip sendmail -y

2. Create Apache logs directory for our Joomla site with log files:

    $ mkdir -p /home/joomla/logs
    $ touch /home/joomla/logs/error_log
    $ touch /home/joomla/logs/access_log

3. Create a new configuration file for the site under /etc/httpd/conf.d/ and add the following lines:

    # /etc/httpd/conf.d/joomla.conf
    NameVirtualHost *:80
    <VirtualHost *:80>
        ServerName mytestjoomla.com
        ServerAlias www.mytestjoomla.com
        ServerAdmin [email protected]
        DocumentRoot /home/joomla/public_html
        ErrorLog /home/joomla/logs/error_log
        CustomLog /home/joomla/logs/access_log combined
    </VirtualHost>

4. Enable Apache on boot and start the service:

    $ chkconfig httpd on
    $ service httpd start

5. Apply correct ownership to the public_html folder:

$ chown -Rf apache:apache /home/joomla/public_html

Load Balancers and Failover

** The following steps should be performed on st1

1. We have deploy scripts to install HAProxy and Keepalived, which can be obtained from our Git repository. Install git and clone the repo:

    $ yum install -y git
    $ git clone https://github.com/severalnines/s9s-admin.git

2. Make sure lb1 and lb2 are accessible using passwordless SSH. Copy the SSH keys to lb1 and lb2:

    $ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.111
    $ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.112

3. Install HAProxy on both nodes:

    $ cd s9s-admin/cluster/
    $ ./s9s_haproxy --install -i 1 -h 192.168.197.111
    $ ./s9s_haproxy --install -i 1 -h 192.168.197.112

4. Install Keepalived on lb1 (master) and lb2 (backup) with 192.168.197.100 as virtual IP:

$ ./s9s_haproxy --install-keepalived -i 1 -x 192.168.197.111 -y 192.168.197.112 -v 192.168.197.100

** The following steps should be performed on lb1 and lb2

5. By default, the script configures the MySQL reverse proxy service to listen on port 33306. We need to add a few more lines to tell HAProxy to load balance our web server farm as well. Add the following lines to /etc/haproxy/haproxy.cfg:

    frontend http-in
        bind *:80
        default_backend web_farm

    backend web_farm
        server node1 192.168.197.101:80 maxconn 32
        server node2 192.168.197.102:80 maxconn 32
        server node3 192.168.197.103:80 maxconn 32
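Note that server lines without the `check` keyword are not health-checked, so HAProxy would keep routing requests to a web server that has gone down. If you want the web tier to fail over as well, one option is to add `check` to each server line. The sketch below generates such a backend section. The function name `web_farm_backend` is made up for illustration, and the `balance roundrobin` line only makes HAProxy's default algorithm explicit:

```shell
# web_farm_backend NAME=IP...
# Prints an HAProxy backend section in which every server is
# health-checked ("check"), so failed nodes are taken out of rotation.
web_farm_backend() {
    local pair
    echo "backend web_farm"
    echo "    balance roundrobin"
    for pair in "$@"; do
        # NAME is the part before '=', IP the part after it
        echo "    server ${pair%%=*} ${pair#*=}:80 maxconn 32 check"
    done
}

# Example:
#   web_farm_backend node1=192.168.197.101 node2=192.168.197.102 node3=192.168.197.103
```

The output can be pasted into /etc/haproxy/haproxy.cfg in place of the backend section on both lb1 and lb2.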

6. Restart HAProxy service:

    $ killall haproxy
    $ /usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -p /var/run/haproxy.pid -st `cat /var/run/haproxy.pid`

Install Joomla

1. Download Joomla in node1:

    $ cd /home/joomla/public_html
    $ wget http://joomlacode.org/gf/download/frsrelease/18323/80368/Joomla_3.1.1-Stable-Full_Package.zip
    $ unzip Joomla_3.1.1-Stable-Full_Package.zip

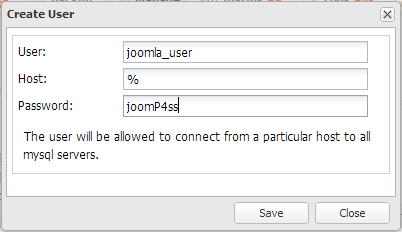

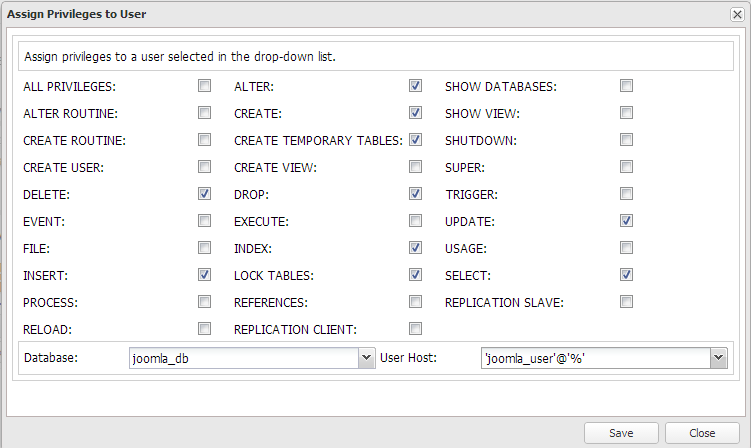

2. Create the database for Joomla. Go to ClusterControl Schema Management features under Manage > Schemas and Users to create schema and then create a database user as example below:

3. Assign specific privileges to joomla_user@’%’ as below:

4. To perform the Joomla installation, we need to bypass HAProxy and connect directly to one of the web servers. Instead of accessing mytestjoomla.com through the virtual IP, 192.168.197.100, we access the site directly on node1. This avoids the following error:

“The most recent request was denied because it contained an invalid security token. Please refresh the page and try again.”

This is due to the way Joomla handles security tokens as described in this page: http://docs.joomla.org/How_to_add_CSRF_anti-spoofing_to_forms

To do this, temporarily add the following line to the hosts file on your client PC, which is /etc/hosts on Linux or Mac, or C:\Windows\System32\drivers\etc\hosts on Windows:

192.168.197.101 mytestjoomla.com www.mytestjoomla.com

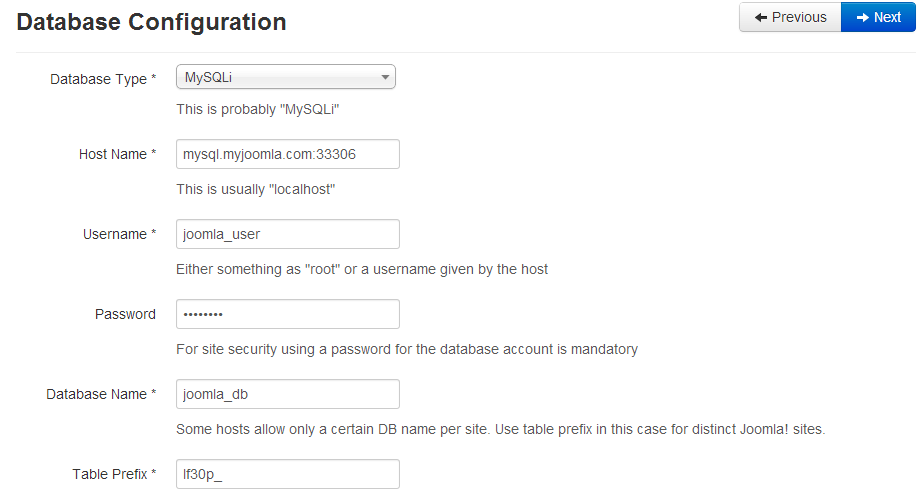

5. You can now start to install Joomla by accessing http://www.mytestjoomla.com and you should see the Joomla installation page. Enter the required details with following details on database configuration section:

6. Once the installation is complete, remove the line (as described in step #4 above) from your client PC so you can access the website via virtual IP, 192.168.197.100.
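With the site reachable through the virtual IP again, a simple way to watch the load balancer at work is to send a batch of requests and tally the HTTP status codes, for example while Apache is stopped on one of the nodes. The following is a rough sketch using `curl`. The function name `probe_site` and the request count in the example are arbitrary choices of ours:

```shell
# probe_site URL COUNT
# Requests URL COUNT times and prints how often each HTTP status code
# was returned (curl reports 000 when the connection fails).
probe_site() {
    local url="$1" count="$2" i=0
    while [ "$i" -lt "$count" ]; do
        curl -s -o /dev/null -w '%{http_code}\n' "$url"
        i=$((i + 1))
    done | sort | uniq -c
}

# Example:
#   probe_site http://www.mytestjoomla.com/ 30
```

If every request still returns 200 while one web server is down, the remaining nodes are serving the site.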

Verify the Architecture

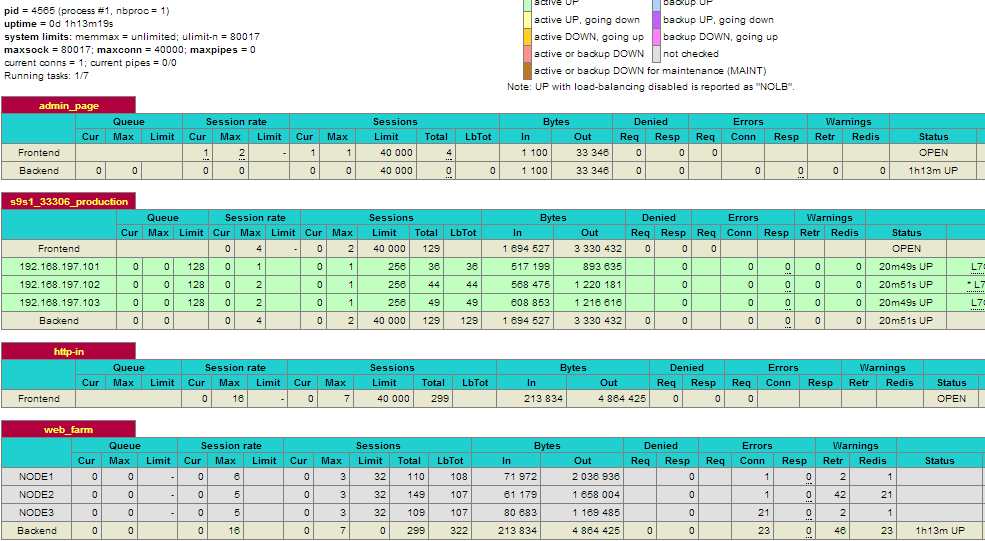

1. Check the HAproxy statistics by logging into the HAProxy admin page at lb1 host port 9600. The default username/password is admin/admin. You should see some bytes in and out on the web_farm and s9s_33306_production sections:

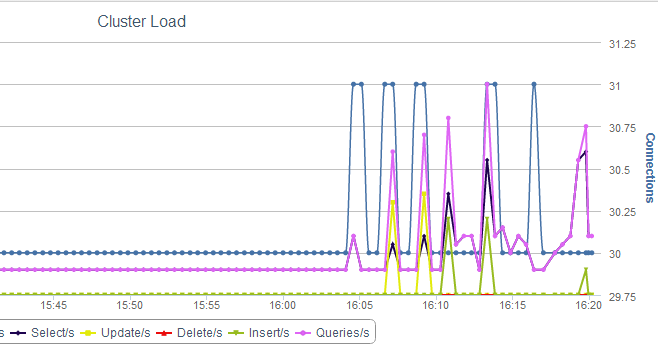

2. Check and observe the traffic on your database cluster from the ClusterControl overview page at https://192.168.197.121/clustercontrol :

Further Reading

This article only provides a basic configuration showing how to put the different components together. For better redundancy and high availability, you should also keep a backup of your shared storage server using DRBD, set up fencing and configure iSCSI failover.

If you are not familiar with Red Hat Cluster and GFS2, we highly recommend the Red Hat Cluster Administration documentation.