Scaling WordPress and MySQL on Multiple Servers for Performance

Over the years, WordPress has evolved from a simple blogging platform into a full content management system (CMS). Over seven million sites use it today, including the likes of CNN, Forbes, The New York Times and eBay. So, how do you scale WordPress on multiple servers for high performance?

This post is similar to our previous post on Drupal, "Scaling Drupal on Multiple Servers with Galera Cluster for MySQL", but here we will focus on WordPress, Percona XtraDB Cluster and GlusterFS, using 64-bit Debian Squeeze.

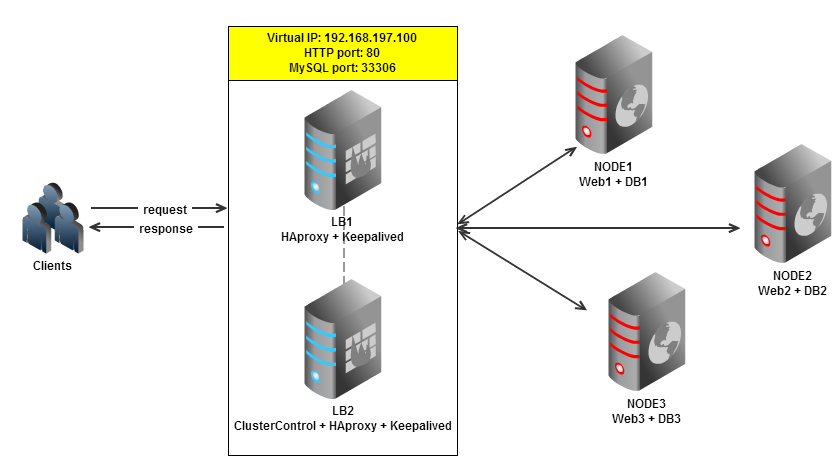

Our setup consists of 5 nodes or servers:

- NODE1: web server + database server

- NODE2: web server + database server

- NODE3: web server + database server

- LB1: load balancer (master) + keepalived

- LB2: ClusterControl + load balancer (backup) + keepalived

We will be using GlusterFS to serve replicated web content across 3 nodes. Each of these nodes will have an Apache web server colocated with a Percona XtraDB Cluster instance.

We will be using 2 other nodes for load balancing, with the ClusterControl server colocated with one of the load balancers.

The major steps are:

- Prepare 5 servers

- Deploy Percona XtraDB Cluster onto NODE1, NODE2 and NODE3 from LB2

- Configure GlusterFS on NODE1, NODE2 and NODE3 so the web contents can be automatically replicated

- Set up Apache on NODE1, NODE2 and NODE3

- Set up Keepalived and HAProxy for web and database load balancing with automatic failover

- Install WordPress and connect it to the Web/DB cluster via the load balancer

Preparing Hosts

Add the following host definitions to /etc/hosts:

192.168.197.100 blogsite.com www.blogsite.com mysql.blogsite.com #virtual IP

192.168.197.101 NODE1 web1 db1

192.168.197.102 NODE2 web2 db2

192.168.197.103 NODE3 web3 db3

192.168.197.111 LB1

192.168.197.112 LB2 clustercontrol

Deploy Percona XtraDB Cluster
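Before starting the deployment, it may help to confirm that every name from the host definitions above is actually present in /etc/hosts on each server. The following is only a minimal sketch: the function name missing_hosts is hypothetical, and it does a plain, case-sensitive match against the file rather than a real name lookup.

```shell
# Sketch: print each NAME that does not appear in a hosts file.
# Usage: missing_hosts FILE NAME...
# (missing_hosts is a hypothetical helper, not part of any package.)
missing_hosts() (
    file=$1
    shift
    for name in "$@"; do
        # Drop comments, skip the address in field 1, compare every alias.
        if ! awk -v want="$name" '
            { sub(/#.*/, "") }
            { for (i = 2; i <= NF; i++) if ($i == want) found = 1 }
            END { exit !found }
        ' "$file"; then
            echo "$name"
        fi
    done
)

# Example:
#   missing_hosts /etc/hosts blogsite.com www.blogsite.com mysql.blogsite.com \
#       NODE1 NODE2 NODE3 LB1 LB2 clustercontrol
```

If the command in the example prints nothing, all the names used in this post are defined on that server.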

** The deployment of the database cluster will be done from LB2

- To set up Percona XtraDB Cluster, go to the online MySQL Galera Configurator to generate a deployment package. In the wizard, we used the following values when configuring our database cluster:

- Vendor: Percona XtraDB Cluster

- Infrastructure: none/on-premises

- Operating System: Debian 6.0.5+

- Number of Galera Servers: 3+1

- OS user: root

- ClusterControl Server: 192.168.197.112

- Database Servers: 192.168.197.101 192.168.197.102 192.168.197.103

At the end of the wizard, a deployment package will be generated and emailed to you.

- Log in to LB2, download the deployment package and run deploy.sh:

$ wget https://severalnines.com/galera-configurator/tmp/f43ssh1mmth37o1nv8vf58jdg6/s9s-galera-percona-2.4.0.tar.gz

$ tar xvfz s9s-galera-percona-2.4.0.tar.gz

$ cd s9s-galera-percona-2.4.0/mysql/scripts/install

$ bash ./deploy.sh 2>&1 | tee cc.log

- The deployment takes about 15 minutes, and once it is completed, note your API key. Use it to register the cluster with the ClusterControl UI by going to http://192.168.197.112/cmonapi. You will now see your Percona XtraDB Cluster in the UI.
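Besides the ClusterControl UI, the cluster can also be checked from the command line. Galera exposes a wsrep_cluster_size status variable, which should report 3 for the three database nodes in this setup. The sketch below only parses the client output; the helper name cluster_size is hypothetical, and the example assumes the mysql client and working credentials on the node where you run it.

```shell
# Sketch: extract the value of wsrep_cluster_size from tab-separated
# "name<TAB>value" rows, which is the shape printed by `mysql -N -B`.
# (cluster_size is a hypothetical helper name.)
cluster_size() {
    awk -F '\t' '$1 == "wsrep_cluster_size" { print $2 }'
}

# Example, run on one of the database nodes (credentials are an assumption):
#   mysql -u root -p -N -B \
#         -e "SHOW GLOBAL STATUS LIKE 'wsrep_cluster_size'" | cluster_size
```

A value lower than the number of database nodes would suggest that a node has not joined the cluster yet.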

Configure GlusterFS

** The following steps should be performed on NODE1, NODE2 and NODE3

- Update the package index and install the GlusterFS server package:

$ apt-get update && apt-get install -y glusterfs-server

- Create a directory which will be exported through GlusterFS:

$ mkdir /storage

- Make corresponding changes to the GlusterFS server configuration file:

# /etc/glusterfs/glusterfsd.vol
volume posix
  type storage/posix
  option directory /storage
end-volume

volume locks
  type features/posix-locks
  subvolumes posix
end-volume

volume brick
  type performance/io-threads
  option thread-count 8
  subvolumes locks
end-volume

volume server
  type protocol/server
  option transport-type tcp
  subvolumes brick
  option auth.addr.brick.allow *
end-volume

- Restart GlusterFS server service:

$ service glusterfs-server restart

- Make corresponding changes to the GlusterFS client configuration file:

# /etc/glusterfs/glusterfs.vol
volume node1
  type protocol/client
  option transport-type tcp
  option remote-host NODE1
  option remote-subvolume brick
end-volume

volume node2
  type protocol/client
  option transport-type tcp
  option remote-host NODE2
  option remote-subvolume brick
end-volume

volume node3
  type protocol/client
  option transport-type tcp
  option remote-host NODE3
  option remote-subvolume brick
end-volume

volume replicated_storage
  type cluster/replicate
  subvolumes node1 node2 node3
end-volume

- Create a new directory for the WordPress blog content:

$ mkdir -p /var/www/blog

- Add the following line to /etc/fstab so the volume is mounted automatically:

/etc/glusterfs/glusterfs.vol /var/www/blog glusterfs defaults 0 0

- Mount the GlusterFS volume at /var/www/blog:

$ mount -a
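After mounting, it can be worth confirming on each node that /var/www/blog is really backed by GlusterFS and not just an empty local directory. The sketch below reads the kernel mount table. It assumes the filesystem type ends in "glusterfs" (FUSE mounts are typically reported as fuse.glusterfs), and the helper name is_gluster_mount is hypothetical.

```shell
# Sketch: succeed if MOUNTPOINT is listed with a GlusterFS filesystem type.
# Usage: is_gluster_mount MOUNTPOINT [MOUNTS_FILE]
# (is_gluster_mount is a hypothetical helper name.)
is_gluster_mount() {
    # Mount table columns: device, mount point, fs type, options, dump, pass
    awk -v mp="$1" '
        $2 == mp && $3 ~ /glusterfs$/ { found = 1 }
        END { exit !found }
    ' "${2:-/proc/mounts}"
}

# Example:
#   is_gluster_mount /var/www/blog && echo "GlusterFS is mounted"
```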

Configure Apache and PHP

** The following steps should be performed on NODE1, except step #1, which needs to be performed on NODE2 and NODE3 as well.

- Install required packages using package manager:

$ apt-get update && apt-get install -y apache2 php5 php5-common libapache2-mod-php5 php5-xmlrpc php5-gd php5-mysql

- Download and extract WordPress:

$ wget http://wordpress.org/latest.tar.gz

$ tar -xzf latest.tar.gz

- Copy the WordPress content into /var/www/blog directory:

$ cp -Rf wordpress/* /var/www/blog/

- Create the uploads directory and assign permissions:

$ mkdir /var/www/blog/wp-content/uploads

$ chmod 777 /var/www/blog/wp-content/uploads
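Because the WordPress files are copied on NODE1 only, GlusterFS is expected to make them appear on NODE2 and NODE3 as well. A quick way to check is to look for a few well-known files on the other nodes. This is a minimal sketch: check_wp_tree is a hypothetical helper, and the short list of files it looks for is just a sample of what the WordPress archive normally contains.

```shell
# Sketch: report what is missing from a WordPress document root.
# Usage: check_wp_tree DOCROOT   (prints nothing when everything is in place)
# (check_wp_tree is a hypothetical helper name.)
check_wp_tree() (
    root=$1
    for f in index.php wp-login.php wp-config-sample.php; do
        [ -f "$root/$f" ] || echo "missing file: $f"
    done
    [ -d "$root/wp-content/uploads" ] || echo "missing directory: wp-content/uploads"
)

# Example, run on NODE2 or NODE3:
#   check_wp_tree /var/www/blog
```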

Load Balancers and Failover

** The following steps should be performed on LB2

- We have built scripts to install HAProxy and Keepalived; these can be obtained from our Git repository. For more detailed information about setting up HAProxy, check out this post. Install git and clone the repo:

$ apt-get install -y git

$ git clone https://github.com/severalnines/s9s-admin.git

- Before we start to deploy, make sure LB1 is accessible using passwordless SSH. Copy the SSH keys to LB1:

$ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.111

- Since HAProxy and ClusterControl are co-located on the same server, we need to change the Apache default port to another port, for example port 8080. ClusterControl will run on port 8080 while HAProxy will take over port 80 to perform web load balancing. Open the Apache configuration file at /etc/apache2/ports.conf and make the following changes:

NameVirtualHost *:8080

Listen 8080

- Configure the default site to use the new port:

$ vim /etc/apache2/sites-enabled/000-default

Change the VirtualHost directive at the top of the file from *:80 to *:8080.

- Restart Apache to apply the changes:

$ service apache2 restart

** Take note that the ClusterControl address has changed to port 8080, https://192.168.197.112:8080/clustercontrol.

- Install HAProxy on both nodes:

$ cd s9s-admin/cluster/

$ ./s9s_haproxy --install -i 1 -h 192.168.197.111

$ ./s9s_haproxy --install -i 1 -h 192.168.197.112

- Install Keepalived on LB1 (master) and LB2 (backup) with 192.168.197.100 as virtual IP:

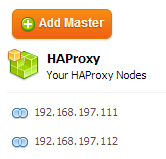

$ ./s9s_haproxy --install-keepalived -i 1 -x 192.168.197.111 -y 192.168.197.112 -v 192.168.197.100

- The 2 load balancer nodes have now been installed, and are integrated with ClusterControl. You can verify this by checking out the Nodes tab in the ClusterControl UI under the HAProxy section.
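Keepalived should also have brought up the virtual IP on the master load balancer. One way to check which node currently holds it is to look for the address in the output of ip. The sketch below assumes the one-line output format of `ip -o -4 addr show`; the helper name has_address is hypothetical.

```shell
# Sketch: succeed if ADDRESS appears as an IPv4 address in
# `ip -o -4 addr show` output read from stdin.
# (has_address is a hypothetical helper name.)
has_address() {
    # In the one-line format, field 4 is ADDRESS/PREFIX.
    awk -v want="$1" '
        { split($4, a, "/"); if (a[1] == want) found = 1 }
        END { exit !found }
    '
}

# Example, run on LB1 and LB2:
#   ip -o -4 addr show | has_address 192.168.197.100 && echo "this node holds the virtual IP"
```

Under normal operation only LB1 would be expected to report the virtual IP; LB2 should pick it up if LB1 goes down.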

Configure HAProxy for Apache Load Balancing

** The following steps should be performed on LB1 and LB2

- By default, our script will configure the MySQL reverse proxy service to listen on port 33306. We will need to add a few more lines to tell HAProxy to load balance our web server farm as well. Add the following lines to /etc/haproxy/haproxy.cfg:

frontend http-in
  bind *:80
  default_backend web_farm

backend web_farm
  server NODE1 192.168.197.101:80 maxconn 32 check
  server NODE2 192.168.197.102:80 maxconn 32 check
  server NODE3 192.168.197.103:80 maxconn 32 check

- Restart HAProxy service:

$ killall haproxy

$ /usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -p /var/run/haproxy.pid -st `cat /var/run/haproxy.pid`
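To double-check what HAProxy will balance across, the server lines of a backend can be listed straight from the configuration file. This is a rough sketch rather than a full parser: backend_servers is a hypothetical helper, and it only recognizes the common section keywords (global, defaults, frontend, backend, listen).

```shell
# Sketch: print "name address" for every server line in one backend.
# Usage: backend_servers BACKEND [CONFIG_FILE]
# (backend_servers is a hypothetical helper name.)
backend_servers() {
    awk -v want="$1" '
        # A section keyword ends the previous section and may start ours.
        $1 ~ /^(global|defaults|frontend|backend|listen)$/ {
            inside = ($1 == "backend" && $2 == want)
            next
        }
        inside && $1 == "server" { print $2, $3 }
    ' "${2:-/etc/haproxy/haproxy.cfg}"
}

# Example:
#   backend_servers web_farm
# HAProxy can also check the file syntax itself before a restart:
#   haproxy -c -f /etc/haproxy/haproxy.cfg
```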

Install WordPress

** The following steps should be performed from a Web browser.

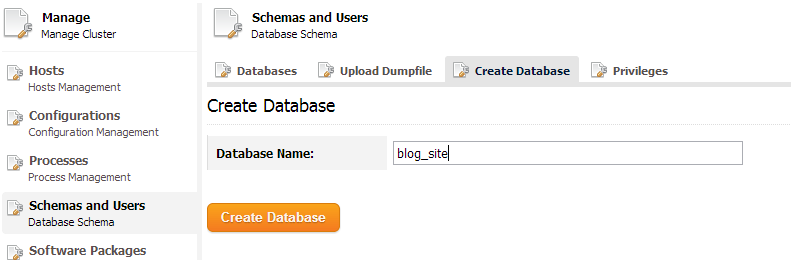

- Now that we have a load-balanced setup that is ready to support WordPress, we can create the WordPress database. From the ClusterControl UI, go to Manage > Schema and Users > Create Database to create the database:

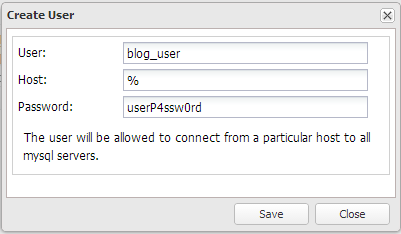

- Create the database user under the Privileges tab:

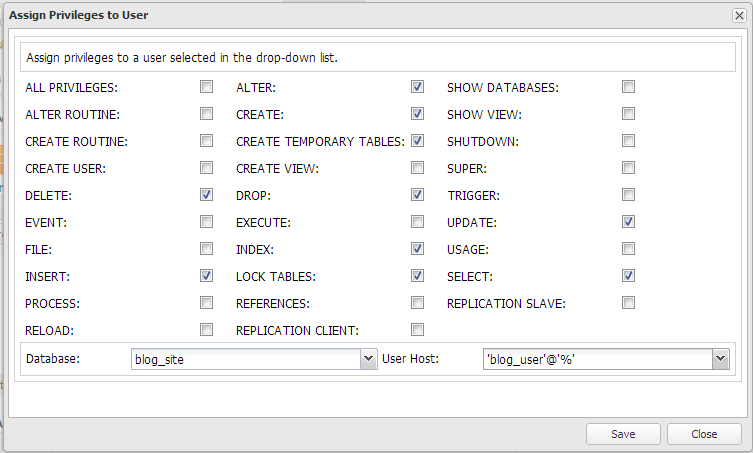

- Assign the correct privileges for blog_user on database blog_site:

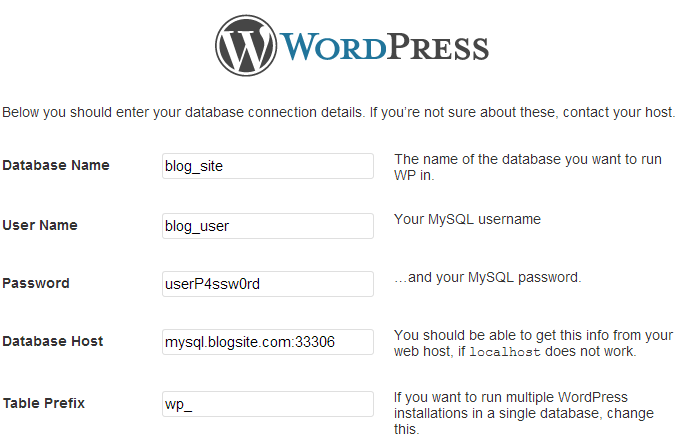

- Once done, navigate to the WordPress URL through the virtual IP at http://192.168.197.100/blog and follow the installation wizard. When prompted for the database credentials, use the database and user created in the previous steps.

- Click Submit and proceed with the rest of the wizard. WordPress will then be ready and accessible through the virtual IP at http://192.168.197.100/blog.
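Going by the earlier steps, the database settings for the wizard are most likely the database blog_site, the user blog_user, and a database host that points at the load balancer: the name mysql.blogsite.com from /etc/hosts together with HAProxy's MySQL port 33306. That combination is an assumption pieced together from this post, not a confirmed value. WordPress accepts the host and port as a single "host:port" string, which the small sketch below builds (wp_db_host is a hypothetical helper name).

```shell
# Sketch: build the "host:port" string that WordPress accepts as its
# database host. (wp_db_host is a hypothetical helper name.)
wp_db_host() {
    printf '%s:%s\n' "$1" "$2"
}

# Example values, assumed from the earlier steps of this post:
#   wp_db_host mysql.blogsite.com 33306
# The same path can be tried by hand with the mysql client, if installed:
#   mysql -h mysql.blogsite.com -P 33306 -u blog_user -p blog_site -e 'SELECT 1'
```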

Verify the New Architecture

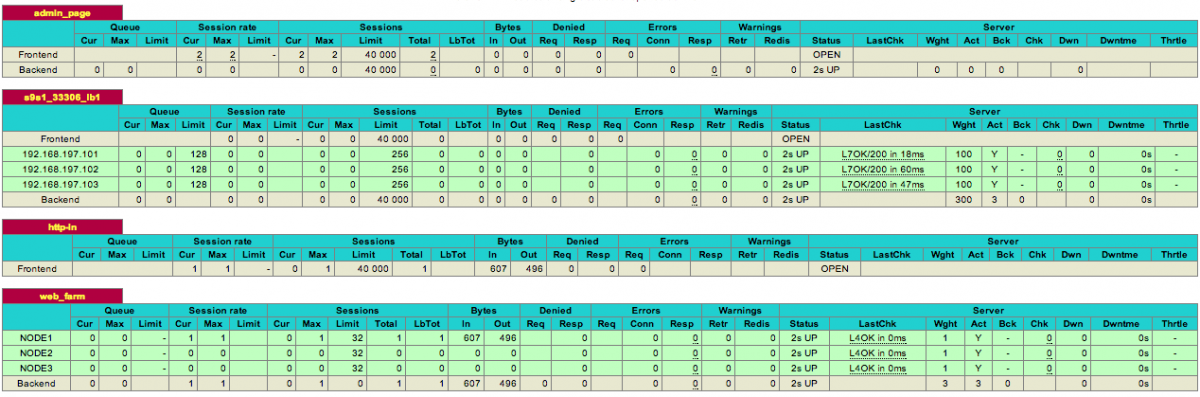

- Check the HAProxy statistics by logging into the HAProxy admin page on LB1, port 9600. The default username/password is admin/admin. You should see some bytes in and out on the web_farm and s9s_33306_production sections:

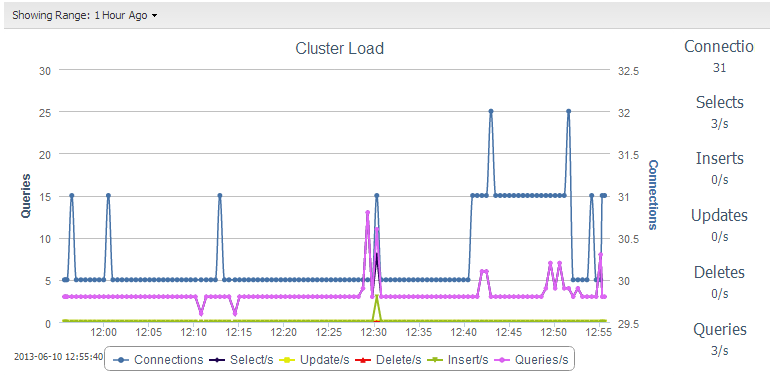

- Check and observe the traffic on your database cluster from the ClusterControl overview page at https://192.168.197.112:8080/clustercontrol.

- Create a new article and upload a new image. Make sure the image file exists on all web servers.
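The last check can be scripted from any machine that has SSH access to the web servers. The sketch below prints the hosts on which a given path does not exist. The helper name missing_on_hosts and the REMOTE_SH override are hypothetical, the upload path in the example is made up, and paths containing spaces are not handled.

```shell
# Sketch: print every HOST on which PATH does not exist.
# Usage: missing_on_hosts PATH HOST...
# REMOTE_SH (default: ssh) is the command used to reach each host.
# (missing_on_hosts and REMOTE_SH are hypothetical names.)
missing_on_hosts() (
    path=$1
    shift
    for host in "$@"; do
        "${REMOTE_SH:-ssh}" "$host" test -e "$path" || echo "$host"
    done
)

# Example (the file name is only an illustration):
#   missing_on_hosts /var/www/blog/wp-content/uploads/photo.jpg NODE1 NODE2 NODE3
```

No output means the file is visible on every web server, which is what GlusterFS replication should give you.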

Congratulations, you have now deployed a scalable WordPress setup with clustering at both the web and database layers.