
Active-Active Alfresco Cluster with MySQL Galera and GlusterFS

Alfresco is a popular open-source collaboration tool. It is Java-based and includes a content repository, a web application framework and a web content management system. For critical, large-scale deployments that require 24/7 uptime, a multi-node cluster is appropriate. Since Alfresco depends on external components such as the database and the filesystem, clustering only the Alfresco instances is not enough.

In this post, we show you how to deploy an active-active Alfresco cluster with MySQL Galera Cluster (database), GlusterFS (filesystem) and HAProxy with Keepalived (load balancer), so that every required system component is redundant.

Please note that clustering of Alfresco instances is only available in Alfresco Enterprise, which uses Hazelcast to provide multicast messaging between the web-tier nodes. This post uses the Community edition, so changes such as site or user dashboard layouts made on one Alfresco node are not replicated to the other nodes. The content repository, however, works fine in the Community edition, because the content files live on the same GlusterFS volume and the content metadata is replicated by Galera.

Architecture

We will have a three-node Galera cluster, with each Galera node co-located with an Alfresco instance. Two other nodes, fs1 and fs2, will provide replicated file storage for Alfresco content using GlusterFS. These two nodes will also run HAProxy and Keepalived for highly available load balancing. ClusterControl will be hosted on fs2 to monitor the Galera nodes. We will be using Alfresco 4.2 (Community edition), and all hosts run Debian 7.2 Wheezy 64-bit. All commands shown below are executed as root.

Our host definitions in /etc/hosts on all nodes:

192.168.197.130 virtual_ip
192.168.197.131 alfresco1 galera1
192.168.197.132 alfresco2 galera2
192.168.197.133 alfresco3 galera3
192.168.197.134 fs1 haproxy1
192.168.197.135 fs2 haproxy2 clustercontrol
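Since the same entries must exist on every node, a small idempotent helper avoids duplicate lines when the step is repeated. This is a sketch under assumptions: `add_cluster_hosts` is a hypothetical name, and it takes the target file as an argument so it can be tried on a scratch copy before pointing it at /etc/hosts.

```bash
#!/bin/bash
# Hypothetical helper: append the cluster's host entries to the given hosts
# file, skipping any entry that is already present as an exact line.
add_cluster_hosts() {
    local hosts_file="$1" entry
    while IFS= read -r entry; do
        # -x matches the whole line, -F treats the dots literally
        grep -qxF "$entry" "$hosts_file" 2>/dev/null ||
            printf '%s\n' "$entry" >> "$hosts_file"
    done <<'EOF'
192.168.197.130 virtual_ip
192.168.197.131 alfresco1 galera1
192.168.197.132 alfresco2 galera2
192.168.197.133 alfresco3 galera3
192.168.197.134 fs1 haproxy1
192.168.197.135 fs2 haproxy2 clustercontrol
EOF
}
```

For example, run `add_cluster_hosts /etc/hosts` as root on each node, then spot-check name resolution with `getent hosts virtual_ip fs2`.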

Deploying MySQL Galera Cluster, HAProxy and Keepalived (Virtual IP)

1. Use the Galera Configurator to deploy a three-node Galera Cluster, with galera1, galera2 and galera3 as the MySQL nodes and fs2 (192.168.197.135) as the ClusterControl node. Once deployed, set up passwordless SSH from the ClusterControl node to fs1 so that ClusterControl can provision it:

$ ssh-copy-id -i ~/.ssh/id_rsa 192.168.197.134

2. Deploy HAProxy on fs1 and fs2 using the Add Load Balancer wizard.

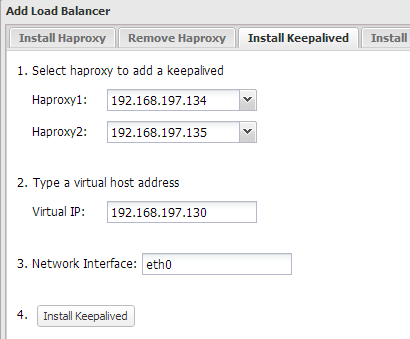

3. Install Keepalived and configure a virtual IP (192.168.197.130) for HAProxy failover, as in the screenshot below:

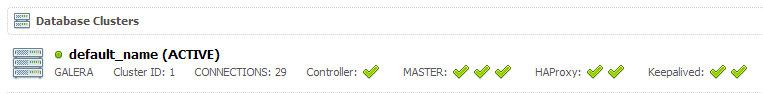

At the end of the deployment, check the summary bar at the top of the ClusterControl UI to confirm that the Galera nodes, HAProxy and Keepalived are all reported.

4. Configure HAproxy to serve Alfresco on port 8080 by adding the following lines into /etc/haproxy/haproxy.cfg on fs1 and fs2:

listen alfresco_8080
    bind *:8080
    mode tcp
    option httpchk GET /share
    balance source
    server alfresco1 192.168.197.131:8080 check inter 5000
    server alfresco2 192.168.197.132:8080 check inter 5000
    server alfresco3 192.168.197.133:8080 check inter 5000
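To keep the listener identical on fs1 and fs2 and make sure every Alfresco node is included, you could generate the section instead of typing it. A sketch: `render_alfresco_listen` is a hypothetical helper that takes `name:ip` pairs and prints the same directives as the listener block above, assuming every back end listens on port 8080.

```bash
#!/bin/bash
# Hypothetical helper: print the HAProxy "listen" section for Alfresco with
# one "server" line per name:ip argument (all back ends listen on 8080).
render_alfresco_listen() {
    local node
    printf '%s\n' \
        'listen alfresco_8080' \
        '    bind *:8080' \
        '    mode tcp' \
        '    option httpchk GET /share' \
        '    balance source'
    for node in "$@"; do
        # ${node%%:*} keeps the part before the colon, ${node#*:} the part after
        printf '    server %s %s:8080 check inter 5000\n' "${node%%:*}" "${node#*:}"
    done
}
```

For example, append the output with `render_alfresco_listen alfresco1:192.168.197.131 alfresco2:192.168.197.132 alfresco3:192.168.197.133 >> /etc/haproxy/haproxy.cfg`, then check the syntax with `haproxy -c -f /etc/haproxy/haproxy.cfg` before restarting.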

Check the configuration, then restart HAProxy so that the new process takes over from the old one:

$ /usr/sbin/haproxy -c -f /etc/haproxy/haproxy.cfg && /usr/sbin/haproxy -f /etc/haproxy/haproxy.cfg -p /var/run/haproxy.pid -st $(cat /var/run/haproxy.pid)

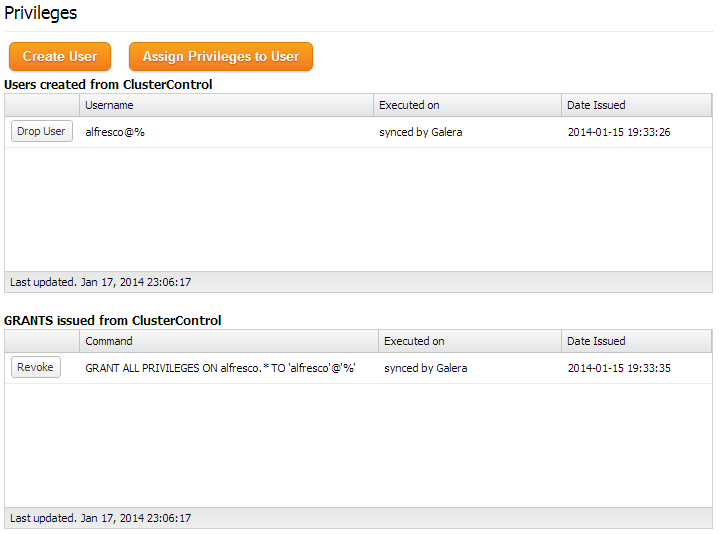

5. Create a schema for Alfresco using Manage >> Schema and Users: create a database called “alfresco”, then grant all privileges on it to the Alfresco database user with a wildcard host (‘%’).
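If you prefer the command line, roughly equivalent SQL can be run on any one Galera node, since Galera replicates DDL and GRANT statements to the other members. A sketch under assumptions: `alfresco_schema_sql` is a hypothetical helper, the user name and password are placeholders for the credentials you will give the Alfresco installer, the utf8 character set is our choice rather than something the ClusterControl wizard is known to set, and `GRANT ... IDENTIFIED BY` assumes the MySQL 5.5/5.6-era syntax that Galera used at the time.

```bash
#!/bin/bash
# Hypothetical helper: print SQL that creates the "alfresco" schema and grants
# a user full access from any host ('%'). User and password are placeholders,
# and the password is inserted unescaped, so avoid single quotes in it.
alfresco_schema_sql() {
    local db_user="$1" db_pass="$2"
    cat <<EOF
CREATE DATABASE IF NOT EXISTS alfresco DEFAULT CHARACTER SET utf8;
GRANT ALL PRIVILEGES ON alfresco.* TO '${db_user}'@'%' IDENTIFIED BY '${db_pass}';
EOF
}
```

For example: `alfresco_schema_sql alfresco 'changeme' | mysql -h galera1 -u root -p`.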

At this point, MySQL Galera Cluster is load-balanced behind the virtual IP 192.168.197.130 on port 33306.

Configuring GlusterFS

The following steps should be performed on fs1 and fs2 unless specified otherwise.

1. Install GlusterFS server:

$ apt-get install glusterfs-server -y

2. Create a directory for the Gluster brick:

$ mkdir /brick

3. On fs1, probe fs2 as a peer:

$ gluster peer probe fs2

Then, on fs2, probe fs1 as a peer:

$ gluster peer probe fs1
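Before creating the volume, it is worth confirming that the probe succeeded. A sketch, assuming the `State: Peer in Cluster (Connected)` wording that `gluster peer status` prints for a healthy peer; `peers_connected` is a hypothetical helper.

```bash
#!/bin/bash
# Hypothetical helper: read "gluster peer status" output on stdin and succeed
# if at least the expected number of peers (default 1) are connected.
peers_connected() {
    local expected="${1:-1}" count
    # grep -c prints 0 (and exits non-zero) when nothing matches
    count=$(grep -cF 'State: Peer in Cluster (Connected)')
    [ "$count" -ge "$expected" ]
}
```

For example, on either node: `gluster peer status | peers_connected 1 && echo 'peer OK'`.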

4. On fs1 only, create a volume named “datastore” that replicates data across the bricks on both hosts:

$ gluster volume create datastore replica 2 transport tcp fs1:/brick fs2:/brick
Creation of volume datastore has been successful. Please start the volume to access data.

5. On fs1, start the datastore volume:

$ gluster volume start datastore

6. Verify that the volume is ready:

$ gluster volume info
Volume Name: datastore
Type: Replicate
Status: Started
Number of Bricks: 2
Transport-type: tcp
Bricks:
Brick1: fs1:/brick
Brick2: fs2:/brick
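For scripted health checks, the same output can be tested automatically. A sketch: `check_datastore` is a hypothetical helper that looks for the `Type: Replicate` and `Status: Started` lines shown in the volume info output; other GlusterFS releases may format `gluster volume info` differently.

```bash
#!/bin/bash
# Hypothetical helper: read "gluster volume info" output on stdin and succeed
# only if it reports a replicated volume that has been started.
check_datastore() {
    awk '/^Type: Replicate/ { replicated = 1 }
         /^Status: Started/ { started = 1 }
         END { exit !(replicated && started) }'
}
```

For example: `gluster volume info datastore | check_datastore && echo 'datastore is replicated and started'`.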

Mounting GlusterFS

The following steps should be performed on all Alfresco nodes.

1. Install GlusterFS client:

$ apt-get install glusterfs-client -y

2. Create the mount point:

$ mkdir /storage

3. Add the following line to /etc/fstab:

virtual_ip:/datastore /storage glusterfs defaults,_netdev 0 0

4. Mount the GlusterFS volume newly defined in /etc/fstab:

$ mount -a

5. Verify that the partition is mounted correctly:

$ mount | grep /storage
virtual_ip:/datastore on /storage type fuse.glusterfs (rw,relatime,user_id=0,group_id=0,default_permissions,allow_other,max_read=131072)
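If the mount is missing, /storage is just an empty local directory, so a guard before starting Alfresco can catch that early. A sketch: `storage_is_gluster` is a hypothetical helper that reads /proc/mounts, where the GlusterFS mount appears with the filesystem type `fuse.glusterfs`.

```bash
#!/bin/bash
# Hypothetical helper: succeed only if /storage is mounted with the FUSE
# GlusterFS type. Reads /proc/mounts unless another file is given.
storage_is_gluster() {
    local mounts="${1:-/proc/mounts}"
    # /proc/mounts fields: device, mount point, type, options, dump, pass
    awk '$2 == "/storage" && $3 == "fuse.glusterfs" { found = 1 }
         END { exit !found }' "$mounts"
}
```

For example, at the top of a start-up wrapper: `storage_is_gluster || { echo '/storage is not a GlusterFS mount' >&2; exit 1; }`.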

Installing Alfresco

The following steps should be performed on all Alfresco nodes unless specified otherwise.

1. Install packages required by Alfresco:

$ apt-get install -y openssh-server apache2 xvfb xfonts-base openoffice.org ghostscript imagemagick

2. Download the Alfresco Community installer from http://www.alfresco.com/products/community:

$ wget --content-disposition http://www2.alfresco.com/l/1234/2013-10-22/3dntj7

3. Make the installer executable:

$ chmod +x alfresco-community-4.2.e-installer-linux-x64.bin

4. Launch the installer:

$ ./alfresco-community-4.2.e-installer-linux-x64.bin --mode text

Follow the installation wizard. These are the non-default answers we used during the installation:

[1] Easy - Installs servers with the default configuration
[2] Advanced - Configures server ports and service properties.: Also choose optional components to install.
Please choose an option [1] : 2
Java [Y/n] : Y
PostgreSQL [Y/n] :n
Alfresco : Y (Cannot be edited)
SharePoint [Y/n] :Y
Web Quick Start [y/N] : y
Google Docs Integration [Y/n] :Y
LibreOffice [Y/n] :Y
JDBC URL: [jdbc:postgresql://localhost/alfresco]: jdbc:mysql://virtual_ip:33306/alfresco
JDBC Driver: [org.postgresql.Driver]: org.gjt.mm.mysql.Driver
Web Server domain: [127.0.0.1]: 0.0.0.0
Launch Alfresco Community Share [Y/n]: n

Make sure Alfresco is not started when the installation completes, because we first need to apply the custom configuration described in the next section.

Configuring Alfresco

The following steps should be performed on all Alfresco nodes unless specified otherwise.

1. Create a symlink to simplify the Alfresco path:

$ ln -s /opt/alfresco-4.2.e /opt/alfresco

2. Download MySQL Connector/J from the MySQL Connector download site and copy the JAR into Tomcat's library directory:

$ wget http://dev.mysql.com/get/Downloads/Connector-J/mysql-connector-java-5.1.28.tar.gz
$ tar -xzf mysql-connector-java-5.1.28.tar.gz
$ cd mysql-connector-java-5.1.28
$ cp mysql-connector-java-5.1.28-bin.jar /opt/alfresco/tomcat/lib/

3. On alfresco1 only, copy the /opt/alfresco/alf_data directory to /storage:

$ cp -pfR /opt/alfresco/alf_data /storage/

4. Change the Alfresco root directory (dir.root) in /opt/alfresco/tomcat/shared/classes/alfresco-global.properties to the copied data directory on the GlusterFS mount:

dir.root=/storage/alf_data
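The installer normally writes its own `dir.root` line into alfresco-global.properties, so this is usually a replacement rather than an addition, and it has to be made on every Alfresco node. A sketch: `set_dir_root` is a hypothetical helper that rewrites an existing `dir.root` line or appends one if none is present (it relies on GNU `sed -i`, as shipped with Debian).

```bash
#!/bin/bash
# Hypothetical helper: set dir.root in an Alfresco properties file, replacing
# an existing dir.root line or appending one if there is none.
set_dir_root() {
    local props="$1" root="$2"
    if grep -q '^dir\.root=' "$props"; then
        # "|" is the sed delimiter, so the path must not contain "|"
        sed -i "s|^dir\.root=.*|dir.root=${root}|" "$props"
    else
        printf 'dir.root=%s\n' "$root" >> "$props"
    fi
}
```

For example: `set_dir_root /opt/alfresco/tomcat/shared/classes/alfresco-global.properties /storage/alf_data`.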

5. Start the Alfresco service:

$ service alfresco start

Wait until the bootstrapping process completes. You can monitor its progress in the Tomcat log file, /opt/alfresco/tomcat/logs/catalina.out.

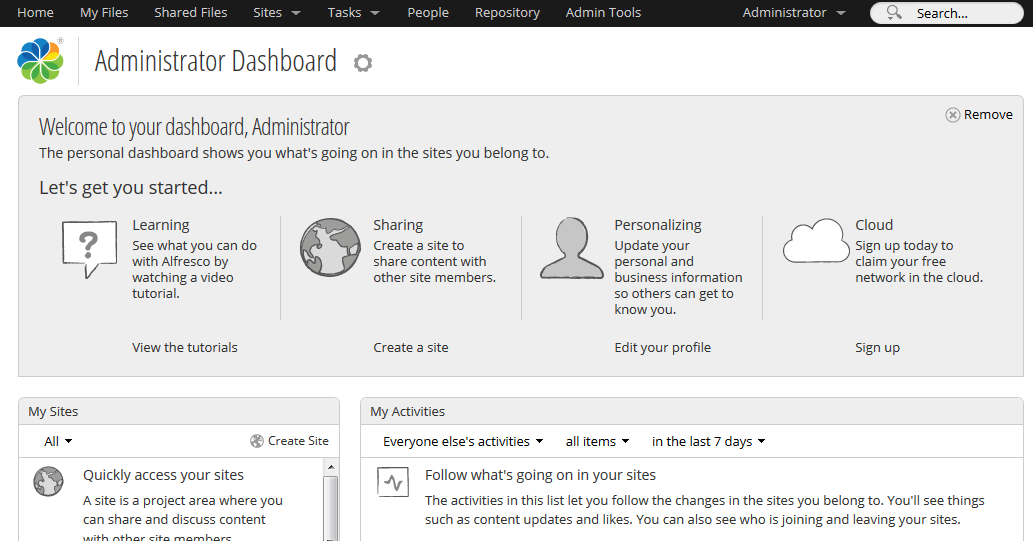
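To script the wait instead of watching the log by hand, you can poll catalina.out for Tomcat's `Server startup in` message. A sketch: `wait_for_tomcat` is a hypothetical helper, and because catalina.out is appended across restarts, a message from an earlier run would also satisfy it, so it is most reliable on a fresh log.

```bash
#!/bin/bash
# Hypothetical helper: poll a Tomcat log until it contains "Server startup in",
# checking every 5 seconds. Returns 0 once found, 1 after the timeout (seconds).
wait_for_tomcat() {
    local log="$1" timeout="${2:-600}" waited=0
    until grep -q 'Server startup in' "$log" 2>/dev/null; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1
        fi
        sleep 5
        waited=$((waited + 5))
    done
    return 0
}
```

For example: `wait_for_tomcat /opt/alfresco/tomcat/logs/catalina.out 900 || echo 'Alfresco did not finish starting within 15 minutes'`.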

Open the Alfresco Share page at http://192.168.197.130:8080/share and log in with the default username “admin” and the password you specified during installation. You should see an Alfresco dashboard similar to the screenshot below:

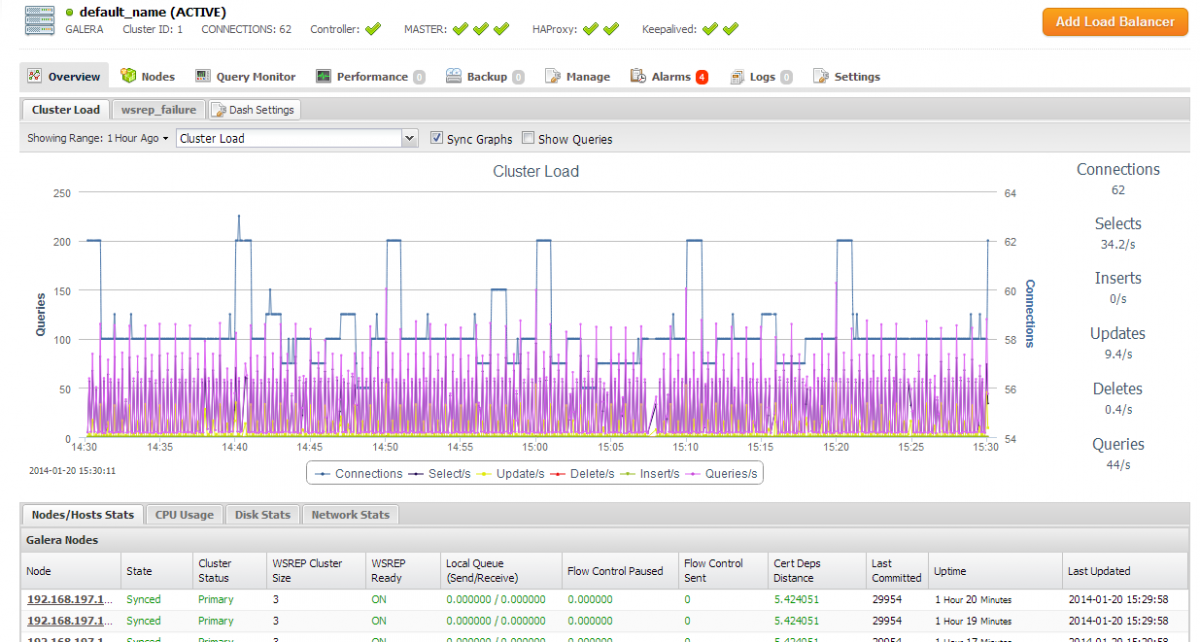

You should also see some activity on your database cluster in the ClusterControl dashboard.
