
An Overview of the Percona MongoDB Kubernetes Operator

MongoDB and Kubernetes make a great combination, especially when it comes to managing complexity. Percona Server for MongoDB (PSMDB) adds more flexibility to the NoSQL database and comes with tools that suit today’s productivity needs, not only on-prem but also in cloud-native environments.

The adoption rate of Kubernetes is steadily increasing, so it is reasonable for a technology to have an operator that handles the creation, modification, and deletion of items in a Percona Server for MongoDB environment. The Percona MongoDB Kubernetes Operator contains the necessary k8s settings to maintain a consistent Percona Server for MongoDB instance. As an alternative, you might compare it to KubeDB (https://github.com/kubedb/), but KubeDB for MongoDB offers very limited options, especially for production-grade systems.

The Percona Kubernetes Operator’s configuration is based on, and follows, the best practices for configuring a PSMDB replica set. The operator provides many benefits, but the most important ones are saving time and maintaining a consistent environment. In this blog, we’ll give an overview of why this is beneficial, especially in a containerized environment.

What Can This Operator Offer?

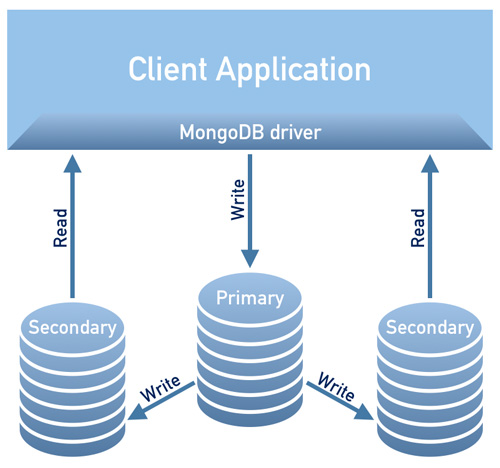

This operator is useful for PSMDB using a replica set. This means that, your database design architecture has to conform with the following diagram below

Image borrowed from Percona’s Documentation Design Overview

Currently, the supported platforms available for this operator are:

- OpenShift 3.11

- OpenShift 4.5

- Google Kubernetes Engine (GKE) 1.15 – 1.17

- Amazon Elastic Container Service for Kubernetes (EKS) 1.15

- Minikube 1.10

- VMware Tanzu

Other Kubernetes platforms may also work but have not been tested.

Resource Limits

A cluster running an officially supported platform contains at least three Nodes, with the following resources:

- 2GB of RAM

- 2 CPU threads per Node for Pods provisioning

- at least 60GB of available storage for Persistent Volumes provisioning

Security and Restriction Constraints

When Kubernetes creates Pods, each Pod gets an IP address in the cluster’s internal virtual network. Since creating and destroying Pods are dynamic processes, it’s not advisable to bind your Pods to specific IP addresses for communication between Pods. This can cause problems as things change over time due to cluster scaling, inadvertent mistakes, data center outages or disasters, periodic maintenance, and so on. For that reason, the operator strongly recommends connecting to Percona Server for MongoDB via Kubernetes internal DNS names in the URI (e.g. mongodb+srv://userAdmin:userAdmin123456@...).
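As a minimal sketch of DNS-based addressing, the shell functions below assemble the replica set’s SRV URI and a per-Pod host from the cluster name, replica set name, and namespace. The naming pattern (`<cluster>-<replset>.<namespace>.svc.cluster.local`, with an extra `-<ordinal>` label for an individual Pod) is inferred from the hostnames used in the connection examples later in this walkthrough; the function names are hypothetical and not part of the operator.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: build connection strings from Kubernetes DNS names
# instead of Pod IPs. The hostname pattern mirrors the examples in this blog.

# psmdb_srv_uri CLUSTER REPLSET NAMESPACE USER PASSWORD
# Prints a mongodb+srv:// URI that points at the replica set's Service name,
# so Pod IP changes do not break clients.
psmdb_srv_uri() {
  local cluster=$1 rs=$2 ns=$3 user=$4 pass=$5
  printf 'mongodb+srv://%s:%s@%s-%s.%s.svc.cluster.local/admin?replicaSet=%s&ssl=false\n' \
    "$user" "$pass" "$cluster" "$rs" "$ns" "$rs"
}

# psmdb_pod_host CLUSTER REPLSET NAMESPACE ORDINAL
# Prints host:port for a single replica set member (one StatefulSet Pod).
psmdb_pod_host() {
  local cluster=$1 rs=$2 ns=$3 ordinal=$4
  printf '%s-%s-%s.%s-%s.%s.svc.cluster.local:27017\n' \
    "$cluster" "$rs" "$ordinal" "$cluster" "$rs" "$ns"
}
```

For example, `psmdb_srv_uri mongodb-cluster-s9s rs0 default userAdmin 'secret'` prints a URI for the cluster used in this blog. Passwords containing characters such as `@` or `:` would need URL-encoding first, which this sketch does not do.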

This PSMDB Kubernetes Operator also uses affinity/anti-affinity, which constrains the nodes on which your Pods can be scheduled to run. Affinity defines which Pods are eligible to be scheduled on a node that already has Pods with specific labels; anti-affinity defines which Pods are not eligible. This approach can reduce costs by ensuring that several Pods with intensive data exchange occupy the same availability zone or even the same node or, on the contrary, spread the Pods across different nodes or even different availability zones for high availability and balancing purposes. Although the operator encourages you to set affinity/anti-affinity, this has limitations when using Minikube.
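As a rough illustration, the operator exposes this setting per replica set in deploy/cr.yaml. The fragment below is a sketch based on the operator’s example configuration; the accepted topology keys may differ between operator versions, so treat the commented alternatives as assumptions to verify.

```yaml
spec:
  replsets:
  - name: rs0
    size: 3
    affinity:
      # Spread replica set members across nodes (at most one member per hostname).
      antiAffinityTopologyKey: "kubernetes.io/hostname"
      # Alternatives (verify for your operator version):
      #   "failure-domain.beta.kubernetes.io/zone"   spread members across zones
      #   "none"                                     disable anti-affinity
```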

Minikube has the following platform-specific limitation: it doesn’t support multi-node cluster configurations because of its local nature, which collides with the default affinity requirements of the Operator. To work around this, the “Install Percona Server for MongoDB on Minikube” instructions include an additional step that turns off the requirement of having at least three Nodes.

In the following section of this blog, we will set up the PSMDB Kubernetes Operator using Minikube and turn anti-affinity off to make it work. Keep in mind that by changing the anti-affinity setting, you increase the risk to cluster availability. If your main purpose for deploying PSMDB to a containerized environment is to spread it out for higher availability and scalability, this defeats that purpose. Using Minikube on-prem to test your PSMDB setup is doable, but for production workloads you will want to run nodes on separate hosts, or in an environment set up so that concurrent failure of multiple Pods is unlikely.

Data In Transit/Data At Rest

For data security, the operator offers TLS/SSL for data in transit and encryption for data at rest. For data in transit, you can either use cert-manager or generate your own certificates manually. You can also run PSMDB without TLS with this operator if you choose. Check out the operator’s documentation on using TLS.

For data at rest, you need to edit the deploy/cr.yaml file after cloning the PSMDB Kubernetes Operator GitHub repository. To enable encryption, do the following, as suggested by the documentation:

- The security.enableEncryption key should be set to true (the default value).

- The security.encryptionCipherMode key should specify the proper cipher mode for decryption. The value can be one of the following two variants:

  - AES256-CBC (the default for the Operator and Percona Server for MongoDB)

  - AES256-GCM

- The security.encryptionKeySecret key should specify a Secret object with the encryption key:

mongod:
  ...
  security:
    ...
    encryptionKeySecret: my-cluster-name-mongodb-encryption-key

The encryption key Secret will be created automatically if it doesn’t exist. If you would like to create it yourself, take into account that the key must be a 32-character string encoded in base64.
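If you decide to create the key yourself, here is a minimal sketch that follows the requirement above literally: it builds a random 32-character string and base64-encodes it. The helper name is hypothetical, and the Secret data field name in the usage comment (`encryption-key`) is an assumption to confirm against the operator documentation for your version.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a base64-encoded, random 32-character string
# suitable as a self-managed encryption key (per the requirement above).
gen_encryption_key() {
  local raw
  # 16 random bytes printed as hex give exactly 32 characters.
  raw=$(od -An -tx1 -N16 /dev/urandom | tr -d ' \n')
  # Encode the 32-character string; strip any newline base64 may append.
  printf '%s' "$raw" | base64 | tr -d '\n'
}

# Possible usage (assumption: the operator reads the key from a data field
# named "encryption-key"; verify before relying on it):
# kubectl create secret generic my-cluster-name-mongodb-encryption-key \
#   --from-literal=encryption-key="$(gen_encryption_key)"
```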

Storing Sensitive Information

The PSMDB Kubernetes Operator uses Kubernetes Secrets to store and manage sensitive information, such as passwords, OAuth tokens, and SSH keys. Storing confidential information in a Secret is safer and more flexible than putting it verbatim in a Pod definition or in a container image.

For this operator, the users and passwords generated for your Pods are stored in a Secret and can be retrieved with kubectl get secrets.

In my example setup, the following command prints the decoded base64 values:

$ kubectl get secrets mongodb-cluster-s9s-secrets -o yaml | egrep '^\s+MONGODB.*' | cut -d ':' -f2 | xargs -I% sh -c "echo % | base64 -d; echo"

WrDry6bexkCPOY5iQ

backup

gAWBKkmIQsovnImuKyl

clusterAdmin

qHskMMseNqU8DGbo4We

clusterMonitor

TQBEV7rtE15quFl5

userAdmin

As the result shows, each password is followed by its user: the backup user, the cluster admin user, the cluster monitor user, and the user for administrative usage.
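The pipeline above prints bare values, so you have to know that each password comes before its user. As a minimal alternative sketch, the hypothetical function below reads the Secret’s YAML from stdin and prints each MONGODB_* key next to its decoded value, which makes the output self-describing.

```shell
#!/usr/bin/env bash
# Hypothetical helper: decode MONGODB_* entries of a Secret printed with
# `kubectl get secret <name> -o yaml`, reading that YAML from stdin.
decode_psmdb_secret() {
  local key value
  # Keep only indented "MONGODB_...: <base64>" lines from the data section,
  # then split each one on ": " into key and base64 value.
  grep -E '^[[:space:]]+MONGODB_[A-Z_]+:' |
    while IFS=': ' read -r key value; do
      printf '%s=%s\n' "$key" "$(printf '%s' "$value" | base64 -d)"
    done
}
```

Usage against the cluster from this blog would be `kubectl get secrets mongodb-cluster-s9s-secrets -o yaml | decode_psmdb_secret`.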

The PSMDB Kubernetes Operator also stores the AWS S3 access and secret keys in Kubernetes Secrets.

Backups

This operator supports backups, which is a very nifty feature. It supports both on-demand (manual) and scheduled backups, using the Percona Backup for MongoDB tool. Note that backups can only be stored on AWS S3 or S3-compatible storage.

Scheduled backups are defined in the deploy/cr.yaml file, whereas a manual backup can be taken whenever it’s needed. The S3 access and secret keys are defined in the deploy/backup-s3.yaml file, which stores them in a Kubernetes Secret, as mentioned earlier.
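To make the paragraph above more concrete, here is a rough sketch of the two pieces involved: a storage plus a scheduled task in deploy/cr.yaml, and the S3 credentials Secret in deploy/backup-s3.yaml. The field names follow the operator’s example files as we understand them for this release line and may differ in other versions; the storage name, bucket, region, schedule, and Secret name are placeholders.

```yaml
# deploy/cr.yaml (fragment): one S3 storage and one scheduled backup task
spec:
  backup:
    enabled: true
    storages:
      s3-us-west:                        # placeholder storage name
        type: s3
        s3:
          bucket: S3-BACKUP-BUCKET-NAME-HERE
          region: us-west-2
          credentialsSecret: my-cluster-name-backup-s3
    tasks:
    - name: daily-s3-us-west
      enabled: true
      schedule: "0 0 * * *"              # cron syntax: every day at midnight
      storageName: s3-us-west
---
# deploy/backup-s3.yaml: S3 credentials kept in a Kubernetes Secret
apiVersion: v1
kind: Secret
metadata:
  name: my-cluster-name-backup-s3
type: Opaque
data:
  AWS_ACCESS_KEY_ID: <base64-encoded access key>
  AWS_SECRET_ACCESS_KEY: <base64-encoded secret key>
```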

The backup-related actions supported by the PSMDB Kubernetes Operator are the following:

- Making scheduled backups

- Making on-demand backups

- Restoring the cluster from a previously saved backup

- Deleting unneeded backups
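An on-demand backup is requested by creating a backup object that references the cluster and a storage defined in deploy/cr.yaml. The sketch below assumes the PerconaServerMongoDBBackup resource with psmdbCluster and storageName fields, as used by operator releases around v1.5.0 (newer releases may name these fields differently). The names backup1 and s3-us-west are placeholders, while mongodb-cluster-s9s is the cluster name used in this blog.

```yaml
# On-demand backup request (sketch): the operator should pick this up and
# back up the named cluster to the named storage.
apiVersion: psmdb.percona.com/v1
kind: PerconaServerMongoDBBackup
metadata:
  name: backup1
spec:
  psmdbCluster: mongodb-cluster-s9s
  storageName: s3-us-west
```

Saving this to a file and applying it with kubectl apply -f should start the backup; check the exact resource definition in the operator’s documentation for your version first.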

Using PSMDB Kubernetes Operator With Minikube

In this section, we’ll keep things simple by using Kubernetes with Minikube, which you can run on-prem without a cloud provider. For a cloud-native setup, especially for enterprise and production-grade environments, check out the operator’s documentation.

Before we proceed, keep in mind the known limitation mentioned above: Minikube doesn’t support multi-node cluster configurations, which collides with the default affinity requirements of the operator. We’ll cover how to handle this in the steps below.

For this blog, the host OS where our Minikube will be installed is on Ubuntu 18.04 (Bionic Beaver).

Let’s Install Minikube

$ curl -LO https://storage.googleapis.com/minikube/releases/latest/minikube_latest_amd64.deb

$ sudo dpkg -i minikube_latest_amd64.deb

If you are on a different Linux distribution, you can follow the installation steps in the Minikube documentation instead.

Next, add the required key to authenticate the Kubernetes packages and set up the repository:

$ curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -

$ cat <<EOF | sudo tee /etc/apt/sources.list.d/kubernetes.list
deb https://apt.kubernetes.io/ kubernetes-xenial main
deb https://apt.kubernetes.io/ kubernetes-yakkety main
EOF

Now let’s install the required packages:

$ sudo apt-get update

$ sudo apt-get install kubelet kubeadm kubectl

Start Minikube, specifying the memory, the number of CPUs, and the CIDR from which Pod IP addresses will be assigned:

$ minikube start --memory=4096 --cpus=3 --extra-config=kubeadm.pod-network-cidr=192.168.0.0/16

The output looks similar to the following:

minikube v1.14.2 on Ubuntu 18.04

Automatically selected the docker driver

docker is currently using the aufs storage driver, consider switching to overlay2 for better performance

Starting control plane node minikube in cluster minikube

Creating docker container (CPUs=3, Memory=4096MB) ...

Preparing Kubernetes v1.19.2 on Docker 19.03.8 ...

kubeadm.pod-network-cidr=192.168.0.0/16

> kubeadm.sha256: 65 B / 65 B [--------------------------] 100.00% ? p/s 0s

> kubectl.sha256: 65 B / 65 B [--------------------------] 100.00% ? p/s 0s

> kubelet.sha256: 65 B / 65 B [--------------------------] 100.00% ? p/s 0s

> kubeadm: 37.30 MiB / 37.30 MiB [---------------] 100.00% 1.46 MiB p/s 26s

> kubectl: 41.01 MiB / 41.01 MiB [---------------] 100.00% 1.37 MiB p/s 30s

> kubelet: 104.88 MiB / 104.88 MiB [------------] 100.00% 1.53 MiB p/s 1m9s

Verifying Kubernetes components...

Enabled addons: default-storageclass, storage-provisioner

Done! kubectl is now configured to use "minikube" by default

As you can see, it also downloads the kubeadm, kubectl, and kubelet binaries used to manage and administer your nodes and Pods.

Now, let’s check the nodes and pods by running the following commands,

$ kubectl get pods -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-f9fd979d6-gwngd 1/1 Running 0 45s

kube-system etcd-minikube 0/1 Running 0 53s

kube-system kube-apiserver-minikube 1/1 Running 0 53s

kube-system kube-controller-manager-minikube 0/1 Running 0 53s

kube-system kube-proxy-m25hm 1/1 Running 0 45s

kube-system kube-scheduler-minikube 0/1 Running 0 53s

kube-system storage-provisioner 1/1 Running 1 57s

$ kubectl get nodes -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

minikube Ready master 2d4h v1.19.2 192.168.49.2 <none> Ubuntu 20.04 LTS 4.15.0-20-generic docker://19.3.8

Now, download the PSMDB Kubernetes Operator:

$ git clone -b v1.5.0 https://github.com/percona/percona-server-mongodb-operator

$ cd percona-server-mongodb-operator

We’re now ready to deploy the operator:

$ kubectl apply -f deploy/bundle.yaml

As mentioned earlier, Minikube’s limitations require some adjustments to make things run as expected. Let’s do the following:

- Depending on your current hardware capacity, you might change the following, as suggested by the documentation. Since Minikube runs locally, the default deploy/cr.yaml file should be edited to adapt the Operator to a local installation with limited resources. Change the following keys in the replsets section:

  - comment out the resources.requests.memory and resources.requests.cpu keys (this will fit the Operator within Minikube’s default limitations)

  - set the affinity.antiAffinityTopologyKey key to "none" (the Operator will be unable to spread the cluster across several nodes)

- Also, switch the allowUnsafeConfigurations key to true (this option turns off the Operator’s control over the cluster configuration, making it possible to deploy Percona Server for MongoDB as a one-node cluster).
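The edits above might look roughly like this in deploy/cr.yaml. This is a trimmed sketch: unrelated keys are omitted, and the resource values are illustrative rather than copied from the v1.5.0 file.

```yaml
spec:
  # Turns off the operator's safety checks on the cluster configuration.
  allowUnsafeConfigurations: true
  replsets:
  - name: rs0
    size: 3
    affinity:
      # Members may share the single Minikube node.
      antiAffinityTopologyKey: "none"
    resources:
      limits:
        cpu: "300m"
        memory: "0.5G"
      # Requests commented out so the Pods fit Minikube's default limits.
      # requests:
      #   cpu: "300m"
      #   memory: "0.5G"
```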

Now, we’re ready to apply the changes made to the deploy/cr.yaml file.

$ kubectl apply -f deploy/cr.yaml

At this point, you can check the status of the Pods and watch their progress, as shown below:

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

percona-server-mongodb-operator-588db759d-qjv29 0/1 ContainerCreating 0 15s

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 0/2 Init:0/1 0 4s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 34s

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 0/2 PodInitializing 0 119s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 2m29s

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 0/2 PodInitializing 0 2m1s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 2m31s

$ kubectl get pods

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 2/2 Running 0 33m

mongodb-cluster-s9s-rs0-1 2/2 Running 1 31m

mongodb-cluster-s9s-rs0-2 2/2 Running 0 30m

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 33m

We’re almost there. Next, we’ll retrieve the secrets generated by the operator so that we can connect to the PSMDB Pods. To do that, list the Secret objects first, then decode the values stored in the Secret’s YAML. The second command below combines these steps and prints each entry in username:password format:

$ kubectl get secrets

NAME TYPE DATA AGE

default-token-c8frr kubernetes.io/service-account-token 3 2d4h

internal-mongodb-cluster-s9s-users Opaque 8 2d4h

mongodb-cluster-s9s-mongodb-encryption-key Opaque 1 2d4h

mongodb-cluster-s9s-mongodb-keyfile Opaque 1 2d4h

mongodb-cluster-s9s-secrets Opaque 8 2d4h

percona-server-mongodb-operator-token-rbzbc kubernetes.io/service-account-token 3 2d4h

$ kubectl get secrets mongodb-cluster-s9s-secrets -o yaml | egrep '^\s+MONGODB.*' | cut -d ':' -f2 | xargs -I% sh -c "echo % | base64 -d; echo" | sed 'N; s/\(.*\)\n\(.*\)/\2:\1/'

backup:WrDry6bexkCPOY5iQ

clusterAdmin:gAWBKkmIQsovnImuKyl

clusterMonitor:qHskMMseNqU8DGbo4We

userAdmin:TQBEV7rtE15quFl5

You can now take these username:password pairs and save them somewhere secure.
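If the sed step in the command above is hard to read, an awk one-liner can do the same pairing. This sketch assumes, as in the output above, that each password line is immediately followed by its user line (the Secret keys sort as ..._PASSWORD before ..._USER); the function name is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: turn alternating "password" / "user" lines into
# "user:password", reading the decoded values from stdin.
pair_user_password() {
  # Odd lines hold a password; the following even line holds its user.
  awk 'NR % 2 == 1 { pass = $0; next } { print $0 ":" pass }'
}
```

It would replace the final sed stage of the pipeline, e.g. `... | xargs -I% sh -c "echo % | base64 -d; echo" | pair_user_password`.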

Since we cannot connect directly to the Percona Server for MongoDB nodes from outside the cluster, we need to create a new Pod that has the MongoDB client:

$ kubectl run -i --rm --tty percona-client --image=percona/percona-server-mongodb:4.2.8-8 --restart=Never -- bash -il

Lastly, we’re ready to connect to our PSMDB nodes:

bash-4.2$ mongo "mongodb+srv://userAdmin:tujYD1mJTs4QWIBh@mongodb-cluster-s9s-rs0.default.svc.cluster.local/admin?replicaSet=rs0&ssl=false"

Alternatively, you can connect to an individual node and check its health. For example:

bash-4.2$ mongo --host "mongodb://clusterAdmin:gAWBKkmIQsovnImuKyl@mongodb-cluster-s9s-rs0-2.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017/?authSource=admin&ssl=false"

Percona Server for MongoDB shell version v4.2.8-8

connecting to: mongodb://mongodb-cluster-s9s-rs0-2.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017/?authSource=admin&compressors=disabled&gssapiServiceName=mongodb&ssl=false

Implicit session: session { "id" : UUID("9b29b9b3-4f82-438d-9857-eff145be0ee6") }

Percona Server for MongoDB server version: v4.2.8-8

Welcome to the Percona Server for MongoDB shell.

For interactive help, type "help".

For more comprehensive documentation, see

https://www.percona.com/doc/percona-server-for-mongodb

Questions? Try the support group

https://www.percona.com/forums/questions-discussions/percona-server-for-mongodb

2020-11-09T07:41:59.172+0000 I STORAGE [main] In File::open(), ::open for '/home/mongodb/.mongorc.js' failed with No such file or directory

Server has startup warnings:

2020-11-09T06:41:16.838+0000 I CONTROL [initandlisten] ** WARNING: While invalid X509 certificates may be used to

2020-11-09T06:41:16.838+0000 I CONTROL [initandlisten] ** connect to this server, they will not be considered

2020-11-09T06:41:16.838+0000 I CONTROL [initandlisten] ** permissible for authentication.

2020-11-09T06:41:16.838+0000 I CONTROL [initandlisten]

rs0:SECONDARY> rs.status()

{

"set" : "rs0",

"date" : ISODate("2020-11-09T07:42:04.984Z"),

"myState" : 2,

"term" : NumberLong(5),

"syncingTo" : "mongodb-cluster-s9s-rs0-0.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"syncSourceHost" : "mongodb-cluster-s9s-rs0-0.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"syncSourceId" : 0,

"heartbeatIntervalMillis" : NumberLong(2000),

"majorityVoteCount" : 2,

"writeMajorityCount" : 2,

"optimes" : {

"lastCommittedOpTime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"lastCommittedWallTime" : ISODate("2020-11-09T07:42:03.395Z"),

"readConcernMajorityOpTime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"readConcernMajorityWallTime" : ISODate("2020-11-09T07:42:03.395Z"),

"appliedOpTime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"durableOpTime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"lastAppliedWallTime" : ISODate("2020-11-09T07:42:03.395Z"),

"lastDurableWallTime" : ISODate("2020-11-09T07:42:03.395Z")

},

"lastStableRecoveryTimestamp" : Timestamp(1604907678, 3),

"lastStableCheckpointTimestamp" : Timestamp(1604907678, 3),

"members" : [

{

"_id" : 0,

"name" : "mongodb-cluster-s9s-rs0-0.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 3632,

"optime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"optimeDurable" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"optimeDate" : ISODate("2020-11-09T07:42:03Z"),

"optimeDurableDate" : ISODate("2020-11-09T07:42:03Z"),

"lastHeartbeat" : ISODate("2020-11-09T07:42:04.246Z"),

"lastHeartbeatRecv" : ISODate("2020-11-09T07:42:03.162Z"),

"pingMs" : NumberLong(0),

"lastHeartbeatMessage" : "",

"syncingTo" : "",

"syncSourceHost" : "",

"syncSourceId" : -1,

"infoMessage" : "",

"electionTime" : Timestamp(1604904092, 1),

"electionDate" : ISODate("2020-11-09T06:41:32Z"),

"configVersion" : 3

},

{

"_id" : 1,

"name" : "mongodb-cluster-s9s-rs0-1.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 3632,

"optime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"optimeDurable" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"optimeDate" : ISODate("2020-11-09T07:42:03Z"),

"optimeDurableDate" : ISODate("2020-11-09T07:42:03Z"),

"lastHeartbeat" : ISODate("2020-11-09T07:42:04.244Z"),

"lastHeartbeatRecv" : ISODate("2020-11-09T07:42:04.752Z"),

"pingMs" : NumberLong(0),

"lastHeartbeatMessage" : "",

"syncingTo" : "mongodb-cluster-s9s-rs0-2.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"syncSourceHost" : "mongodb-cluster-s9s-rs0-2.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"syncSourceId" : 2,

"infoMessage" : "",

"configVersion" : 3

},

{

"_id" : 2,

"name" : "mongodb-cluster-s9s-rs0-2.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 3651,

"optime" : {

"ts" : Timestamp(1604907723, 4),

"t" : NumberLong(5)

},

"optimeDate" : ISODate("2020-11-09T07:42:03Z"),

"syncingTo" : "mongodb-cluster-s9s-rs0-0.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"syncSourceHost" : "mongodb-cluster-s9s-rs0-0.mongodb-cluster-s9s-rs0.default.svc.cluster.local:27017",

"syncSourceId" : 0,

"infoMessage" : "",

"configVersion" : 3,

"self" : true,

"lastHeartbeatMessage" : ""

}

],

"ok" : 1,

"$clusterTime" : {

"clusterTime" : Timestamp(1604907723, 4),

"signature" : {

"hash" : BinData(0,"HYC0i49c+kYdC9M8KMHgBdQW1ac="),

"keyId" : NumberLong("6892206918371115011")

}

},

"operationTime" : Timestamp(1604907723, 4)

}

Since the operator manages the consistency of the cluster, whenever a failure occurs or, say, a Pod is deleted, the operator automatically starts a new one. For example:

$ kubectl get po

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 2/2 Running 0 2d5h

mongodb-cluster-s9s-rs0-1 2/2 Running 0 2d5h

mongodb-cluster-s9s-rs0-2 2/2 Running 0 2d5h

percona-client 1/1 Running 0 3m7s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 2d5h

$ kubectl delete po mongodb-cluster-s9s-rs0-1

pod "mongodb-cluster-s9s-rs0-1" deleted

$ kubectl get po

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 2/2 Running 0 2d5h

mongodb-cluster-s9s-rs0-1 0/2 Init:0/1 0 3s

mongodb-cluster-s9s-rs0-2 2/2 Running 0 2d5h

percona-client 1/1 Running 0 3m29s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 2d5h

$ kubectl get po

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 2/2 Running 0 2d5h

mongodb-cluster-s9s-rs0-1 0/2 PodInitializing 0 10s

mongodb-cluster-s9s-rs0-2 2/2 Running 0 2d5h

percona-client 1/1 Running 0 3m36s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 2d5h

$ kubectl get po

NAME READY STATUS RESTARTS AGE

mongodb-cluster-s9s-rs0-0 2/2 Running 0 2d5h

mongodb-cluster-s9s-rs0-1 2/2 Running 0 26s

mongodb-cluster-s9s-rs0-2 2/2 Running 0 2d5h

percona-client 1/1 Running 0 3m52s

percona-server-mongodb-operator-588db759d-qjv29 1/1 Running 0 2d5h

Now we’re all set. If you need to reach the cluster from outside Kubernetes, you will have to expose it, which requires adjustments in deploy/cr.yaml; refer to the operator’s documentation on exposing the cluster for details.
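As a hedged example, exposing the replica set members is controlled from the replsets section of deploy/cr.yaml. The fragment below assumes the expose.enabled and expose.exposeType keys found in the operator’s example configuration; check the documentation for your version before applying it.

```yaml
spec:
  replsets:
  - name: rs0
    expose:
      # Ask the operator to create externally reachable Services for the members.
      enabled: true
      # LoadBalancer needs an external load balancer (on Minikube, `minikube tunnel`);
      # NodePort is often simpler for local testing.
      exposeType: LoadBalancer
```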

Conclusion

The Percona Kubernetes Operator for PSMDB can be a near-complete solution for your Percona Server for MongoDB setup, especially in containerized environments. It has redundancy built in for your replica set, and the operator also supports backups, scalability, high availability, and security.