blog

Architecting for Security: A Guide for MySQL

Security is paramount today across the whole of IT. From time to time we hear about ransomware attacks or data leaks that have their origin in not secured databases or IT infrastructure. You may wonder: what are the best practices in architecting MySQL environment so you can feel secure about your data? If so, this blog is for you. Please keep in mind that we will not cover the topic fully – this would fit more into a whitepaper than a blog. We will do our best to mention the most important aspects of securing your MySQL database. The idea behind this blog is so that the reader will know what she or he doesn’t know and help to identify the topics and keywords for further research. We will illustrate it with screenshots from our product, ClusterControl, which comes with a vast set of features, including some around database security.

Network

First of all, we have to deploy MySQL somewhere. Be it standalone instance, primary – replica asynchronous replication or one of the more advanced, synchronous replication topologies like Galera or InnoDB Cluster. No matter what it is, it has to be protected on the network level. Database contains the data, which, quite commonly, is the most valuable asset of a whole organization.

Securing the access

Database instances should never be located on the public network. Network segments in which databases are configured should be accessible only from a limited number of other networks. The rule of thumb is – should a given node be able to access the database network? If the answer is no, the networks should be separated.

Of course, it all depends on the exact setup but in the most common cases, where you have application, proxy, cache and database layers, the most typical setup would be that only the proxy should be able to access the database. All other entities should be configured in a way that they access the database only via proxy layer. This design is good in many ways. On top of the increased security, it also helps to hide the complexity of the database tier from the application.

The proxy layer should be following the database topology and should handle the database node failures and topology changes. Application, connecting to the proxy layer, should always be able to reach out to a working database node relevant to the type of the request. The same is with the cache layer. It can be implemented in the proxy layer, some proxies like ProxySQL allow cache requests within the proxy but if it is a separate layer built around, for example, memcache or Redis, it should always be reaching out to the database via proxy layer.

The one more type of nodes that may need to have direct access to the database layer are management nodes – those which are used by the operational teams to manage the databases. The reason is simple: some of the maintenance tasks may require direct access to the databases. It could be task automation scripts, rolling Ansible playbooks across the whole database fleet or other tasks. In that case, obviously, security measures should be in place to ensure that only the people who have access can log in to such management node.

Another possible type of nodes (although management nodes can also be used for that) that may require access to the database are nodes involved in collecting metrics and presenting them to the users – we are talking here about monitoring and alerting activities.

VPN

For any kind of database tier spanning across multiple datacenters you should consider using VPN to connect them. Open, unencrypted connections over WAN network is something that should never happen. Even setting up the SSL encryption is not the best option as it would require opening access between the database tier and the WAN – SSL connections between database nodes require them to be able to connect directly. VPN solves this problem by adding a middle-man that creates a secure way of connecting segments of the database tier network.

VPN should also be mandatory for any kind of user access to the organization’s network as it implements secure connectivity between a workstation and the production network.

Firewall

Of course, while securing the network we should consider using the firewall. Generally speaking, every database node should only receive connections from a defined set of sources – hostnames and ports. Even the “required” network segments should not have full access to the database network but only to the required ports. If the proxy only needs to connect to the database port, then there is no reason for it to be able to access any other port on the database nodes. Please also note that you should not use the default ports. It is, obviously, security by obscurity – after all the port is open somewhere, but it helps to deal with at least some of the security intrusions that use automated scripts. It won’t prevent someone who’s determined to get the access but may slow him down (when coupled with port scanning detection and anti-scan measures) while preventing automated attacks from succeeding.

Database Security

Network is the first line of defence, there are other security measures and good practices you can use to improve your security even further. Some of them can be implemented on the database itself.

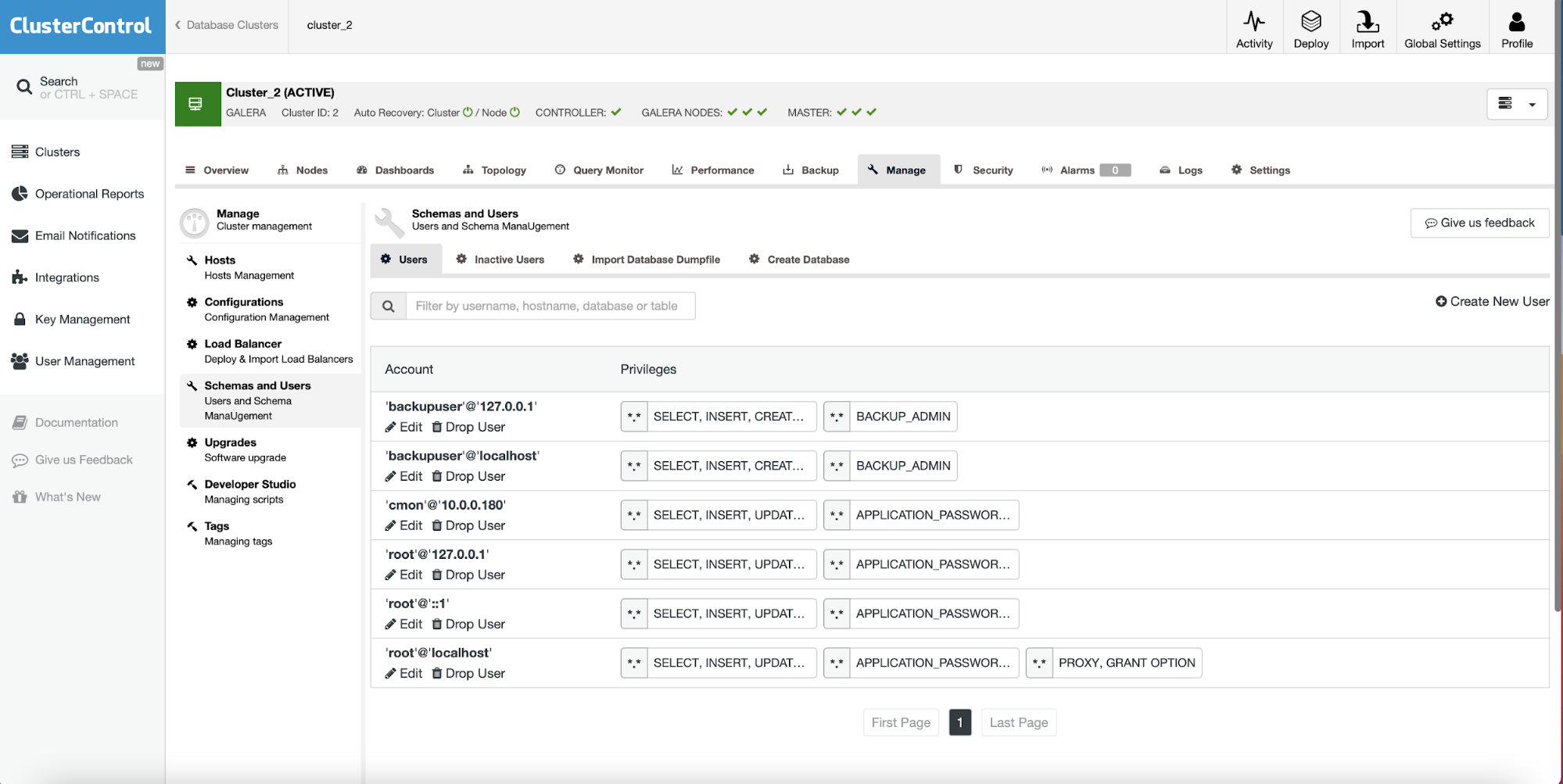

Users and hosts

Databases themselves can be used to implement access control and restrictions. For starters, you can implement host-based access control, preventing hosts other than the short list of nodes to log into the database. Of course, if you used a firewall to limit the access this may sound like a duplicate but it is still a good idea to limit the access on the database itself – you never know when, by accident, a firewall will be disabled. In such a case you still have a second layer of protection.

What you want to implement here is a list of database users and hosts that are allowed to access the database. Most likely what you should end up having is one or more user granted access from hosts located in the proxy layer. How detailed access control you may have depends on the database system that you have. MySQL, for example, doesn’t allow detailed control over the network masks – it just uses /32, /24, /16 or /8. In PostgreSQL, on the other hand, you can use any kind of network mask.

Grants

If this is what your database allows, each of the users should have a defined set of grants – ensuring that the privileges assigned to them are the minimal required for the user to perform the actions he has to do. Database systems may have different set of privileges and different levels of them. Typically we have several levels of privileges – global, affecting the whole database server, schema level – given user may have different privileges assigned to different schemas. You can have privileges on a table or even column level. As we mentioned before, the goal is to create the minimal set of privileges for every user. You will probably want to have at least one user with high privileges – it would be used to manage the database. Such user should be strictly limited when it comes to connectivity. It should not (and in fact neither of users should not) be allowed to connect from any location – it should be either a localhost, or some particular management node, dedicated to performing operations on the database.

Password management

Every user in the database should have a password defined. This is a no-brainer. The password should be stored in a form of a hash. You should ensure that for storing the passwords you are using the safest hash algorithm that your database has to offer. Passwords should not be easy to guess nor they shouldn’t be vulnerable to the dictionary attack. Some database systems, like MySQL, allow you to precisely define the requirements your passwords have to meet in order for them to be used. Lower and upper case letters, numbers, special characters, length of the password – all of it is important and if you can enforce some policies around the password strength, you should do that. Another important bit is password rotation. Passwords should not be created once and for all database lifetime, you should have a password rotation policy. Again, some of the database systems can enforce this for you. Administrative user may be able to create new user accounts with password rotation enforced. He may also be able to enforce password rotation for a given user.

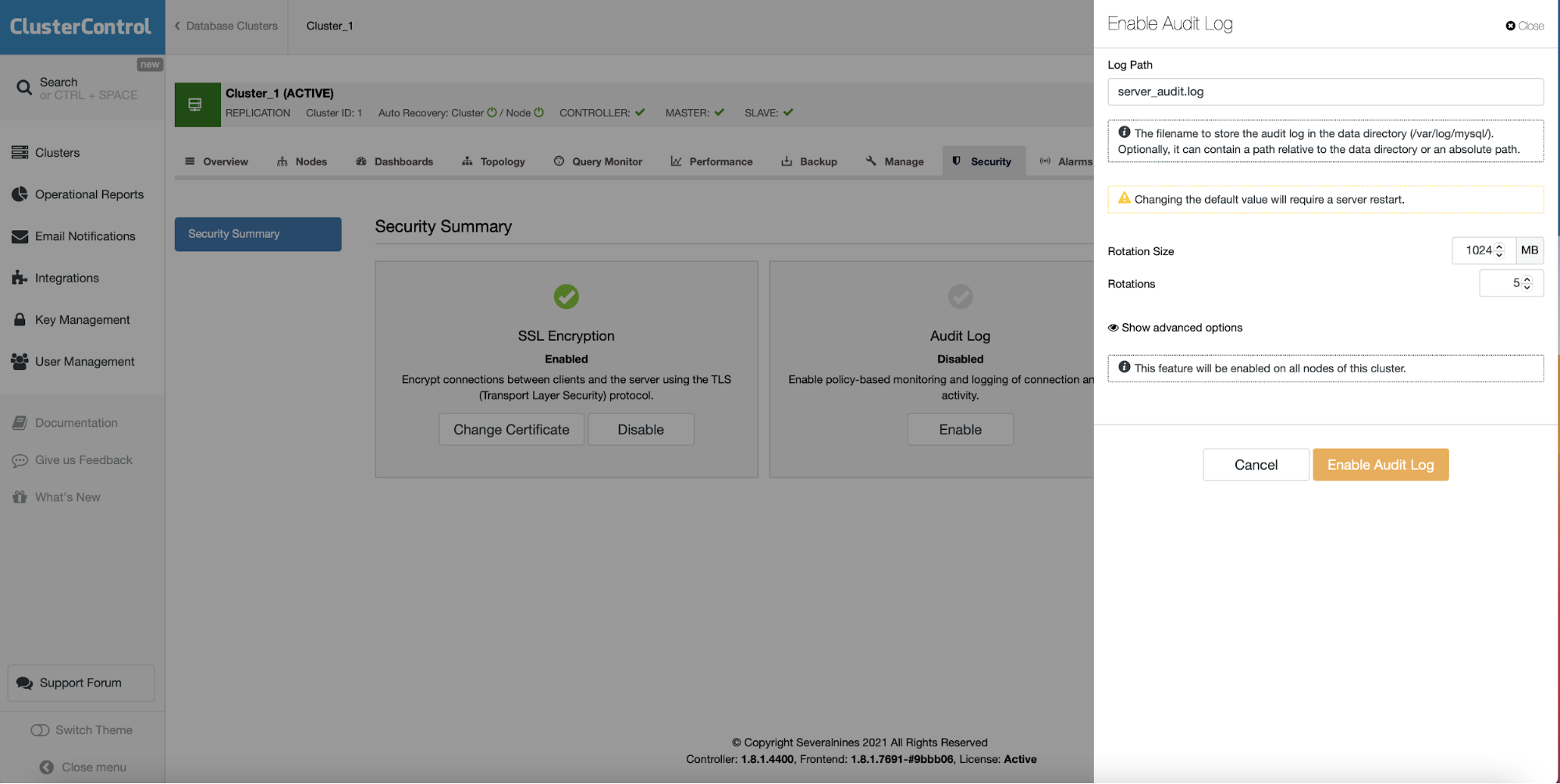

Audit logs

Some of the database systems offer audit logs – the idea is to collect as much information about the activity in the database as possible. Who and when did what? Which query has been executed, by whom? Who attempted to log in but failed? From which host? Ideally, logs containing such information would be stored outside of the database nodes. You can stream them to your central log server for safekeeping, further processing and better search capabilities.

SQL Security

We mentioned users and hosts but the attack can also happen from a different source. If your application is not secured properly and the input is not correctly validated, you may be facing attacks originating from your website. We are talking here about SQL injection. In such case firewalls are not really useful given that the query originated from a valid source (your web server and then proxy node). Assigning grants may actually help to prevent some of this kind of attack, but it is not an ideal solution – after all your application, in majority of the cases, will need a user that can remove or modify the contents of the database. Such user, when exploited, can be used to do harm. There are several ways in which you can try to counter the treat.

SQL firewall

The simplest way to do it is by implementing SQL firewall. It can be accomplished on different levels and in different places. One of the options is to use load balancers for that. Some of them come with this functionality at least easily achievable if not already implemented. The idea behind it is to build a list of queries that your application executes and then configure your proxy to pass through only this kind of traffic. It is not ideal as you will have to maintain it in time, adding new queries and removing old ones that are not used anymore. On the other hand, such a set of rules will prevent any query that is not authorized, from reaching the database.

SQL injection detection

Another possible option would be to implement SQL injection detection in the proxy layer. There are a couple of solutions, ProxySQL among others, that can be configured to attempt to detect SQL injection in the traffic that is passing through them. Of course, all of it is based on heuristics so it may result in false positives, but it can be a good addition to the SQL firewall.

In the past we have discussed how you can implement SQL firewall and SQL injection detection using ProxySQL, a loadbalancer that can be deployed from ClusterControl:

Data Security

Finally, data security. We have discussed so far how one can harden the database, how to limit the access to it and how to prevent different kinds of attacks coming from the application itself. We still should consider protection of the data itself. This can have several layers. Physical security – if you own the datacenter, ensure it is properly locked down. If you use external ISP’s or cloud providers, ensure they have proper security protocols in place when it comes to accessing the hardware. Then we have a server, VM or however you are using. Data sits on disk, stored locally on the server. Data is being transferred between the application, proxy and the database. Data is transferred between database nodes by the means of replication. Data is being stored offsite as backups. This data should be protected.

Backups

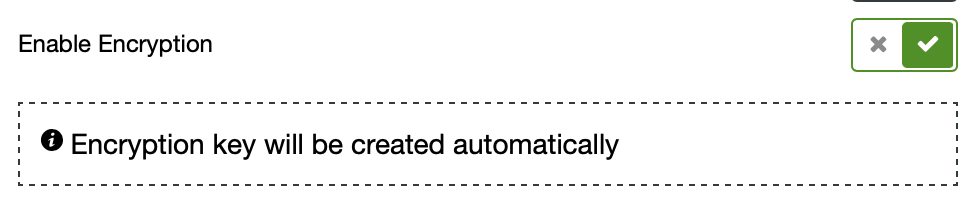

Backups should always be encrypted. The encryption key should be maintained carefully and rotated on a regular basis.

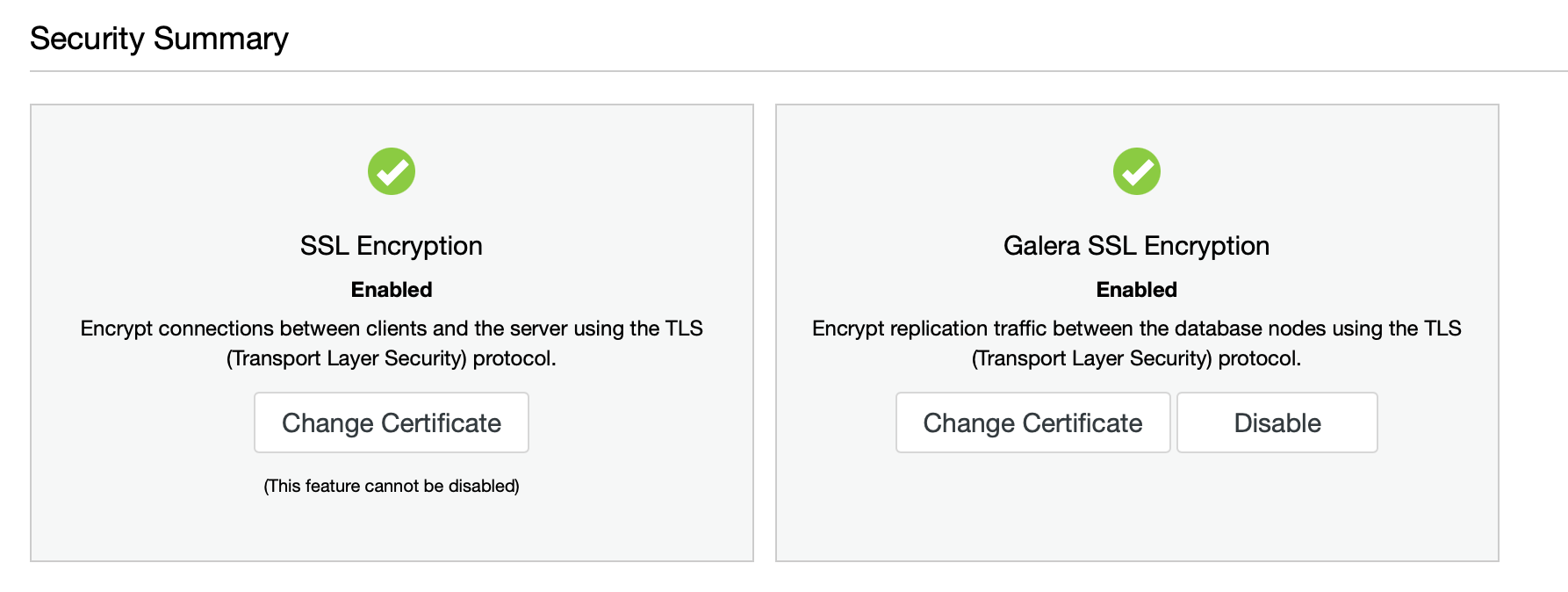

Data in transit

Data that is transferred should be encrypted. Make sure you have configured your application, proxy layer and database to use SSL or TSL. Every means of transferring the data between the database nodes should also be secured and encrypted. The goal is to make any kind of network sniffing pointless.

Data at rest

Finally, the data itself, stored on the database node. It also should be encrypted. There are a couple of methods you can use when approaching this topic. First, encryption on the host level. The volume on which the database has its data directory can be encrypted (and decrypted at the boot). Databases also tend to come with encryption capabilities. What can be encrypted depends on the exact solution and the type and version of the database, but in some cases the options are quite extensive. Tablespace encryption, log encryption, sometimes even encryption of the in-memory structures. If you do it properly, accessing the database node will not be enough to access the data.

Conclusion

As we mentioned before, this blog is not intended to be a hands-on guide to database security but we have touched upon the majority of the aspects that you should consider when architecting your database environment and we hope you will find this guide useful.