
MySQL Load Balancing: Migrating ProxySQL from On-Prem to AWS EC2

Migrations between different environments are not uncommon in the database world: moving from one provider to another, or from one datacenter to another, happens on a regular basis. Organisations look for lower costs, better flexibility and higher velocity. Those who own their datacenters look to switch to one of the cloud providers, where they can benefit from better scalability and easier handling of capacity changes. Migrations touch all elements of the database environment: the databases themselves, but also the proxy and caching layers. Moving databases around is tricky, but it is also hard to manage multiple proxy instances and ensure that their configuration stays in sync.

In this blog post we will take a look at the challenges related to one particular piece of the migration: moving the ProxySQL proxy layer from an on-prem environment to EC2. Please keep in mind that this is just an example; most migration scenarios look much the same, so the suggestions in this post should apply to the majority of cases. Let’s take a look at the initial setup.

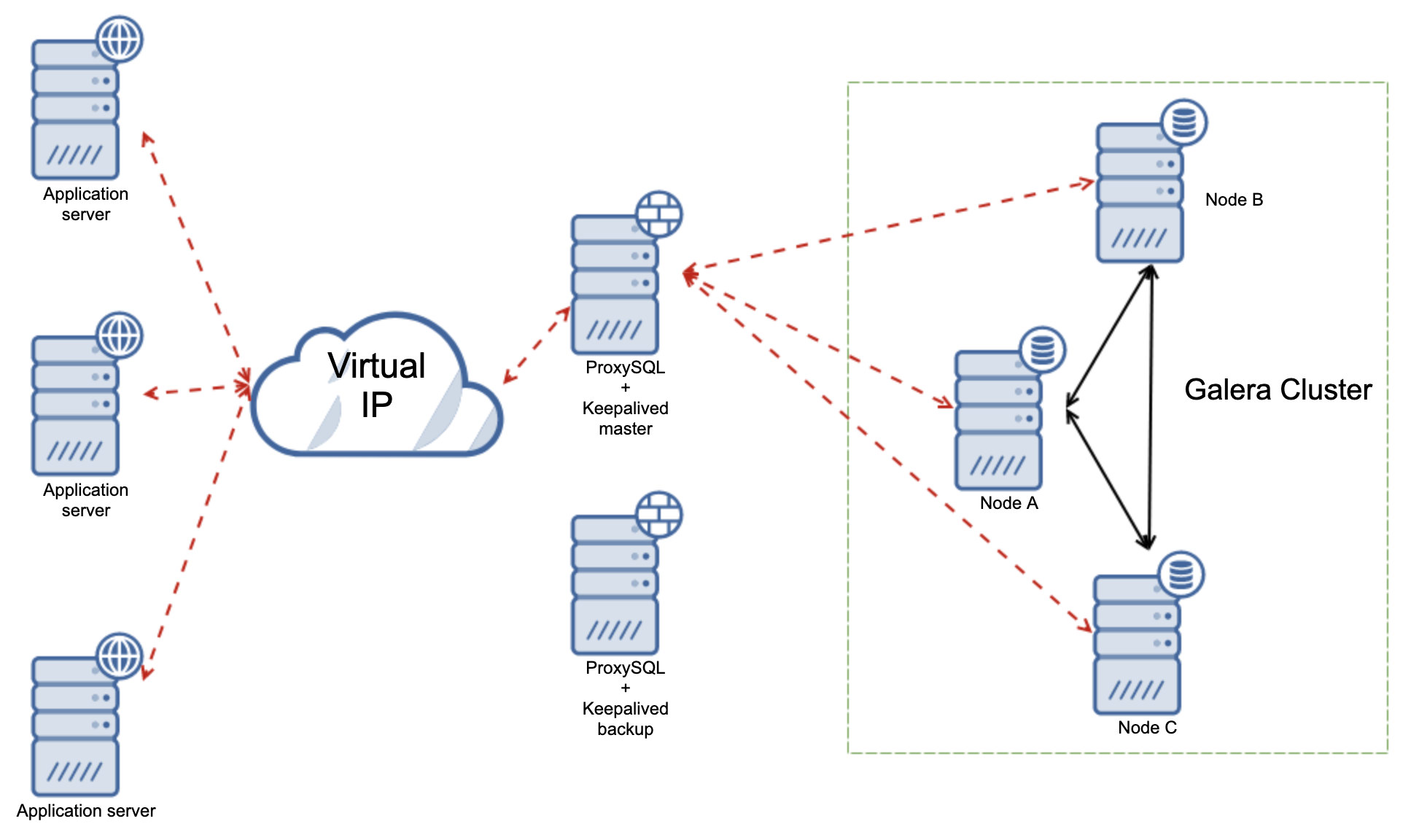

Initial On-Prem Environment

The initial, on-prem setup is fairly simple: we have a three-node Galera Cluster and two ProxySQL instances configured to route traffic to the backend databases. Each ProxySQL instance has a colocated Keepalived. Keepalived manages a Virtual IP (VIP) and assigns it to one of the available ProxySQL instances. Should that instance fail, the VIP will be moved to the other ProxySQL, which will start serving the traffic. Application servers connect through the VIP and are not aware of the setup of the proxy and database tiers.

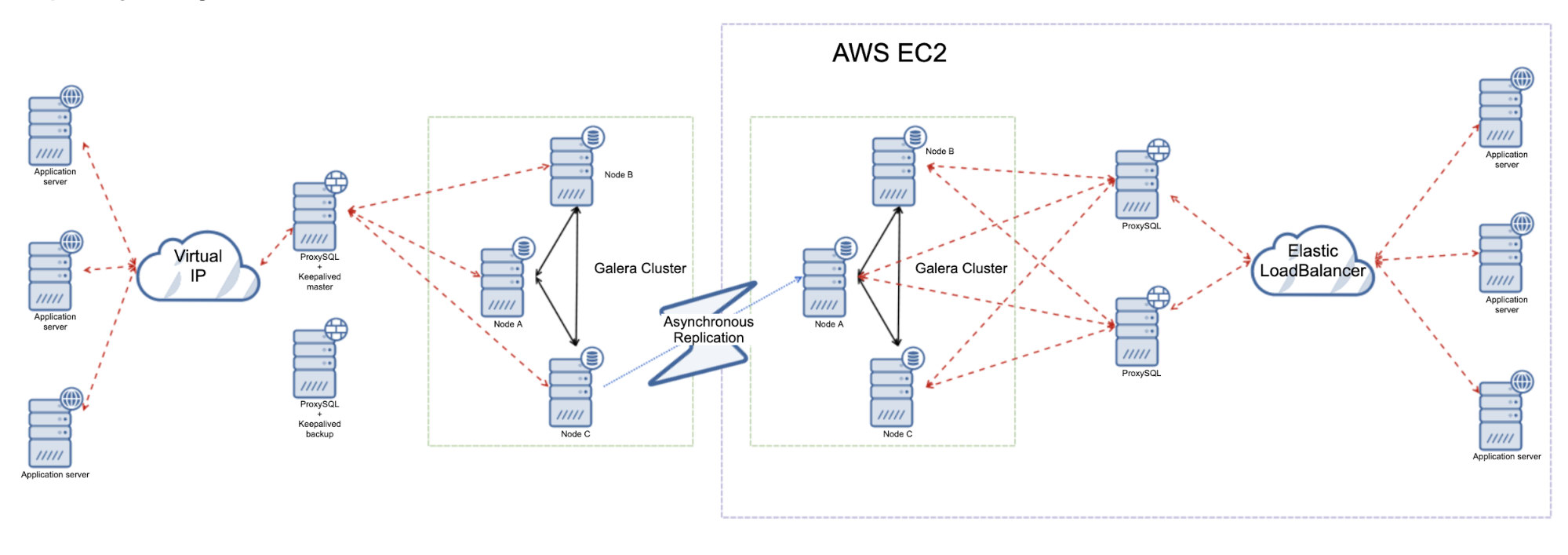

Migrating to an AWS EC2 Environment

There are a couple of prerequisites to meet before we can plan a migration, and migrating the proxy layer is no different in this regard. First of all, we need network access between the existing on-prem environment and EC2. We will not go into details here as there are numerous options to accomplish that. AWS provides services like AWS Direct Connect or hybrid cloud integration in Amazon Virtual Private Cloud. You can also set up an OpenVPN server or even use SSH tunneling to do the trick. It all depends on the hardware and software options at your disposal and how flexible you want the solution to be. Once the connectivity is there, let’s stop for a moment and think about what the setup should look like.

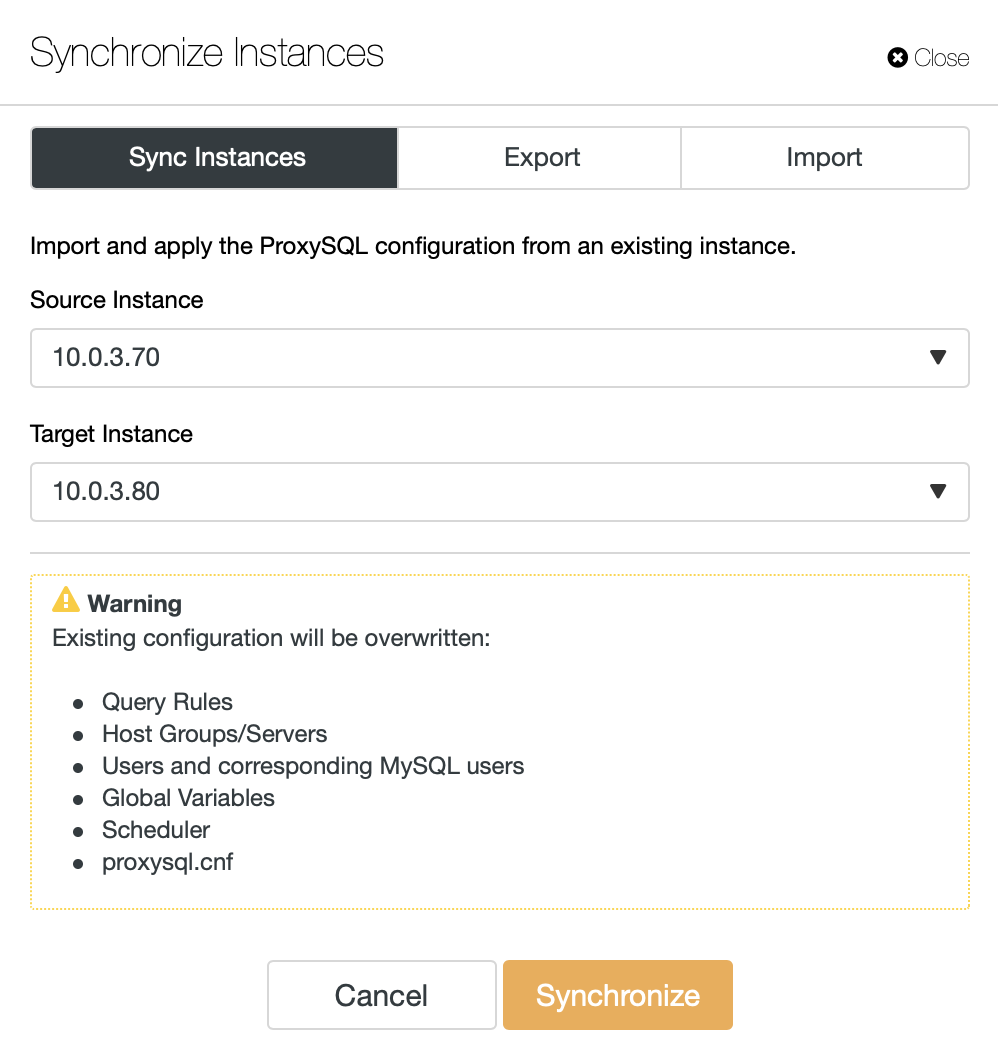

From the ProxySQL standpoint, there is one main concern: how to ensure that the configuration of the ProxySQL instances in EC2 is in sync with the configuration of the ProxySQL instances on-prem? This may not be a big deal if your configuration is fairly stable, that is, you are not adding query rules or tweaking settings. In that case it will be enough to apply the existing configuration to the newly created ProxySQL instances in EC2. There are a couple of ways to do that. First of all, if you are a ClusterControl user, the simplest way is to use the “Synchronize Instances” job, which is designed to do exactly this.

Another option is to use the dump command from SQLite, which is the format ProxySQL uses to store its configuration on disk: http://www.sqlitetutorial.net/sqlite-dump/
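As a rough illustration of the dump approach, the sketch below uses Python's standard `sqlite3` module to produce a SQL dump of a ProxySQL database file. The function name `dump_config` is made up for this example, and the path in the comment is the usual default data directory, which is an assumption about your installation. How you load the result on the new instance depends on your setup.

```python
import sqlite3


def dump_config(db_path):
    """Return the full SQL dump of a SQLite file as one string.

    db_path is assumed to point at a copy of ProxySQL's on-disk
    configuration database (commonly /var/lib/proxysql/proxysql.db).
    """
    conn = sqlite3.connect(db_path)
    try:
        # iterdump() yields the same statements as the sqlite3 shell's .dump
        return "\n".join(conn.iterdump())
    finally:
        conn.close()


# Hypothetical usage, run against a copy of the file rather than the live one:
#   sql_text = dump_config("/tmp/proxysql-copy.db")
#   open("proxysql-config.sql", "w").write(sql_text)
```

Working on a copy of the file is a cautious choice here, so the dump never touches the database that the running ProxySQL process has open.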

If you store your configuration in an infrastructure orchestration tool (Ansible, Chef, Puppet), you can easily reuse those scripts to provision the new ProxySQL instances with the proper configuration.

What if the configuration changes quite often? Well, there are additional options to consider. First of all, most likely all the solutions above would still work, as long as the ProxySQL configuration is not changing every couple of minutes (which is highly unlikely): you can always sync the configuration right before you do the switchover.

For cases where the configuration changes quite often, you can consider setting up a ProxySQL cluster. The setup has been explained in detail in our blog post: https://severalnines.com/blog/how-cluster-your-proxysql-load-balancers. If you would like to use this solution in a hybrid setup, over a WAN connection, you may want to increase cluster_check_interval_ms from the default of 1 second to a higher value (5 – 10 seconds). ProxySQL cluster will ensure that all configuration changes made in the on-prem setup are replicated to the ProxySQL instances in EC2.
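To make the interval change concrete, here is a minimal sketch that builds the statements you would run in the ProxySQL admin interface (typically reached with a MySQL client on port 6032). The helper name `cluster_check_interval_statements` is made up for this example, and 5000 ms is just one value from the range suggested above. In the admin interface the variable carries the `admin-` prefix.

```python
def cluster_check_interval_statements(interval_ms):
    """Build admin statements that change the cluster check interval.

    The statements are returned as strings; running them against the
    admin interface is left to whatever client you normally use.
    """
    interval_ms = int(interval_ms)
    if interval_ms <= 0:
        raise ValueError("interval_ms must be a positive number of milliseconds")
    return [
        # Admin variables live in global_variables with an 'admin-' prefix
        "UPDATE global_variables SET variable_value='%d' "
        "WHERE variable_name='admin-cluster_check_interval_ms';" % interval_ms,
        # Apply the change to the running process
        "LOAD ADMIN VARIABLES TO RUNTIME;",
        # Persist it so it survives a restart
        "SAVE ADMIN VARIABLES TO DISK;",
    ]


# Hypothetical usage:
#   for stmt in cluster_check_interval_statements(5000):
#       print(stmt)
```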

The final thing to consider: how do we switch ProxySQL to the correct servers? The gist is that ProxySQL stores a list of backend MySQL servers to connect to. It tracks their health and monitors latency. In the setup we discuss, the on-prem ProxySQL servers hold a list of backend servers which are also located on-prem. This is the configuration we will sync to the EC2 ProxySQL servers, so after the sync they will still point at the on-prem backends rather than at the databases in EC2. This is not a hard problem to tackle and there are a couple of ways to work around it.

For example, you can add the EC2 servers in OFFLINE_HARD mode in a separate hostgroup. The OFFLINE_HARD status marks the nodes as not available, and using a new hostgroup for them ensures that ProxySQL will not check their state the way it does for the Galera nodes configured in the hostgroups used for read/write splitting.
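A minimal sketch of this staging step is shown below. It builds the admin statements that would add nodes with the OFFLINE_HARD status to a dedicated hostgroup. The function name, the IP addresses and the hostgroup id 100 are all hypothetical; pick a hostgroup id that none of your query rules or Galera hostgroup settings refer to.

```python
import re

# Conservative hostname/IP check so values can be embedded in SQL safely
_HOST_RE = re.compile(r"[A-Za-z0-9.-]+")


def stage_offline_statements(hosts, hostgroup_id, port=3306):
    """Build statements that add backends as OFFLINE_HARD in a spare hostgroup."""
    stmts = []
    for host in hosts:
        if not _HOST_RE.fullmatch(host):
            raise ValueError("unexpected characters in hostname: %r" % host)
        stmts.append(
            "INSERT INTO mysql_servers (hostgroup_id, hostname, port, status) "
            "VALUES (%d, '%s', %d, 'OFFLINE_HARD');"
            % (int(hostgroup_id), host, int(port))
        )
    # Activate the new rows and persist them
    stmts.append("LOAD MYSQL SERVERS TO RUNTIME;")
    stmts.append("SAVE MYSQL SERVERS TO DISK;")
    return stmts


# Hypothetical usage with made-up EC2 addresses:
#   stage_offline_statements(["10.1.0.11", "10.1.0.12", "10.1.0.13"], 100)
```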

Alternatively, you can simply skip those nodes for now and, while doing the switchover, remove the existing servers and then run a couple of INSERT commands to add the backend nodes from EC2.
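The switchover variant could look roughly like the sketch below, which builds the DELETE and INSERT statements for the admin interface. The function name, all addresses and the hostgroup id 10 are hypothetical; use the hostgroup your own setup expects new Galera nodes to be added to.

```python
import re

# Conservative hostname/IP check so values can be embedded in SQL safely
_HOST_RE = re.compile(r"[A-Za-z0-9.-]+")


def _checked(host):
    if not _HOST_RE.fullmatch(host):
        raise ValueError("unexpected characters in hostname: %r" % host)
    return host


def switchover_statements(old_hosts, new_hosts, hostgroup_id, port=3306):
    """Build statements that swap on-prem backends for EC2 backends."""
    # Remove the on-prem backends from every hostgroup they appear in
    stmts = [
        "DELETE FROM mysql_servers WHERE hostname='%s';" % _checked(h)
        for h in old_hosts
    ]
    # Add the EC2 backends; status is left at its default
    stmts += [
        "INSERT INTO mysql_servers (hostgroup_id, hostname, port) "
        "VALUES (%d, '%s', %d);" % (int(hostgroup_id), _checked(h), int(port))
        for h in new_hosts
    ]
    # Nothing takes effect until the servers are loaded to runtime
    stmts += ["LOAD MYSQL SERVERS TO RUNTIME;", "SAVE MYSQL SERVERS TO DISK;"]
    return stmts


# Hypothetical usage:
#   switchover_statements(["192.168.0.11"], ["10.1.0.11"], hostgroup_id=10)
```

Because the changes only take effect at the LOAD step, the deletes and inserts are applied to the running ProxySQL together rather than one by one.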

Conclusion

As you can see, migrating ProxySQL from an on-prem setup to the cloud is quite easy to accomplish: as long as you have network connectivity, the remaining steps are far from complex. We hope this short blog post helped you understand what’s required in this process and how to plan it.