
Installing ClusterControl on an Existing MongoDB Replica Set Using a Bootstrap Script

So, your development project has been humming along nicely on MongoDB until it was time to deploy the application. That’s when you called your operations person and things got uncomfortable. NoSQL, document database, collections, replica sets, sharding, config servers, query routers… What the hell’s going on here?

It should come as no surprise that an ops person will question the use of a new database system. Monitoring the health of systems and ensuring they perform optimally is what operations folks do. Time will need to be spent on understanding how this new database works, how to deploy it so as to avoid or minimize problems, what to monitor, how to perform backups, how to add capacity, and how to repair or recover the cluster when things really go wrong.

MMS (MongoDB Management Service) is a free SaaS solution from MongoDB Inc. that monitors system metrics at 1-minute resolution and sends email alerts on failures. A cloud backup feature was added recently.

ClusterControl is an on-premise tool that combines monitoring, cluster management and deployment functionality. Its monitoring data is highly granular (down to 1-second resolution), which gives enough depth of information to support detailed analysis and optimization.

It is possible to run both MMS and ClusterControl on your existing MongoDB or TokuMX cluster. You can see how the graphs compare in this blog post.

In this post, we show how to install ClusterControl on top of an existing MongoDB/TokuMX Replica Set using the bootstrap script. Note that ClusterControl can be colocated with any of the mongod instances.

Requirements

Before installing, whether you run a Replica Set or a Sharded Cluster, make sure your MongoDB or TokuMX instances meet the following requirements:

- ClusterControl requires every MongoDB process to have an associated PID file. This includes shard servers (shardsvr), config servers (configsvr), mongos routers and arbiters. To define a PID file, use the --pidfilepath command-line option or the pidfilepath option in the configuration file. Starting from version 1.2.5, ClusterControl can detect the PID even when pidfilepath is not specified.

- All MongoDB binaries must be installed identically on each node, under one of the following paths:

  - /usr/bin
  - /usr/local/bin
  - /usr/local/mongod/bin
  - /opt/local/mongodb

- The MongoDB Replica Set or Sharded Cluster is already configured and running as a cluster. Verify this with the rs.status() (Replica Set) or sh.status() (Sharded Cluster) command.

- Ensure that the designated ClusterControl node meets the host requirements.
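The PID file, binary path and replica set requirements can be checked on each node before running the bootstrap script. The following POSIX shell sketch is hypothetical (none of these function names come from ClusterControl); it assumes a Linux host, the key=value style /etc/mongodb.conf used later in this post, and the legacy mongo shell for the rs.status() query.

```shell
#!/bin/sh
# Hypothetical pre-flight helpers for a MongoDB node (not part of ClusterControl).

# ensure_pidfilepath CONF PIDFILE
# Appends "pidfilepath=PIDFILE" to a key=value style mongod config file unless
# a pidfilepath line already exists. YAML config files are not handled.
ensure_pidfilepath() {
    conf=$1
    pidfile=$2
    if grep -Eq '^[[:space:]]*pidfilepath[[:space:]]*=' "$conf"; then
        echo "pidfilepath already set in $conf"
        return 0
    fi
    # Start on a fresh line if the file does not end with a newline.
    if [ -s "$conf" ] && [ -n "$(tail -c 1 "$conf")" ]; then
        echo >> "$conf"
    fi
    printf 'pidfilepath=%s\n' "$pidfile" >> "$conf"
}

# check_pidfile FILE
# Succeeds if FILE holds the numeric PID of a running process.
check_pidfile() {
    pidfile=$1
    if [ ! -r "$pidfile" ]; then
        echo "cannot read PID file: $pidfile" >&2
        return 1
    fi
    pid=$(tr -d '[:space:]' < "$pidfile")
    case $pid in
        '' | *[!0-9]*)
            echo "not a PID: '$pid'" >&2
            return 1 ;;
    esac
    # /proc/PID exists on Linux whoever owns the process; kill -0 is a fallback
    # elsewhere but fails for other users' processes unless run as root.
    if [ -d "/proc/$pid" ] || kill -0 "$pid" 2>/dev/null; then
        return 0
    fi
    echo "no running process with PID $pid" >&2
    return 1
}

# find_mongo_bindir [DIR...]
# Prints the first directory containing an executable mongod. Defaults to the
# four locations listed in the requirements above.
find_mongo_bindir() {
    [ "$#" -gt 0 ] || set -- /usr/bin /usr/local/bin /usr/local/mongod/bin /opt/local/mongodb
    for dir in "$@"; do
        if [ -x "$dir/mongod" ]; then
            echo "$dir"
            return 0
        fi
    done
    echo "mongod not found in: $*" >&2
    return 1
}

# rs_members HOST
# Prints one "name stateStr" line per replica set member (legacy mongo shell).
rs_members() {
    mongo --quiet --host "$1" --eval \
        'rs.status().members.forEach(function (m) { print(m.name + " " + m.stateStr); })'
}

# count_primaries: reads "name stateStr" lines on stdin and prints how many
# members are PRIMARY; a healthy replica set has exactly one.
count_primaries() {
    awk '$2 == "PRIMARY" { n++ } END { print n + 0 }'
}
```

Run `check_pidfile /var/lib/mongodb/mongodb.pid` and `find_mongo_bindir` on every node: both should succeed, and the printed directory should be the same everywhere. `rs_members mongo1 | count_primaries` should print 1 for a healthy replica set. Note that `ensure_pidfilepath` only edits the file (root is needed for /etc/mongodb.conf); mongod must still be restarted to pick up the change.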

Architecture

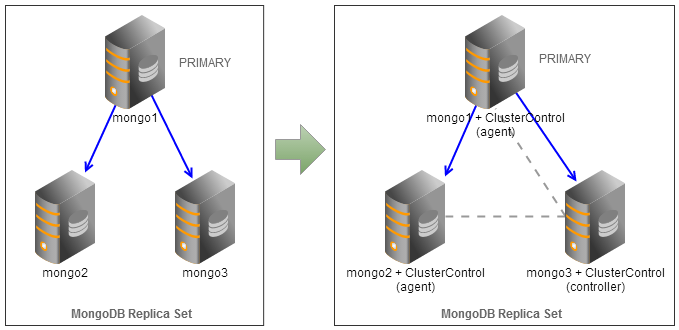

We have a three-node MongoDB Replica Set (one primary, one secondary and one arbiter) running on Ubuntu 12.04. All mongod instances were installed from the 10gen apt repository. The ClusterControl controller will be installed on mongo3, which is configured as the replica set's arbiter, so this is where we will perform the installation.
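With this topology, step 3 of the installation asks for the arbiter and the data-bearing replSet members as whitespace-separated ip:port lists. A hypothetical shell helper (not part of the bootstrap script) can derive both strings from a small role inventory; the inventory format is an assumption, and the addresses used below are the ones entered in the wizard example later in this post.

```shell
#!/bin/sh
# wizard_lists: hypothetical helper, not part of the bootstrap script.
# Reads "ROLE IP:PORT" lines on stdin, where ROLE is "arbiter" or "data"
# (a data-bearing member, primary or secondary), and prints the two
# whitespace-separated lists the bootstrap wizard asks for.
# Exits non-zero if any line has an unknown role.
wizard_lists() {
    awk '
        NF == 0 { next }    # skip blank lines
        $1 == "arbiter" { arb = arb (arb == "" ? "" : " ") $2; next }
        $1 == "data"    { dat = dat (dat == "" ? "" : " ") $2; next }
        { printf "unknown role on line %d: %s\n", NR, $0 > "/dev/stderr"; bad = 1 }
        END {
            print "arbiters: " arb
            print "replset: " dat
            exit bad + 0
        }'
}
```

For this post's setup, feeding it `data 192.168.197.181:27017`, `data 192.168.197.182:27017` and `arbiter 192.168.197.183:27017` prints `arbiters: 192.168.197.183:27017` and `replset: 192.168.197.181:27017 192.168.197.182:27017`, matching the answers given at the arbiter and replSet prompts.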

Let’s verify our replica set to make sure it meets the requirements. First, configure MongoDB to generate a PID file by adding the following line to /etc/mongodb.conf:

pidfilepath=/var/lib/mongodb/mongodb.pid

Restart MongoDB to apply the change:

$ sudo service mongodb restart

To verify the PID file, print its contents; it should contain the PID of the running mongod process:

$ cat /var/lib/mongodb/mongodb.pid

39101

Alternatively, check the pidfilepath setting from the mongo shell:

rs0:PRIMARY> db.adminCommand({ getCmdLineOpts: 1 })

Next, check where the MongoDB binaries are installed. Any method works, such as locate, command -v or find:

$ sudo updatedb

$ locate mongo | grep bin

/usr/bin/mongo

/usr/bin/mongod

/usr/bin/mongodump

/usr/bin/mongoexport

/usr/bin/mongofiles

/usr/bin/mongoimport

/usr/bin/mongooplog

...

Finally, verify that the replica set is configured properly:

rs0:PRIMARY> rs.status()
{
    "set" : "rs0",
    "date" : ISODate("2014-01-09T12:23:59Z"),
    "myState" : 1,
    "members" : [
        {
            "_id" : 0,
            "name" : "mongo1:27017",
            "health" : 1,
            "state" : 1,
            "stateStr" : "PRIMARY",
            "uptime" : 84,
            "optime" : Timestamp(1389270224, 1),
            "optimeDate" : ISODate("2014-01-09T12:23:44Z"),
            "self" : true
        },
        {
            "_id" : 1,
            "name" : "mongo2:27017",
            "health" : 1,
            "state" : 2,
            "stateStr" : "SECONDARY",
            "uptime" : 27,
            "optime" : Timestamp(1389270224, 1),
            "optimeDate" : ISODate("2014-01-09T12:23:44Z"),
            "lastHeartbeat" : ISODate("2014-01-09T12:23:58Z"),
            "lastHeartbeatRecv" : ISODate("2014-01-09T12:23:58Z"),
            "pingMs" : 1,
            "syncingTo" : "mongo1:27017"
        },
        {
            "_id" : 2,
            "name" : "mongo3:27017",
            "health" : 1,
            "state" : 7,
            "stateStr" : "ARBITER",
            "uptime" : 15,
            "lastHeartbeat" : ISODate("2014-01-09T12:23:58Z"),
            "lastHeartbeatRecv" : ISODate("2014-01-09T12:23:58Z"),
            "pingMs" : 1
        }
    ],
    "ok" : 1
}

Installing ClusterControl
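The wizard in step 3 asks for an SSH key that ClusterControl uses to perform installation and management on all hosts, and presumes all hosts use the same SSH port. If passwordless SSH from mongo3 to mongo1 and mongo2 is not already in place, one common way to prepare it beforehand is ssh-keygen plus ssh-copy-id. The sketch below is an assumption about your environment rather than part of the bootstrap script: prepare_ssh is a hypothetical helper, the user, port and host names are placeholders, and DRY_RUN=1 only prints the commands it would run.

```shell
#!/bin/sh
# prepare_ssh USER PORT HOST...   (hypothetical helper)
# Creates ~/.ssh/id_rsa without a passphrase if it is missing, then copies the
# public key to each HOST. With DRY_RUN=1 the commands are printed, not run.
prepare_ssh() {
    user=$1
    port=$2
    shift 2
    key="$HOME/.ssh/id_rsa"

    # run: execute the command, or just print it when DRY_RUN=1.
    run() {
        if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
    }

    if [ ! -f "$key" ]; then
        run mkdir -p "$HOME/.ssh"
        run chmod 700 "$HOME/.ssh"
        run ssh-keygen -t rsa -N "" -f "$key"
    fi
    for host in "$@"; do
        # ssh-copy-id prompts once for the remote password; -p is accepted by
        # recent OpenSSH releases but may be missing from older ones.
        run ssh-copy-id -i "$key.pub" -p "$port" "$user@$host"
    done
}
```

For example, `DRY_RUN=1 prepare_ssh ubuntu 22 mongo1 mongo2` previews the commands; drop DRY_RUN to run them. If your ssh-copy-id does not accept -p, copy the public key into each host's ~/.ssh/authorized_keys manually instead.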

1. On mongo3, get the bootstrap script ready for installation:

$ wget https://severalnines.com/downloads/cmon/cc-bootstrap.tar.gz

$ tar zxvf cc-bootstrap.tar.gz

$ cd cc-bootstrap-*

2. Start the installation:

$ ./s9s_bootstrap --install

3. Follow the configuration wizard (example below):

=============================================

ClusterControl Bootstrap Configurator

=============================================

Is this your Controller host IP, 192.168.197.183 [Y/n]:

ClusterControl requires an email address to be configured as super admin user.

What is your email address [<default address>]: <your email address>

What is your username [ubuntu] (e.g, ubuntu or root for RHEL):

We presume that all hosts in the cluster are running on the same OS distribution.

ClusterControl needs to use a shared key to perform installation and management on all hosts.

Where is your SSH key? (it will be generated if not exist) [/home/ubuntu/.ssh/id_rsa]:

What is your SSH port? [22]:

We presume all hosts in the cluster are running on the same SSH port.

ClusterControl needs to have a directory for installation purposes.

Enter a directory that will be used during this installation [/home/ubuntu/s9s]:

What is your database cluster type [galera] (mysqlcluster|replication|galera|mongodb): mongodb

** MongoDB Replica Set: Minimum 3 nodes (with arbiter) are required (excluding ClusterControl node).

** MongoDB Sharded Cluster: Minimum 3 nodes are required (excluding ClusterControl node).

What type of MongoDB cluster do you have [replicaset] (replicaset|shardedcluster):

Specify MongoDB arbiter instances if any (ip:port) [] (white space separated): 192.168.197.183:27017

Where are your MongoDB shardsvr/replSet instances (ip:port) [ip1:27017 ip2:27017 ... ipN:30000] (white space separated): 192.168.197.181:27017 192.168.197.182:27017

Where are your cluster data dbpath directories [] (white space separated:/mnt/ /mnt/): /data/db

ClusterControl requires MySQL server to be installed on this host. Checking for MySQL server on localhost..

Found a MySQL server.

Enter the MySQL root password for this host [password]:

ClusterControl will create a MySQL user called 'cmon' to perform management, monitoring and automation tasks.

Enter the user cmon password [cmon]: cmonP4ss

Checking for Apache and PHP5..

Found Apache and PHP binary.

Where do you want ClusterControl web app to be installed to? [/var/www]:

=========================================================================

Configuration is now complete. You may proceed to install ClusterControl.

=========================================================================

4. Wait until the deployment completes. If the installation is successful, you will see the following:

=================================================

### CLUSTERCONTROL INSTALLATION COMPLETED. ###

Kindly proceed to register your cluster with following details:

URL : https://192.168.197.183/cmonapi

Username : <the email address entered in the wizard>

Password : admin

ClusterControl API Token, 806132dcc3d04ac5bc531279cd943e03264ad305

ClusterControl API URL, https://192.168.197.183/cmonapi

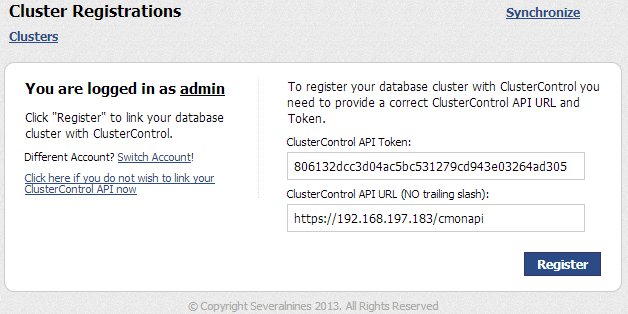

=================================================

5. Register the cluster by pointing your web browser to the ClusterControl API URL. Click “Login Now” and log in to ClusterControl with the username and default password shown above. You will then be redirected to the Cluster Registrations page. Enter the ClusterControl API Token generated for this installation, as in the screenshot below:

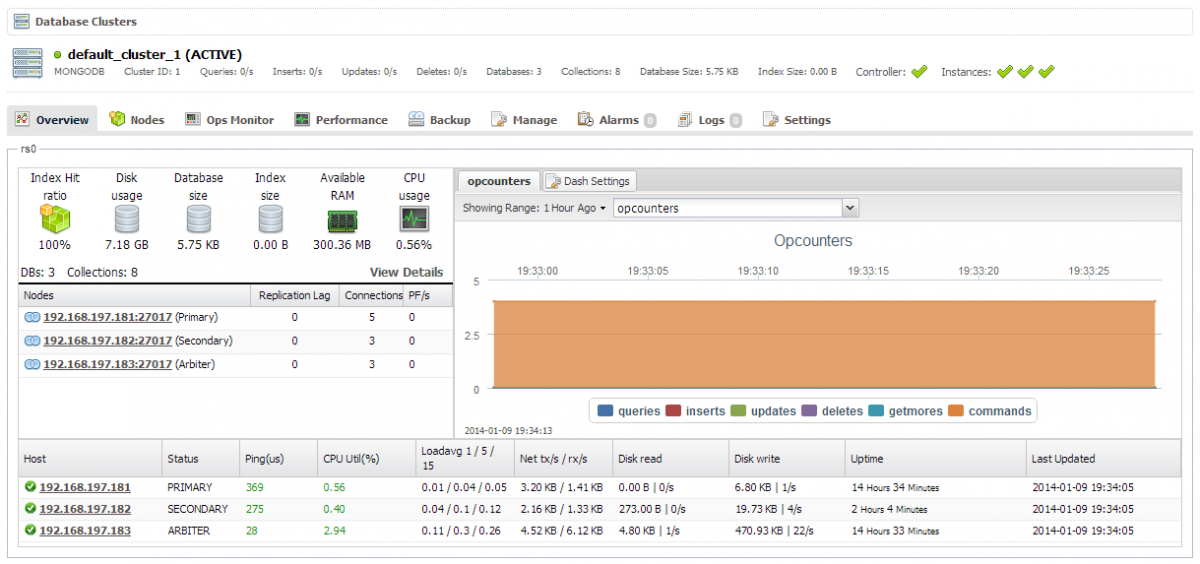

Click “Register”. You should now see your MongoDB Replica Set in the ClusterControl UI.

Post-Installation

From the ClusterControl UI, you can click the “Help” menu (at the top of the page) for a product tour. This is a quick way to learn the functionality available on the current page.

If you encounter any problems during the installation process, or have any questions, please visit our Support portal at https://support.severalnines.com/.

Congratulations, you’ve now got cluster management for your existing MongoDB/TokuMX Replica Set!