Hybrid Cloud Replication for MySQL for High Availability

Hybrid environments, where part of the database infrastructure is located on-prem and part of it is located in a public cloud, are not uncommon. There may be different reasons to use such a setup: scalability, flexibility, high availability, disaster recovery. How do you implement it properly? This can be challenging, as you have to consider several pieces of a puzzle that have to fit together. This blog is intended to give you some insight into what such a setup may look like.

Connectivity

We are not going into details here because there are many ways of setting up connectivity between your on-prem setup and the public cloud. It will depend on the infrastructure you have in place, the public cloud you want to use and many other factors. The options range from BGP-enabled routers, through hardware and software VPNs, to SSH tunnels as a way to temporarily connect your network to the instances in a public cloud. What is important is that, whatever you do, the final outcome should be full and transparent connectivity from your on-premises network to the instances located in the public cloud.
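
Whichever option you choose, it is worth verifying that the database port is actually reachable across the link before building replication on top of it. Below is a minimal sketch of such a check in Python. It only tests that a TCP connection can be opened; the default port 3306 and the 3-second timeout are assumptions, and the helper names are ours, not part of any tool mentioned in this blog.

```python
import socket


def can_reach(host: str, port: int = 3306, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS resolution failures.
        return False


def unreachable_nodes(hosts, port: int = 3306, timeout: float = 3.0):
    """Return the subset of hosts that could not be reached, in input order."""
    return [h for h in hosts if not can_reach(h, port, timeout)]
```

Running `unreachable_nodes([...])` from an on-prem host with the addresses of your cloud instances (and the other way round) gives a quick indication of whether the connectivity is really transparent in both directions.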

High Availability Considerations

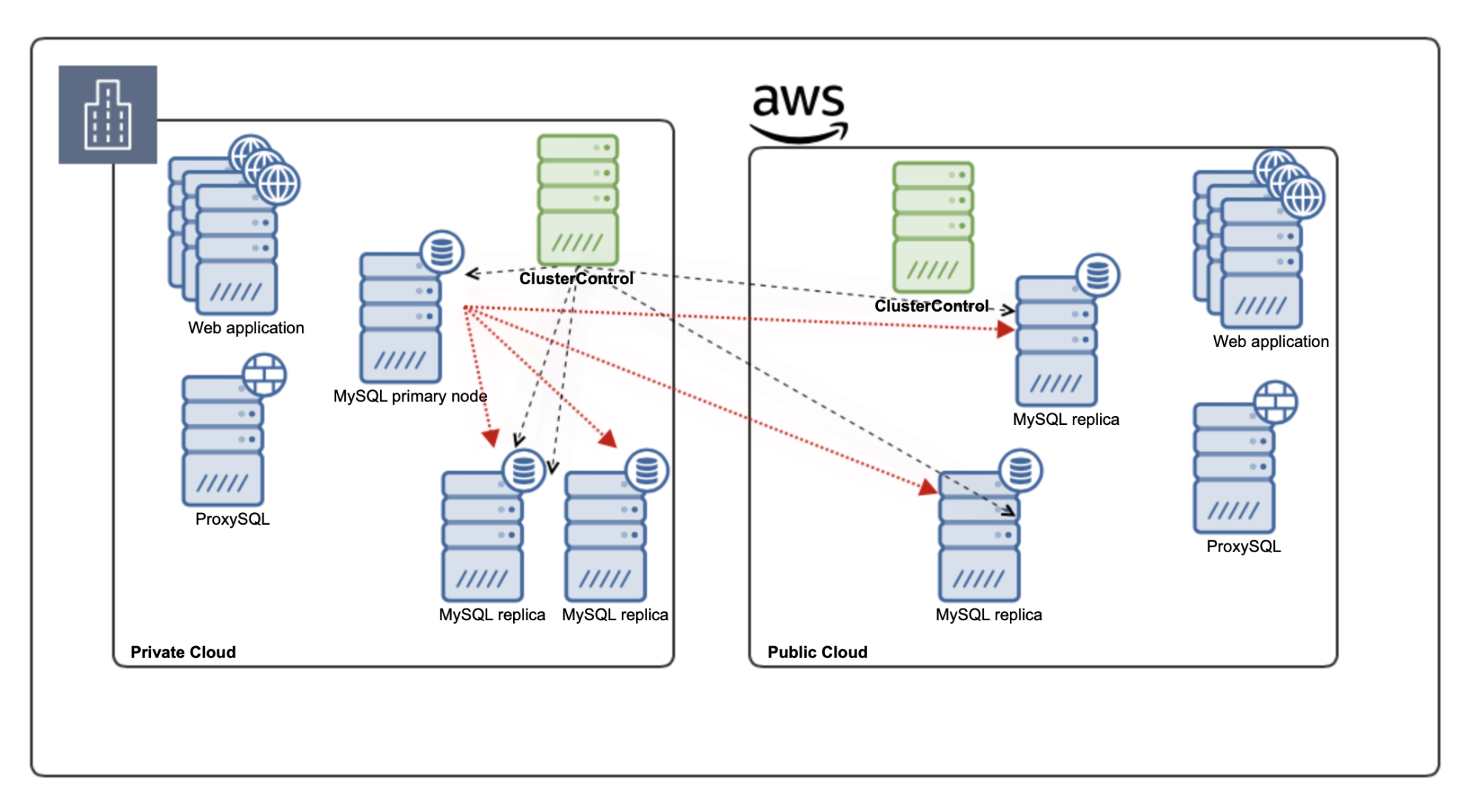

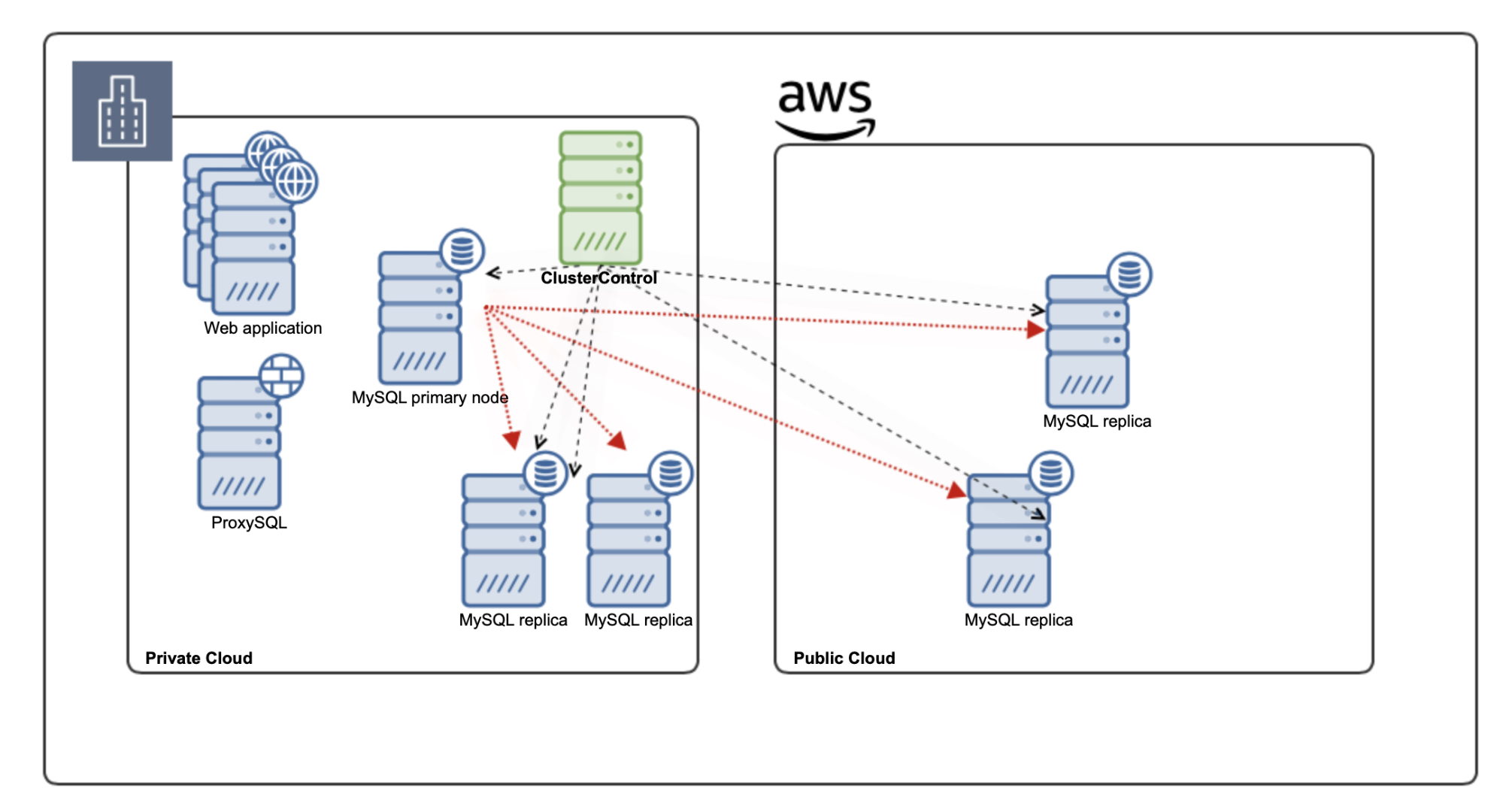

MySQL replication is a great way to build highly available systems but it comes with significant limitations. The main thing to consider is the writer: you can have only one place to send your writes to, the master. No matter how you want to design the whole environment, you have to carefully consider the placement of the master. Most likely you want it to be in the part of the environment that contains the application hosts. Let’s consider the following setup:

We have an on-prem setup with three MySQL nodes and two additional slaves located in the public cloud, acting as a disaster recovery means for the company. It is quite clear that the writeable node should be collocated with the application hosts in the private part of the cloud. We want to keep the latency as low as possible for the connections that matter the most.

This kind of design focuses on the availability of the databases: if the nodes located on-prem become unavailable, application hosts may be able to connect to the remote part of the setup, the database nodes located in the public cloud. Ideally, you would use some sort of proxy for this. ProxySQL is one of the solutions that can track the topology and reconfigure itself as needed based on the existing replication chain.
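
To illustrate the idea of topology-aware routing, here is a simplified model in Python. It is not ProxySQL's implementation or configuration, only a sketch of the decision such a proxy has to make: the writer is the single reachable node that is not read-only, and readers are the reachable read-only nodes, with local ones preferred. The `Node` fields, the site labels and the host names used with it are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Node:
    host: str         # hypothetical host name
    site: str         # e.g. "on-prem" or "cloud"
    read_only: bool   # value of MySQL's read_only variable on that node
    reachable: bool   # result of a health check


def route(nodes: List[Node], local_site: str) -> Tuple[Optional[Node], List[Node]]:
    """Return (writer, readers) as seen by a proxy running in local_site."""
    alive = [n for n in nodes if n.reachable]
    writable = [n for n in alive if not n.read_only]
    # Route writes only when exactly one writable node is visible;
    # zero or several writable nodes means the topology needs attention.
    writer = writable[0] if len(writable) == 1 else None
    # Stable sort: readers in the proxy's own site come first.
    readers = sorted(
        (n for n in alive if n.read_only),
        key=lambda n: n.site != local_site,
    )
    return writer, readers
```

With the five-node setup described above, a proxy in the public cloud would send writes to the on-prem master and prefer the two cloud slaves for reads. If the on-prem nodes disappear, `route` returns no writer until one of the cloud slaves is promoted and made writable.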

If you do want to consider more of an active-active setup, where you have application nodes across both the private and public parts, you have to make some compromises, as the writes will have to be transferred over the WAN from the public to the private cloud (or vice versa, if the public cloud is the main location where you operate).
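
To get a feeling for the size of that compromise, a rough back-of-the-envelope model helps. The sketch below assumes that every statement in a transaction is sent one by one and therefore costs one network round trip plus its execution time. The numbers in the usage note are purely illustrative assumptions, not measurements.

```python
def transaction_latency_ms(statements: int, rtt_ms: float, exec_ms: float = 1.0) -> float:
    """Estimate the duration of a transaction whose statements are sent sequentially.

    statements: number of statements sent one after another
    rtt_ms:     network round-trip time between application and master
    exec_ms:    assumed execution time per statement on the master
    """
    return statements * (rtt_ms + exec_ms)
```

Under these assumptions, a five-statement transaction over a LAN with a 0.5 ms round trip takes `transaction_latency_ms(5, 0.5)`, which is 7.5 ms, while the same transaction over a WAN link with a 40 ms round trip takes `transaction_latency_ms(5, 40.0)`, which is 205 ms. The model ignores connection setup, batching and server-side contention, so treat it as an order-of-magnitude estimate only.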

Again, ProxySQL is the proxy of choice. Better still, ProxySQL can be configured as a ProxySQL Cluster, ensuring that configuration changes introduced on one node are replicated across the remaining ProxySQL nodes.

Failure Handling

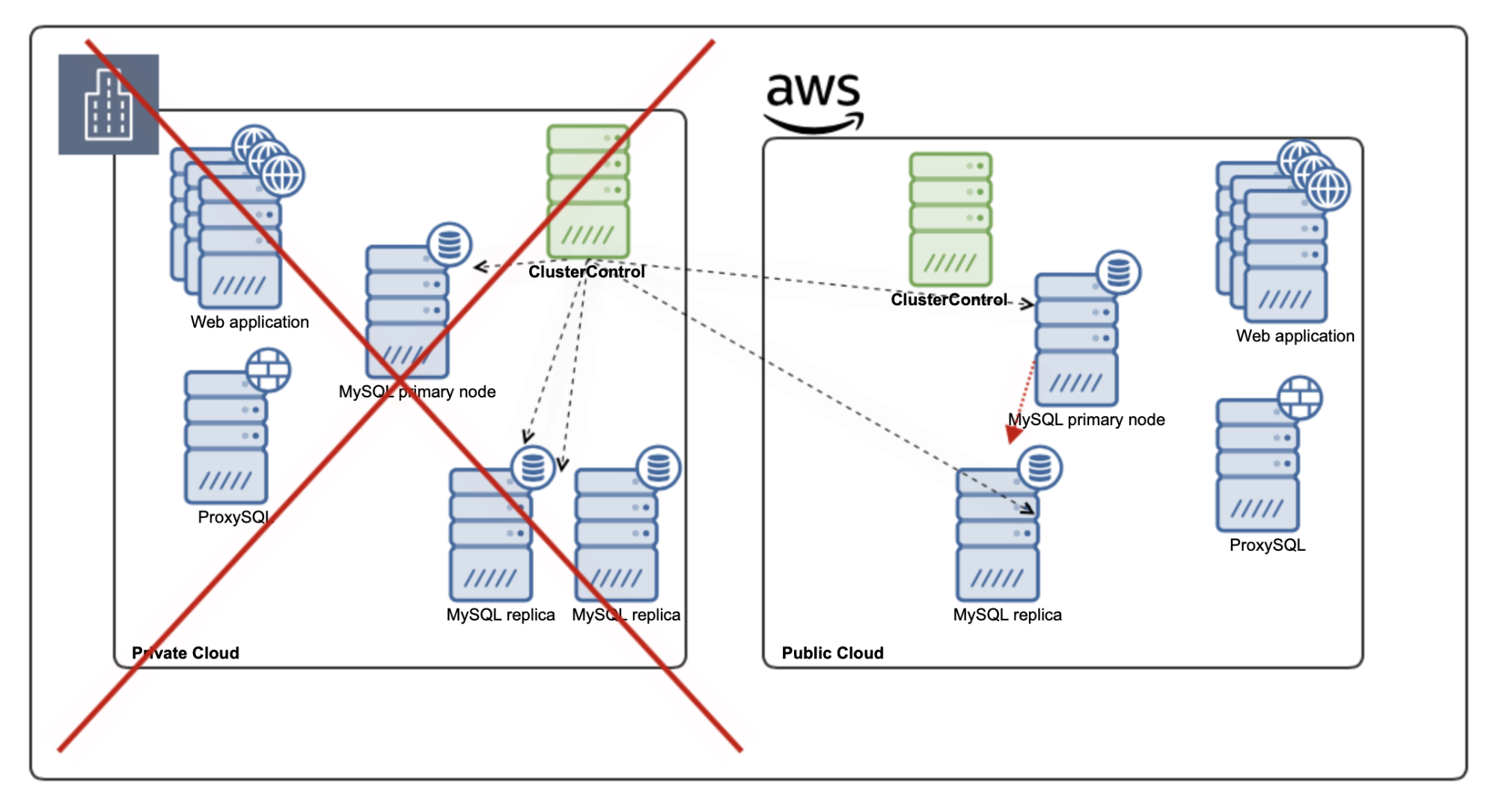

Let’s consider a couple of failure scenarios. First of all, we have to keep in mind that MySQL asynchronous replication is not cluster-aware, therefore a network split has to be handled manually: it is up to the user to make the decision and pull the switch to promote one of the slaves in the part of the environment that is still available. It is also up to the user to ensure that the part of the environment that has lost network connectivity behaves as it should and does not continue to operate.

If the private part of the cloud becomes unavailable then, as we mentioned earlier, manual action will be required to promote one of the slaves to become a new master. All remaining web application servers located in the public cloud, using local ProxySQL, will then have their traffic redirected to the new master and the remaining slaves. In addition, given that we have lost three out of five MySQL nodes, we want to scale out the public cloud setup. ClusterControl can help you add nodes to your cluster efficiently.
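
As a rough illustration of what the manual promotion involves, the sketch below builds an ordered list of SQL statements to run on each host. It assumes GTID-based replication (hence `MASTER_AUTO_POSITION=1`) and that the chosen slave has already applied everything it received. It does not connect to any database, and the function and host names are hypothetical. Treat it as a checklist generator, not as a complete failover procedure.

```python
from typing import List, Optional, Tuple


def promotion_plan(
    new_master: str,
    other_replicas: List[str],
    old_master: Optional[str] = None,
) -> List[Tuple[str, str]]:
    """Return ordered (host, sql) steps for a manual failover with GTID replication."""
    steps: List[Tuple[str, str]] = []
    if old_master is not None:
        # Fence the old master first, but only if it can still be reached.
        steps.append((old_master, "SET GLOBAL super_read_only = ON"))
    # Detach the chosen slave from its old master and open it for writes.
    steps += [
        (new_master, "STOP SLAVE"),
        (new_master, "RESET SLAVE ALL"),
        (new_master, "SET GLOBAL read_only = OFF"),
    ]
    # Repoint every remaining slave to the new master.
    for host in other_replicas:
        steps += [
            (host, "STOP SLAVE"),
            (host, f"CHANGE MASTER TO MASTER_HOST='{new_master}', MASTER_AUTO_POSITION=1"),
            (host, "START SLAVE"),
        ]
    return steps
```

In the scenario above the old master is unreachable, so `old_master` is left as `None`. The part of the environment that lost connectivity still has to be prevented from accepting writes by other means before it rejoins.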

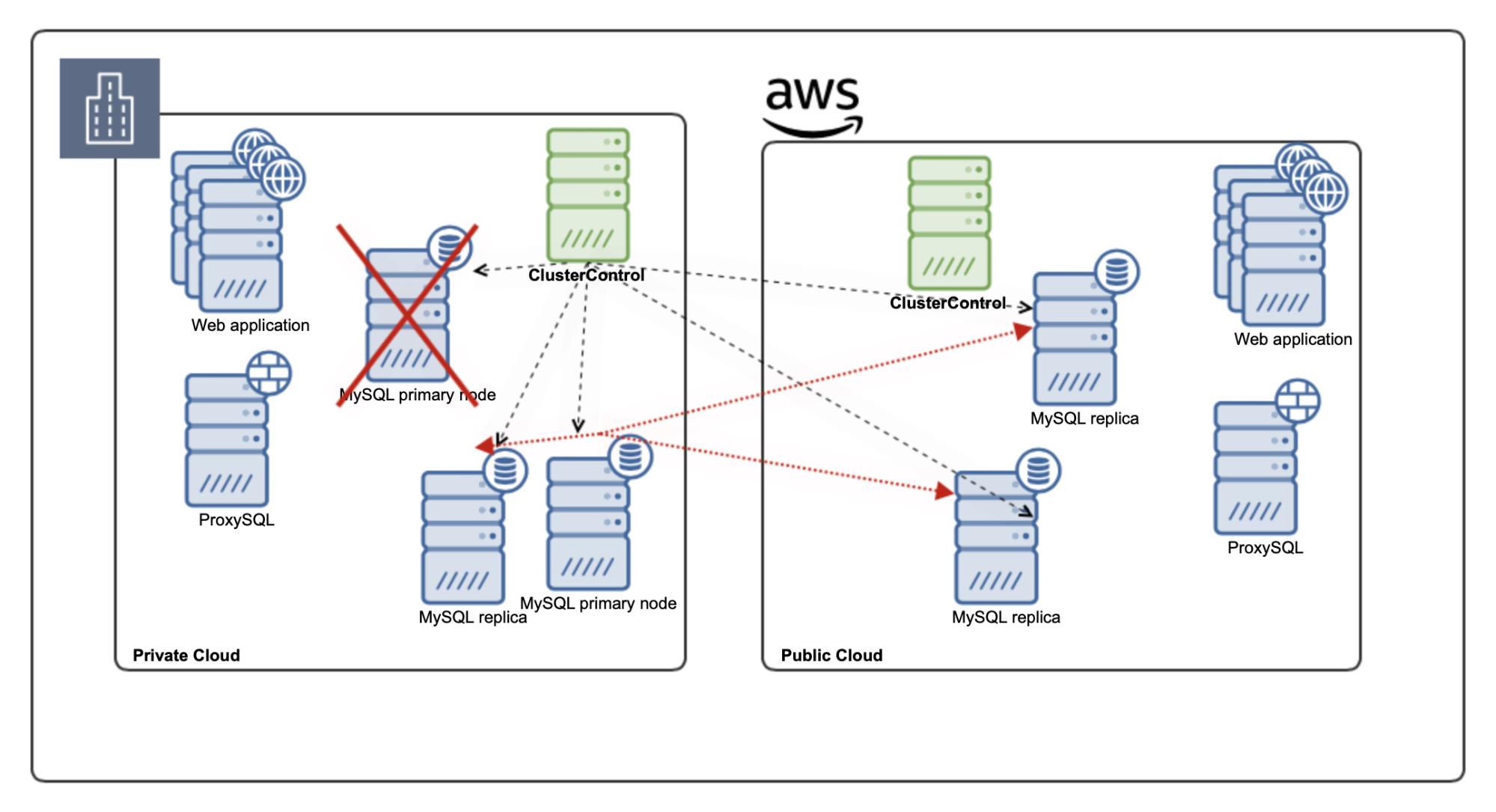

Another scenario might be that the writer has crashed while the connectivity between our on-prem setup and the public cloud works just fine.

In such a scenario we want to promote one of the slaves to become a new master. Depending on the requirements, we may also want the new master to be picked from the nodes in a given part of the environment. ClusterControl has the ability to whitelist or blacklist nodes for failover, ensuring that you have full control over the failover process and that you can pick which nodes should be considered as candidates for a new master, and in which order.
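
The logic behind whitelisting and blacklisting can be sketched in a few lines of Python. This is not ClusterControl's implementation or its configuration format, only an assumed model of the idea: a whitelist, if present, defines both the eligible nodes and their order of preference, while a blacklist removes nodes from consideration. The function and host names are hypothetical.

```python
from typing import Iterable, List, Optional


def failover_candidates(
    replicas: List[str],
    whitelist: Optional[List[str]] = None,
    blacklist: Optional[Iterable[str]] = None,
) -> List[str]:
    """Return the replicas eligible for promotion, in order of preference."""
    excluded = set(blacklist or [])
    if whitelist:
        # Whitelist order is the preference order; skip hosts that are
        # not currently available as replicas.
        ordered = [h for h in whitelist if h in replicas]
    else:
        ordered = list(replicas)
    return [h for h in ordered if h not in excluded]
```

For example, to keep the master on-prem whenever possible, you could whitelist only the on-prem slaves, or alternatively blacklist the cloud nodes. An empty result means no node is eligible and the failover needs a manual decision.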

We hope this blog gave you some idea of how a hybrid cloud setup for MySQL replication works and how it can protect you in case of database or network failures.