How to Secure the ClusterControl Server

In our previous blog post, we showed you how you can secure your open source databases with ClusterControl. But what about the ClusterControl server itself? How do we secure it? This will be the topic for today’s blog. We assume the host is solely for ClusterControl usage, with no other applications running on it.

Firewall & Security Group

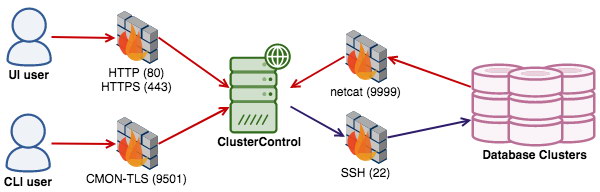

First and foremost, we should close all unnecessary ports and open only the ports used by ClusterControl. Internally, between ClusterControl and the database servers, only the netcat port matters, which defaults to 9999. This port needs to be opened only if you would like to store backups on the ClusterControl server. Otherwise, you can close it.

From the external network, it's recommended to only open access to either HTTP (80) or HTTPS (443) for the ClusterControl UI. If you are running the ClusterControl CLI called 's9s', the CMON-TLS endpoint needs to be opened on port 9501. It's also possible to install database-related applications on the ClusterControl server, such as HAProxy, Keepalived and ProxySQL. In that case, you have to open the necessary ports for these as well. Please refer to the documentation page for a list of ports for each service.

To set up firewall rules via iptables, run the following on the ClusterControl node:

$ iptables -A INPUT -p tcp --dport 9999 -j ACCEPT

$ iptables -A INPUT -p tcp --dport 80 -j ACCEPT

$ iptables -A INPUT -p tcp --dport 443 -j ACCEPT

$ iptables -A INPUT -p tcp --dport 9501 -j ACCEPT

$ iptables -A OUTPUT -p tcp --dport 22 -j ACCEPT

The above are the simplest commands. You can be stricter and extend the commands to follow your security policy, for example by adding the network interface, destination address, source address, connection state and so on.
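As a rough illustration of such stricter rules, the sketch below prints (but does not apply) variants of the commands above that are restricted by source address and connection state. The function name `cc_firewall_rules` and the two example networks are assumptions made for this sketch, not ClusterControl defaults.

```shell
#!/bin/sh
# Print (not apply) stricter variants of the rules shown above.
# Both networks passed in below are hypothetical examples.
cc_firewall_rules() {
    db_net="$1"      # network of the database nodes
    admin_net="$2"   # network allowed to reach the UI and the s9s endpoint
    # Allow replies to connections that are already established.
    echo "iptables -A INPUT -m conntrack --ctstate ESTABLISHED,RELATED -j ACCEPT"
    # netcat backup streaming: only from the database nodes.
    echo "iptables -A INPUT -p tcp -s $db_net --dport 9999 -m conntrack --ctstate NEW -j ACCEPT"
    # HTTPS UI and CMON-TLS: only from the admin network.
    for port in 443 9501; do
        echo "iptables -A INPUT -p tcp -s $admin_net --dport $port -m conntrack --ctstate NEW -j ACCEPT"
    done
    # Outgoing SSH from ClusterControl to the database nodes.
    echo "iptables -A OUTPUT -p tcp -d $db_net --dport 22 -j ACCEPT"
}

cc_firewall_rules 10.0.0.0/24 192.168.1.0/24
```

Review the printed commands before running them as root. Like the simpler rules, they only restrict anything when combined with a default policy that drops other traffic.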

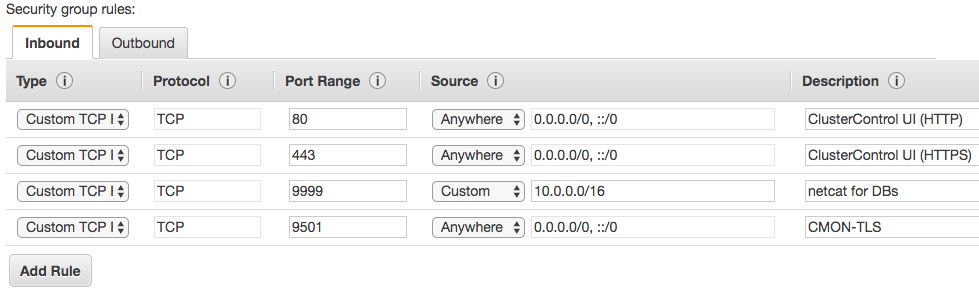

Similarly, if you are running the setup in the cloud, the following is an example of inbound security group rules for the ClusterControl server on AWS:

Different cloud providers provide different security group implementations, but the basic rules are similar.
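For those who prefer the command line, the following sketch prints AWS CLI commands that would create comparable inbound rules. The security group ID and CIDR ranges are placeholders, and the port selection (443 and 9501 from an admin network, 9999 from the database network) is an assumption based on the ports discussed above rather than a copy of the rules in the screenshot.

```shell
#!/bin/sh
# Print (not run) AWS CLI commands for inbound rules on a ClusterControl host.
# The group ID and CIDR ranges passed in below are hypothetical placeholders.
cc_sg_rules() {
    sg_id="$1"; admin_cidr="$2"; db_cidr="$3"
    # UI over HTTPS and the CMON-TLS endpoint, from the admin network only.
    for port in 443 9501; do
        echo "aws ec2 authorize-security-group-ingress --group-id $sg_id --protocol tcp --port $port --cidr $admin_cidr"
    done
    # netcat backup streaming, from the database nodes only.
    echo "aws ec2 authorize-security-group-ingress --group-id $sg_id --protocol tcp --port 9999 --cidr $db_cidr"
}

cc_sg_rules sg-0123456789abcdef0 203.0.113.0/24 10.0.0.0/24
```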

Encryption

ClusterControl supports encryption of communications at different levels, to ensure the automation, monitoring and management tasks are performed as securely as possible.

Running on HTTPS

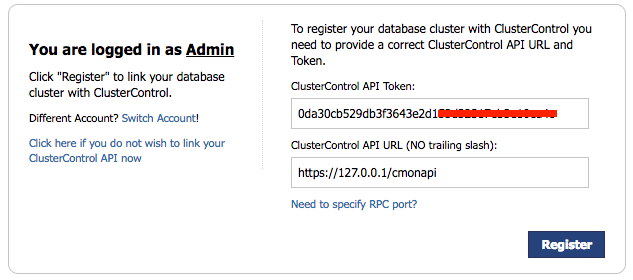

The installer script (install-cc.sh) configures a self-signed SSL certificate for HTTPS usage by default. If you choose this access method as the main endpoint, you can block the plain HTTP service running on port 80 from the external network. However, ClusterControl still requires access to CMONAPI (a legacy REST API interface), which runs by default on port 80 on localhost. If you would like to block the HTTP port entirely, make sure you change the ClusterControl API URL under the Cluster Registrations page to use HTTPS instead:

The self-signed certificate configured by ClusterControl has 10 years (3650 days) of validity. You can verify the certificate validity by using the following command (on CentOS 7 server):

$ openssl x509 -in /etc/ssl/certs/s9server.crt -text -noout

...

Validity

Not Before: Apr 9 21:22:42 2014 GMT

Not After : Mar 16 21:22:42 2114 GMT

...

Take note that the absolute path to the certificate file might be different depending on the operating system.
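If you only care about the expiry date, a shorter check is possible. The sketch below uses the `-enddate` and `-checkend` options of `openssl x509`. The helper names and the 30-day threshold are illustrative assumptions, and the default path is the CentOS 7 one from the example above.

```shell
#!/bin/sh
# Path taken from the CentOS 7 example above; it may differ on other systems.
CERT="${CERT:-/etc/ssl/certs/s9server.crt}"

# Convert a number of days to seconds for openssl's -checkend option.
days_to_seconds() {
    echo $(( $1 * 86400 ))
}

# Succeeds if the certificate in $1 is still valid $2 days from now.
cert_valid_for_days() {
    openssl x509 -in "$1" -noout -checkend "$(days_to_seconds "$2")" >/dev/null
}

if [ -f "$CERT" ]; then
    # Print only the expiry date (notAfter=...).
    openssl x509 -in "$CERT" -noout -enddate
    if cert_valid_for_days "$CERT" 30; then
        echo "certificate is valid for at least 30 more days"
    else
        echo "certificate expires within 30 days"
    fi
fi
```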

MySQL Client-Server Encryption

ClusterControl stores monitoring and management data inside MySQL databases on the ClusterControl node. Since MySQL itself supports client-server SSL encryption, ClusterControl is capable of using this feature to establish encrypted communication with the MySQL server when writing and retrieving its data.

The following configuration options are supported for this purpose:

- cmondb_ssl_key – path to SSL key, for SSL encryption between CMON and the CMON DB

- cmondb_ssl_cert – path to SSL cert, for SSL encryption between CMON and the CMON DB

- cmondb_ssl_ca – path to SSL CA, for SSL encryption between CMON and the CMON DB

We covered the configuration steps in this blog post some time back.
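Once these options are set, a quick sanity check can confirm that each one points to a file that actually exists. The following is a minimal sketch, assuming the options are written as plain `key=value` lines without spaces around the equals sign. The helper names `cnf_get` and `check_cmondb_ssl` are made up for this example.

```shell
#!/bin/sh
# Read the value of a key=value option from a cmon configuration file.
# Assumes plain "key=value" lines with no spaces around "=".
cnf_get() {
    # $1 = option name, $2 = configuration file
    sed -n "s/^$1=//p" "$2" | head -n 1
}

# Report whether each cmondb_ssl_* option is set and points to an existing file.
check_cmondb_ssl() {
    cnf="$1"
    status=0
    for opt in cmondb_ssl_key cmondb_ssl_cert cmondb_ssl_ca; do
        path=$(cnf_get "$opt" "$cnf")
        if [ -z "$path" ]; then
            echo "$opt: not set"; status=1
        elif [ ! -f "$path" ]; then
            echo "$opt: $path (missing)"; status=1
        else
            echo "$opt: $path"
        fi
    done
    return $status
}
```

For example, running `check_cmondb_ssl /etc/cmon.cnf` as root prints one line per option and returns a non-zero status if anything is unset or missing.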

There is a catch though. At the time of writing, the ClusterControl UI has a limitation in accessing CMON DB through SSL using the cmon user. As a workaround, we are going to create another database user for the ClusterControl UI and ClusterControl CMONAPI called cmonui. This user will not have SSL enabled on its privilege table.

mysql> GRANT ALL PRIVILEGES ON *.* TO 'cmonui'@'127.0.0.1' IDENTIFIED BY '{password}';

mysql> FLUSH PRIVILEGES;

Update the ClusterControl UI and CMONAPI configuration files, located at clustercontrol/bootstrap.php and cmonapi/config/database.php respectively, with the newly created database user, cmonui:

# /clustercontrol/bootstrap.php

define('DB_LOGIN', 'cmonui');

define('DB_PASS', '{password}');

# /cmonapi/config/database.php

define('DB_USER', 'cmonui');

define('DB_PASS', '{password}');

These files will not be replaced when you perform an upgrade through the package manager.

CLI Encryption

ClusterControl also comes with a command-line interface called 's9s'. This client parses the command line options and sends a specific job to the controller service listening on port 9500 (CMON) or 9501 (CMON with TLS). The latter is the recommended one. The installer script by default will configure s9s CLI to use 9501 as the endpoint port of the ClusterControl server.

Role-Based Access Control

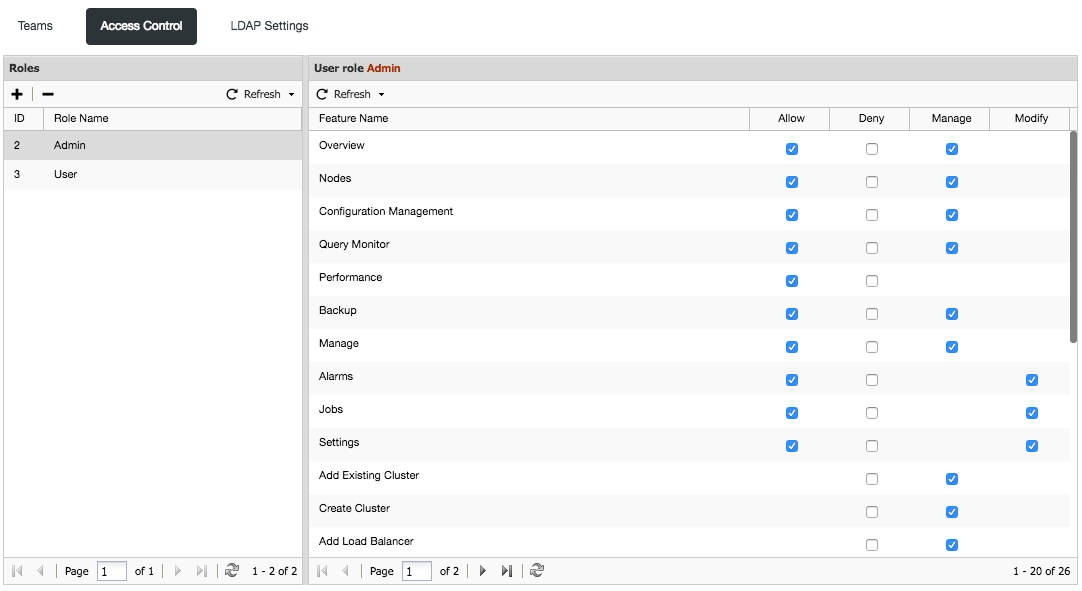

ClusterControl uses Role-Based Access Control (RBAC) to restrict access to clusters and their respective deployment, management and monitoring features. This ensures that only authorized user requests are allowed. Access to functionality is fine-grained, allowing access to be defined by organisation or user. ClusterControl uses a permissions framework to define how a user may interact with the management and monitoring functionality, after they have been authorised to do so.

The RBAC user interface can be accessed via ClusterControl -> User Management -> Access Control:

All of the features are self-explanatory but if you want some additional description, please check out the documentation page.

If you have multiple users involved in database cluster operations, it's highly recommended to set access controls for them accordingly. You can also create multiple teams (organizations) and assign zero or more clusters to each of them.

Running on Custom Ports

ClusterControl can be configured to use custom ports for all the dependent services. ClusterControl uses SSH as the main communication channel to manage and monitor nodes remotely, Apache to serve the ClusterControl UI, and MySQL to store monitoring and management data. You can run these services on custom ports to reduce the attack surface. The following ports are the usual targets:

- SSH - default is 22

- HTTP - default is 80

- HTTPS - default is 443

- MySQL - default is 3306

There are several things you have to change in order to run the above services on custom ports and keep ClusterControl working properly. We have covered this in detail in the documentation page, Running on Custom Port.

Permission and Ownership

ClusterControl configuration files hold sensitive information and should be kept private and well protected. The files must be accessible to the root user and group only, with no read permission for others. If the permissions and ownership have been set incorrectly, the following commands restore them to the correct state:

$ chown root:root /etc/cmon.cnf /etc/cmon.d/*.cnf

$ chmod 700 /etc/cmon.cnf /etc/cmon.d/*.cnf
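To check the current state before or after running these commands, a small audit loop can help. This is only a sketch: the helper name `is_private` is an assumption, and it inspects the mode string printed by `ls -ld` to confirm that group and others have no permissions.

```shell
#!/bin/sh
# Succeeds if the file grants no permissions to group or others.
# Characters 5-10 of the `ls -l` mode string are the group and other bits.
is_private() {
    [ "$(ls -ld "$1" | cut -c5-10)" = "------" ]
}

for f in /etc/cmon.cnf /etc/cmon.d/*.cnf; do
    [ -e "$f" ] || continue          # skip unmatched globs and missing files
    if is_private "$f"; then
        echo "OK       $f"
    else
        echo "TOO OPEN $f"
    fi
done
```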

For the MySQL service, ensure the content of the MySQL data directory belongs to the "mysql" group, while the owning user can be either "mysql" or "root":

$ chown -Rf mysql:mysql /var/lib/mysql

For the ClusterControl UI, the files must be owned by the Apache user, either "apache" for RHEL/CentOS or "www-data" for Debian-based OS.

The SSH key used to connect to the database hosts is another very important aspect, as it holds the identity and must be kept with proper permissions and ownership. Furthermore, SSH won't permit the usage of an unsecured key file when initiating the remote call. Verify that the SSH key file used by the cluster, as defined inside the generated configuration files under the /etc/cmon.d/ directory, is accessible only to the user set in the osuser option. For example, if the osuser is "ubuntu" and the key file is /home/ubuntu/.ssh/id_rsa:

$ chown ubuntu:ubuntu /home/ubuntu/.ssh/id_rsa

$ chmod 700 /home/ubuntu/.ssh/id_rsa

Use a Strong Password

If you use the installer script to install ClusterControl, you are encouraged to use a strong password when prompted by the installer. There are at most two accounts that the installer script will need to configure (depending on your setup):

- MySQL cmon password - Default value is 'cmon'.

- MySQL root password - Default value is 'password'.

It is the user's responsibility to use strong passwords for those two accounts. The installer script supports a number of special characters for your password input, as mentioned in the installation wizard:

=> Set a password for ClusterControl's MySQL user (cmon) [cmon]

=> Supported special password characters: ~!@#$%^&*()_+{}<>?

Verify the content of /etc/cmon.cnf and /etc/cmon.d/cmon_*.cnf and ensure you are using a strong password whenever possible.
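Before typing a password into the installer, you could pre-check it against the supported character list. The sketch below is one way to do that. The minimum length of 12 is a hypothetical policy value, and treating letters and digits as allowed alongside the listed special characters is an assumption.

```shell
#!/bin/sh
# Characters listed by the installer wizard, plus letters and digits
# (allowing alphanumerics is an assumption of this sketch).
ALLOWED='A-Za-z0-9~!@#$%^&*()_+{}<>?'
MIN_LENGTH=12   # hypothetical policy value, not a ClusterControl requirement

# Succeeds if the password is long enough and uses only supported characters.
password_ok() {
    pw="$1"
    # Reject passwords shorter than the minimum length.
    [ "${#pw}" -ge "$MIN_LENGTH" ] || return 1
    # Delete every allowed character; anything left over is unsupported.
    rest=$(printf '%s' "$pw" | LC_ALL=C tr -d "$ALLOWED")
    [ -z "$rest" ]
}
```

A call such as `password_ok 'Str0ng~Pass!2018' && echo accepted` prints "accepted", while a password containing a space or a semicolon is rejected.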

Changing the MySQL 'cmon' Password

If the configured password does not satisfy your password policy, you need to perform several steps to change the MySQL cmon password:

- Change the password inside the ClusterControl's MySQL server:

mysql> ALTER USER 'cmon'@'127.0.0.1' IDENTIFIED BY 'newPass';

mysql> ALTER USER 'cmon'@'{ClusterControl IP address or hostname}' IDENTIFIED BY 'newPass';

mysql> FLUSH PRIVILEGES;

- Update all occurrences of the 'mysql_password' option for the controller service inside /etc/cmon.cnf and /etc/cmon.d/*.cnf:

mysql_password=newPass

- Update all occurrences of the 'DB_PASS' constant for the ClusterControl UI inside /var/www/html/clustercontrol/bootstrap.php and /var/www/html/cmonapi/config/database.php:

# /clustercontrol/bootstrap.php

define('DB_PASS', 'newPass');

# /cmonapi/config/database.php

define('DB_PASS', 'newPass');

- Change the password on every MySQL server monitored by ClusterControl:

mysql> ALTER USER 'cmon'@'{ClusterControl IP address or hostname}' IDENTIFIED BY 'newPass';

mysql> FLUSH PRIVILEGES;

- Restart the CMON service to apply the changes:

$ service cmon restart # systemctl restart cmon
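The configuration file update in the steps above is easy to get wrong by hand when several cluster files exist. The following sketch rewrites the `mysql_password` line in a given file. The function name `set_mysql_password` is an assumption, and it expects the option to be written as a plain `mysql_password=value` line. It writes back with `cat` so that the file keeps its existing ownership and permissions.

```shell
#!/bin/sh
# Replace the mysql_password option in one cmon configuration file.
# $1 = configuration file, $2 = new password
set_mysql_password() {
    cnf="$1"
    tmp=$(mktemp) || return 1
    # Pass the password through the environment so that characters such as
    # "&" or "/" are written literally.
    NEWPASS="$2" awk '
        /^mysql_password=/ { print "mysql_password=" ENVIRON["NEWPASS"]; next }
        { print }
    ' "$cnf" > "$tmp" && cat "$tmp" > "$cnf"
    rc=$?
    rm -f "$tmp"
    return $rc
}

# Example, run as root before restarting the CMON service:
#   for f in /etc/cmon.cnf /etc/cmon.d/*.cnf; do
#       [ -f "$f" ] && set_mysql_password "$f" 'newPass'
#   done
```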

Verify that the cmon process has started correctly by looking at /var/log/cmon.log. You should see something like the following:

2018-01-11 08:33:09 : (INFO) Additional RPC URL for events: 'http://127.0.0.1:9510'

2018-01-11 08:33:09 : (INFO) Configuration loaded.

2018-01-11 08:33:09 : (INFO) cmon 1.5.1.2299

2018-01-11 08:33:09 : (INFO) Server started at tcp://127.0.0.1:9500

2018-01-11 08:33:09 : (INFO) Server started at tls://127.0.0.1:9501

2018-01-11 08:33:09 : (INFO) Found 'cmon' schema version 105010.

2018-01-11 08:33:09 : (INFO) Running cmon schema hot-fixes.

2018-01-11 08:33:09 : (INFO) Schema auto-upgrade succeed (version 105010).

2018-01-11 08:33:09 : (INFO) Checked tables - seems ok

2018-01-11 08:33:09 : (INFO) Community version

2018-01-11 08:33:09 : (INFO) CmonCommandHandler: started, polling for commands.

Running it Offline

ClusterControl is able to manage your database infrastructure in an environment without Internet access. Although some features will not work in that environment (backup to cloud, deployment using public repos, upgrades), the major features are available and work just fine. You can also choose to deploy everything with Internet access initially, and then cut it off once the setup is tested and ready to serve production data.

By keeping ClusterControl and the database cluster isolated from the outside world, you remove one of the most important attack vectors.

Summary

ClusterControl can help secure your database cluster, but it does not secure itself. Ops teams must make sure that the ClusterControl server is hardened as well.