
How to Execute and Manage MySQL Backups for Oracle DBA’s

Migrating from an Oracle database to open source can bring a number of benefits. The lower cost of ownership is tempting and pushes a lot of companies to migrate. At the same time, DevOps, SysOps, or DBAs need to keep tight SLAs to address business needs.

One of the key concerns when you plan a data migration to another database, especially an open source one, is how to avoid data loss. It is not far-fetched that someone accidentally deletes part of the database, forgets to include a WHERE clause in a DELETE query, or runs DROP TABLE by mistake. The question is how to recover from such situations.

Things like that can and will happen. It is inevitable, and the impact can be disastrous. As somebody said, “It’s all fun and games until backup fails.” The most valuable asset cannot be compromised. Period.

The fear of the unknown is natural if you are not familiar with a new technology. In fact, your knowledge of Oracle database solutions, and of the reliability and great features that Oracle Recovery Manager (RMAN) offers, can discourage you or your team from migrating to a new database system. We like to use things we know, so why migrate when our current solution works? Who knows how many projects were put on hold because the team or an individual was not convinced about the new technology?

Logical Backups (exp/imp, expdp/impdp)

According to the MySQL documentation, a logical backup is “a backup that reproduces table structure and data, without copying the actual data files.” This definition applies to both the MySQL and Oracle worlds, and so do the reasons why and when you would use a logical backup.

Logical backups are a good option when we know what data will be modified, so we can back up only the part we need. This simplifies a potential restore in terms of time and complexity. Logical backups are also very useful when we need to move a portion of a small or medium-sized data set to another system (often running a different database version). Oracle uses export utilities like exp and expdp to read database data and export it into a file at the operating system level. You can then import the data back into a database using the import utilities imp or impdp.

The Oracle export utilities give us many options for choosing what data to export. You will not find the same number of features in MySQL, but most needs are covered, and the rest can be handled with additional scripting or external tools (check mydumper).

MySQL comes with a package of tools that offer very basic functionality: mysqldump, mysqlpump (the modern version of mysqldump that has native support for parallelization), and the mysql client, which can be used to extract data to a flat file.

Below you can find several examples of how to use them:

Back up database structure only

mysqldump --no-data -h localhost -u root -ppassword mydatabase > mydatabase_backup.sql

Back up table structure

mysqldump --no-data --single-transaction -h localhost -u root -ppassword mydatabase table1 table2 > mydatabase_backup.sql

Back up specific rows

mysqldump -h localhost --single-transaction -u root -ppassword mydatabase table_name --where="date_created='2019-05-07'" > table_with_specific_rows_dump.sql

Importing the Table

mysql -u username -p -D dbname < tableName.sql

The above command stops loading at the first error.

If you load the file from within an interactive mysql session instead, errors are reported but ignored, and the client proceeds with the remaining statements. To log the session output to a file, start tee before running source:

mysql> tee import_tableName.log

mysql> source tableName.sql

You can find all the flags explained at the links below:

- https://dev.mysql.com/doc/refman/8.0/en/mysqldump.html

- https://dev.mysql.com/doc/refman/8.0/en/mysqlimport.html

- https://dev.mysql.com/doc/refman/8.0/en/mysql.html
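The import commands above are interactive. For an unattended import that should keep going past individual failing statements, the mysql client's --force option does that in batch mode, and redirecting stderr keeps a record of what failed. Below is a minimal sketch, not a complete tool: the function name import_dump, the <file>.errors.log naming and the MYSQL override variable (handy for a dry run) are hypothetical, and credentials are assumed to come from an option file such as ~/.my.cnf instead of -ppassword on the command line.

```shell
#!/bin/sh
# import_dump: load a SQL dump into one database with the mysql client in
# batch mode, continuing past failing statements (--force) and collecting
# the error messages in "<dump file>.errors.log".
# Hypothetical helper: credentials are assumed to come from ~/.my.cnf, and
# MYSQL may be set to another command (for example a stub) for a dry run.
import_dump() {
    dump_file=$1
    db_name=$2
    err_log="$dump_file.errors.log"

    if [ ! -r "$dump_file" ]; then
        echo "import_dump: cannot read $dump_file" >&2
        return 1
    fi

    # --force keeps executing the remaining statements after an error.
    "${MYSQL:-mysql}" --force -D "$db_name" < "$dump_file" 2> "$err_log"
    status=$?

    # Tell the caller if anything ended up in the error log.
    if [ -s "$err_log" ]; then
        echo "import_dump: errors were logged to $err_log" >&2
    fi
    return "$status"
}

# Example: import_dump tableName.sql dbname
```

The function returns the mysql client's own exit status, so it can be chained with other steps in a script; the error log is what you review afterwards.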

If you plan to use a logical backup across different database versions, make sure the character set and collation are set up correctly. The following statements can be used to check the default character set and collation for a given database:

USE mydatabase;

SELECT @@character_set_database, @@collation_database;

Another way to retrieve the collation_database system variable is the SHOW VARIABLES statement:

SHOW VARIABLES LIKE 'collation%';

Because of the limitations of mysqldump, we often have to modify its output, for example to remove some lines. Fortunately, the dump is plain text, so we can view and modify it with standard text tools before restoring. Tools like awk, grep, and sed can become your friends. Below is a simple example of how to remove the first three lines from the dump file:

sed -i '1,3d' file.txt

The possibilities are endless. This is something we will not get with the Oracle export utilities, as their dump files are written in a binary format.
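One common edit of this kind is removing DEFINER clauses, so that views, triggers and stored routines can be created on a server where the original definer account does not exist. A minimal sketch, assuming a sed that supports -E (GNU and BSD sed both do) and the usual DEFINER=`user`@`host` form that mysqldump writes; strip_definers is a hypothetical helper name:

```shell
#!/bin/sh
# strip_definers: remove DEFINER=`user`@`host` clauses from a mysqldump file
# so views, triggers and routines are created under the importing account.
# Writes the result to a new file and leaves the original untouched.
# Hypothetical helper name.
strip_definers() {
    in_file=$1
    out_file=$2
    # Backticks are literal characters inside the single-quoted sed script.
    sed -E 's/DEFINER=`[^`]*`@`[^`]*`//g' "$in_file" > "$out_file"
}

# Example: strip_definers mydatabase_backup.sql mydatabase_nodefiner.sql
```

Keep in mind that objects created without an explicit DEFINER get the importing account as their definer, which changes what SQL SECURITY DEFINER views and routines are allowed to do.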

There are a few things you need to consider when you run a logical MySQL backup. The main limitations are poor support for parallelism and object locking.

Logical backup considerations

When such a backup runs with the default options, mysqldump performs the following steps:

- LOCK TABLES on the tables of the database being dumped (READ LOCAL locks, the default --lock-tables behavior).

- SHOW CREATE TABLE table, for each table.

- SELECT * FROM table, writing the rows to the dump file as INSERT statements.

- UNLOCK TABLES.

By default mysqldump doesn’t include routines and events in its output - you have to explicitly set --routines and --events flags.
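Putting these flags together, a nightly logical backup of a single InnoDB database could look like the following sketch. The function name dump_database, the <db>_<timestamp>.sql.gz naming and the MYSQLDUMP override are assumptions made for illustration; --single-transaction, --routines, --events, --triggers and --databases are standard mysqldump options, and credentials are assumed to live in ~/.my.cnf.

```shell
#!/bin/sh
# dump_database: consistent logical backup of one InnoDB database, including
# stored routines and events (not dumped by default) and triggers, written
# to a timestamped, gzip-compressed file. Prints the path of the new file.
# Hypothetical helper: the file naming, ~/.my.cnf credentials and the
# MYSQLDUMP override are assumptions, not mysqldump features.
dump_database() {
    db_name=$1
    backup_dir=$2
    stamp=$(date +%Y%m%d_%H%M%S)
    sql_file="$backup_dir/${db_name}_${stamp}.sql"

    mkdir -p "$backup_dir" || return 1

    # Dump to a plain file first so a mysqldump failure is detected directly:
    # in POSIX sh, a "mysqldump | gzip" pipeline reports only gzip's status.
    if ! "${MYSQLDUMP:-mysqldump}" --single-transaction --routines --events \
            --triggers --databases "$db_name" > "$sql_file"; then
        rm -f "$sql_file"
        echo "dump_database: mysqldump failed for $db_name" >&2
        return 1
    fi

    # Compress in place (replaces file.sql with file.sql.gz).
    gzip -f "$sql_file" || return 1
    echo "$sql_file.gz"
}

# Example: dump_database mydatabase /u01/backups/logical
```

Dumping to a plain file before compressing needs temporary disk space for the uncompressed dump; in bash, `set -o pipefail` with a direct `mysqldump | gzip` pipeline is an alternative.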

Another important consideration is the engine you use to store your data. Hopefully, most production systems these days use the ACID-compliant InnoDB engine. With the older MyISAM engine, all tables have to be locked to get a consistent dump, which is done with FLUSH TABLES WITH READ LOCK. Unfortunately, this is the only way to guarantee a consistent snapshot of MyISAM tables while the MySQL server is running, and it makes the server read-only until UNLOCK TABLES is executed.
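Before choosing between these approaches, you can ask the server which engines a schema actually uses. The sketch below reads engine names (one per line) and prints the mysqldump option that gives a consistent dump: --single-transaction when everything is InnoDB, --lock-all-tables otherwise (the mysqldump option that holds a global read lock for the whole dump). The helper name consistency_flag is hypothetical, and the information_schema query in the comment is just one way to feed it.

```shell
#!/bin/sh
# consistency_flag: read storage engine names on stdin (one per line) and
# print the mysqldump option that gives a consistent dump:
#   --single-transaction  if every table is InnoDB (no global lock needed)
#   --lock-all-tables     otherwise (global read lock for the whole dump)
# Hypothetical helper; empty lines are ignored.
consistency_flag() {
    # Count non-empty lines that are not exactly "InnoDB" (case-insensitive).
    others=$(grep -v -x -i 'InnoDB' | grep -c -v '^$')
    if [ "$others" -eq 0 ]; then
        echo "--single-transaction"
    else
        echo "--lock-all-tables"
    fi
}

# Example (credentials assumed in ~/.my.cnf):
#   flag=$(mysql -N -B -e "SELECT DISTINCT ENGINE FROM information_schema.TABLES
#          WHERE TABLE_SCHEMA = 'mydatabase' AND TABLE_TYPE = 'BASE TABLE'" | consistency_flag)
#   mysqldump "$flag" --routines --events mydatabase > mydatabase_backup.sql
```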

For tables using the InnoDB storage engine, it is recommended to use the --single-transaction option. mysqldump then reads all tables from one consistent snapshot taken when the dump starts, while the server continues to accept incoming changes.

The --single-transaction option of mysqldump does not run FLUSH TABLES WITH READ LOCK. Instead, mysqldump sets up a REPEATABLE READ transaction for all tables being dumped and performs these steps:

- START TRANSACTION WITH CONSISTENT SNAPSHOT.

- For each database schema and table:

  - SHOW CREATE TABLE table.

  - SELECT * FROM table, writing the rows to the dump file as INSERT statements.

- COMMIT.

A mysqldump backup is much slower than the Oracle exp and expdp tools. mysqldump is single-threaded, and this is its most significant drawback: performance is fine for small databases but quickly becomes unacceptable once the data set grows to tens of gigabytes.
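Because a long-running mysqldump can be interrupted (a lost connection, a full disk, a killed session), it is worth checking that a dump actually finished before relying on it. When comments are enabled, which is the default, mysqldump ends its output with a "-- Dump completed" line. The sketch below, using the hypothetical helper name dump_is_complete, checks for that trailer in plain or gzip-compressed dumps. It is a cheap sanity check, not a substitute for a test restore, and it does not apply to dumps taken with --skip-comments.

```shell
#!/bin/sh
# dump_is_complete: succeed only if a mysqldump file (plain .sql or .gz)
# ends with the "-- Dump completed" trailer mysqldump writes when comments
# are enabled (the default). Hypothetical helper, a sanity check only.
dump_is_complete() {
    dump_file=$1
    [ -r "$dump_file" ] || return 1
    case "$dump_file" in
        *.gz) last_line=$(gzip -dc "$dump_file" | tail -n 1) ;;
        *)    last_line=$(tail -n 1 "$dump_file") ;;
    esac
    case "$last_line" in
        "-- Dump completed"*) return 0 ;;
        *)                    return 1 ;;
    esac
}

# Example: dump_is_complete mydatabase_backup.sql && echo "dump looks complete"
```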

Physical backups (RMAN)

Fortunately, most of the limitations of logical backups can be solved with the Percona XtraBackup tool. Percona XtraBackup is the most popular open-source MySQL/MariaDB hot backup software, performing non-blocking backups for InnoDB and XtraDB databases. It falls into the physical backup category, which consists of exact copies of the MySQL data directory and the files underneath it.

It is in the same category of tools as Oracle RMAN. RMAN comes as part of the database software, while XtraBackup needs to be downloaded separately. XtraBackup is available as rpm and deb packages and supports only Linux platforms. The installation is very simple:

$ wget https://www.percona.com/downloads/XtraBackup/Percona-XtraBackup-8.0.4/binary/redhat/7/x86_64/percona-XtraBackup-80-8.0.4-1.el7.x86_64.rpm

$ yum localinstall percona-XtraBackup-80-8.0.4-1.el7.x86_64.rpm

XtraBackup does not lock InnoDB tables during the backup process. For large databases (100+ GB), it provides much better restoration time compared to mysqldump. The restoration process involves preparing MySQL data from the backup files before replacing or switching it with the current data directory on the target node.

Percona XtraBackup works by remembering the log sequence number (LSN) when it starts and then copying away the data files to another location. Copying data takes some time, and if the files are changing, they reflect the state of the database at different points in time. At the same time, XtraBackup runs a background process that keeps an eye on the transaction log (aka redo log) files, and copies changes from it. This has to be done continually because the transaction logs are written in a round-robin fashion, and can be reused after a while. XtraBackup needs the transaction log records for every change to the data files since it began execution.
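Once a backup finishes, XtraBackup writes the LSN range it covers to a small text file, xtrabackup_checkpoints, in the backup directory (fields such as backup_type, from_lsn, to_lsn and last_lsn). Reading it is a quick way to see what a backup contains, for example whether it has already been prepared, or which LSN an incremental backup would start from. A minimal sketch, assuming the "key = value" layout of that file; checkpoint_value is a hypothetical helper name:

```shell
#!/bin/sh
# checkpoint_value: print one field (e.g. backup_type, from_lsn, to_lsn)
# from the xtrabackup_checkpoints file in an XtraBackup backup directory.
# Hypothetical helper; assumes the file's "key = value" line format.
checkpoint_value() {
    file="$1/xtrabackup_checkpoints"
    key=$2
    if [ ! -r "$file" ]; then
        echo "checkpoint_value: cannot read $file" >&2
        return 1
    fi
    # Split each line on " = "; exit non-zero when the key is absent.
    awk -F ' = ' -v k="$key" '$1 == k { print $2; found = 1 } END { exit !found }' "$file"
}

# Example: checkpoint_value /u01/backups/ to_lsn
# (xtrabackup --incremental-basedir=DIR reads this file itself; the value can
#  also be passed explicitly with --incremental-lsn.)
```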

Once XtraBackup is installed, you can perform your first physical backup:

xtrabackup --user=root --password=PASSWORD --backup --target-dir=/u01/backups/

Another useful technique is streaming the backup to another server. This can be done with the xbstream format, as in the example below:

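Start a listener on the external server on a port of your choice (1984 in this example):

nc -l 1984 | pigz -cd - | pv | xbstream -x -C /u01/backups

Run the backup and stream it to the external host (innobackupex is no longer shipped with XtraBackup 8.0, so xtrabackup is used throughout):

xtrabackup --user=root --password=PASSWORD --backup --stream=xbstream --target-dir=/var/tmp | pigz | pv | nc external_host.com 1984

As you may notice, the restore process is divided into two major steps, similar to Oracle: restore (copy back) and recovery (prepare, called apply log in innobackupex). With XtraBackup, however, the backup is prepared first, which applies the redo log copied during the backup so the data files become consistent, and only then copied back into an empty data directory (taken from the MySQL configuration file) while MySQL is stopped:

xtrabackup --prepare --use-memory=[value in MB or GB] --target-dir=/u01/backups/

xtrabackup --copy-back --target-dir=/u01/backups/

The difference is that we can only recover to the point when the backup was taken. To apply changes made after the backup, we need to do it manually.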
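XtraBackup's documented order is to prepare the backup first (apply the copied redo log so the data files are consistent) and only then copy it back into an empty data directory while MySQL is stopped. The sketch below wraps those two steps. The function name restore_backup, the 1G default for --use-memory and the XTRABACKUP override (useful for a dry run) are assumptions; --prepare, --use-memory, --copy-back, --target-dir and --datadir are xtrabackup options.

```shell
#!/bin/sh
# restore_backup: prepare a full XtraBackup backup, then copy it into an
# empty data directory. Assumes MySQL is stopped on the target host.
# Hypothetical helper: the 1G --use-memory default and the XTRABACKUP
# override (point it at a stub for a dry run) are assumptions.
restore_backup() {
    backup_dir=$1
    datadir=$2
    use_memory=${3:-1G}

    # xtrabackup --copy-back refuses a non-empty data directory as well.
    if [ -d "$datadir" ] && [ -n "$(ls -A "$datadir")" ]; then
        echo "restore_backup: $datadir is not empty; move it aside first" >&2
        return 1
    fi

    # Step 1 (recovery): apply the redo log captured during the backup.
    "${XTRABACKUP:-xtrabackup}" --prepare --use-memory="$use_memory" \
        --target-dir="$backup_dir" || return 1

    # Step 2 (restore): copy the prepared files into the data directory.
    "${XTRABACKUP:-xtrabackup}" --copy-back --target-dir="$backup_dir" \
        --datadir="$datadir" || return 1
}

# Example (MySQL stopped): restore_backup /u01/backups/ /var/lib/mysql 2G
```

After the copy, the files usually need to be owned by the MySQL user (for example `chown -R mysql:mysql /var/lib/mysql`) before the server is started. If incremental backups will be applied on top of the full backup, the base has to be prepared with --apply-log-only first, so this two-step sketch only covers the full-backup case.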

Point in Time Restore (RMAN recovery)

In Oracle, RMAN does all the steps when we perform recovery of the database. Recovery can be done to an SCN, to a point in time, or based on the backup data set.

RMAN> run
{
allocate channel dev1 type disk;
set until time "to_date('2019-05-07:00:00:00', 'yyyy-mm-dd:hh24:mi:ss')";
restore database;
recover database;
}

In MySQL, we need another tool, mysqlbinlog, to extract data from the binary logs (similar to Oracle’s archive logs). mysqlbinlog reads the binary logs and converts them to SQL statements that can be replayed.

The basic procedure is:

- Restore the full backup.

- Restore incremental backups, if any.

- Identify the start and end points for recovery (for example, the end of the backup and the binary log position just before the unfortunate DROP TABLE).

- Convert the necessary binlogs to SQL and apply them in the proper sequence. Process all the binary log files with a single mysqlbinlog command, so that statements that depend on the same session (such as temporary tables) are applied together:

mysqlbinlog binlog.000001 binlog.000002 | mysql -u root -p
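Two parts of this procedure are easy to script: reading where the replay should start, and finding where it should stop. XtraBackup records the binary log file and position of the backup in xtrabackup_binlog_info, and mysqlbinlog prints a "# at N" line before every event. The sketch below, with the hypothetical helper names binlog_start and stop_position_before, prints the recorded start point and the offset of the first event whose text matches a pattern such as DROP TABLE; passing that offset to --stop-position keeps the event from being replayed. It assumes the statement text is visible in the mysqlbinlog output (true for DDL such as DROP TABLE). In MySQL 5.7 and later a GTID event (anonymous when GTIDs are off) precedes each transaction, so you may prefer to stop at that event's position instead.

```shell
#!/bin/sh
# binlog_start: print "<binlog file> <position>" recorded by XtraBackup in
# <backup dir>/xtrabackup_binlog_info (a GTID set, if present, is ignored).
# Hypothetical helper.
binlog_start() {
    awk 'NR == 1 { print $1, $2 }' "$1/xtrabackup_binlog_info"
}

# stop_position_before: read mysqlbinlog text output on stdin and print the
# "# at N" offset of the first event whose text matches the given pattern
# (case-insensitive awk regex). Fails if nothing matches. Hypothetical helper.
stop_position_before() {
    awk -v pat="$1" '
        /^# at [0-9]+$/            { pos = $3 }
        toupper($0) ~ toupper(pat) { print pos; found = 1; exit }
        END                        { exit !found }'
}

# Example, assuming the backup recorded binlog.000001 and the DROP TABLE is
# in binlog.000002 (--start-position applies to the first file listed,
# --stop-position to the last):
#   set -- $(binlog_start /u01/backups/)
#   stop=$(mysqlbinlog binlog.000002 | stop_position_before 'DROP TABLE')
#   mysqlbinlog --start-position="$2" --stop-position="$stop" \
#       binlog.000001 binlog.000002 | mysql -u root -p
```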

Encrypt Backups (Oracle Wallet)

Percona XtraBackup can encrypt and decrypt local or streaming (xbstream) backups, adding another layer of protection. The encryption key can be specified with either the --encrypt-key option or the --encrypt-key-file option. An encryption key can be generated with a command like:

$ openssl rand -base64 24

bWuYY6FxIPp3Vg5EDWAxoXlmEFqxUqz1

This value can then be used as the encryption key. Here is an example of an xtrabackup command using --encrypt-key:

$ xtrabackup --backup --encrypt=AES256 --encrypt-key="bWuYY6FxIPp3Vg5EDWAxoXlmEFqxUqz1" --target-dir=/storage/backups/encrypted

To decrypt, use the --decrypt option with the same --encrypt-key:

$ xtrabackup --decrypt=AES256 --encrypt-key="bWuYY6FxIPp3Vg5EDWAxoXlmEFqxUqz1" --target-dir=/storage/backups/encrypted

Backup policies

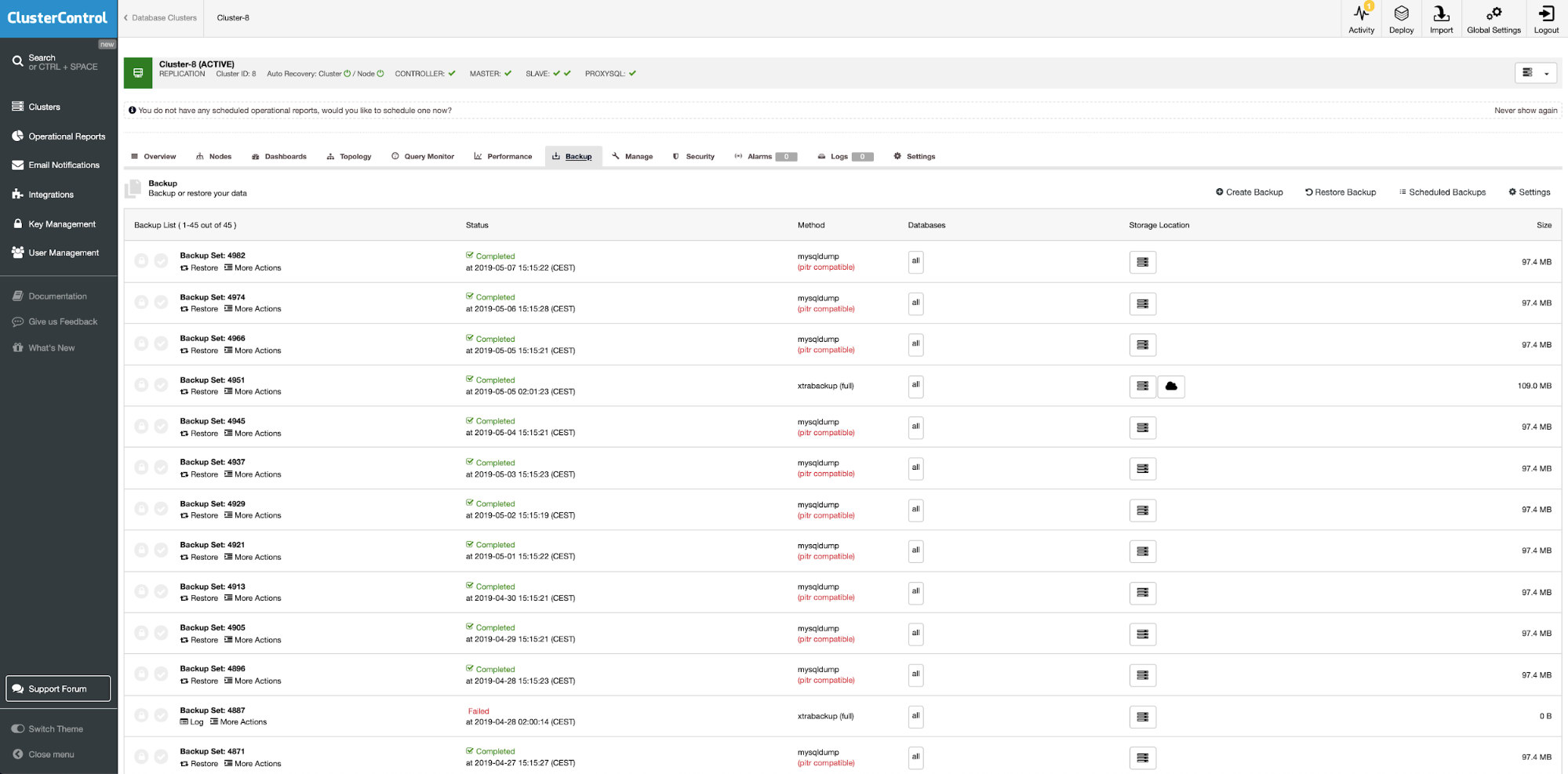

Neither MySQL/MariaDB nor Percona’s tool has built-in backup policy functionality. If you would like to manage your MySQL logical or physical backups, you can use ClusterControl for that.

ClusterControl is the all-inclusive open source database management system for users with mixed environments. It provides advanced backup management functionality for MySQL or MariaDB.

With ClusterControl you can:

- Create backup policies

- Monitor backup status, executions, and servers without backups

- Execute backups and restores (including point-in-time recovery)

- Control backup retention

- Save backups in cloud storage

- Validate backups (full test restore on a standalone server)

- Encrypt backups

- Compress backups

- And many others
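If you prefer to script retention yourself, the core of it is deleting backups older than a chosen number of days. A minimal sketch, assuming compressed logical dumps named *.sql.gz in a single directory; prune_backups is a hypothetical helper, and find's -mtime truncates file ages to whole days:

```shell
#!/bin/sh
# prune_backups: delete *.sql.gz files in a backup directory whose age is
# more than N whole days (find -mtime +N), printing each file removed.
# Hypothetical retention helper; adjust the name pattern to your layout.
prune_backups() {
    backup_dir=$1
    keep_days=$2
    # Refuse anything that is not a plain non-negative integer.
    case "$keep_days" in
        ''|*[!0-9]*) echo "prune_backups: days must be a number" >&2; return 1 ;;
    esac
    find "$backup_dir" -type f -name '*.sql.gz' -mtime +"$keep_days" \
        -print -exec rm -f {} +
}

# Example (e.g. from cron): prune_backups /u01/backups/logical 14
```

Run it only after the newest backup has been verified. XtraBackup backups are directories rather than single files, so they would need a different pattern.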

Keep backups in the cloud

Organizations have historically deployed tape backup solutions as a means to protect data from failures. However, the emergence of public cloud computing has enabled new models with a lower TCO than what has traditionally been available. It makes no business sense to separate the cost of a DR solution from its design, so organizations have to implement the right level of protection at the lowest possible cost.

The cloud has changed the data backup industry. Because of its affordable price point, even smaller businesses can have an offsite solution that backs up all of their data (and yes, make sure it is encrypted). Neither Oracle nor MySQL offers a built-in cloud storage solution; instead, you can use the tools provided by cloud vendors. An example here could be Amazon S3:

aws s3 cp severalnines.sql s3://severalnine-sbucket/mysql_backups/

Conclusion

There are a number of ways to back up your database, but it is important to review business needs before deciding on a backup strategy. As you can see, there are many similarities between MySQL and Oracle backups, which hopefully will help you meet your SLAs.

Always make sure that you practice these commands, not only when you are new to the technology, so that you know what to do whenever the DBMS becomes unusable.

If you would like to learn more about MySQL please check our whitepaper The DevOps Guide to Database Backups for MySQL and MariaDB.