blog

Full Ops Automation of your Hybrid Cloud Database

In a previous blog, we wrote about about the architecture of hybrid cloud databases. Having database instances in different datacenters sounds all good, but how do you manage all that? How do you know if the instances are out of sync and some have stale data? How do you recover from failures, and ensure the data is consistent across all database instances? How do you determine whether performance problems are due to the network, unstable cloud virtual machines or poorly written database queries?

In this blog, we will discuss the operational aspects you should consider when running a hybrid cloud database.

Operational Management can be complex

Operating a distributed database that is spread across multiple datacenters and different multiple comes with its own set of challenges. Public cloud providers have different platform architectures, APIs and tools, and initial implementation is usually manual and labour intensive. When running databases across private and public cloud, being able to keep things simple is key. Deployment should be as easy as when deployment happens in a single data center.

A hybrid database ops platform is required to:

– Get a single view of all database instances, regardless of where they are running

– Manage data replication effectively

– Understand performance issues

– Detect failures and manage failover/failback procedures

Monitoring & Observability of Hybrid Cloud Databases

When deploying databases across multiple datacenters, being able to monitor everything through a single pane of glass can greatly improve productivity and quality of operations. A single display provides a consistent view for all monitoring, regardless of where the database instances are located.

Monitoring and observability does not only refer to a ‘service is up or down’ type of functionality, it also entails database metrics (including host statistics), workload performance and logs. Databases are complex systems, and e.g. MySQL itself has over 400 metrics. Anomalies can occur, and when they do, you would need enough information to see what is going on. Metrics can help understand how the database systems behave in different operating cloud environments. They are an invaluable source of information when diagnosing problems.

Trending is also another important feature, to understand how the metrics are evolving over time, so you can predict for example that you will be running out of some resource based on the historical trend. Another example is a query that suddenly takes 10x more time to execute, so it is important to understand baseline performance and identify deviations.

ClusterControl provides complete monitoring for hybrid database setups. It provides a systems view of the distributed database setup, with the ability to drill down to individual nodes. Monitoring can be performed with or without agents (using SSH). Metrics are collected and presented in graphs and dashboards. Query performance data is also captured, presented and analyzed. One example is outlier detection, where based on historical trends, we could derive that a query is suddenly much slower.

Managing Security in Hybrid Cloud Databases

Having database deployments in a hybrid cloud environment exposes the database to many kinds of risks, for instance unauthorized access to the database. A security breach can be due to misconfiguration in firewall settings, database roles and privileges and so on.

To avoid having such scenarios, hybrid database management systems require higher security features. Some security measure includes:

– Overview of users and access: Having an accurate view of all users and their permissions

– Database audits : Set the policy on user access and network(IP) access to database.

– Database roles : Set user roles with read and write permissions where appropriate. Super user privileges should be only for root users.

– Firewall setting : Determine and set database ports that are allowed in the firewall.

– Database encryption : encryption of data at rest is also important, there are several ways to achieve this: encryption of the data in the application layer, inside the database itself, or at the disk level.

– SSL / TLS – securing the data in transit is also important, so the data can not be intercepted during the transmission.

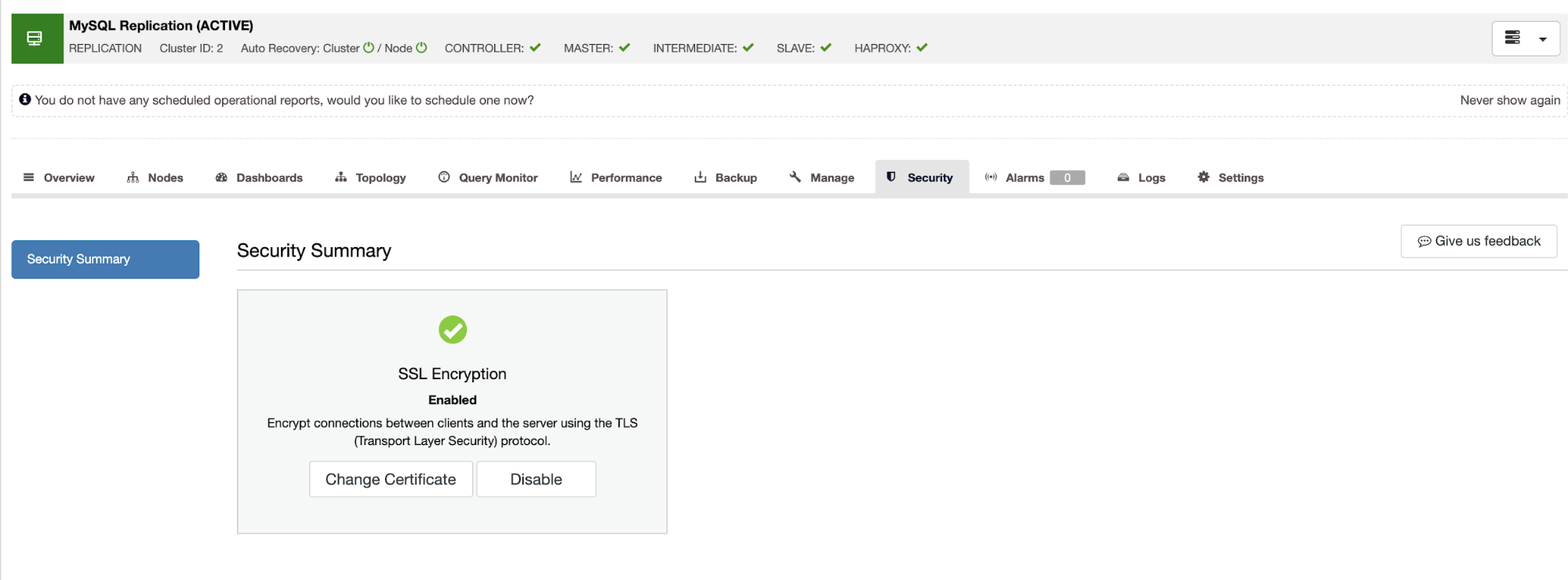

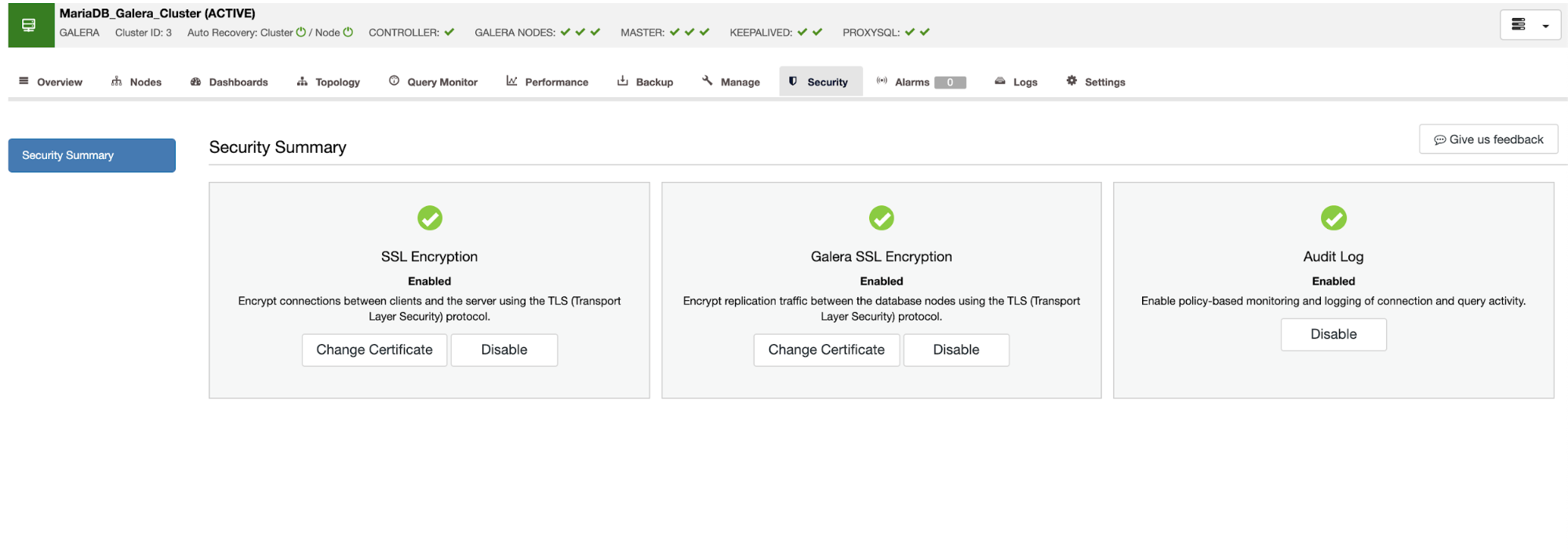

ClusterControl support a range of features, including access management for database users, audit logs for database access and encryption of data in transit.

SSL Encryption between the clients and database server in Master/Slave replication as shown below:

And for Galera Cluster, SSL/TLS encryption between the client and database server and on each Galera node itself. There is also option for enabling Audit Log in Master/Master replication using Galera.

Scalability of Hybrid Cloud Databases

Distributing a database across multiple clouds does not automatically make it scalable. In fact, you might get less scalability than expected, since databases need to replicate with one another to stay in sync and replication is a serious overhead. Latency between clouds is another issue, and very often, that will dictate whether you can afford to synchronously replicate your data, or you need to use asynchronous methods. If the latency is too high, then doing synchronous replication will heavily impact write performance. If latency is unpredictable, then you might not be able to use something like Galera Cluster for MySQL or MariaDB, which depend on the reliability of the network. Therefore, the clouds you choose and the latency characteristics in your hybrid cloud will dictate how you can scale your databases. Disaster recovery considerations may also affect how you distribute your databases across different clouds.

Partitioning your workload and splitting reads from writes and sending them to different nodes may also help scale your distributed database setup. Read write splitting can be done with e.g. ProxySQL for MySQL or MariaDB, or using a combination of pgbouncer (for connection pooling) and pgpool-II (for query routing) for PostgreSQL setups.