
Database Backup Considerations for Hybrid Cloud Databases

Hybrid cloud databases are gaining adoption as a strategy for architectural resilience and agility: they leverage cloud resources without necessarily locking you into one vendor's cloud infrastructure. But how do you handle database backups in a hybrid setup?

In this blog, we will look at some considerations for your backup strategy when it comes to redundancy, durability, data security, and data retention.

Considerations for Backing Up Hybrid Cloud Databases

How fast and how easy is the recovery process?

As with any other backup implementation, the most important consideration is the recovery process. Here are two key recovery objectives:

1. To what point in time will you need to recover your database?

The Recovery Point Objective (RPO) defines the point in time to which you must be able to recover your database. In other words, how much data can you afford to lose? For example, if a user accidentally dropped a table at 11:55 am, you might want to recover to a point in time just before the DROP statement ran. In some circumstances, you may also want to selectively exclude some rogue transactions during the recovery process.
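To make the RPO concrete, here is a minimal Python sketch. It assumes a hypothetical schedule of nightly full backups with no transaction-log archiving, and the function names are illustrative rather than part of any tool. It picks the newest backup taken before the accidental drop and measures how much work would be lost if that backup were all you had.

```python
from datetime import datetime, timedelta

def pick_restore_base(backup_times, target):
    """Return the newest backup taken at or before `target`, or None."""
    candidates = [t for t in backup_times if t <= target]
    return max(candidates) if candidates else None

def worst_case_loss(recovery_points):
    """Largest gap between consecutive recovery points.

    If the database is lost just before the next recovery point is taken,
    everything written since the previous one is gone, so this gap is the
    worst-case data loss the schedule allows.
    """
    ordered = sorted(recovery_points)
    if len(ordered) < 2:
        raise ValueError("need at least two recovery points")
    return max(later - earlier for earlier, later in zip(ordered, ordered[1:]))

def meets_rpo(recovery_points, rpo):
    return worst_case_loss(recovery_points) <= rpo

# Hypothetical schedule: one full backup at midnight each day, no log archiving.
nightly = [datetime(2024, 1, d) for d in (1, 2, 3)]
dropped_at = datetime(2024, 1, 3, 11, 55)

base = pick_restore_base(nightly, dropped_at)
print(base)                                    # 2024-01-03 00:00:00
print(dropped_at - base)                       # 11:55:00 of changes lost without logs
print(meets_rpo(nightly, timedelta(hours=1)))  # False
```

Archiving transaction logs (MySQL binary logs, PostgreSQL WAL) adds recovery points between full backups, which is what makes recovering to just before the DROP possible.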

2. How much time will you have to recover your database?

The Recovery Time Objective (RTO) defines how long you can take to recover the database, i.e. how long your application can afford to be offline while a recovery is in progress. Consider this carefully. Once you have determined the RPO and RTO for the hybrid cloud databases in your environment, implement your backup solution accordingly.
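A back-of-the-envelope RTO check fits in a few lines. This Python sketch assumes restore and download throughput are constant and known; the sizes and rates below are hypothetical, not benchmarks, and the function names are illustrative. It shows why pulling a backup from a remote site can dominate recovery time.

```python
GB = 10**9
MB = 10**6

def estimate_restore_seconds(backup_bytes, restore_bytes_per_s,
                             download_bytes_per_s=None):
    """Rough restore time: optional download from remote storage + restore.

    Deliberately simple: ignores decompression/decryption overhead,
    log replay and post-restore checks, so treat it as a lower bound.
    """
    seconds = backup_bytes / restore_bytes_per_s
    if download_bytes_per_s is not None:
        seconds += backup_bytes / download_bytes_per_s
    return seconds

def meets_rto(estimated_seconds, rto_seconds):
    return estimated_seconds <= rto_seconds

# Hypothetical 500 GB backup, restored at 250 MB/s.
local = estimate_restore_seconds(500 * GB, 250 * MB)
remote = estimate_restore_seconds(500 * GB, 250 * MB, download_bytes_per_s=125 * MB)
print(local, remote)             # 2000.0 6000.0
print(meets_rto(remote, 3600))   # False: the download alone exceeds a 1-hour RTO
```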

What will be the impact on your application during the backup?

Backup window refers to the time it takes for backups to complete. During the backup window, your applications can be degraded (in performance or functionality or both) or even offline.

The size of your database and the rate of transaction activity determine how long your backup window will be. Based on these factors and on the requirements of the business, you will need to schedule your backups for a period when their impact on your applications is acceptable.
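As a rough planning aid, the Python sketch below checks whether an estimated backup duration fits a daily window. The window times, database sizes, backup throughput and the `safety_factor` margin for write activity are all hypothetical assumptions; measure your own backup throughput before relying on numbers like these.

```python
from datetime import date, datetime, time, timedelta

def window_length(start, end):
    """Length of a daily window; handles windows that cross midnight."""
    s = datetime.combine(date.min, start)
    e = datetime.combine(date.min, end)
    if e <= s:
        e += timedelta(days=1)
    return e - s

def fits_window(db_bytes, backup_bytes_per_s, start, end, safety_factor=1.2):
    """Does the estimated backup duration fit inside the window?

    `safety_factor` is an assumed margin for a busy database, where
    concurrent write load tends to slow the backup down.
    """
    needed = timedelta(seconds=db_bytes / backup_bytes_per_s * safety_factor)
    return needed <= window_length(start, end)

TB = 10**12
MB = 10**6
start, end = time(23, 0), time(3, 0)   # hypothetical low-traffic window
print(window_length(start, end))                  # 4:00:00
print(fits_window(1 * TB, 100 * MB, start, end))  # True
print(fits_window(2 * TB, 100 * MB, start, end))  # False
```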

How will you manage your backup process and backed up data?

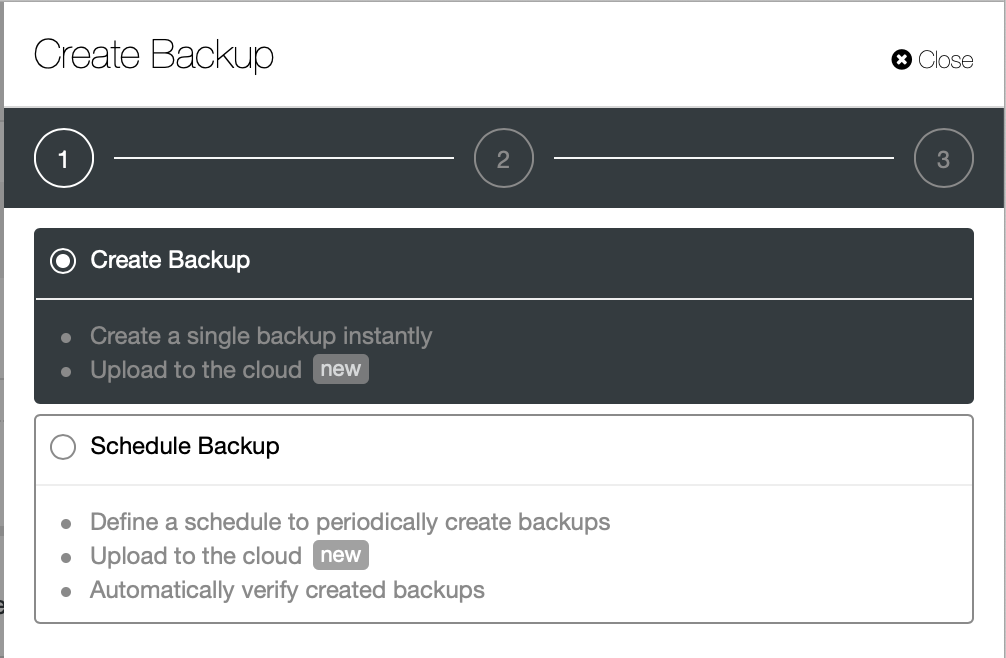

Backups should always be automated. They must be taken consistently and regularly, without relying on human intervention. The backup catalog should be kept up to date automatically so that it tracks every copy of each backup image. Before each run, a pre-backup step should check that enough storage will be available for the upcoming backup. At the other end, once a backup has finished successfully, a post-backup step can remove old backup files according to the retention policy. In some cases, you might also want to automatically verify the newly taken backup before removing an old backup file.
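The pre-backup and post-backup steps described above can be sketched in Python with the standard library alone. This is a minimal sketch under stated assumptions: the catalog is a plain dict mapping file name to SHA-256 checksum (in practice you would persist it), backup files follow a hypothetical `backup-<timestamp>.bak` naming scheme, and retention is simplified to "keep the newest N". A checksum only proves the file has not changed since it was recorded; a test restore is the stronger form of verification.

```python
import hashlib
import shutil
from pathlib import Path

def has_enough_space(target_dir, expected_bytes, headroom=1.2):
    """Pre-backup check: room for the next backup plus an assumed 20% margin."""
    return shutil.disk_usage(target_dir).free >= expected_bytes * headroom

def sha256_of(path, chunk_size=1 << 20):
    """Hash the file in chunks so large backups are not loaded into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def record_backup(catalog, path):
    """Add a freshly written backup and its checksum to the catalog."""
    catalog[Path(path).name] = sha256_of(path)

def verify_backup(catalog, path):
    """Re-read the file and compare it with the checksum in the catalog."""
    return catalog.get(Path(path).name) == sha256_of(path)

def post_backup(backup_dir, new_backup, catalog, keep_last):
    """Prune old backups, but only after the new one verifies.

    Assumes file names embed a sortable timestamp, e.g.
    backup-20240103T0100.bak, so name order is age order.
    """
    if not verify_backup(catalog, new_backup):
        raise RuntimeError(f"{new_backup} failed verification; nothing pruned")
    newest_first = sorted(Path(backup_dir).glob("backup-*.bak"), reverse=True)
    removed = []
    for old in newest_first[keep_last:]:
        old.unlink()
        catalog.pop(old.name, None)  # keep the catalog in sync with disk
        removed.append(old.name)
    return removed
```

Pruning only after verification means a corrupted new backup never causes the last good copy to be deleted.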

Your backup implementation should integrate such pre-backup and post-backup checks seamlessly.

Security of your cloud database is a key consideration when implementing a backup solution. When backing up your cloud database, you need to decide whether your data must be encrypted. Like compression, encryption has a computational cost during both backup and recovery. But if you are archiving your backup files with a cloud storage vendor, encryption is not optional.

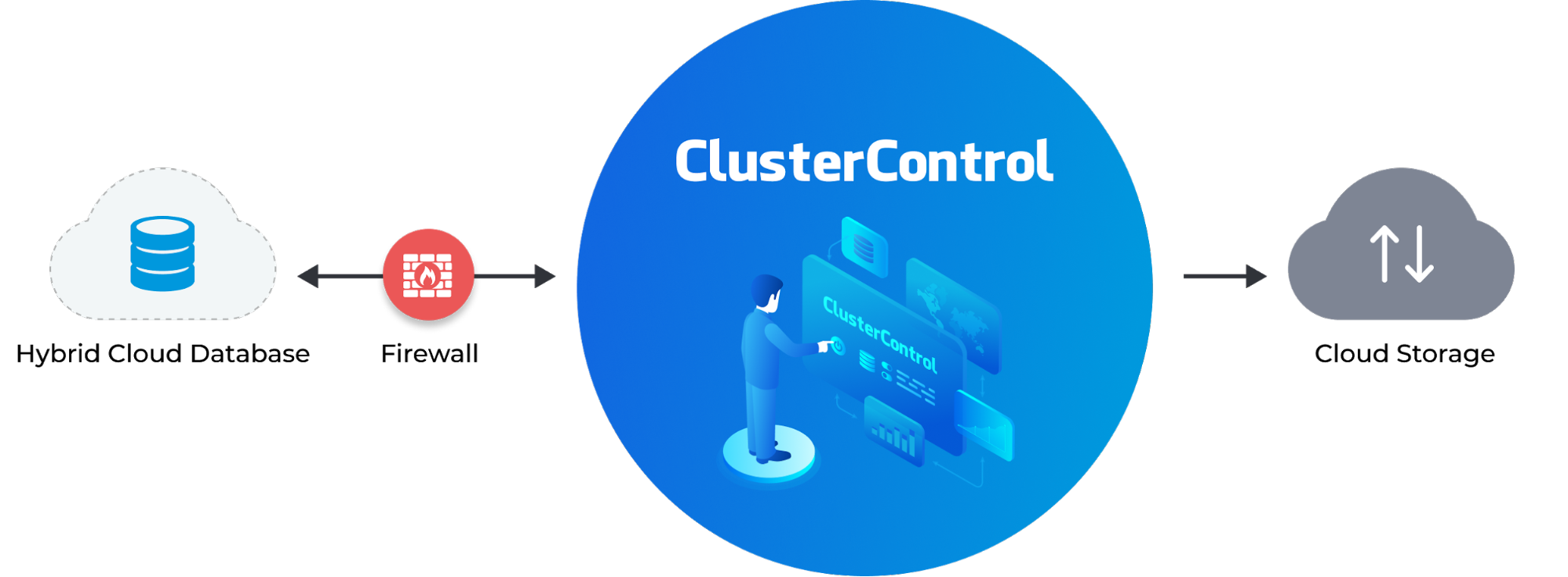

Reducing Complexity With ClusterControl

ClusterControl provides a complete backup management solution: it schedules different types of backups and provides reporting, archiving to public cloud locations, encryption, compression, retention management, and automatic restore verification.

Your backup strategy should be easy to set up and maintain over time. ClusterControl lets you do all of that through a simple wizard.

Monitoring, reporting and compliance

Your backup implementation should provide timely notifications for critical events such as backup failures, for example via email, Slack, or PagerDuty. There should also be a mechanism to enforce your retention policy, i.e. how long you want to keep your backed-up data. Your backup policy should account for the possibility that different types of data have different retention requirements, depending on compliance and business needs. Expired backups should be purged automatically.
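Per-class retention and failure notifications can be modelled very simply. In this Python sketch the data classes and retention periods are hypothetical placeholders for whatever your compliance and business rules require, and `send` is a stand-in for a real email, Slack or PagerDuty integration (no actual API is called here).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical retention periods per data class; real values come from
# your own compliance and business requirements.
RETENTION = {
    "logs": timedelta(days=7),
    "transactional": timedelta(days=30),
    "financial": timedelta(days=7 * 365),
}

@dataclass
class Backup:
    name: str
    data_class: str
    taken_at: datetime

def expired(backups, now, retention=RETENTION):
    """Backups older than their class's retention period.

    Backups with an unknown data class are never reported as expired:
    keeping a file by mistake is cheaper than purging one by mistake.
    """
    result = []
    for b in backups:
        keep_for = retention.get(b.data_class)
        if keep_for is not None and now - b.taken_at > keep_for:
            result.append(b)
    return result

def report_failures(results, send):
    """Call `send` once per failed backup.

    `results` maps backup name -> True (succeeded) or False (failed);
    `send` is whatever notification channel you plug in.
    """
    failed = [name for name, ok in results.items() if not ok]
    for name in failed:
        send(f"Backup FAILED: {name}")
    return failed
```

For a quick local test, `report_failures(results, print)` simply prints the alerts instead of sending them.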

Conclusion

Having a good database backup plan and policy is important in any organisation. The plan or policy should include enough measures to protect the backups themselves from various types of malicious attacks.

It is always advisable not to keep your backups only in the same data center as your database, so as to reduce the likelihood of the database and its backups being destroyed at the same time. For faster recovery, local backups can be handy, as no time is spent downloading files from another site. But backups also need to be stored offsite, just in case. Also, avoid running backups on the primary (or master) database server: the backup adds load to the database being backed up, which can lower performance or, at worst, cause instability. The whole backup process can be configured via a GUI in ClusterControl, so do give it a try and let us know what you think.
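The two placement rules above (back up from a replica rather than the primary, and keep at least one copy outside the database's data center) can be expressed as small checks. This Python sketch is illustrative only: the `Node` fields, host names, site names and the 60-second lag threshold are hypothetical, and in practice the health and lag values would come from your monitoring.

```python
from dataclasses import dataclass

@dataclass
class Node:
    host: str
    role: str           # "primary" or "replica"
    healthy: bool
    lag_seconds: float  # replication lag as reported by monitoring

def pick_backup_source(nodes, max_lag_seconds=60.0):
    """Prefer the healthy replica with the least lag; never the primary.

    Returns None when no replica qualifies, so the caller can alert
    instead of silently falling back to the primary.
    """
    candidates = [
        n for n in nodes
        if n.role == "replica" and n.healthy and n.lag_seconds <= max_lag_seconds
    ]
    return min(candidates, key=lambda n: n.lag_seconds, default=None)

def has_offsite_copy(copy_sites, db_site):
    """True if at least one backup copy lives outside the database's site."""
    return any(site != db_site for site in copy_sites)

nodes = [
    Node("db1", "primary", True, 0.0),
    Node("db2", "replica", True, 4.0),
    Node("db3", "replica", True, 1.5),
    Node("db4", "replica", False, 0.5),
]
print(pick_backup_source(nodes).host)                   # db3
print(has_offsite_copy(["dc-east", "dc-west"], "dc-east"))  # True
```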