
Database Automation: Integrating the ClusterControl CLI With Your ChatBot

Last year we launched a ClusterControl integration with chatrooms. We built a Hubot-based chatbot, called CCBot, to make your database tier accessible from your company-wide chat. The chatbot integrates our CMON backend API within the Hubot framework, which made it possible to interact with ClusterControl from the chat. This can be very beneficial for companies that rely on close collaboration between development and operations, as in DevOps, because all users in the same chatroom use the same interface to the company’s database infrastructure.

With the availability of the ClusterControl command line client (CLI), we also have a new and improved CCBot that is fully integrated with the CLI. That means that any functionality present in the CLI is also available in your CCBot-enabled chat!

Installing CCBot

Installing CCBot is straightforward and is described in our CCBot introductory blog post. CCBot depends on the Hubot framework, which in turn depends on Node.js. Once Node.js has been installed and CCBot has been checked out from the public CCBot GitHub repository, npm resolves all other dependencies automatically. The newly added CLI support is available immediately as well.

Installing the ClusterControl CLI

The ClusterControl CLI is installed automatically on new installations of ClusterControl 1.4.1 and higher. You can also install it manually on remote hosts (other than the ClusterControl node). The Severalnines Command Line Interface GitHub repository has installation instructions for your specific operating system. Note that the CLI is compatible with version 1.4.1 and up.

After installing the command line interface, a new ClusterControl user has to be created for CCBot from the operating system account that runs CCBot. Which account that is depends on how you have set up your Hubot environment, but it is typically root. CCBot then uses this user to interact with ClusterControl via the CLI. An existing user can be reused, but it is easier to revoke access from a separate CCBot user.

To create the user, use the following command:

s9s user --create --cmon-user=ccbot --generate-key --controller="https://localhost:9501"

After creating the user, we need to modify the config file .s9s/s9s.conf in the home directory of the user that runs Hubot. Set controller_host_name to the host that runs the ClusterControl backend, cmon. If you have installed CCBot on the same host as ClusterControl this will be localhost; otherwise you need to specify the hostname of the ClusterControl host. Also set controller_port to 9501 and enable rpc_tls.

[global]

cmon_user=ccbot

controller_host_name = localhost

controller_port = 9501

rpc_tls = true

With this command you can verify that the command line client works under the hubot user:

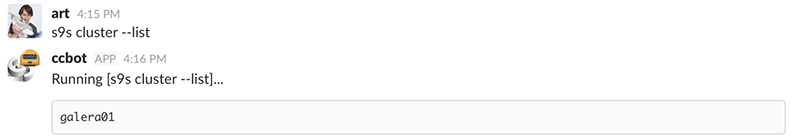

[hubot@n1 ~]$ s9s cluster --list

The output should look similar to this:

If the output is similar to the picture below, the .s9s directory hasn’t been created, or Hubot is running under a different user than the one you used to create the CCBot user.
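The same two failure causes (no .s9s directory under the account, or missing settings in the config file) can be checked before starting Hubot. The following is only a minimal sketch: it assumes s9s.conf is plain INI text like the sample above and can be read by Python's configparser, and the function names missing_settings and check_home are hypothetical helpers, not part of CCBot or the CLI.

```python
import configparser
from pathlib import Path

# Settings this post says to configure; the section name follows the sample file.
REQUIRED = {"cmon_user", "controller_host_name", "controller_port", "rpc_tls"}


def missing_settings(conf_text, section="global"):
    """Return the required keys that are absent from the given s9s.conf text."""
    # Assumption: the file is plain INI, as in the sample shown in this post.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(conf_text)
    if not parser.has_section(section):
        return set(REQUIRED)
    return REQUIRED - set(parser.options(section))


def check_home(home):
    """Describe the state of .s9s/s9s.conf under a given home directory."""
    conf = Path(home) / ".s9s" / "s9s.conf"
    if not conf.is_file():
        return f"{conf} not found: was the user created under this account?"
    missing = missing_settings(conf.read_text())
    if missing:
        return "missing settings: " + ", ".join(sorted(missing))
    return "ok"


if __name__ == "__main__":
    # Run this as the account that runs Hubot, since that is whose home is checked.
    print(check_home(Path.home()))
```

Running the script as the account that starts Hubot reports whether the config file exists there and which of the four settings, if any, are still missing.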

Interacting with ClusterControl via CCBot and CLI

If you are unsure how to use a command, you can always invoke the command line client help by adding --help. For example:

art [4:14 PM] @ccbot s9s --help

ccbot [4:14 PM] Running [s9s --help]...

Usage:

s9s COMMAND [OPTION...]

Where COMMAND is:

backup - to view, create and restore database backups.

cluster - to list and manipulate clusters.

…

Job related options:

--wait Wait until the job ends.

--log Wait and monitor job messages.

--schedule=DATE&TIME Run the job at the specified time.

And if you wish to have command-related help, simply add --help after your command:

art [4:14 PM] @ccbot s9s cluster --help

ccbot [4:14 PM] Running [s9s cluster --help]...

Usage:

s9s COMMAND [OPTION...]

…

Options for the "maintenance" command:

--list List the maintenance period.

--create Create a new maintenance period.

--delete Delete a maintenance period.

-u, --cmon-user=USERNAME The username on the Cmon system.

--cluster-id=ID The cluster for cluster maintenances.

--nodes=NODELIST The nodes for the node maintenances.

--full-uuid Print the full UUID.

--start=DATE&TIME The start of the maintenance period.

--end=DATE&TIME The end of the maintenance period.

--reason=STRING The reason for the maintenance.

--date-format=FORMAT The format of the dates printed.

--uuid=UUID The UUID to identify the maintenance period.

Now we will give a few examples of how to use the command line client with CCBot. Here is how to create a new cluster:

art [4:15 PM] @ccbot s9s cluster --create --cluster-type=galera --vendor=percona --provider-version=5.7 --db-admin-passwd='pa$$word' --os-user=vagrant --cluster-name='galera01' --nodes="10.10.37.11;10.10.37.12"

ccbot [4:14 PM] Running [s9s cluster --create --cluster-type=galera --vendor=percona --provider-version=5.7 --db-admin-passwd='pa$$word' --os-user=vagrant --cluster-name='galera01' --nodes="10.10.37.11;10.10.37.12"]...

Job with ID 1 registered.

After we have created the new cluster deployment, we can keep track of its progress by requesting the output from the jobs:

art [4:16 PM] @ccbot s9s job --list

ccbot [4:16 PM] Running [s9s job --list]...

ID CID STATE OWNER GROUP CREATED RDY TITLE

1 0 RUNNING ccbot users 2017-05-11 11:30:54 100% Create Galera Cluster

And if we wish to see this job in detail, we can request this from CCBot as well:

art [4:16 PM] @ccbot s9s job --log --job-id=1

ccbot [4:16 PM] Running [s9s job --log --job-id=1]...

Calling job: setupServer(10.10.37.11:3306).

10.10.37.11: Check and disable AppArmor.

10.10.37.11: Checking SELinux status (enabled = false).

...

Wait for a synced node (timeout: 360 sec)

Found a SYNCED galera node: 10.10.37.11:3306

Collecting config files from all nodes

Job finished, all the nodes have been added successfully.

After the job has deployed the new cluster, we can list the cluster:

art [4:19 PM] @ccbot s9s cluster --list

ccbot [4:19 PM] Running [s9s job cluster --list]...

galera01

And if we need to see the details of the cluster, we can retrieve the current stats for the cluster:

art [4:19 PM] @ccbot s9s cluster --stat --cluster-id=1

ccbot [4:19 PM] Running [s9s job cluster --stat --cluster-id=1]...

galera01 Name: galera01 Owner: ccbot/users

ID: 1 State: STARTED

Type: GALERA Vendor: percona 5.7

Status: All nodes are operational.

Alarms: 1 crit 4 warn

Jobs: 0 abort 0 defnd 0 dequd 0 faild 7 finsd 0 runng

Config: '/etc/cmon.d/cmon_1.cnf'

LogFile: '/var/log/cmon_1.log'

We can also scale the cluster by adding one additional node:

art [4:20 PM] @ccbot s9s cluster --add-node --nodes="10.10.37.13" --cluster-id=1

ccbot [4:20 PM] Running [s9s cluster --add-node --nodes="10.10.37.13" --cluster-id=1]...

Job with ID 2 registered.

And to remove the very same node, in case we made a mistake adding it in the first place:

art [4:22 PM] @ccbot s9s cluster --remove-node --nodes="10.10.37.13" --cluster-id=1

ccbot [4:22 PM] Running [s9s cluster --remove-node --nodes="10.10.37.13" --cluster-id=1]...

Job with ID 3 registered.

Scheduling a backup has also become a simple one-liner:

art [4:14 PM] @ccbot s9s backup --create --cluster-id=1 --nodes="10.10.37.12" --backup-directory="/backups/"

ccbot [4:22 PM] Running [s9s backup --create --cluster-id=1 --nodes="10.10.37.12" --backup-directory="/backups/"]...

Job with ID 11 registered.

Boundaries of the CLI

There are a couple of caveats to using the command line interface under the Hubot framework. First of all, there is no support for webhooks with the CLI. Normally you would use webhooks to send commands or status updates from an external source to a Hubot-based chatbot. Since the command line interface is decoupled from CCBot and keeps no state, it is difficult to integrate webhooks for this functionality. This means all status updates need to be requested from ClusterControl using the job list command (s9s job --list).
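Because status has to be pulled rather than pushed, anything that wants to react to job states has to read the text that the job list command prints. The sketch below parses that text into records. It assumes the column layout of the sample output shown earlier in this post (ID, CID, STATE, OWNER, GROUP, a CREATED date and time, RDY, TITLE); the Job type and parse_job_list function are hypothetical names, and other CLI versions may print different columns.

```python
from typing import List, NamedTuple


class Job(NamedTuple):
    job_id: int
    cluster_id: int
    state: str
    owner: str
    group: str
    created: str
    ready: str
    title: str


def parse_job_list(output: str) -> List[Job]:
    """Parse the text printed by `s9s job --list` into Job records.

    Assumed layout, taken from the sample output in this post:
    ID CID STATE OWNER GROUP CREATED(date time) RDY TITLE
    """
    jobs = []
    for line in output.splitlines():
        # At most 8 splits, so the free-text title stays in one piece.
        fields = line.split(None, 8)
        # Skip blank lines, the header row, and anything that is not a job row.
        if len(fields) < 9 or not (fields[0].isdigit() and fields[1].isdigit()):
            continue
        job_id, cid, state, owner, group, date, clock, ready, title = fields
        jobs.append(Job(int(job_id), int(cid), state, owner, group,
                        f"{date} {clock}", ready, title.strip()))
    return jobs
```

Feeding it the job list output from the cluster creation example yields a single record with state RUNNING and the title "Create Galera Cluster".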

Also, in the initial version of CCBot we added the ability to run backups. Once a backup had been scheduled as a job, a new thread would be started to monitor the progress of the (backup) job and report updates back (privately) to the user who initiated the backup. The command to do this is still available in CCBot and fully functional. However, if a backup is started via the CLI, no monitoring thread is started. This means the user initiating the backup will not get direct feedback and has to request status updates via the command line client.
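For backups started through the CLI, the missing feedback can be approximated by polling the job state at an interval. This is only a sketch of that idea, not how CCBot's own monitoring thread is implemented. The wait_for_job function, its parameters, and the "TIMEOUT" return value are hypothetical, and the full state names are an assumption spelled out from the abbreviations (abort, faild, finsd) in the cluster stat output earlier in this post.

```python
import time
from typing import Callable

# Assumed terminal states; verify the exact names against your CLI version.
TERMINAL_STATES = {"FINISHED", "FAILED", "ABORTED"}


def wait_for_job(fetch_state: Callable[[], str],
                 report: Callable[[str], None],
                 interval: float = 10.0,
                 max_polls: int = 360) -> str:
    """Poll fetch_state() until a terminal state or max_polls is reached.

    report() is called once per state change, which is where a bot would
    message the user who started the backup. Returns the final state, or
    the string "TIMEOUT" if no terminal state was seen in time.
    """
    last = None
    for _ in range(max_polls):
        state = fetch_state()
        if state != last:
            report(state)
            last = state
        if state in TERMINAL_STATES:
            return state
        time.sleep(interval)
    return "TIMEOUT"
```

Here fetch_state is whatever callable returns the current state of one job, for example by running the job list command and picking out the row for that job ID.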

Another limitation is that the CLI has been implemented in CCBot as a single command. This means that if you integrate CCBot into an existing chatbot with user authorization, it is difficult to disallow specific commands that would be sent to the command line client. Depending on your needs, you could create additional proxy commands for the CLI and allow or disallow them per user.
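One way to picture such a proxy is a per-user allowlist that is consulted before a chat message is handed to the CLI. The sketch below is an illustration under stated assumptions, not part of CCBot: the POLICY table, the user names in it, and is_allowed are hypothetical, and the rule that every option without "=value" must be explicitly permitted is a deliberately strict simplification.

```python
import shlex

# Hypothetical policy: which (command, option) pairs each chat user may run.
POLICY = {
    "art": {("cluster", "--list"), ("cluster", "--stat"), ("job", "--list"),
            ("job", "--log"), ("backup", "--create")},
    "guest": {("cluster", "--list"), ("job", "--list")},
}


def is_allowed(user: str, message: str) -> bool:
    """Return True if `message` is an s9s call that POLICY permits for `user`."""
    try:
        tokens = shlex.split(message)
    except ValueError:
        # Unbalanced quotes: refuse rather than guess.
        return False
    if len(tokens) < 3 or tokens[0] != "s9s":
        return False
    command = tokens[1]
    allowed = POLICY.get(user, set())
    # Options without "=value" (such as --create or --remove-node) are treated
    # as actions, and every one of them must be permitted for this user.
    flags = [t for t in tokens[2:] if t.startswith("-") and "=" not in t]
    return bool(flags) and all((command, flag) in allowed for flag in flags)
```

With this policy, "guest" can list clusters and jobs but cannot remove a node, and unknown users are refused outright. A check like this only makes sense if the approved tokens are then executed directly as an argument list rather than through a shell, since option values are not inspected.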

These boundaries are present in this initial launch of the CCBot CLI version, but we are working hard to overcome them in the next version.

Conclusion

The command line client is really easy to use, and if you are accustomed to the command line of your own operating system, it will be a great addition. However, not everyone has access to the hosts with the command line client installed, and allowing external connections to send commands to ClusterControl may not be in the best interest of security. Adding the command line client to our chatbot, CCBot, opens it up to users who do not regularly work with a command line. It allows them to send commands to ClusterControl that they normally wouldn’t have had access to. We’d love to hear your thoughts on this.