blog

Comparing Deployment Patterns for MongoDB

MongoDB deployment in production can only really work if the right deployment pattern is adhered to. Deploying a replica set in a single host does not guarantee the high availability of data. Dealing with big data requires extensive research and optimal implementations, either by combining the available options or picking the one with the highest promising benefits.

Deployment patterns for MongoDB include:

- Three member Replica Sets

- Replica sets distributed across two or more data centers.

Three Member Replica Sets

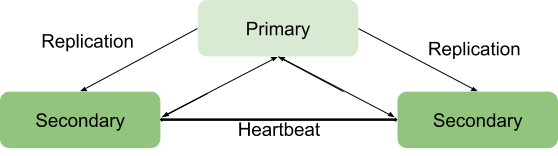

Replication is a scaling strategy for MongoDB that enhances High Availability of data. A replica set involves:

- A primary node: responsible for all write throughput operations and can also be read from.

- Secondary nodes: Can only be used for read operations but can be elected to primary in case the existing one fails. They obtain their data updates from an oplog generated by the primary member of the set.

- Arbiter. Used to facilitate the election of a primary in case there is an even number of replica set members. It does not host any copy of the data.

Benefits of a replica set can only be attained with a minimum number of three members with the following architecture:

Primary-Secondary-Secondary

This is the most recommended since it has a greater fault tolerance and addresses the limitations of adding a third data bearing member such as cost.

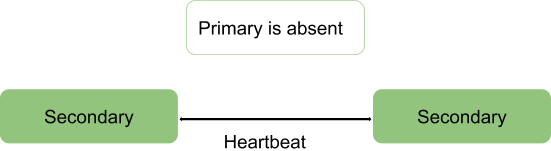

This deployment will always provide two complete copies besides the primary data hence ensuring high availability. Failure of the primary will trigger the replica set to elect a new primary and the serving operation will resume as normal. If the old primary becomes alive it will be categorized as a secondary member.

During the election process, the members signalize to each other through a heartbeat and there are no write operations taking place during this time

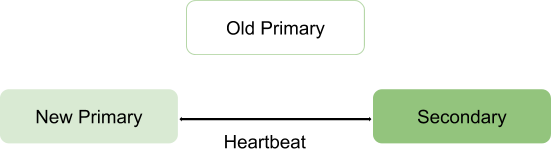

After the election process we assume the architecture to reform as:

Primary-Secondary-Arbiter

This ensures the replica set remains available even if the primary or secondary is unavailable by facilitating the election process of a secondary to a primary. Arbiters do not carry any copy of the data hence require fewer resources to manage.

A limitation with this deployment is; no redundancy since there are only two data bearing members: primary and secondary. This results in a lower fault tolerance.

Fault tolerance should be able to ensure:

- Write availability: majority of voting replica set members is needed to maintain or elect the primary which is responsible for the write operations.

- Data redundancy: write can be acknowledged by multiple members to avoid rollbacks

The Primary-Secondary-Arbiter configuration supports the write availability aspect only such that if a single member of the set is unavailable a primary can still be maintained.

However, failure to support the second aspect result into some operational consequences if the secondary member becomes unavailable:

- There will be no active replication especially if the secondary is offline for long. When the secondary is offline for too long, it may fall off the oplog forcing one to resync it during restart.

- Data redundancy will be sabotaged forcing write operation to be acknowledged by the current primary only.

- Majority with concern option will not provide the newest data to the connected applications and internal processes. This is the case when your configuration expects writes to request majority acknowledgement hence gets blocked until majority of data bearing members are available.

- Chunk migration between shards will also be compromised if the replica set is part of a sharded cluster.

- Pressure on the WiredTiger storage engine cache if rollbacks happen and the majority commit point cannot be advanced.

To avoid these consequences, one can opt for a Primary-Secondary-Secondary configuration as it increases fault tolerance.

Note: Fault tolerance does not come along only in case of failure but also some system operations such as software upgrade and normal maintenance may force a member to be unavailable briefly.

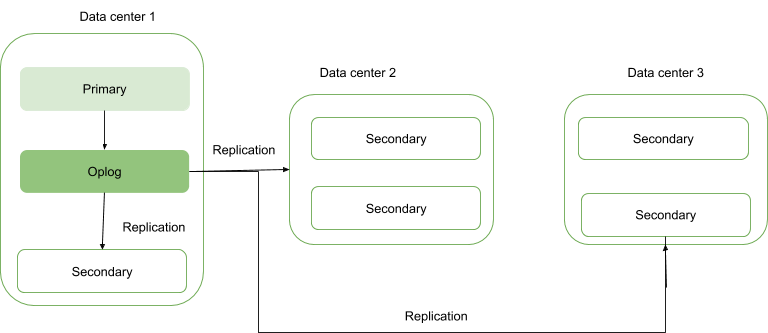

Replica Sets Distributed Across Two or More Data Centers

High availability can be elevated to another level by distributing replica set members across geographically distinct data centers. This approach will increase redundancy besides ensuring high fault tolerance in case any data center becomes unavailable.

If all the members are located in a single data center, the replica set is susceptible to data center failures such as network transients and power outages.

It is advisable to keep at least one member in an alternate data center, use an odd number of data centers and select a distribution of members that will offer a majority for election or at minimum provide a copy of the data in case of failure.

The configuration should ensure that if any data center goes down, the replica set remains writable since the remaining members can hold an election.

Distribute your data at least across three data centers.

Members may be limited to resources or have network restraints thus making them unsuitable to become primary in case of failover. You can configure these members not to become primary by giving them priority 0.

Members in a data center may have a higher priority than other data centers to give them a voting priority such that they can elect primary before members in other data centers.

All members in the Replica Set should be able to communicate with each other.

Conclusion

Replication benefits can be elevated to a more promising status by distributing the members across a number of data centers. This essentially increases fault tolerance besides ensuring data redundancy. Replica Set members when distributed across two or more data centers provides benefits over a single data center such as:

If one of the data centers goes down, data is still available for reads unlike for a single data center distribution.

Write operations can still be acknowledged whenever a data center with minority members goes down.

Read operations can still be possible if the data center with majority voting members goes down unlike the case for single data center.