What is Hyperconvergence in a Private Cloud?

In recent years, the term “hyperconvergence” has emerged and has been steadily disrupting the enterprise IT market with its simplicity. Old-fashioned solutions are being challenged across the enterprise world by a new breed of intelligent technologies such as hyperconvergence. Hyperconvergence is currently one of the fastest-growing technologies in the data center industry, focusing on simplicity and efficiency in managing the multiple tiers of bare-metal infrastructure.

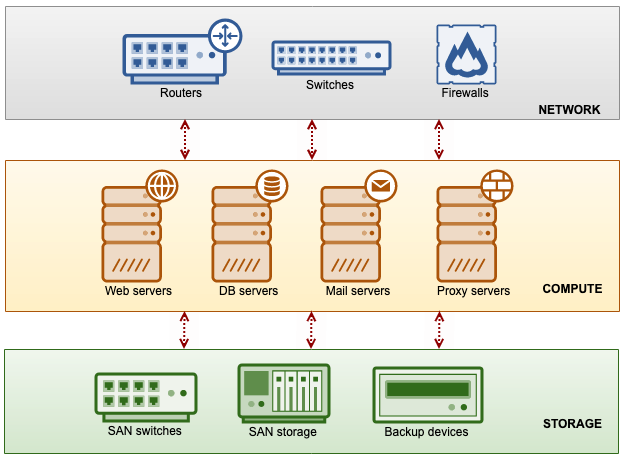

Traditional Cloud Infrastructure

Traditionally, a data center infrastructure consists of three independent tiers (compute, storage and network), as illustrated in the following diagram:

These tiers are independent of each other, so each one can be managed flexibly without affecting or disrupting the others. For example, upgrading the RAM on one of the servers in the compute tier will not bring the whole system down. You just need to take that server offline for maintenance, and its workloads can be served by other servers because the persistent data resides in the storage tier rather than on the server itself.

However, managing multiple tiers requires a dedicated technical team for every tier. Each team has its own set of tools, policies, specifications, requirements, vendors and issues to deal with. For example, in the storage tier, the SAN storage and backup devices each have their own UI and management tools, which vary by vendor and appliance version and often require different configuration options and skill sets. The storage team has to acquire the knowledge, skills and experience to be the resident expert in handling these appliances efficiently.

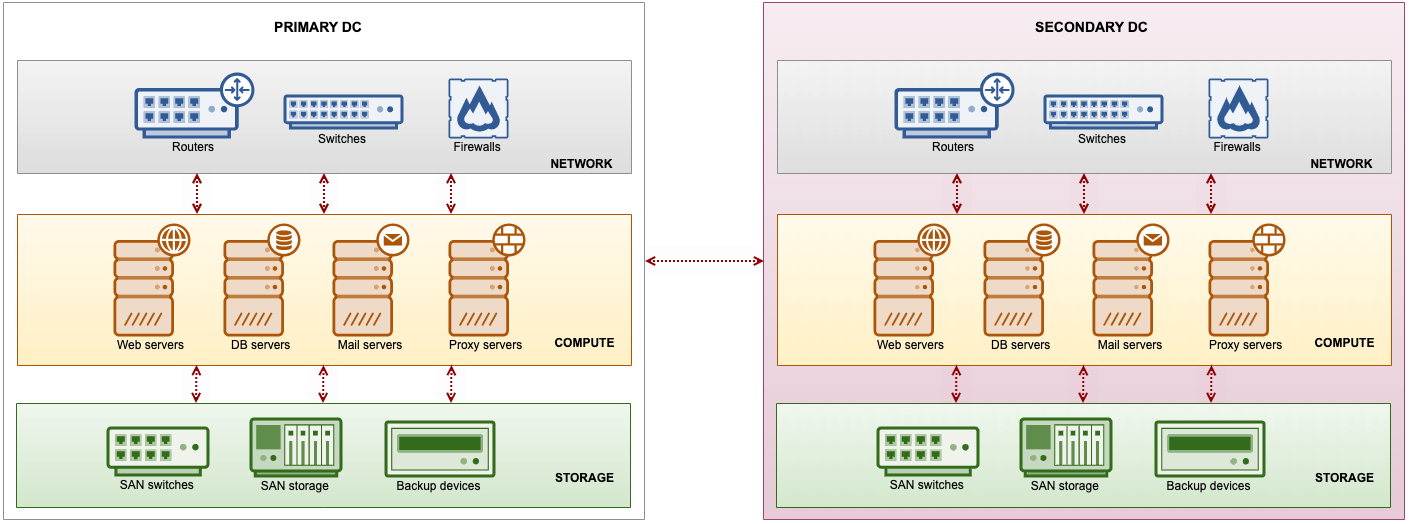

In real-world implementations, few enterprises rely on a single data center. The management complexity becomes more apparent when you have to add redundancy for business continuity and scalability for business agility. The same set of tiers has to be deployed in another data center, connected via a wide-area network (WAN), as shown in the following diagram:

Since bandwidth is expensive, you probably want to optimize data replication and synchronization between sites. The optimization can include data compression, deduplication, prioritization and encryption to maximize data-transfer efficiency across the WAN. This is not an easy task, given the vast amount of data that needs to be replicated, the replication factor (how many sites the data is replicated to) and the replication data flow (unidirectional, bidirectional or omnidirectional). Don’t forget that you are still bound by limited WAN bandwidth.
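
Deduplication and compression are the two techniques above that most directly shrink what crosses the WAN. The Python sketch below illustrates the idea under simple, assumed conditions: data is split into fixed 4 KiB chunks, each chunk is fingerprinted with SHA-256, only chunks not seen before are compressed with zlib and sent, and an ordered list of fingerprints (the "recipe") lets the remote site rebuild the stream. The function names and chunk size are illustrative only; commercial HCI and WAN-optimization products use their own, often more sophisticated, chunking schemes and formats.

```python
import hashlib
import zlib

DIGEST_BYTES = 32  # size of a raw SHA-256 fingerprint


def prepare_for_wan(data: bytes, chunk_size: int = 4096):
    """Deduplicate fixed-size chunks, then compress only the new ones.

    Returns (compressed unique chunks, ordered fingerprint recipe, reduction ratio).
    The ratio counts the recipe as sent bytes too, since the remote site needs it.
    """
    seen: set[bytes] = set()
    payload: list[bytes] = []
    recipe: list[bytes] = []
    for offset in range(0, len(data), chunk_size):
        chunk = data[offset:offset + chunk_size]
        digest = hashlib.sha256(chunk).digest()
        recipe.append(digest)
        if digest not in seen:  # only previously unseen content crosses the WAN
            seen.add(digest)
            payload.append(zlib.compress(chunk, 6))
    sent_bytes = sum(len(c) for c in payload) + DIGEST_BYTES * len(recipe)
    ratio = len(data) / sent_bytes if sent_bytes else 1.0
    return payload, recipe, ratio


def rebuild(payload: list[bytes], recipe: list[bytes]) -> bytes:
    """Reassemble the original stream at the remote site."""
    store: dict[bytes, bytes] = {}
    unique = iter(payload)  # unique chunks arrive in first-seen order
    parts = []
    for digest in recipe:
        if digest not in store:
            store[digest] = zlib.decompress(next(unique))
        parts.append(store[digest])
    return b"".join(parts)
```

For example, a 32 KiB stream made of eight identical 4 KiB chunks produces a single compressed chunk plus eight fingerprints, so its reduction ratio is far above 1; random data, by contrast, barely reduces at all and can even grow slightly once fingerprints are counted.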

Communication between the teams is also crucial to make sure everyone is aware of changes and keeps up with what is going on in the other tiers. Miscommunication can lead to catastrophic failures, especially in high-pressure situations such as when a real disaster strikes or when urgent maintenance is required. Although the tiers are independent of each other, from a higher-level perspective they form a single computing unit and are logically dependent on each other.

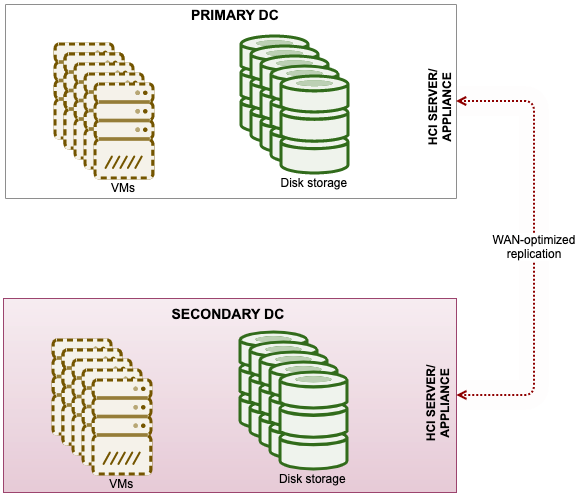

Hyperconverged Infrastructure (HCI)

Hyperconverged infrastructure (HCI) provides simplicity and efficiency by combining multiple tiers of bare-metal infrastructure into a single tier, as illustrated below:

An HCI appliance comes with powerful CPUs and a pool of fast disks, all controlled through a software-defined approach. HCI combines two or more important tiers, in this example compute and storage, into one physical host without sacrificing any of the functionality and features of traditional data center infrastructure. From the infrastructure point of view, one of the biggest benefits is that the storage network in HCI solutions is greatly simplified compared to a traditional SAN.
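
To make the "pool of fast disks" idea concrete, here is a minimal Python sketch of how a software-defined storage layer might present the local resources of several HCI nodes as one pool. It assumes, for illustration only, that data is protected by keeping `replication_factor` full copies of each block on different nodes (a factor of 2 is used here as an example; products differ), and it ignores metadata overhead, spare capacity and data reduction. `HciNode` and `cluster_pool` are hypothetical names, not any vendor's API.

```python
from dataclasses import dataclass


@dataclass
class HciNode:
    """One hypothetical HCI appliance: compute and local disks in a single box."""
    name: str
    cpu_cores: int
    disk_tb: list[float]  # raw capacity of each local disk, in TB


def cluster_pool(nodes: list[HciNode], replication_factor: int = 2) -> dict[str, float]:
    """Aggregate the nodes' local resources into one logical pool.

    Assumes every block is stored `replication_factor` times on different
    nodes, so usable capacity is raw capacity divided by that factor.
    """
    if replication_factor < 1 or replication_factor > len(nodes):
        raise ValueError("need at least as many nodes as data copies")
    raw_tb = sum(sum(node.disk_tb) for node in nodes)
    return {
        "cpu_cores": sum(node.cpu_cores for node in nodes),
        "raw_tb": raw_tb,
        "usable_tb": raw_tb / replication_factor,
    }


nodes = [HciNode(f"node-{i}", 32, [4.0, 4.0, 4.0, 4.0]) for i in range(3)]
pool = cluster_pool(nodes)
```

Three nodes with 32 cores and four 4 TB disks each give 96 cores and 48 TB of raw capacity, of which 24 TB is usable at a replication factor of 2. Adding another node grows compute and storage together, rather than expanding a separate SAN.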

In terms of hardware consolidation, HCI can often consolidate four racks of traditional data center equipment into a single rack (a 4:1 ratio). In some cases, the consolidation ratio can reach 10:1, depending on the HCI software, the appliances and the number of components involved. HCI solutions commonly use a sophisticated file system to ensure all data is compressed and optimized before being sent over long distances. For data replication and synchronization, some providers advertise up to 50:1 data reduction for WAN traffic.
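
A quick back-of-the-envelope calculation shows why data reduction of this kind matters. The Python sketch below estimates how long a replication run would take over a WAN link, assuming decimal units (1 TB = 10^12 bytes, 1 Mbps = 10^6 bit/s), a fully utilized link and no protocol overhead. The figures in the example are hypothetical, and `reduction_ratio` is simply the N in an N:1 data reduction claim.

```python
def wan_transfer_hours(data_tb: float, link_mbps: float, reduction_ratio: float = 1.0) -> float:
    """Estimate hours to replicate `data_tb` terabytes over a `link_mbps` WAN link.

    Assumes decimal units, a fully utilized link, no protocol overhead,
    and an N:1 data reduction expressed as reduction_ratio=N.
    """
    if link_mbps <= 0 or reduction_ratio <= 0:
        raise ValueError("link speed and reduction ratio must be positive")
    bits_on_wire = data_tb * 1e12 * 8 / reduction_ratio
    seconds = bits_on_wire / (link_mbps * 1e6)
    return seconds / 3600


baseline = wan_transfer_hours(10, 1000)     # 10 TB over 1 Gbps, no reduction
reduced = wan_transfer_hours(10, 1000, 50)  # same data with 50:1 reduction
print(f"{baseline:.1f} h without reduction vs {reduced:.2f} h at 50:1")
```

Under these assumptions, 10 TB over a 1 Gbps link takes 80,000 seconds (about 22 hours) without reduction, while a 50:1 reduction cuts it to 1,600 seconds (about 27 minutes). Actual ratios depend heavily on how much redundancy the data contains.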

HCI removes much of the infrastructure complexity by introducing a simpler, more efficient way of constructing a data center, managed through a single user interface. There are no longer separate compute and storage tiers, and HCI comes with a range of infrastructure-centric built-in features such as auto-scaling, site failover, backup and disaster recovery. You can choose from multiple vendors and hardware options depending on your budget and requirements. There are also AI-driven HCI solutions that aim to deliver self-managing, self-optimizing and self-healing infrastructure.
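
At its core, built-in auto-scaling is a capacity policy. The Python sketch below is a hypothetical scale-out rule, not any vendor's algorithm: given current usage and the usable capacity of each identical node, it computes how many nodes must be added so that utilization stays at or below an assumed target of 75%.

```python
import math


def nodes_to_add(used: float, usable_per_node: float, current_nodes: int,
                 target_utilization: float = 0.75) -> int:
    """Hypothetical scale-out rule for a cluster of identical HCI nodes.

    Returns how many nodes to add so that used / total_capacity <= target_utilization.
    `used` and `usable_per_node` only need to share a unit (e.g. TB).
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if usable_per_node <= 0:
        raise ValueError("usable_per_node must be positive")
    required = math.ceil(used / (usable_per_node * target_utilization))
    return max(0, required - current_nodes)
```

For example, a four-node cluster with 24 TB usable per node that holds 90 TB is at about 94% utilization; `nodes_to_add(90, 24, 4)` returns 1, because five nodes (120 TB) bring utilization down to 75%. A production platform would also weigh CPU and memory, failure domains and headroom for rebuilds.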

There are many HCI solutions on the market; among the most popular are HPE SimpliVity, Dell VxRail and Nutanix. Note that HCI is often more expensive upfront than buying individual components, because you are purchasing an all-in-one solution.

HCI and Private Cloud

A private cloud essentially brings the public cloud experience (flexibility, a consumption-based model, scalability and usability) into a private data center. HCI is built with ease of use, full API-based remote management and hardware consolidation in mind. That’s why HCI is so popular as the base building block of private cloud infrastructure. With automation layers, a simple-to-use end-user portal and flexible infrastructure in place, together with solid financial backing, you can provide the same cloud experience within your own data center.
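
Full API-based remote management is what lets an automation layer or self-service portal provision resources without anyone clicking through a UI. The Python sketch below only builds the HTTP request such a portal might send; the base URL, the `/api/v1/vms` path, the payload fields and the bearer-token scheme are all hypothetical, since each HCI vendor documents its own REST API.

```python
import json
import urllib.request


def build_vm_request(base_url: str, token: str, name: str,
                     vcpus: int, memory_gb: int, disk_gb: int) -> urllib.request.Request:
    """Build (but do not send) a VM-creation request for a hypothetical HCI REST API."""
    payload = {"name": name, "vcpus": vcpus, "memory_gb": memory_gb, "disk_gb": disk_gb}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/v1/vms",  # hypothetical endpoint path
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",  # hypothetical auth scheme
        },
        method="POST",
    )


req = build_vm_request("https://hci.example.internal/", "TOKEN", "web-01", 4, 16, 100)
# urllib.request.urlopen(req) would send it; omitted because the endpoint is fictional.
```

Because each resource operation is an API call like this, the same request can be issued by a portal, a script or a pipeline, which is what makes API-driven infrastructure a convenient foundation for private cloud automation.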

To build a private cloud, the underlying infrastructure needs to be as flexible, scalable and easy to manage as possible. Modern infrastructure approaches are better suited to these requirements, and HCI is one of them. HCI enables the deployment of cloud-like infrastructure on-premises at competitive cost, with more control and improved security.

Major providers, from traditional infrastructure vendors such as Dell, HPE and NetApp to public cloud service providers such as AWS, Google Cloud and Microsoft Azure, agree that the future of IT is hybrid: some apps run in the public cloud, some run in private data centers and some computing is done at the edge. It will be interesting to see what comes next in infrastructure technology to shape and improve the cloud computing experience.