MariaDB MaxScale Load Balancing on Docker: Management: Part Two

This blog post is a continuation of MariaDB MaxScale Load Balancing on Docker: Deployment – Part1. In this part, we are going to focus more on management operations with advanced use cases like service control, configuration management, query processing, security and cluster reconciliation. The example steps and instructions shown in this post are based on the running environments that we have set up in the first part of this blog series.

Service Control

For MaxScale, starting and stopping the container is the only way to control the service. Provided the container has been created, we can use the following commands to manage the service:

$ docker start maxscale

$ docker stop maxscale

$ docker restart maxscale

Running without Root Privileges

By default, Docker containers run with root privileges, and so does the application running inside the container. This is a major security concern, because an attacker who compromises the application inside the container may be able to gain root access to the Docker host.

To run Docker as a non-root user, you have to add your user to the docker group. Firstly, create a docker group if there isn’t one:

$ sudo groupadd docker

Then, add your user to the docker group. In this example our user is “vagrant”:

$ sudo usermod -aG docker vagrant

Log out and log back in so that your group membership is re-evaluated (or reboot if it does not work). At this point, you can run the MaxScale container with the standard run command (no sudo required) as user “vagrant”:

$ docker run -d \
    --name maxscale-unprivileged \
    -p 4006:4006 \
    -p 4008:4008 \
    -p 8989:8989 \
    -v $PWD/maxscale.cnf:/etc/maxscale.cnf \
    mariadb/maxscale

The MaxScale process runs as user “maxscale” and does not require root-level privileges. Running the container in non-privileged mode is therefore the better choice if you are concerned about security.
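Before running the container as an ordinary user, it can be worth confirming that the group change has reached the current login session. The following is a minimal sketch; session_in_group is a helper name made up for this post, and it only inspects the groups of the current shell as reported by id.

```shell
# session_in_group is a hypothetical helper: it succeeds if the current
# session (not just the group database) carries the given group.
session_in_group() {
  id -nG | tr ' ' '\n' | grep -qx "$1"
}

if session_in_group docker; then
  echo "docker group membership is active in this session"
else
  echo "not in the docker group yet; log out and back in, then retry"
fi
```

Calling id without a user name is deliberate: it reports the groups of the running session, which is what changes after logging back in.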

Configuration Management

For a standalone MaxScale container, configuration management means modifying the mapped configuration file and then restarting the MaxScale container. However, if you are running MaxScale as a Docker Swarm service, the new configuration has to be loaded into Swarm Configs as a new version, for example:

$ cat maxscale.cnf | docker config create maxscale_config_v2 -

Then, update the service by removing the old config (maxscale_config) and adding the new one (maxscale_config_v2) at the same target:

$ docker service update \
    --config-rm maxscale_config \
    --config-add source=maxscale_config_v2,target=/etc/maxscale.cnf \
    maxscale-cluster

Docker Swarm will then remove and replace the containers one at a time until the replica requirement is satisfied.
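Since every change needs a new config name, the naming can be derived from the file itself instead of counting versions by hand. The following is a minimal sketch under that assumption: config_name and print_update are hypothetical helpers, the checksum-based naming scheme is our own choice rather than a Docker convention, and the commands are only printed so they can be reviewed before running them.

```shell
# config_name is a hypothetical helper: it derives a Swarm config name from
# the file content, so a changed file always gets a different name.
config_name() {
  printf 'maxscale_config_%s\n' "$(cksum < "$1" | cut -d' ' -f1)"
}

# print_update prints (does not run) the commands that roll out a new file.
#   $1 = path to the new maxscale.cnf
#   $2 = name of the config currently attached to the service
print_update() {
  new="$(config_name "$1")"
  echo "docker config create $new $1"
  echo "docker service update --config-rm $2 --config-add source=$new,target=/etc/maxscale.cnf maxscale-cluster"
}

# Usage (commented out; assumes maxscale.cnf exists in the current directory):
# print_update maxscale.cnf maxscale_config
```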

Upgrade and Downgrade

One of the advantages of running your applications in Docker is the trivial upgrade and downgrade procedure. Every running container is based on an image, and this image can be switched easily by changing the image tag. To get the list of available images for MaxScale, check out the Tags section on Docker Hub. The following example shows how to downgrade MaxScale 2.3 to the previous minor version, 2.2:

$ docker run -d \
    --name maxscale \
    -p 4006:4006 \
    -p 4008:4008 \
    -v $PWD/maxscale.cnf:/etc/maxscale.cnf \
    mariadb/maxscale:2.3

$ docker rm -f maxscale

$ docker run -d \
    --name maxscale \
    -p 4006:4006 \
    -p 4008:4008 \
    -v $PWD/maxscale.cnf:/etc/maxscale.cnf \
    mariadb/maxscale:2.2

Make sure the configuration options are compatible with the version that you want to run. For example, the above downgrade would fail on the first run with the following errors:

2019-06-19 05:29:04.301 error : (check_config_objects): Unexpected parameter 'master_reconnection' for object 'rw-service' of type 'service', or 'true' is an invalid value for parameter 'master_reconnection'.

2019-06-19 05:29:04.301 error : (check_config_objects): Unexpected parameter 'delayed_retry' for object 'rw-service' of type 'service', or 'true' is an invalid value for parameter 'delayed_retry'.

2019-06-19 05:29:04.301 error : (check_config_objects): Unexpected parameter 'transaction_replay_max_size' for object 'rw-service' of type 'service', or '1Mi' is an invalid value for parameter 'transaction_replay_max_size'.

2019-06-19 05:29:04.302 error : (check_config_objects): Unexpected parameter 'transaction_replay' for object 'rw-service' of type 'service', or 'true' is an invalid value for parameter 'transaction_replay'.

2019-06-19 05:29:04.302 error : (check_config_objects): Unexpected parameter 'causal_reads_timeout' for object 'rw-service' of type 'service', or '10' is an invalid value for parameter 'causal_reads_timeout'.

2019-06-19 05:29:04.302 error : (check_config_objects): Unexpected parameter 'causal_reads' for object 'rw-service' of type 'service', or 'true' is an invalid value for parameter 'causal_reads'.

What we need to do is remove the unsupported configuration options shown above from the configuration file before downgrading the container image:

- master_reconnection

- delayed_retry

- transaction_replay

- transaction_replay_max_size

- causal_reads_timeout

- causal_reads

Finally, start the container again and you should be good. Version upgrade for MaxScale works similarly. Just change the tag that you want to use and off you go.
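The cleanup can also be scripted. The following is a minimal sketch, assuming the configuration file holds one "name = value" pair per line as in the examples in this post. strip_params and the output file name are made up for illustration, and the parameter list is taken from the error log above.

```shell
# strip_params is a hypothetical helper (not part of MaxScale or Docker):
# it prints the config file given as $1 without the parameters named in
# the remaining arguments.
strip_params() {
  src="$1"; shift
  # Join the parameter names into an alternation: a|b|c
  pattern="$(IFS='|'; echo "$*")"
  # Drop lines of the form "<param> = value", allowing optional whitespace.
  grep -Ev "^[[:space:]]*(${pattern})[[:space:]]*=" "$src"
}

# Parameters that MaxScale 2.2 rejected in the error log above.
UNSUPPORTED="master_reconnection delayed_retry transaction_replay transaction_replay_max_size causal_reads causal_reads_timeout"

# Usage (commented out so the sketch has no side effects).
# $UNSUPPORTED is left unquoted on purpose so that it splits into words:
# strip_params maxscale.cnf $UNSUPPORTED > maxscale-2.2.cnf
```

Writing the result to a separate file keeps the 2.3 configuration intact, which makes a later upgrade a matter of mounting the original file again.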

MaxScale Filters

MaxScale uses a component called a filter to manipulate or process requests as they pass through it. There are a number of filters you can use, as listed on this page, MaxScale 2.3 Filters. For example, a specific query can be logged into a file if it matches a criterion, or you can rewrite the incoming query before it reaches the backend servers.

To activate a filter, you have to define a section and include the definition name into the corresponding service definition, as shown in the examples further down.

Query Log All (QLA)

As its name suggests, the QLA filter logs all queries that match the configured rules, on a per-client-session basis. The queries are written to files whose names start with the filebase value.

Firstly, define the component with type=filter and module=qlafilter:

## Query Log All (QLA) filter

## Filter module for MaxScale to log all query content on a per client session basis

[qla-sbtest-no-pk]

type = filter

module = qlafilter

filebase = /tmp/sbtest

match = select.*from.*

exclude = where.*id.*

user = sbtest

Then add the filter component into our services:

[rw-service]

...

filters = qla-sbtest-no-pk

[rr-service]

...

filters = qla-sbtest-no-pk

It’s also a good idea to map /tmp of the container to a directory on the Docker host, so we don’t have to enter the container to retrieve the generated log files. First, create a directory and make it world-writable:

$ mkdir qla

$ chmod 777 qla

Since we need to bind the above directory into the container, we have to stop and remove the running container and re-run it with the following commands:

$ docker stop maxscale

$ docker rm maxscale

$ docker run -d \
    --name maxscale \
    --restart always \
    -p 4006:4006 \
    -p 4008:4008 \
    -p 8989:8989 \
    -v $PWD/maxscale.cnf:/etc/maxscale.cnf \
    -v $PWD/qla:/tmp \
    mariadb/maxscale

You can then retrieve the content of the logged queries inside the qla directory:

$ cat qla/*

Date,User@Host,Query

2019-06-18 08:25:13,sbtest@::ffff:192.168.0.19,select * from sbtest.sbtest1

Query Rewriting

Query rewriting lets you quickly isolate and correct problematic queries running against the database server, and thereby improve performance.

Query rewriting can be done via regexfilter. This filter can match or exclude incoming statements using regular expressions and replace them with another statement. Every rule is defined in its own section, and the section name is included in the corresponding service to activate it.

The following filter will match a number of SHOW commands that we don’t want to expose to the read-only clients:

## Rewrite query based on regex match and replace

[block-show-commands]

type = filter

module = regexfilter

options = ignorecase

match = ^show (variables|global variables|global status|status|processlist|full processlist).*

replace = SELECT 'Not allowed'

Then we can append the filter to the service it should apply to. For example, all read-only connections should pass through the above filter:

[rr-service]

...

filters = qla-sbtest-no-pk | block-show-commands

Keep in mind that multiple filters can be chained using a syntax akin to the Linux shell pipe “|”. Restart the container to apply the configuration changes:

$ docker restart maxscale

We can then verify with the following query:

$ mysql -usbtest -p -h192.168.0.200 -P4006 -e 'SHOW VARIABLES LIKE "max_connections"'

+-------------+

| Not allowed |

+-------------+

| Not allowed |

+-------------+

The query returns the replacement result instead of the variable, as expected.
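Before restarting MaxScale, it can help to try the pattern against a few sample statements. The following is a rough sketch that uses grep -E as a stand-in for the filter; MaxScale evaluates the pattern with its own regular expression engine, so treat the result as an approximation. would_rewrite is a helper name made up for this post, and the sample statements are ours.

```shell
# Pattern copied from the block-show-commands filter above.
PATTERN='^show (variables|global variables|global status|status|processlist|full processlist).*'

# would_rewrite is a hypothetical helper: it succeeds if the statement
# matches the pattern case-insensitively (mirroring options = ignorecase).
would_rewrite() {
  printf '%s\n' "$1" | grep -Eiq "$PATTERN"
}

for stmt in 'SHOW VARIABLES LIKE "max_connections"' \
            'show full processlist' \
            'SELECT * FROM sbtest.sbtest1' \
            'SHOW TABLES'; do
  if would_rewrite "$stmt"; then
    echo "rewritten: $stmt"
  else
    echo "passed:    $stmt"
  fi
done
```

Note that SHOW TABLES is not in the alternation, so it passes through untouched; only the listed SHOW variants are replaced.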

Cluster Recovery

MaxScale 2.2.2 and later supports automatic or manual MariaDB replication or cluster recovery for the following events:

- failover

- switchover

- rejoin

- reset-replication

Failover for the master-slave cluster can and often should be set to activate automatically. Switchover must be activated manually through MaxAdmin, MaxCtrl or the REST interface. Rejoin can be set to automatic or activated manually. These features are implemented in the “mariadbmon” module.

The following automatic failover events occur if we purposely shut down the active master, 192.168.0.91:

$ docker logs -f maxscale

...

2019-06-19 03:53:02.348 error : (mon_log_connect_error): Monitor was unable to connect to server mariadb1[192.168.0.91:3306] : 'Can't connect to MySQL server on '192.168.0.91' (115)'

2019-06-19 03:53:02.351 notice : (mon_log_state_change): Server changed state: mariadb1[192.168.0.91:3306]: master_down. [Master, Running] -> [Down]

2019-06-19 03:53:02.351 warning: (handle_auto_failover): Master has failed. If master status does not change in 4 monitor passes, failover begins.

2019-06-19 03:53:16.710 notice : (select_promotion_target): Selecting a server to promote and replace 'mariadb1'. Candidates are: 'mariadb2', 'mariadb3'.

2019-06-19 03:53:16.710 warning: (warn_replication_settings): Slave 'mariadb2' has gtid_strict_mode disabled. Enabling this setting is recommended. For more information, see https://mariadb.com/kb/en/library/gtid/#gtid_strict_mode

2019-06-19 03:53:16.711 warning: (warn_replication_settings): Slave 'mariadb3' has gtid_strict_mode disabled. Enabling this setting is recommended. For more information, see https://mariadb.com/kb/en/library/gtid/#gtid_strict_mode

2019-06-19 03:53:16.711 notice : (select_promotion_target): Selected 'mariadb2'.

2019-06-19 03:53:16.711 notice : (handle_auto_failover): Performing automatic failover to replace failed master 'mariadb1'.

2019-06-19 03:53:16.723 notice : (redirect_slaves_ex): Redirecting 'mariadb3' to replicate from 'mariadb2' instead of 'mariadb1'.

2019-06-19 03:53:16.742 notice : (redirect_slaves_ex): All redirects successful.

2019-06-19 03:53:17.249 notice : (wait_cluster_stabilization): All redirected slaves successfully started replication from 'mariadb2'.

2019-06-19 03:53:17.249 notice : (handle_auto_failover): Failover 'mariadb1' -> 'mariadb2' performed.

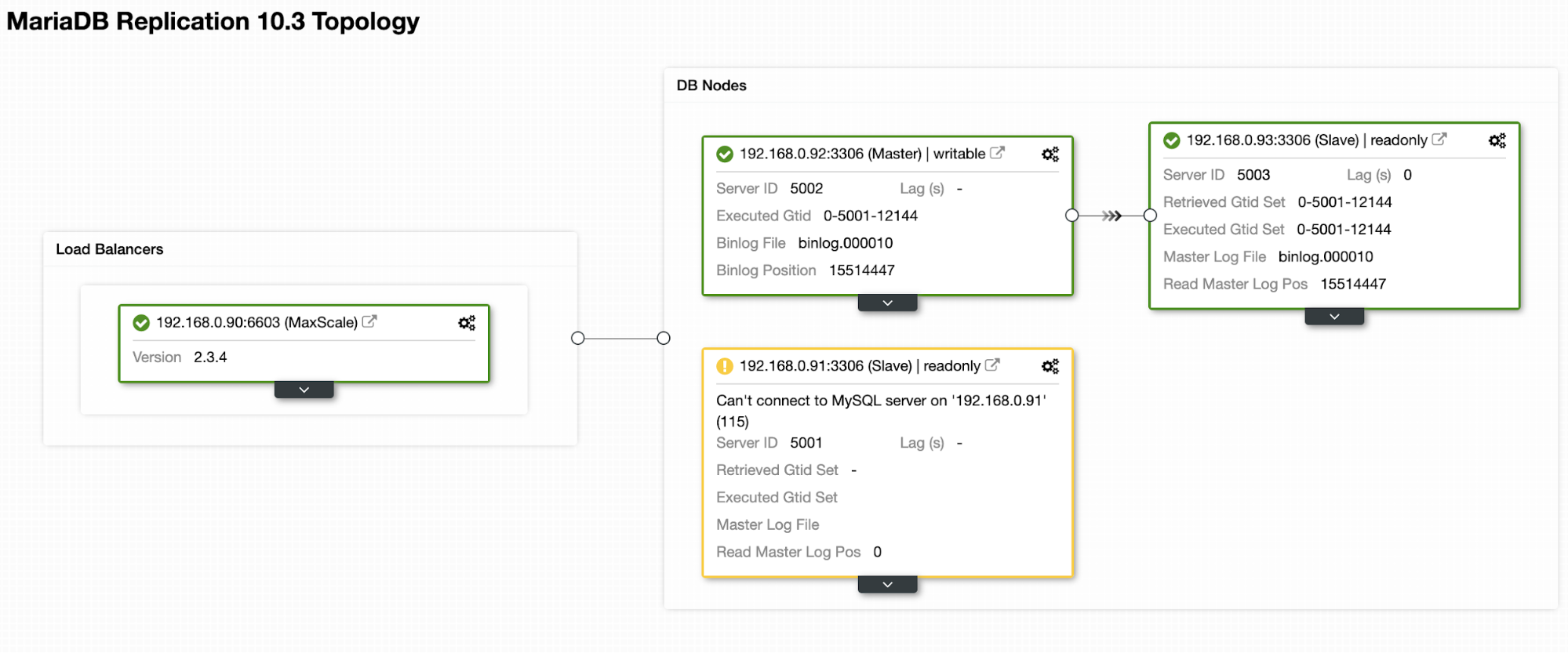

2019-06-19 03:53:20.363 notice : (mon_log_state_change): Server changed state: mariadb2[192.168.0.92:3306]: new_master. [Slave, Running] -> [Master, Running]After failover completes, our topology is now looking like this:
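The MaxScale log is verbose, so when reviewing a failover it helps to keep only the lines that describe state changes and failover decisions. The following is a minimal sketch; failover_events is a made-up helper name, and the function tags it filters on are the ones that appear in the log excerpt above.

```shell
# failover_events is a hypothetical helper: it reads MaxScale log lines on
# stdin and keeps only server state changes, promotion target selection
# and automatic failover messages.
failover_events() {
  grep -E '\((mon_log_state_change|select_promotion_target|handle_auto_failover)\)'
}

# Usage against the running container (commented out; requires Docker):
# docker logs maxscale 2>&1 | failover_events
```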

Switchover requires human intervention, and one way to do it is through the MaxCtrl console. Let’s say the old master is back in operation and ready to be promoted to master again. We can perform the switchover by sending the following command:

$ docker exec -it maxscale maxctrl

maxctrl: call command mariadbmon switchover monitor mariadb1 mariadb2

OK

where the format is:

$ call command

Then, verify the new topology by listing the servers:

maxctrl: list servers

┌──────────┬──────────────┬──────┬─────────────┬─────────────────┬──────────────┐
│ Server   │ Address      │ Port │ Connections │ State           │ GTID         │
├──────────┼──────────────┼──────┼─────────────┼─────────────────┼──────────────┤
│ mariadb1 │ 192.168.0.91 │ 3306 │ 0           │ Master, Running │ 0-5001-12144 │
├──────────┼──────────────┼──────┼─────────────┼─────────────────┼──────────────┤
│ mariadb2 │ 192.168.0.92 │ 3306 │ 0           │ Slave, Running  │ 0-5001-12144 │
├──────────┼──────────────┼──────┼─────────────┼─────────────────┼──────────────┤
│ mariadb3 │ 192.168.0.93 │ 3306 │ 0           │ Slave, Running  │ 0-5001-12144 │
└──────────┴──────────────┴──────┴─────────────┴─────────────────┴──────────────┘

We just promoted our old master back to its original spot. Fun fact: the ClusterControl automatic recovery feature does exactly the same thing if it is enabled.
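If the topology check needs to be part of a script, the table output can be reduced to the name of the current master. The following is a minimal sketch that assumes the table layout shown above, with State as the fifth column; current_master is a helper name made up for this post.

```shell
# current_master is a hypothetical helper: it reads the "list servers"
# table on stdin and prints every server whose State column contains
# "Master". Rows are split on the vertical box-drawing character, so the
# first field is empty and the State column is field 6.
current_master() {
  awk -F'│' 'NF >= 7 && $6 ~ /Master/ { gsub(/ /, "", $2); print $2 }'
}

# Usage against the running container (commented out; requires Docker):
# docker exec maxscale maxctrl list servers | current_master
```

Parsing human-readable output is fragile by nature, so this is best kept for quick checks; the REST interface mentioned earlier is the sturdier option for automation.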

Final Thoughts

Running MariaDB MaxScale on Docker brings additional benefits such as MaxScale clustering, easy upgrades and downgrades, and advanced proxying functionality for MySQL and MariaDB clusters.