Infrastructure Automation – Ansible Role for ClusterControl

If you automate your server infrastructure with Ansible, this post is for you. We are glad to announce the availability of an Ansible role for ClusterControl, published on Ansible Galaxy. For those who automate with Puppet or Chef, we have already published a Puppet module and a Chef cookbook for ClusterControl. You can also check out our Tools page.

ClusterControl Ansible Role

The Ansible role is also available from our GitHub repository. It does the following:

- Configure Severalnines repository.

- Install and configure MySQL (MariaDB for CentOS/RHEL 7).

- Install and configure Apache and PHP.

- Set up the rewrite and SSL modules for Apache.

- Install and configure ClusterControl suite (controller, UI and CMONAPI).

- Generate an SSH key for cmon_ssh_user (default is root).

This role is built on top of Ansible v1.9.4 and is tested on Debian 8 (Jessie), Ubuntu 12.04 (Precise), RHEL/CentOS 6 and 7.
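Since the role was built against Ansible v1.9.4, it can be worth checking which version is installed on the control host before running it. The following is a minimal sketch and not part of the role: `version_at_least` is our own hypothetical helper, and it assumes a `sort` that supports `-V` (as in GNU coreutils).

```shell
# Return success when version $1 is at least version $2.
# Assumes `sort -V` (version sort) is available.
version_at_least() {
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

required="1.9.4"

# Take the first version-looking token from the first line of `ansible --version`.
# The result is empty when ansible is not installed.
installed="$( (ansible --version 2>/dev/null || true) | head -n 1 | (grep -Eo '[0-9]+(\.[0-9]+)+' || true) | head -n 1)"

if [ -z "$installed" ]; then
    echo "ansible not found on PATH"
elif version_at_least "$installed" "$required"; then
    echo "ansible $installed is at least $required"
else
    echo "ansible $installed is older than $required"
fi
```

The comparison is purely numeric, so it says nothing about whether a much newer Ansible release still behaves the way the role expects.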

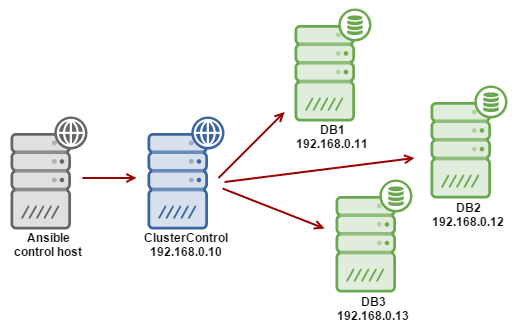

Example Deployment

Let’s assume that we already have Ansible installed on a host, and we want to have a ClusterControl host to deploy and manage a three-node Percona XtraDB Cluster. The following is our architecture diagram:

- Get the ClusterControl Ansible role from Ansible Galaxy or GitHub.

  For Ansible Galaxy:

      $ ansible-galaxy install severalnines.clustercontrol

  For GitHub:

      $ git clone https://github.com/severalnines/ansible-clustercontrol
      $ cp -rf ansible-clustercontrol /etc/ansible/roles/severalnines.clustercontrol

- Configure the ClusterControl host in Ansible. Add the following line into /etc/ansible/hosts:

      192.168.0.10

- Create a playbook. In this example, we create a minimal Ansible playbook called cc.yml and add the following lines:

      - hosts: 192.168.0.10
        roles:
          - { role: severalnines.clustercontrol }

- Generate an SSH key and set up passwordless SSH from the Ansible control host to the ClusterControl host as the root user:

      $ ssh-keygen -t rsa
      $ ssh-copy-id 192.168.0.10

- Run the Ansible playbook:

      $ ansible-playbook cc.yml

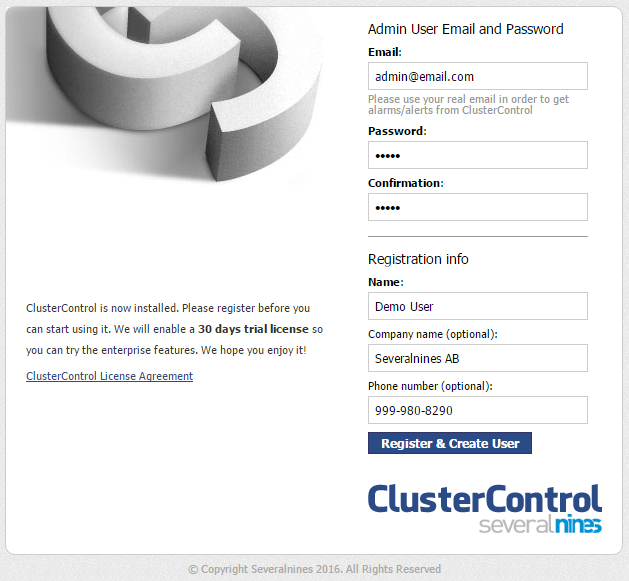

- Once ClusterControl is installed, go to https://192.168.0.10/clustercontrol and create the default admin user and password.

- On the ClusterControl node, set up passwordless SSH to all target DB nodes. For example, if the ClusterControl node is 192.168.0.10 and the DB nodes are 192.168.0.11, 192.168.0.12 and 192.168.0.13:

      $ ssh-copy-id 192.168.0.11 # DB1
      $ ssh-copy-id 192.168.0.12 # DB2
      $ ssh-copy-id 192.168.0.13 # DB3

  Enter the password when prompted to complete the passwordless SSH setup.

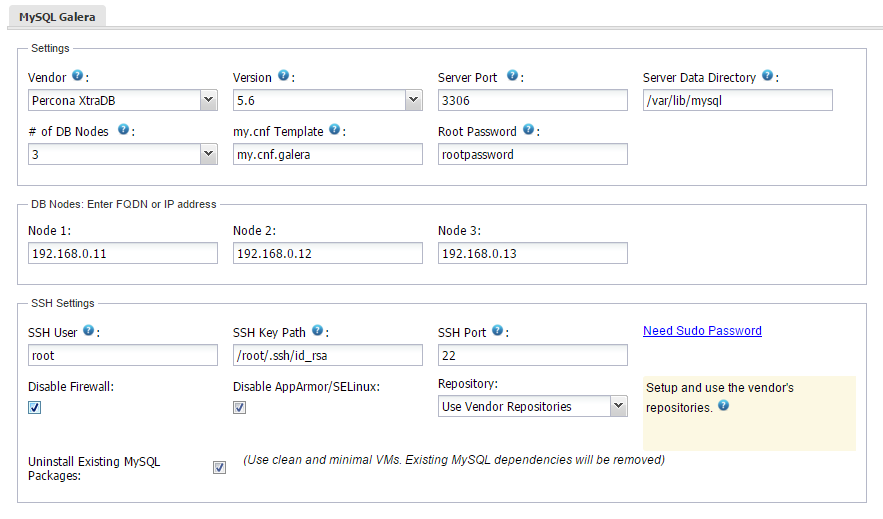

- To deploy a new database cluster, click on “Create Database Cluster” and specify the following:

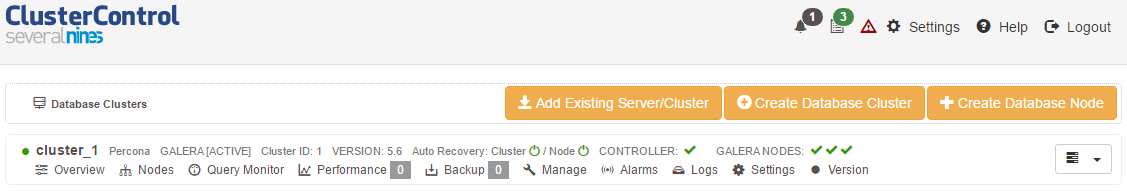

Grab a cup of coffee while waiting for the cluster to be deployed. It usually takes 15 to 20 minutes, depending on the internet connection. Once the job is completed, you should see the cluster in the database cluster list, similar to the screenshot below:

Galera cluster is now deployed.
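The command-line parts of the deployment above can be collected into one script. This is a rough sketch under a few assumptions: it writes the inventory and playbook to a scratch directory instead of /etc/ansible/hosts and passes the inventory with `-i`, the names `CC_WORKDIR` and `print_plan` are hypothetical, and it only prints the remaining commands rather than running them, so nothing touches a real host.

```shell
# Sketch only: prepare the example files and print the commands to run.
cc_host="192.168.0.10"                               # ClusterControl host from the example
db_nodes="192.168.0.11 192.168.0.12 192.168.0.13"    # DB1..DB3 from the example
workdir="${CC_WORKDIR:-$(mktemp -d)}"                # CC_WORKDIR is a hypothetical override
mkdir -p "$workdir"

# Inventory: the same single host line the example adds to /etc/ansible/hosts.
printf '%s\n' "$cc_host" > "$workdir/hosts"

# Minimal playbook, equivalent to cc.yml in the example.
cat > "$workdir/cc.yml" <<EOF
- hosts: $cc_host
  roles:
    - { role: severalnines.clustercontrol }
EOF

# Print, in order, what would be run and on which machine.
print_plan() {
    echo "# on the Ansible control host"
    echo "ansible-galaxy install severalnines.clustercontrol"
    echo "ssh-keygen -t rsa"
    echo "ssh-copy-id $cc_host"
    echo "ansible-playbook -i $workdir/hosts $workdir/cc.yml"
    echo "# on the ClusterControl host ($cc_host)"
    for node in $db_nodes; do
        echo "ssh-copy-id $node"
    done
}

print_plan
```

Printing the plan first makes it easy to review the target addresses before any keys are copied. The cluster itself is still created from the ClusterControl UI as described above.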

Example Playbook

The simplest playbook would be (as shown in the above example):

    - hosts: clustercontrol-server
      roles:
        - { role: severalnines.clustercontrol }

If you would like to specify custom configuration values, create a file called vars/main.yml and include it in the playbook:

    - hosts: 192.168.10.15
      # load variables from a file relative to the playbook
      vars_files:
        - vars/main.yml
      roles:
        - { role: severalnines.clustercontrol }

Inside vars/main.yml:

    mysql_root_username: admin
    mysql_root_password: super-user-password
    cmon_mysql_password: super-cmon-password
    cmon_mysql_port: 3307

If you are running as a non-root user, ensure that the user can escalate to superuser via sudo. Example playbook for Ubuntu 12.04 with a sudo password enabled:

    - hosts: [email protected]
      become: yes
      become_user: root
      roles:
        - { role: severalnines.clustercontrol }

Then, run the playbook with the --ask-become-pass flag:

    $ ansible-playbook cc.yml --ask-become-pass

For more details on the role variables, check out the Ansible Galaxy or GitHub repository. Happy clustering!