How to Monitor Multiple MySQL Instances Running on the Same Machine – ClusterControl Tips & Tricks

Requires ClusterControl 1.6 or later. Applies to MySQL-based instances/clusters.

On some occasions, you might want to run multiple instances of MySQL on a single machine. You might want to give different users access to their own MySQL servers that they manage themselves, or you might want to test a new MySQL release while keeping an existing production setup undisturbed.

It is possible to use a different MySQL server binary per instance, or use the same binary for multiple instances (or a combination of the two approaches). For example, you might run a server from MySQL 5.6 and one from MySQL 5.7, to see how the different versions handle a certain workload. Or you might run multiple instances of the latest MySQL version, each managing a different set of databases.

Whether or not you use distinct server binaries, each instance that you run must be configured with unique values for several operating parameters. This eliminates the potential for conflict between instances. You can use MySQL Sandbox to create multiple MySQL instances. Alternatively, you can use mysqld_multi, which is available in MySQL, to start or stop any number of separate mysqld processes running on different TCP/IP ports and UNIX sockets.
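As a rough illustration of the uniqueness requirement above, the following Python sketch flags settings that two or more instances share. The `find_conflicts` helper, the `UNIQUE_KEYS` tuple and the sample settings are hypothetical names made up for this example. `datadir` is included on the assumption that each instance also needs its own data directory.

```python
from collections import defaultdict

# Settings assumed to need a distinct value per instance on one host.
# The text names ports and sockets; datadir is an added assumption.
UNIQUE_KEYS = ("port", "socket", "datadir")


def find_conflicts(instances):
    """Return {(key, value): [instance names]} for values used by 2+ instances."""
    seen = defaultdict(list)
    for name, settings in instances.items():
        for key in UNIQUE_KEYS:
            if key in settings:
                seen[(key, str(settings[key]))].append(name)
    return {kv: names for kv, names in seen.items() if len(names) > 1}


if __name__ == "__main__":
    # Hypothetical settings for illustration only; node3 reuses node2's port.
    sample = {
        "node1": {"port": 15024, "socket": "/tmp/mysql_sandbox15024.sock"},
        "node2": {"port": 15025, "socket": "/tmp/mysql_sandbox15025.sock"},
        "node3": {"port": 15025, "socket": "/tmp/mysql_sandbox15026.sock"},
    }
    for (key, value), names in find_conflicts(sample).items():
        print(f"conflict on {key}={value}: {', '.join(names)}")
```

An empty result means none of the checked settings collide. It does not prove the instances are otherwise correctly isolated.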

In this blog post, we’ll show you how to configure ClusterControl to monitor multiple MySQL instances running on one host.

ClusterControl Limitation

At the time of writing, ClusterControl does not support monitoring of multiple instances on one host per cluster/server group. It assumes the following best practices:

- Only one MySQL instance per host (physical server or virtual machine).

- MySQL data redundancy should be configured on N+1 servers.

- All MySQL instances are running with uniform configuration across the cluster/server group, e.g., listening port, error log, datadir, basedir, socket are identical.

With regard to the points mentioned above, ClusterControl assumes that in a cluster/server group:

- MySQL instances are configured uniformly across a cluster; same port, the same location of logs, base/data directory and other critical configurations.

- It monitors, manages and deploys only one MySQL instance per host.

- MySQL client must be installed on the host and available on the executable path for the corresponding OS user.

- Each MySQL instance is bound to an IP address reachable by the ClusterControl node.

- It keeps monitoring the host statistics, e.g., CPU/RAM/disk/network, for each MySQL instance individually. In an environment with multiple instances per host, you should expect redundant host statistics since it monitors the same host multiple times.
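The reachability assumption in the list above can be checked by hand from the ClusterControl node. A minimal Python sketch, assuming plain TCP access is all that needs verifying; `is_port_open` is a hypothetical helper for this post, not part of ClusterControl:

```python
import socket


def is_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host name and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name-resolution failures.
        return False
```

For the sandbox setup used later in this post, a call such as `is_port_open("master", 15024)` would test the first instance, assuming the hostname resolves from the ClusterControl node. A successful connection only shows that something is listening on that port, not that it is MySQL or that the credentials work.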

With the above assumptions, the following ClusterControl features do not work for a host with multiple instances:

Backup – Percona Xtrabackup does not support multiple instances per host and mysqldump executed by ClusterControl only connects to the default socket.

Process management – ClusterControl uses the standard ‘pgrep -f mysqld_safe’ to check if MySQL is running on that host. With multiple MySQL instances, this check is prone to false positives, because it matches as long as any one of the instances is running. As such, automatic recovery for node/cluster won’t work.

Configuration management – ClusterControl provisions the standard MySQL configuration directory. It usually resides under /etc/ and /etc/mysql.
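To see why a single process-name match is not enough on a multi-instance host, here is a hedged Python sketch that tells instances apart by their `--defaults-file` argument in `ps -ef` output. It assumes each instance is started by mysqld_safe with its own `--defaults-file`, as MySQL Sandbox does in the example later in this post. The function names are hypothetical and this is not how ClusterControl itself works.

```python
import re

# Capture the --defaults-file path on any mysqld_safe command line.
DEFAULTS_FILE_RE = re.compile(r"mysqld_safe\b.*?--defaults-file=(\S+)")


def instances_from_ps(ps_output):
    """Map each --defaults-file path to the PID of its mysqld_safe process.

    Expects `ps -ef` style lines, where the second column is the PID.
    """
    found = {}
    for line in ps_output.splitlines():
        match = DEFAULTS_FILE_RE.search(line)
        if not match:
            continue
        found[match.group(1)] = int(line.split()[1])
    return found


def missing_instances(expected_files, ps_output):
    """Return the expected defaults files with no matching mysqld_safe process."""
    running = instances_from_ps(ps_output)
    return [path for path in expected_files if path not in running]
```

With this approach, one stopped instance shows up in `missing_instances` even while the others keep running, which a bare `pgrep -f mysqld_safe` cannot report.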

Workaround

Monitoring multiple MySQL instances on a machine is still possible with ClusterControl with a simple workaround. Each MySQL instance must be treated as a single entity per server group.

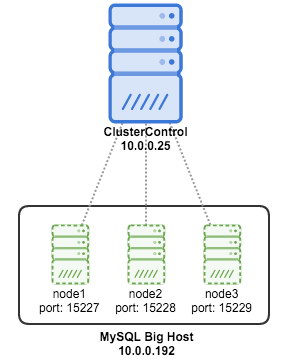

In this example, we have 3 MySQL instances on a single host, created with MySQL Sandbox using the following commands:

$ su - sandbox

$ make_multiple_sandbox mysql-5.7.23-linux-glibc2.12-x86_64.tar.gz

By default, MySQL Sandbox creates MySQL instances that listen on 127.0.0.1. It is necessary to configure each node so that it listens on all available IP addresses. Here is the summary of our MySQL instances on the host:

[sandbox@master multi_msb_mysql-5_7_23]$ cat default_connection.json

{

"node1":

{

"host": "master",

"port": "15024",

"socket": "/tmp/mysql_sandbox15024.sock",

"username": "msandbox@127.%",

"password": "msandbox"

}

,

"node2":

{

"host": "master",

"port": "15025",

"socket": "/tmp/mysql_sandbox15025.sock",

"username": "msandbox@127.%",

"password": "msandbox"

}

,

"node3":

{

"host": "master",

"port": "15026",

"socket": "/tmp/mysql_sandbox15026.sock",

"username": "msandbox@127.%",

"password": "msandbox"

}

}

The next step is to modify the configuration of the newly created instances. Open my.cnf for each of them and comment out the bind_address variable:

[sandbox@master multi_msb_mysql-5_7_23]$ ps -ef | grep mysqld_safe

sandbox 13086 1 0 08:58 pts/0 00:00:00 /bin/sh bin/mysqld_safe --defaults-file=/home/sandbox/sandboxes/multi_msb_mysql-5_7_23/node1/my.sandbox.cnf

sandbox 13805 1 0 08:58 pts/0 00:00:00 /bin/sh bin/mysqld_safe --defaults-file=/home/sandbox/sandboxes/multi_msb_mysql-5_7_23/node2/my.sandbox.cnf

sandbox 14065 1 0 08:58 pts/0 00:00:00 /bin/sh bin/mysqld_safe --defaults-file=/home/sandbox/sandboxes/multi_msb_mysql-5_7_23/node3/my.sandbox.cnf

[sandbox@master multi_msb_mysql-5_7_23]$ vi my.cnf

#bind_address = 127.0.0.1

Then install the MySQL client on your master node and restart all instances using the restart_all script.

[sandbox@master multi_msb_mysql-5_7_23]$ yum install mysql

[sandbox@master multi_msb_mysql-5_7_23]$ ./restart_all

# executing "stop" on /home/sandbox/sandboxes/multi_msb_mysql-5_7_23

executing "stop" on node 1

executing "stop" on node 2

executing "stop" on node 3

# executing "start" on /home/sandbox/sandboxes/multi_msb_mysql-5_7_23

executing "start" on node 1

. sandbox server started

executing "start" on node 2

. sandbox server started

executing "start" on node 3

. sandbox server started

From ClusterControl, we need to perform ‘Import’ for each instance as we need to isolate them in a different group to make it work.
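Before importing, it may be worth confirming that the bind_address change described earlier is in place for every instance. The Python sketch below works on the text of a configuration file. Both helper names are hypothetical, and the sketch assumes the option is written as either `bind_address` or `bind-address` at the start of a line.

```python
import re

# An active (uncommented) bind_address / bind-address line, with optional indent.
ACTIVE_BIND_RE = re.compile(r"^([ \t]*)(bind[-_]address[ \t]*=.*)$", re.MULTILINE)


def has_active_bind_address(config_text):
    """Return True if the config still contains an uncommented bind_address."""
    return ACTIVE_BIND_RE.search(config_text) is not None


def comment_out_bind_address(config_text):
    """Return config_text with every active bind_address line prefixed by '#'."""
    return ACTIVE_BIND_RE.sub(r"\1#\2", config_text)


if __name__ == "__main__":
    # Hypothetical config fragment for illustration only.
    sample = "[mysqld]\nport = 15024\nbind_address = 127.0.0.1\n"
    print(comment_out_bind_address(sample))
```

Lines that are already commented out are left untouched, so running the function twice gives the same result as running it once.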

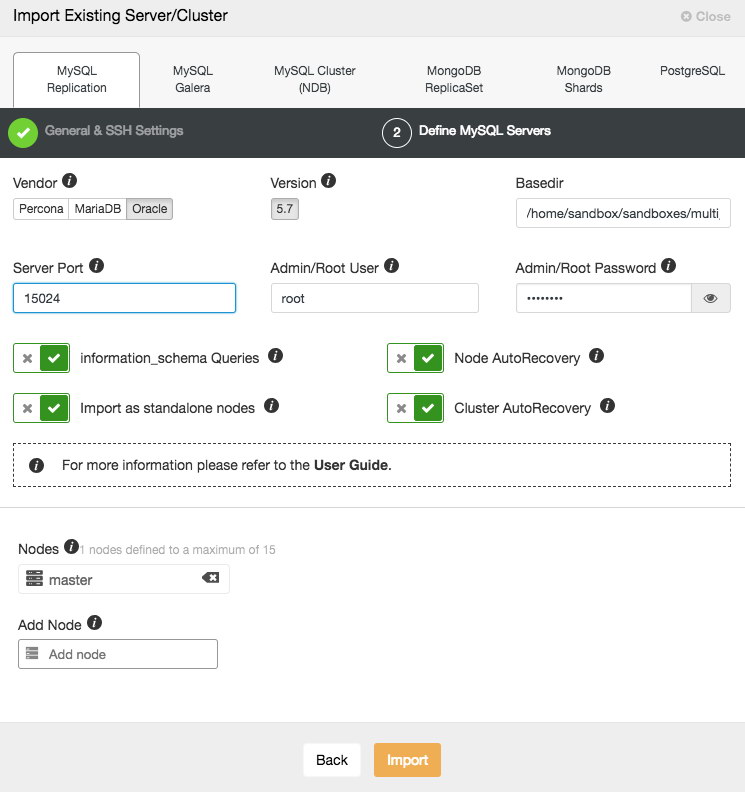

For node1, enter the following information in ClusterControl > Import:

Make sure to put proper ports (different for different instances) and host (same for all instances).

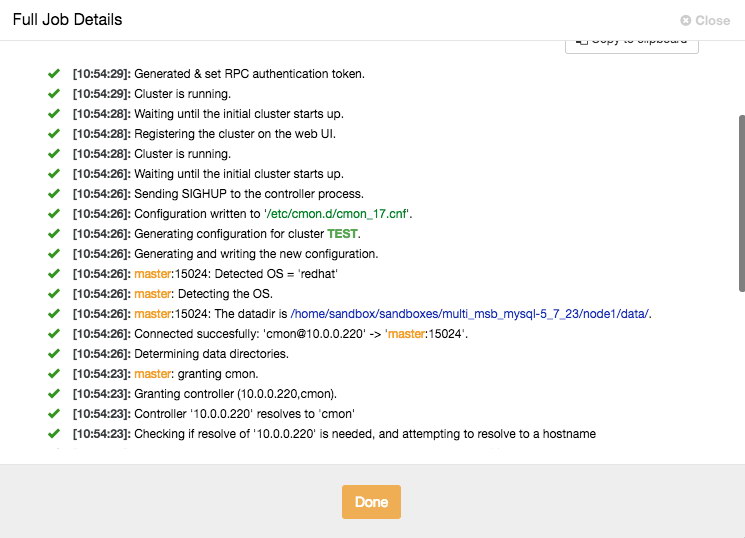
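Since every import uses the same host but a different port, it can help to list the host/port pairs up front. A small Python sketch, assuming the default_connection.json layout shown earlier in this post; `import_targets` is a hypothetical helper name:

```python
import json


def import_targets(connection_json):
    """Return sorted (node, host, port) tuples from default_connection.json text."""
    data = json.loads(connection_json)
    return sorted(
        (node, info["host"], int(info["port"])) for node, info in data.items()
    )


if __name__ == "__main__":
    # Trimmed copy of the sandbox summary; only the fields used here are kept.
    sample = """
    {
      "node1": {"host": "master", "port": "15024"},
      "node2": {"host": "master", "port": "15025"},
      "node3": {"host": "master", "port": "15026"}
    }
    """
    for node, host, port in import_targets(sample):
        print(f"{node}: import {host} on port {port}")
```

Each tuple corresponds to one separate import job in ClusterControl.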

You can monitor the progress by clicking on the Activity/Jobs icon in the top menu.

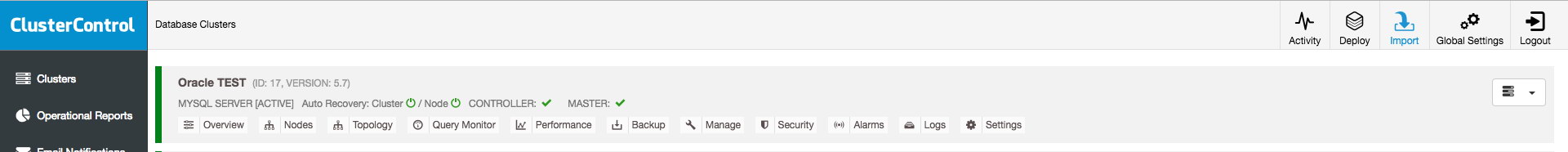

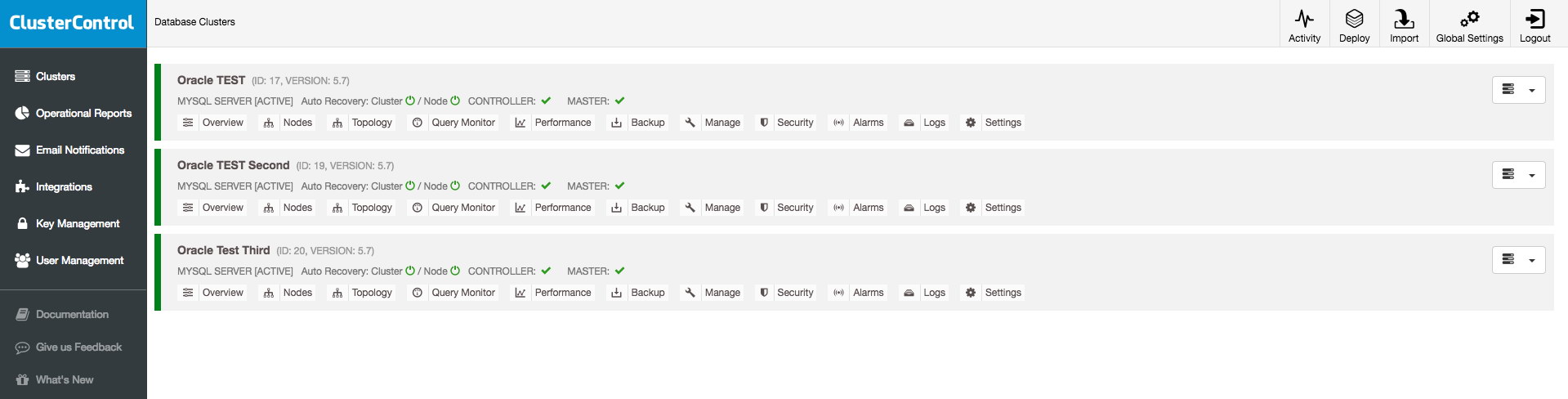

You will see node1 in the UI once ClusterControl finishes the job. Repeat the same steps to add the other two nodes, using ports 15025 and 15026. You should see something like the below once they are added:

There you go. We just added our existing MySQL instances into ClusterControl for monitoring. Happy monitoring!
