blog

How Load Balancing Works with DBaaS Setups

Database as a Service (DBaaS) takes away the pain of operating a database. So as a user, when you decide to use a DBaas from a cloud service provider, you would only know the database version, size of instance, storage, RAM, and whether the DBaaS supports high availability with multiple instances. You would usually not know about the behind the scenes of the DBaaS – how traffic comes from the application to the database instance, how that traffic is rerouted in case of a failure, how multiple instances are kept in sync to serve traffic, and so on.

In this blog, we will discuss one of the critical parts of a DBaaS, which is Load Balancing.

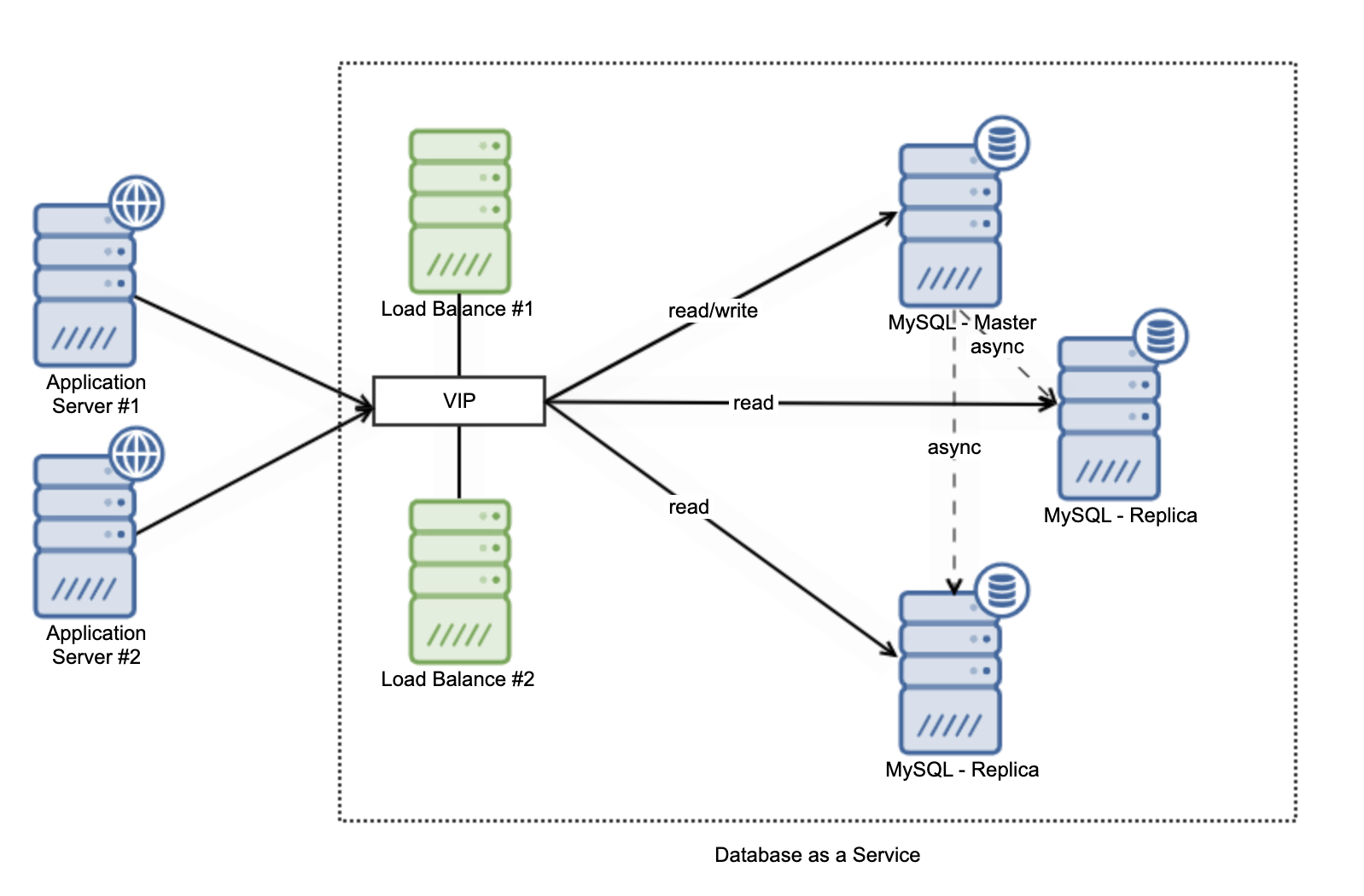

Load Balance on DBaaS Architecture

Load Balancing plays an important role in a Database as a Service. The load balancer stands in front of the database servers and presents one endpoint to applications. Application side needs only to know one single IP address to connect to the database. On the load balancers, queries are redirected to the database instances based on the load balancing algorithm, for instance round robin, least connection, or weight-based load balancing. Load balancers would usually have capabilities to detect non functioning databases, so the load balancer will take out the non functioning nodes from the load balance list.

Some more advanced features include detecting the degradation database performance, shunning servers that for instance are not synced with the latest data, and splitting read/write queries transparently.

On layer-4 load balancing, the splitting of connections between read and write queries is done using different ports while a layer-7 load balancer should have capabilities to automatically split read traffic from write traffic by inspecting incoming requests. So in a master-slave type setup, writes will only go to the master.

The Load Balanced Setup on DBaaS

ClusterControl supports building your own private Database as a Service. You can build and deploy a private DBaaS on premise or in the cloud, for instance in an AWS VPC.

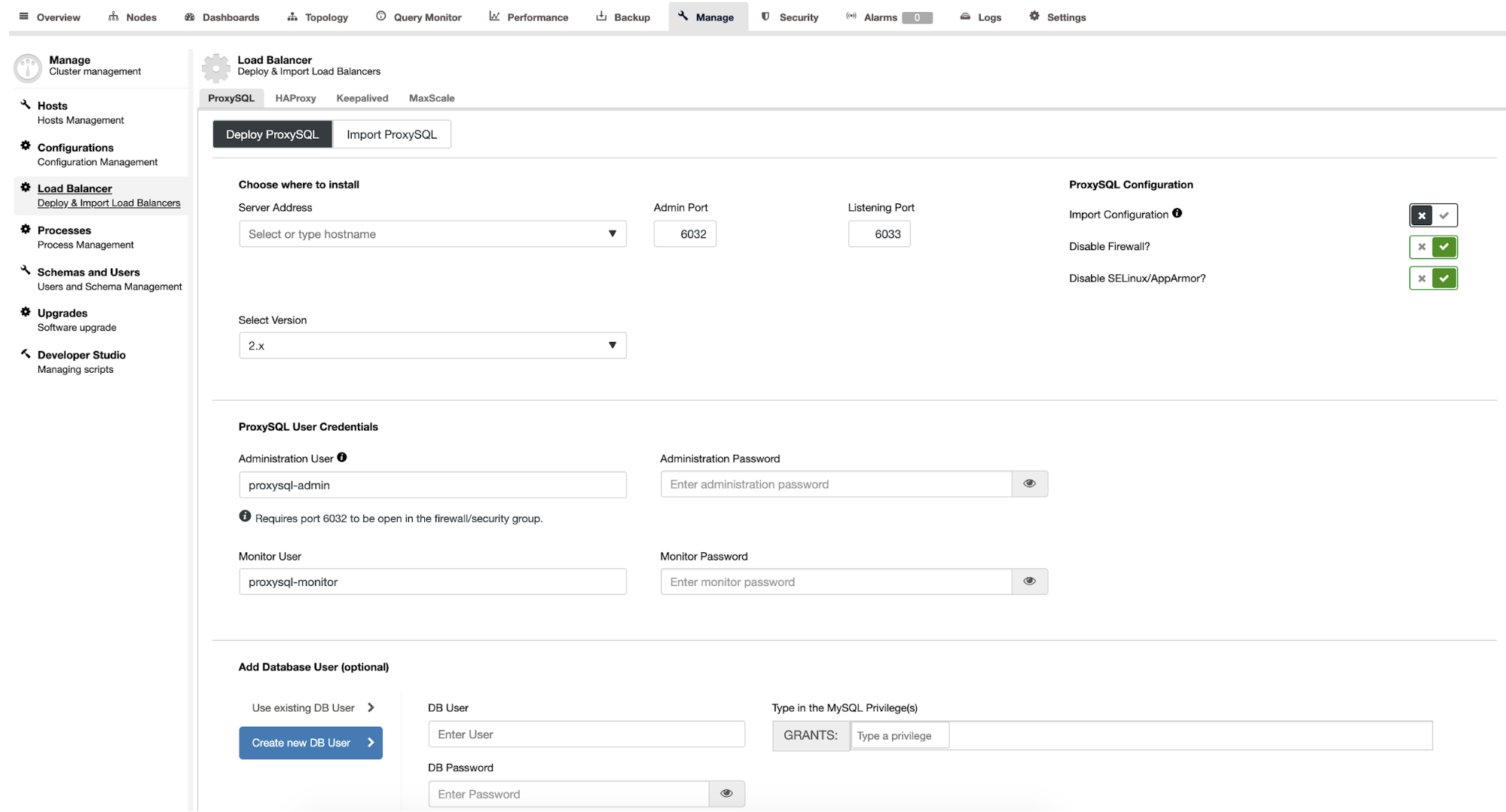

ClusterControl supports a few different load balancing options, including HAProxy, ProxySQL, and Maxscale. We will show you how to deploy load balancing using ProxySQL as a single endpoint for the database. You can go to Manage -> Load Balancer as shown below:

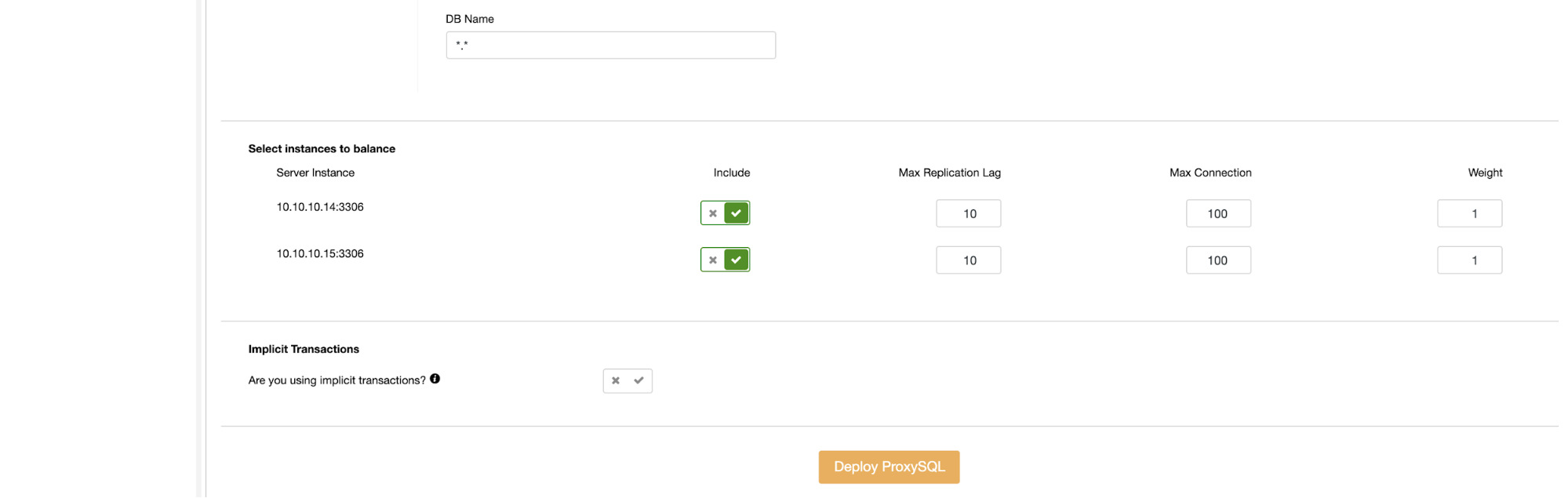

You can select on which node ProxySQL will be installed. If you want to have ProxySQL installed in a dedicated node, just type the IP Address on the list. Select the version of ProxySQL, password for ProxySQL administration and monitoring, and also enable the list of databases to be balanced. After that, you just need to click the Deploy button.

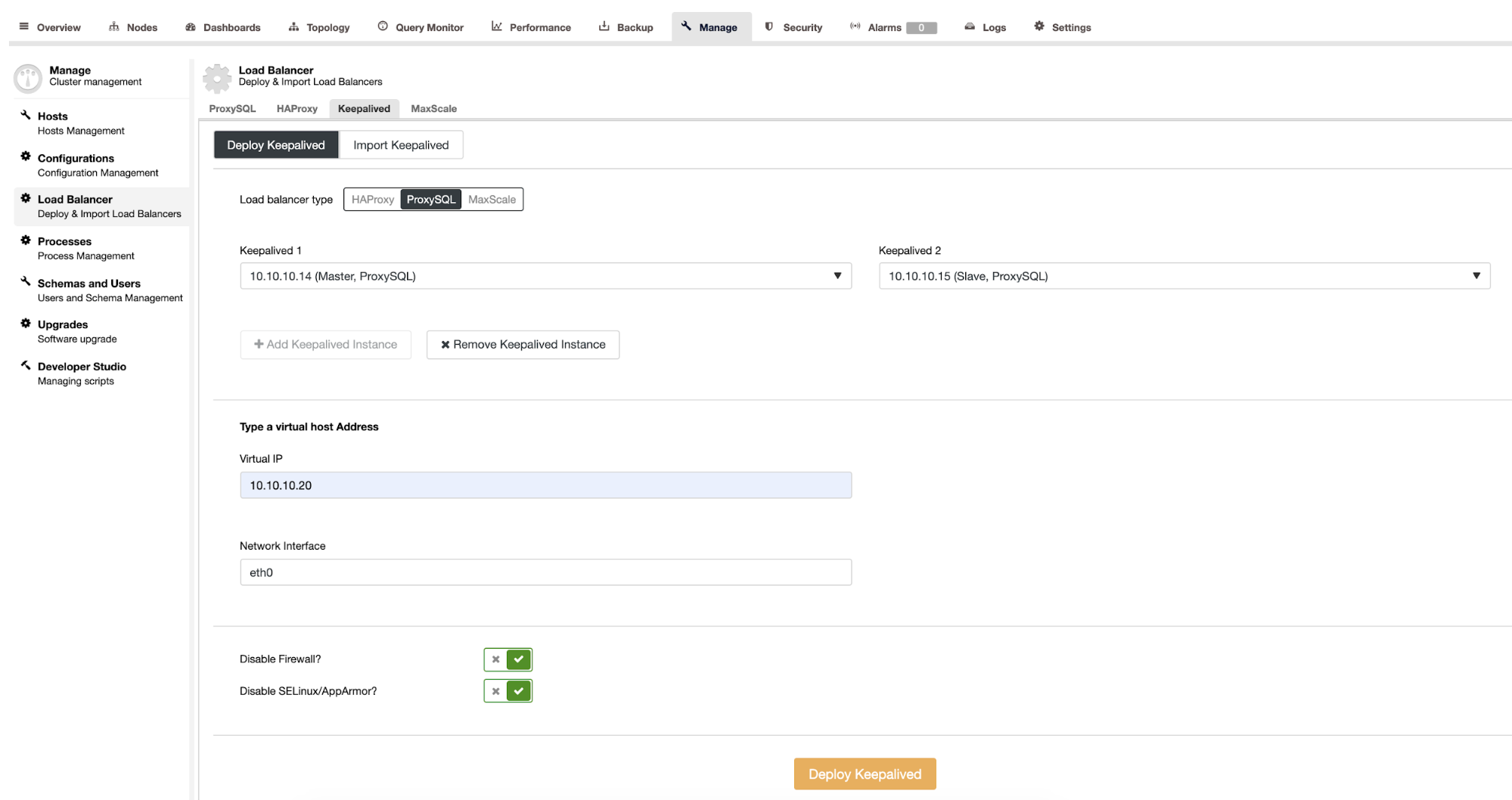

It is important that not only the database is highly available, but also the load balancer. You need to have at least 2 load balancers running and Virtual IP Address for the load balancer, this will act as a single connection from the application side. Keepalived service manages the Virtual IP Address, and takes care of floating it between two load balancers. ClusterControl also supports deployment of the Keepalived service, you just need to select the load balancer on which it needs to be installed, fill the Virtual IP Address and Network Interface.

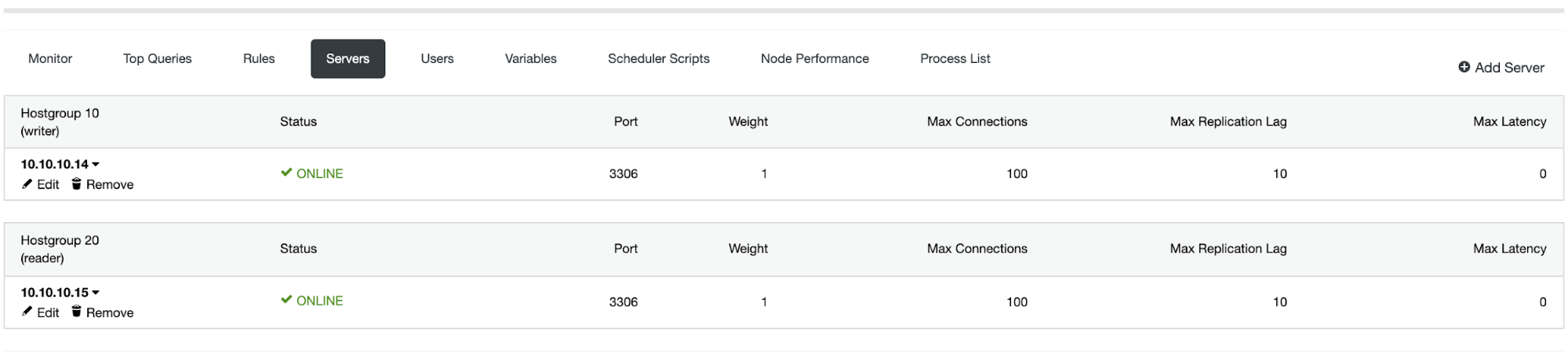

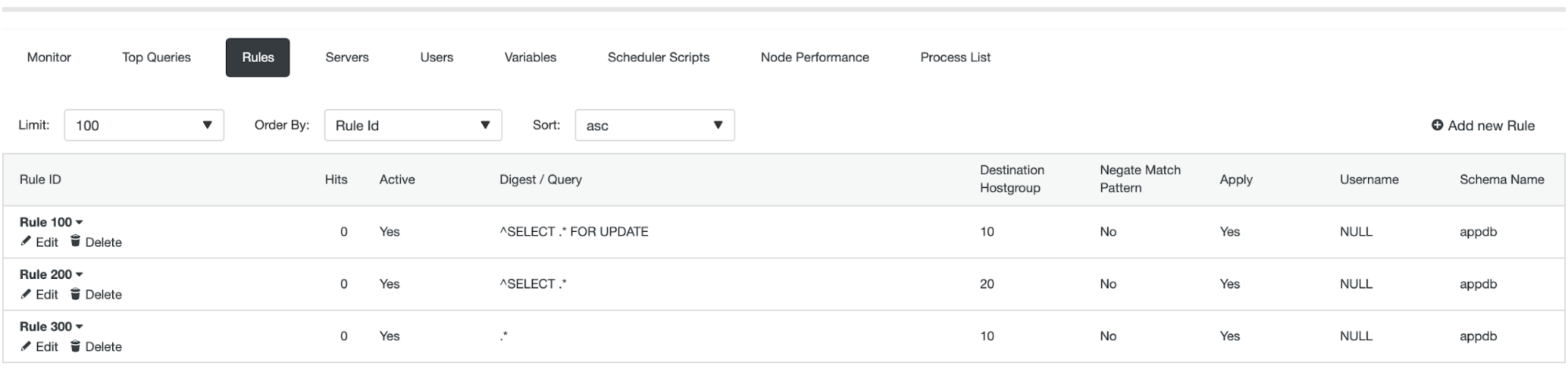

There are two roles on Keepalived, one acts as a Master, and the other acts as Backup. It will forward the traffic to the ProxySQL services. On the ProxySQL side, there are hostgroup (re. HG) and query rules concepts to split the read and write query pattern based on the rules as shown in below:

There are 2 hostgroup (HG10 & HG20), HG10 will forward the write queries to the master, and HG20 will forward the read queries to the slave database as shown in the Query Rules below:

From the application side, the database connection only knows the Virtual IP Address (in this case, the IP address is 10.10.10.20). The VIP will move to the backup ProxySQL instance in case of issues with the primary, so the traffic will automatically fail over and applications will still run with minimum impact.