blog

How to Deploy and Configure MaxScale for SQL Load Balancing with Read-Write Split

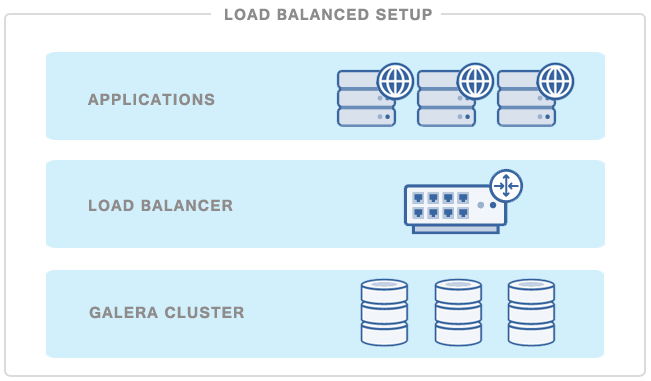

There are two models of load balancing: transport and application layer. HAProxy is a great TCP load balancer, but it’s lack of SQL awareness effectively limits its ability to address certain scaling issues in distributed database environments. In the open source world, there’s been a few SQL-aware load balancers, namely MySQL Proxy, ProxySQL and MaxScale, but they all seemed to be in beta status and unfit for production use. So we were pretty excited when the MariaDB team released a GA version of MaxScale earlier this year. In this blog, we’ll have a look at MaxScale and see how it compares with HAProxy.

Deployment

Installation is easy, at least on the latest LTS version of Ubuntu (Trusty, 14.04) which we used for our tests.

Add a public key:

$ apt-key adv --keyserver keyserver.ubuntu.com --recv-keys 8167EE24Add a MaxScale repository to one of *.list files for apt-get:

deb [arch=amd64] http://downloads.mariadb.com/software/MaxScale/maxscale/DEB trusty mainRun:

$ apt-get update && apt-get install maxscaleand you can enjoy your new software – proxy is installed into the /usr/local/skysql/maxscale directory.

Configuration

Once installed, we need to configure it. Along with installation comes an example configuration file, located in: /usr/local/skysql/maxscale/etc/MaxScale_template.cnf. It gives a nice introduction to the available options, and helps to setup the environment.

MaxScale uses a pluggable architecture with different plugins providing different features. In this post, we will concentrate on the routing part, and for now, leave out other interesting possibilities like query rewriting. MaxScale uses different types of services; monitors, services, listeners and filters.

For our tests we defined two types of routing services:

- ‘router=readwritesplit’, which provides read/write (RW) splitting,

- ‘router=readconnroute’, which provides round-robin-like (RR) kind of access.

Each service was accompanied by a listener, port 3307 for RW split and 3308 for RR service. With RR service, we relied on MaxScale’s monitoring of Galera nodes to route connections only to the nodes in a ‘Synced’ state.

[maxscale]

threads=4

[Galera Monitor]

type=monitor

module=galeramon

servers=server1,server2,server3

user=maxmon

passwd=maxpwd

monitor_interval=10000

disable_master_failback=1

[qla]

type=filter

module=qlafilter

options=/tmp/QueryLog

[fetch]

type=filter

module=regexfilter

match=fetch

replace=select

[RW]

type=service

router=readwritesplit

servers=server1,server2,server3

user=root

passwd=secretpass

max_slave_connections=100%

router_options=slave_selection_criteria=LEAST_CURRENT_OPERATIONS

[RR]

type=service

router=readconnroute

router_options=synced

servers=server1,server2,server3

user=root

passwd=secretpass

[Debug Interface]

type=service

router=debugcli

[CLI]

type=service

router=cli

[RWlistener]

type=listener

service=RW

protocol=MySQLClient

address=10.69.179.54

port=3307

[RRlistener]

type=listener

service=RR

protocol=MySQLClient

address=10.69.179.54

port=3308

[Debug Listener]

type=listener

service=Debug Interface

protocol=telnetd

address=127.0.0.1

port=4442

[CLI Listener]

type=listener

service=CLI

protocol=maxscaled

address=127.0.0.1

port=6603

[server1]

type=server

address=10.138.103.93

port=3306

protocol=MySQLBackend

[server2]

type=server

address=10.139.81.25

port=3306

protocol=MySQLBackend

[server3]

type=server

address=10.81.192.219

port=3306

protocol=MySQLBackendThere are couple of interesting bits in the configuration file. As you can see, we had to define user/password pairs several times. Those users are used to check the health of the MySQL nodes and to get access to the list of users defined in the system. For the sake of simplicity we used plain text passwords but it is possible to use hashed passwords for better security.

Finally, since we wanted to compare performance of MaxScale vs HAProxy, we used HAProxy installed from within ClusterControl in a default setup – configured similarly to MaxScale’s RR service.

How does MaxScale work with Galera Cluster?

So, let’s talk about how MaxScale sees the Galera Cluster. MaxScale provides an admin CLI which gives you access to some internal statistics. After the first login (user admin, password skysql), you can check available options by running the ‘help’ command. One of the very useful commands is ‘show servers’, which returns a health status of the cluster. Below is the example output of that command.

$ /usr/local/skysql/maxscale/bin/maxadmin -u admin

Password:

MaxScale> show servers

Server 0x219bac0 (server1)

Server: 10.138.103.93

Status: Slave, Synced, Running

Protocol: MySQLBackend

Port: 3306

Server Version: 5.6.22-72.0-56-log

Node Id: 2

Master Id: -1

Repl Depth: 0

Number of connections: 0

Current no. of conns: 0

Current no. of operations: 0

Server 0x20f7da0 (server2)

Server: 10.139.81.25

Status: Slave, Synced, Running

Protocol: MySQLBackend

Port: 3306

Server Version: 5.6.22-72.0-56-log

Node Id: 1

Master Id: -1

Repl Depth: 0

Number of connections: 0

Current no. of conns: 0

Current no. of operations: 0

Server 0x20f7c90 (server3)

Server: 10.81.192.219

Status: Master, Synced, Running

Protocol: MySQLBackend

Port: 3306

Server Version: 5.6.22-72.0-56-log

Node Id: 0

Master Id: -1

Repl Depth: 0

Number of connections: 0

Current no. of conns: 0

Current no. of operations: 0We are interested in the status of the nodes right now – as we can see, we have three nodes ‘Running’, all of them are ‘Synced’. Two were elected as ‘Slave’ and one as a ‘Master’. Those states are what we can use in the configuration file. For example, in RR service we defined the following variable:

router_options=syncedIt means that, at any given time, connections can be routed to any of the nodes, as long as they are in the ‘synced’ state (i.e. not serving as a donor or joining the cluster). On the other hand, the RW service was looking for ‘Slave’ and ‘Master’ states to route traffic accordingly. In case of a master failure, a new node is elected as a new master. Your application needs to reconnect though, MaxScale currently does not provide failover for currently open connections.

What’s worth noting, if you want to setup a RW split, you will need to set the max_slave_connections variable accordingly. By default MaxScale sets it to one and, as a result, only one slave is getting read connections. You can here use a fixed number (2, 5) or a percent of the slave pool (50%, 100%). As we wanted all of our slaves, no matter how many there are out there, to serve the traffic, we set this variable to 100%:

max_slave_connections=100%Another interesting bit is the ‘master’ failover part – when MaxScale detects that a node, elected as a master, is unreachable, it promotes one of the ‘slaves’ to the ‘master’ role. Then, by default, when old ‘master’ comes back online, it is immediately promoted to its old master role. As a result, writes may switch back and forth between different nodes should the ‘master’ start to flap. You can switch this default behavior by adding ‘disable_master_failback=1’ directive to the definition of the monitor service.

In case of any connectivity issues, it’s worth checking log files (by default located in the /usr/local/skysql/maxscale/log/ directory) and the kind of MySQL users MaxScale had detected. The latter can be done from the CLI, using the ‘show dbusers

MaxScale> show dbusers RW

Users table data

Hashtable: 0x2a0c900, size 52

No. of entries: 3

Average chain length: 0.5

Longest chain length: 7

User names: sbtest@%, maxmon@%, maxmon@%If you added some new users on the MySQL side, you can refresh MaxScale’s database by running reload dbusers

MaxScale> reload dbusers RWIn general, the CLI gives you some nice options to use. We’ll not go over all of them in this post, but we’d still like to mention some features:

- configuration (at least partially) can be changed on fly and reloaded (reload config command)

- server state can be set from the CLI, which enables a DBA to move writes to a different node in the cluster (set server … command)

- services can be disabled/enabled/restarted from the CLI

While in the CLI, help is easily reachable through the following commands:

help

help (for example help show) Stay tuned for part two of this post where we will cover the performance comparison between MaxScale’s different router services and HAProxy.