Considerations When Running ClusterControl in the Cloud

Cloud computing is booming, and the cost of renting a server, or even an entire datacenter, has been going down over the last couple of years. There are many cloud providers to choose from, and most of them offer pay-per-use business models. These benefits are tempting enterprises to move away from traditional on-premises bare-metal infrastructure to a more flexible cloud infrastructure, as the latter supports their business growth more effectively.

ClusterControl can be used to automate the management of databases in the cloud. In this blog post, we are going to look into a number of considerations that users tend to overlook when running ClusterControl in the cloud. Some of them can be seen as limitations of ClusterControl, due to the nature of the cloud environment itself.

IP Address and Networking

There are a couple of things to look out for:

- ClusterControl has limited capabilities in provisioning IPv6 hosts. When deploying a database server or cluster, please use IPv4 whenever possible.

- ClusterControl must be able to reach the database host directly, either by using the FQDN, public IP address or internal IP address.

- ClusterControl does not support the bastion host approach to access the monitored hosts. A bastion host is a common setup in the cloud, whose purpose is to provide access to a private network from an external network. This reduces the risk of penetration from the external network. You can think of it as a gateway between an inside network and an outside network.

- ClusterControl relies on a sticky hostname or IP address. Changing the IP address of a monitored host is not straightforward in ClusterControl. Try to use a dedicated IP address whenever possible. Otherwise, use a dedicated FQDN.
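As a small pre-flight aid for the IPv4 recommendation above, the following sketch checks whether a string looks like a dotted-quad IPv4 address before you enter it in ClusterControl. The function name `is_ipv4` and the sample addresses are illustrative assumptions, not part of ClusterControl.

```shell
# Return 0 if the argument looks like a dotted-quad IPv4 address, 1 otherwise.
# Hypothetical helper, written for POSIX sh.
is_ipv4() {
    # Reject empty input, non-digit/non-dot characters, and leading or trailing dots.
    case $1 in
        ''|*[!0-9.]*|.*|*.) return 1 ;;
    esac
    # Split the address on dots into positional parameters.
    old_ifs=$IFS
    IFS=.
    set -- $1
    IFS=$old_ifs
    # Exactly four octets are expected.
    [ $# -eq 4 ] || return 1
    for octet in "$@"; do
        # Each octet must be 1 to 3 digits long and no greater than 255.
        case $octet in
            ''|????*) return 1 ;;
        esac
        [ "$octet" -le 255 ] || return 1
    done
    return 0
}

# Example addresses (illustrative only).
for addr in 10.0.0.5 2001:db8::10; do
    if is_ipv4 "$addr"; then
        echo "$addr: IPv4"
    else
        echo "$addr: not IPv4"
    fi
done
```

This only validates the format of the address. It does not tell you whether ClusterControl can actually reach the host.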

Since version 1.4.1, ClusterControl is able to manage database instances that run with multiple network interfaces per host. This is a pretty common setup in the cloud, where a cloud instance is usually assigned an internal IP address which maps to the outside world via Network Address Translation (NAT) with a public IP address. In versions before 1.4.1, ClusterControl could only use the public IP address to monitor the database instances from outside, and it would deploy the database cluster based on the public IP address. ClusterControl now tackles this limitation by separating IP address connectivity per host into two types: management address and data address.

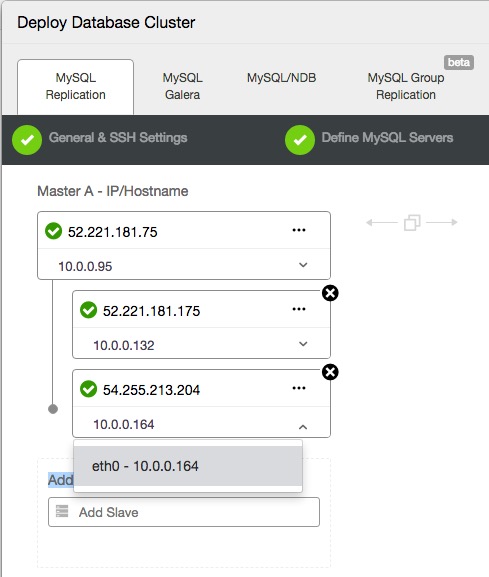

When deploying a new cluster or importing an existing cluster, ClusterControl will perform sanity checks once you have entered the IP address. During this check, ClusterControl will detect the operating system and collect the host stats and network interfaces of the host. You can then choose which IP addresses you would like to use as the management and data IP addresses. The management interface is used by ClusterControl operations, while the other interface is solely for database cluster communications.
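If you want to see in advance which addresses a host has to offer, a minimal sketch like the one below lists the IPv4 address of each interface. It parses the one-line output of `ip -o -4 addr show` from iproute2. The function name `list_ipv4_addresses` is made up for this example, and this is not how ClusterControl itself detects interfaces.

```shell
# Print "<interface> <address>" for every IPv4 address, given the output of
# `ip -o -4 addr show` on standard input. Hypothetical helper.
list_ipv4_addresses() {
    # In the one-line format, field 2 is the interface name, field 3 is the
    # address family ("inet") and field 4 is the address in CIDR notation.
    awk '$3 == "inet" { split($4, parts, "/"); print $2, parts[1] }'
}

# On a database host with iproute2 installed, run:
#   ip -o -4 addr show | list_ipv4_addresses
```

A host with an internal and a NATed public address will usually only list the internal one here, since the public address lives on the cloud provider's side of the NAT.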

The following is an example on how you can choose the data IP address during deployment:

Once the database cluster is deployed, you should see both IP addresses represented under the host, in the Nodes section. Keep in mind that having the ClusterControl host close to the monitored database server, e.g. in the same network, is always a better option.

Identity Management

ClusterControl only runs on Linux (see here for supported OS platforms). It uses SSH as the main communication channel to provision hosts. SSH offers simple implementation, strong authentication with secure encrypted data communication and is the number one choice used by administrators to control systems remotely. ClusterControl uses key-based SSH to connect to each of the monitored hosts, to perform management and monitoring tasks using a sudo user or root. In fact, the first and foremost step that you need to do after installing ClusterControl is to set up passwordless SSH authentication to all target database hosts (see the Getting Started page).

When launching a new Linux-based cloud instance, the instance usually comes with an SSH server installed. The cloud provider usually generates a new key pair, or associates an existing key pair with the created instance for the sudo user (or root user), so the person who holds the private key can authenticate and access the server using an SSH client. Some cloud providers offer password-based SSH and will generate a random password for the same purpose.

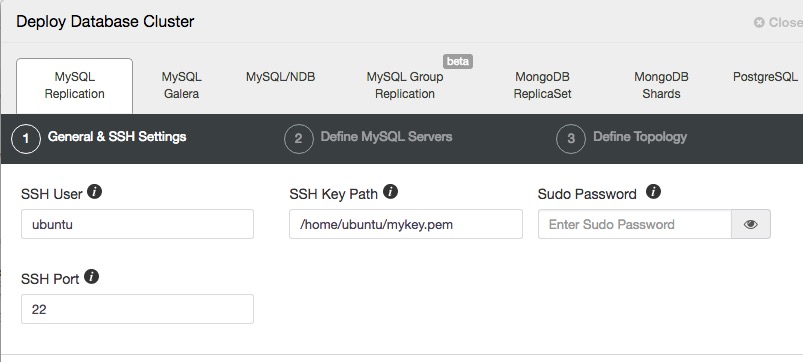

If you already have a private key associated with the instance, you can use the same key as the SSH identity in ClusterControl to provision other cloud instances directly. It’s not necessary for you to set up a passwordless SSH key for the new nodes, since all of them are associated with the very same private key. If you’re on AWS EC2 for example, upload the SSH key to the ClusterControl host:

$ scp mykey.pem [email protected]:~

Then assign the correct ownership and permissions on the ClusterControl host:

$ chown ubuntu:ubuntu /home/ubuntu/mykey.pem

$ chmod 400 /home/ubuntu/mykey.pem

Then, when ClusterControl asks you to specify the SSH user and its associated SSH key file, specify the following:

Otherwise, if you don’t have an SSH key, generate a new one and copy its public key to all database nodes that you would like to manage under ClusterControl:

$ whoami

ubuntu

$ ssh-keygen -t rsa # press Enter for all prompts

$ ssh-copy-id ubuntu@{target host} # repeat this for each target host

ClusterControl known limitations in this area:

- SSH key with passphrase is not supported.

- When using a user other than root, ClusterControl will default to connecting through SSH with a pseudo-terminal (tty). This usually causes the wtmp log file to grow quickly. See Setup wtmp Log Rotation for details.
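Because keys with a passphrase are not supported, it can help to check a key file before handing it to ClusterControl. The sketch below relies on `ssh-keygen -y`, which prints the public key of a private key file. With an empty passphrase supplied through `-P ""`, it only succeeds when the key is not encrypted. The function name `key_has_no_passphrase` is an assumption made for this example.

```shell
# Return 0 if the private key file can be read with an empty passphrase.
# A non-zero result means the key is passphrase-protected, or the file is
# missing or not a valid private key. Hypothetical helper.
key_has_no_passphrase() {
    ssh-keygen -y -P "" -f "$1" >/dev/null 2>&1
}

# Example, using the key file from earlier in this post:
#   if key_has_no_passphrase /home/ubuntu/mykey.pem; then
#       echo "key has no passphrase"
#   else
#       echo "key is passphrase-protected or unreadable"
#   fi
```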

Firewall and Security Groups

When running in the public cloud, you will be exposed to threats if your instances are open to the internet. It’s recommended that you use a firewall (or security groups, in cloud terminology) to open only the required ports. If ClusterControl is monitoring the cloud instances externally (e.g. from outside the security group that the databases are in), do open the relevant ports as listed in this documentation page. Otherwise, consider running ClusterControl in the same network as the databases. We recommend that users isolate their database infrastructure from the public Internet and whitelist only the known hosts or networks that need to connect to the database.
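On AWS, whitelisting a single known host could look like the sketch below. It only prints the AWS CLI commands so you can review them before running anything. The security group ID, the source address and the ports are placeholders chosen for illustration, and `sg_rules` is a made-up helper name. Take the actual port list from the ClusterControl documentation.

```shell
# Print (do not run) one AWS CLI command per port, each allowing inbound TCP
# traffic from a single source CIDR. Hypothetical helper.
# Usage: sg_rules <group-id> <source-cidr> <port>...
sg_rules() {
    group_id=$1
    source_cidr=$2
    shift 2
    for port in "$@"; do
        echo "aws ec2 authorize-security-group-ingress --group-id $group_id --protocol tcp --port $port --cidr $source_cidr"
    done
}

# Placeholder values: a made-up security group, a ClusterControl host at
# 203.0.113.10, and ports 22 (SSH) and 3306 (MySQL) as examples only.
sg_rules sg-0123456789abcdef0 203.0.113.10/32 22 3306
```

Using a /32 source keeps each rule limited to exactly one host, which matches the advice to whitelist only known hosts.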

If you use s9s, the ClusterControl command line client, you have to expose port 9501 as well. This is the ClusterControl backend (CMON) RPC interface, which runs on TLS for the client to communicate with. By default, this service is configured to listen on the localhost interface only. To configure this, take a look at this documentation. The s9s CLI tool allows you to control your database cluster remotely from the command line, from anywhere, including your local workstation.

Instance Types and Specifications

As mentioned in the Hardware Requirements page, the minimum server requirement for ClusterControl is 2GB of RAM and 2 CPU cores. However, you can start with the smallest, lowest cost instance and resize it when the host requires more resources.

ClusterControl relies on a MySQL database to store and retrieve its monitoring data. During the ClusterControl installation, the installer script (install-cc) will set the MySQL configuration options according to the resources it sees at that stage, especially innodb_buffer_pool_size. If you upgrade the instance to a higher spec, do not forget to adjust this option accordingly inside my.cnf and restart the MySQL service. A simple rule of thumb would be 50% of the total available memory in the host.
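The 50% rule of thumb is easy to compute after a resize. The following sketch reads the total memory from /proc/meminfo on Linux and prints a suggested value in megabytes. The function name `suggest_buffer_pool_mb` is an assumption made for this example, and the result is only a starting point to copy into my.cnf by hand.

```shell
# Print 50% of the given total memory (in kB) as a whole number of megabytes.
# Hypothetical helper.
suggest_buffer_pool_mb() {
    total_kb=$1
    echo $(( total_kb / 2 / 1024 ))
}

# MemTotal in /proc/meminfo is reported in kB on Linux.
if [ -r /proc/meminfo ]; then
    mem_kb=$(awk '/^MemTotal:/ { print $2 }' /proc/meminfo)
    echo "innodb_buffer_pool_size=$(suggest_buffer_pool_mb "$mem_kb")M"
fi
```

For example, a host reporting 4194304 kB (4 GiB) of total memory gives a suggestion of 2048M.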

Take note that the following will affect the performance of the ClusterControl instance:

- The number of hosts it monitors

- MySQL server where ‘cmon’ and ‘dcps’ databases reside

- Granularity of the sampling

Migrating a cloud instance from one site to another, either across availability zones or across geographical regions, is pretty straightforward. You can simply take a snapshot of the instance and fire up a new instance based on that snapshot in another region. However, ClusterControl relies on proper IP address configuration, so it will not work correctly if the database is started as a new instance with a different IP address. To manage the new instance, it is advisable to re-import the database node into ClusterControl.