Challenges of multi-cloud architectures and how to address them

The cloud has revolutionized the way businesses operate, and multi-cloud is a concept that has been increasing in popularity over the last few years. With its ability to support geo-distributed topologies and provide robust disaster recovery options, it’s no wonder more and more organizations are adopting this approach.

There are many advantages to using this kind of environment, like scalability, flexibility, and high availability. However, with the benefits of multi-cloud come a number of challenges that also must be addressed.

In this blog, we will discuss some of the challenges of multi-cloud and how they can be addressed.

Management

One of the most significant challenges of multi-cloud is the complexity of managing and integrating different cloud environments. Each cloud provider has its own tools, APIs, and security protocols, which makes it difficult to standardize and automate processes across platforms. Providers also differ in capabilities and limitations, so ensuring consistency and continuity of service is challenging. This complexity can increase operational costs and make it harder to identify and troubleshoot issues.

One way to address these challenges is to use a tool that centralizes management tasks, such as ClusterControl.

Vendor lock-in

Another challenge of multi-cloud is vendor lock-in. Even if an organization does not depend on a single provider, relying on each provider’s own services can still limit its ability to switch between them easily. This can be a significant problem if the organization wishes to change providers or if a provider goes out of business. It can also be challenging to move workloads between providers, which limits the organization’s ability to optimize costs and performance.

This can be mitigated by using open-source technologies and standardizing the cloud environments.

Connectivity

If you are using different cloud providers, all communication between your systems must be encrypted, and you must restrict traffic to known sources to reduce the risk of unauthorized access to your network. A VPN, SSH, firewall rules, or a combination of them are good solutions for this challenge.
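
As a minimal sketch of the "known sources only" idea, the Python snippet below checks a source address against an allowlist. The networks and the `is_allowed` helper are hypothetical and exist only for illustration; in practice this rule would live in your firewall or security group configuration rather than in application code.

```python
import ipaddress

# Hypothetical allowlist: a VPN subnet and a single bastion host.
# These addresses are placeholders, not values from any real deployment.
ALLOWED_SOURCES = [
    ipaddress.ip_network("10.8.0.0/24"),
    ipaddress.ip_network("203.0.113.10/32"),
]


def is_allowed(source_ip):
    """Return True if source_ip falls inside any allowed network."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in ALLOWED_SOURCES)
```

Keeping one allowlist and applying it to every provider's firewall rules avoids maintaining a different set of sources in each cloud.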

Security

Security is another significant challenge of a multi-cloud deployment model. Each cloud provider has its own security protocols, and ensuring that all cloud environments are secure and compliant with relevant regulations can be difficult. Multi-cloud environments can also be more complex to secure, as they often involve multiple networks and multiple points of entry. Some of the most common security checks in a multi-cloud environment are:

- Controlling database access: You must limit remote access to only the people who need it and to as few sources as possible. With multiple clouds, this gets more complex. Using a VPN is helpful here, but other options, like SSH tunneling or firewall rules, exist.

- Secure installations and configurations: Not all cloud providers have the same default configuration or security features, so you should follow a common standard or configuration guide to avoid ending up with a different setup in each cloud, which is hard to maintain.

- Auditing and encryption: These are important security features, and the key here is to avoid vendor-specific tools to prevent vendor lock-in. One way is to centralize the audit information and encrypted files on a separate platform.

- Keep your OS and database up-to-date: Database and operating system vendors regularly release fixes and improvements to address or avoid vulnerabilities. It is important to keep your systems as up-to-date as possible by applying patches and security upgrades, which can be complicated in a multi-cloud environment if you have to work with different management consoles and different ways of performing this task. If you have SSH access to the database nodes, you can create a script to handle this task, but a UI tool would offer a better user experience.
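
The script mentioned in the last point could look roughly like the Python sketch below. It only builds the `ssh` commands for each node (a dry run) instead of executing them. The host names, the `admin` user, and the inventory layout are assumptions made for illustration; the package commands are the standard `apt-get` and `dnf` upgrade invocations.

```python
import shlex

# Hypothetical inventory: host name -> package manager used on that node.
NODES = {
    "db1.aws.example.com": "apt",
    "db2.gcp.example.com": "dnf",
}

# Upgrade command to run remotely for each package manager.
UPGRADE_COMMANDS = {
    "apt": "sudo apt-get update && sudo apt-get -y upgrade",
    "dnf": "sudo dnf -y upgrade",
}


def build_patch_command(host, family, user="admin"):
    """Return the ssh argv that would apply pending updates on host."""
    return ["ssh", f"{user}@{host}", UPGRADE_COMMANDS[family]]


def plan(nodes):
    """Build one ssh command per node, regardless of cloud provider."""
    return [build_patch_command(host, family) for host, family in nodes.items()]


if __name__ == "__main__":
    # Dry run: print the commands. To execute one, pass its argv to
    # subprocess.run(argv, check=True) after reviewing the plan.
    for argv in plan(NODES):
        print(shlex.join(argv))
```

Printing the plan first makes it easy to review what would run on each node before applying anything.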

Monitoring

Once you have your environment up and running, you will need to know what is happening at all times. Monitoring is a must to confirm that everything is working as expected and to tell you when something needs to change. For each database technology, there are several things to monitor. Some of these are specific to the database engine, vendor, or even the particular version that you are using.

If you are using a multi-cloud environment, you will need to access different monitoring tools, which could be time-consuming, so having a centralized system to monitor various cloud providers and technologies would be helpful here.

High Availability and scalability

There are different high availability levels depending on the required RPO (Recovery Point Objective) and RTO (Recovery Time Objective), and depending on the budget.

One of the responsibilities of a cloud provider is to give you a way to achieve high availability, so you should have the option to enable this in the management console, but what happens in the case of multi-cloud? In that case, you have to take care of the connectivity, configuration, management, and cost of each cloud provider’s solution, so there are many potential points of failure.

The best way to handle this is to have a system to centralize the high availability and scalability management tasks.

Split brain

With multi-cloud usage, split brain becomes a more critical concern because the risk of it happening increases in this scenario. You must prevent a split brain to avoid potential data loss or data inconsistency, which could be a big problem for the business.

Split brain occurs when the application is allowed to write to more than one node simultaneously and the cluster doesn’t handle it correctly. In this case, you will have different information on each node, which generates data inconsistency in the cluster. Fixing this issue can be demanding, as you must merge the data, which is sometimes impossible. To avoid this, you can deploy an odd number of nodes or add an arbiter node, so that after a network partition only the side holding a majority keeps accepting writes.
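
The reasoning behind the odd number of nodes can be sketched with a simple majority rule. The function names below are hypothetical, and real clustering software applies more elaborate checks, but the arithmetic is the same.

```python
def quorum_size(total_nodes):
    """Smallest number of nodes that forms a strict majority."""
    return total_nodes // 2 + 1


def may_accept_writes(reachable_nodes, total_nodes):
    """A partition may accept writes only if it can see a majority."""
    return reachable_nodes >= quorum_size(total_nodes)


# Three nodes split 2/1: only the two-node side keeps writing.
print(may_accept_writes(2, 3), may_accept_writes(1, 3))

# Four nodes split 2/2: neither side has a majority, so no side can
# continue safely. Adding an arbiter (five voters) breaks the tie.
print(may_accept_writes(2, 4), may_accept_writes(3, 5))
```

With an even number of voters, a clean split leaves no majority on either side, which is why an arbiter or an extra node is recommended.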

Backups

Data is the most valuable asset in a company, so you should have a Disaster Recovery Plan (DRP) to prevent data loss in the event of an accident or hardware failure. A backup is the simplest form of DR. It might not always be enough to guarantee an acceptable Recovery Point Objective (RPO), but it is a good first approach. Also, you should define a Recovery Time Objective (RTO) according to your company’s requirements.
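
To see why backups alone might not meet an RPO, consider this rough check. It assumes backups are the only protection, so the worst-case data loss is approximately the interval between two backups (the time a backup takes to complete is ignored). The `meets_rpo` helper is hypothetical.

```python
from datetime import timedelta


def meets_rpo(backup_interval, rpo):
    """With backups alone, worst-case loss is about one backup interval."""
    return backup_interval <= rpo


# A daily backup cannot satisfy a one-hour RPO; an hourly one can
# satisfy a four-hour RPO.
print(meets_rpo(timedelta(hours=24), timedelta(hours=1)))
print(meets_rpo(timedelta(hours=1), timedelta(hours=4)))
```

When the check fails, you need either more frequent backups or an additional mechanism such as replication.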

The best practice for creating a backup policy is storing the backup files in three different places: one stored locally on the database server (for faster recovery), another in a centralized backup server, and the last in the cloud. You can improve this by using full, incremental, and differential backups. All cloud providers give you a way to take backups, but generally, they don’t consider these best practices, so it is up to you to improve the default backup policy to reach the correct RTO value or make it as safe as possible.
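
Combining backup types affects how many files a restore needs, which in turn affects your RTO. The sketch below assumes the common definitions: a differential backup holds all changes since the last full backup, and an incremental backup holds the changes since the previous backup of any type. Some tools define these differently, and the backup names and the `restore_chain` helper are hypothetical.

```python
def restore_chain(backups):
    """Return the backup names needed to restore to the latest state.

    backups is a chronological list of (name, kind) tuples, where kind
    is "full", "diff", or "incr". Raises ValueError if there is no full
    backup to start from.
    """
    # Start from the most recent full backup.
    last_full = max(i for i, (_, kind) in enumerate(backups) if kind == "full")
    chain = [backups[last_full]]
    tail = backups[last_full + 1:]

    # The latest differential replaces everything between it and the full.
    diff_positions = [i for i, (_, kind) in enumerate(tail) if kind == "diff"]
    if diff_positions:
        chain.append(tail[diff_positions[-1]])
        tail = tail[diff_positions[-1] + 1:]

    # Every incremental taken after that point must be applied in order.
    chain.extend(b for b in tail if b[1] == "incr")
    return [name for name, _ in chain]


week = [
    ("sun_full", "full"),
    ("mon_incr", "incr"),
    ("tue_incr", "incr"),
    ("wed_diff", "diff"),
    ("thu_incr", "incr"),
]
print(restore_chain(week))
```

A shorter chain generally means a faster restore, so periodic differential backups help keep recovery time down.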

ClusterControl

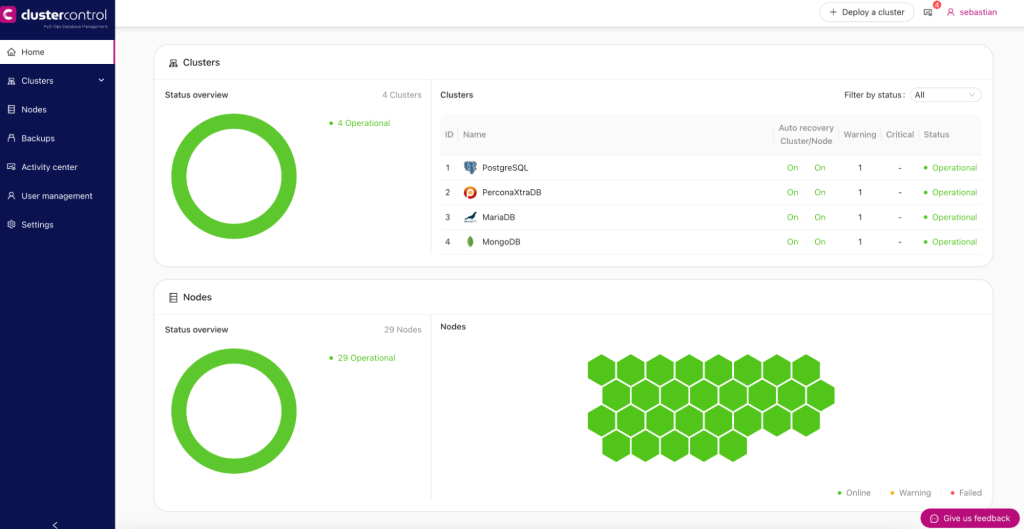

Organizations should consider adopting a multi-cloud management platform that can automate and standardize processes across multiple cloud environments to address these challenges. ClusterControl provides a single point of control, making managing and monitoring numerous cloud environments easier.

ClusterControl is a management and monitoring system that helps to deploy, manage, monitor, and scale your databases from a user-friendly interface. It has support for the leading open-source database technologies. You can automate many database tasks you must regularly perform, like adding new nodes, running backups and restores, and more.

So, let’s see how it can help you with the aforementioned challenges.

Management

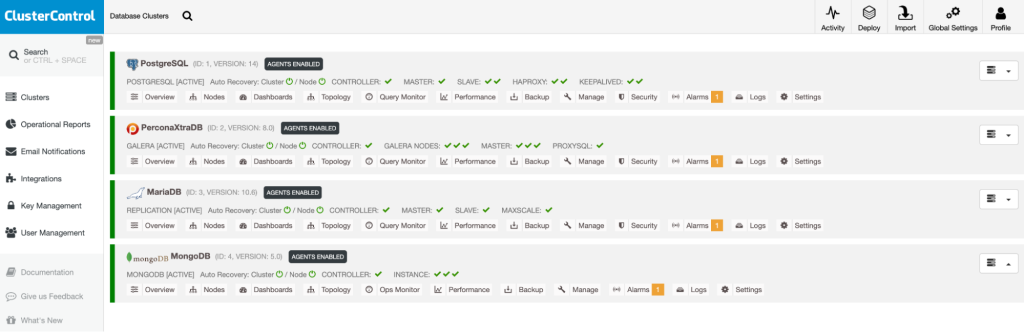

In ClusterControl, you will find all your database clusters on the same platform, no matter where they are deployed or what technology you use. It supports database vendors like Percona, MariaDB, PostgreSQL, and more.

Vendor lock-in

As ClusterControl deploys and uses open-source technology, you don’t need to worry about vendor lock-in. Everything that you run/deploy with ClusterControl, like backups or load balancers, is open-source software, so you can install it wherever you want, and you don’t need to use any specific cloud provider.

Connectivity

Although ClusterControl can’t configure access to your database nodes beforehand, it uses SSH connections with SSH keys to manage your database clusters securely. So, even in a multi-cloud environment, connections and traffic between ClusterControl and the cloud providers will be safe.

Security

Regarding security, ClusterControl can manage your database users so you can restrict access to your cluster from some specific sources. Also, you can create custom templates for deploying your databases, so you don’t need to depend on the cloud provider configurations.

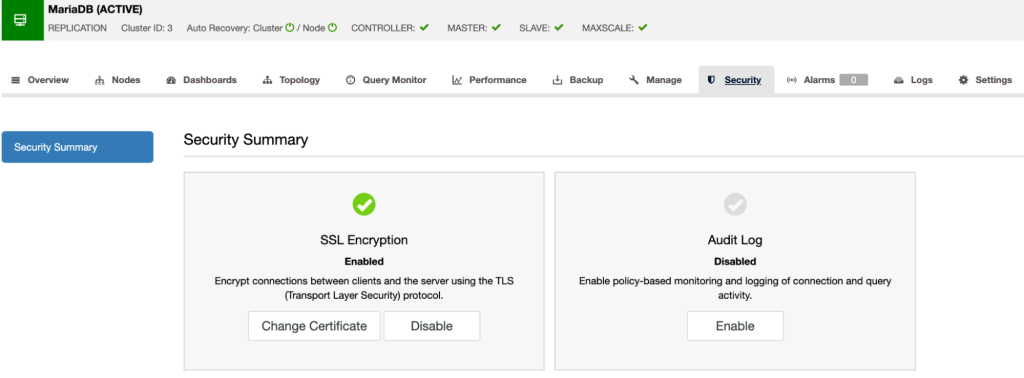

Auditing and encryption features are available for different database technologies. You can enable and use them from the same platform instead of relying on the cloud provider’s equivalents, where they exist.

Finally, minor upgrades are also available from the ClusterControl UI, which makes this task much easier. For major upgrades, the recommendation is to avoid running them in place. Instead, you can deploy a new cluster on the new version with ClusterControl and, after testing it, change the endpoint in your application. This will reduce the impact of possible issues after upgrading.

Monitoring

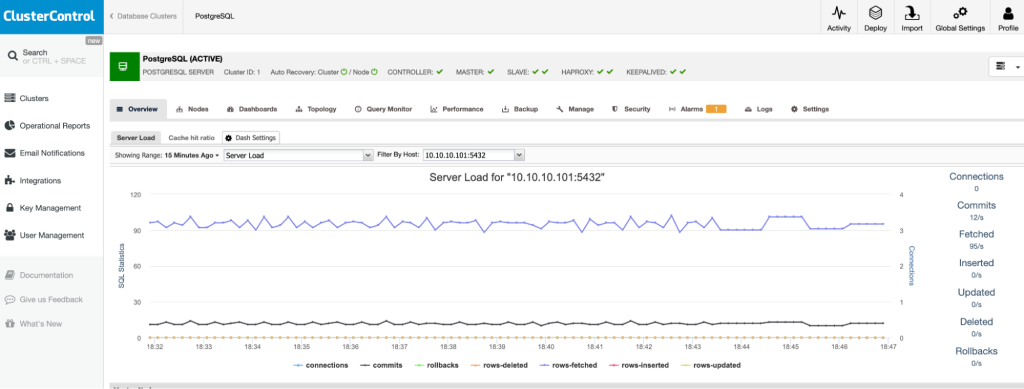

ClusterControl allows you to monitor your servers in real-time with predefined dashboards to analyze some of the most common metrics. It allows you to customize the graphs available in the cluster, and you can enable agent-based monitoring to generate more detailed dashboards. You can also create alerts that inform you of events in your cluster or integrate with different services such as PagerDuty or Slack.

High Availability and scalability

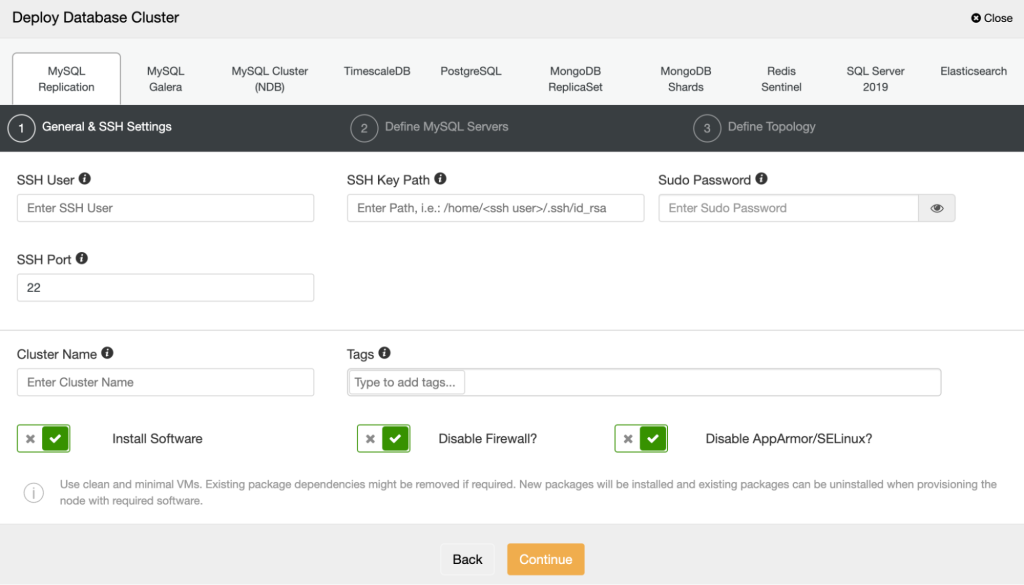

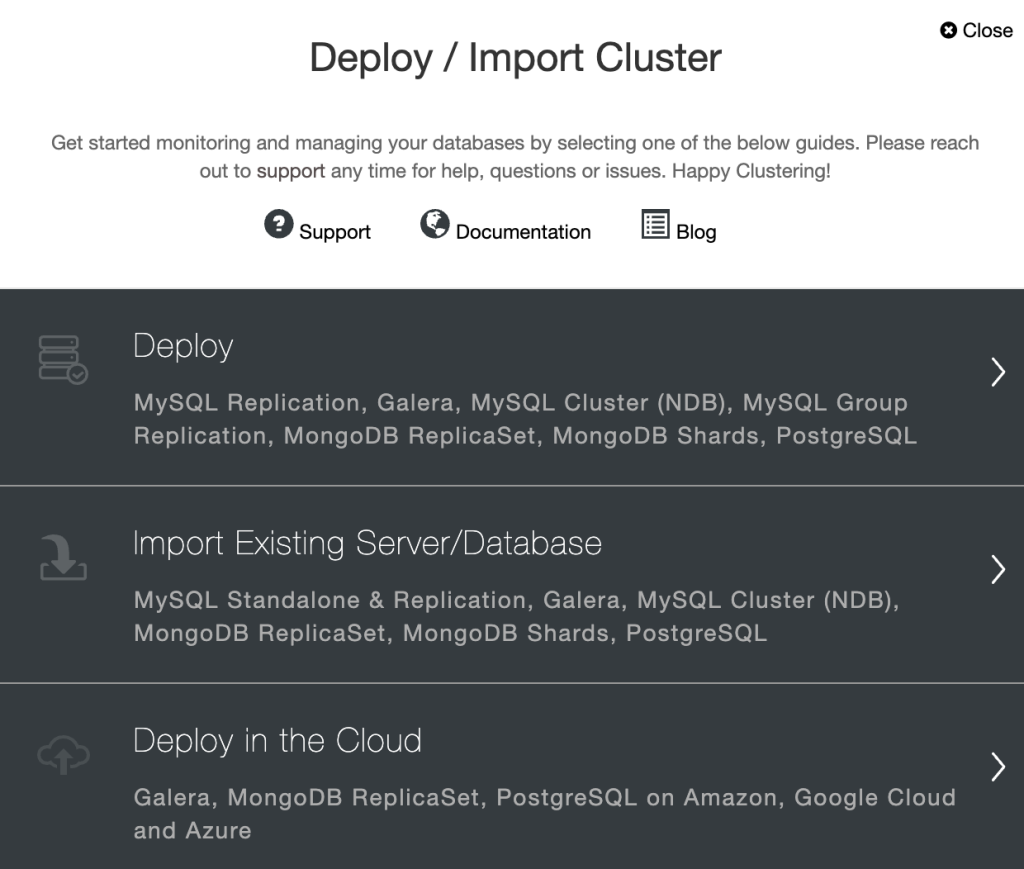

With ClusterControl, you can create your database cluster using the deploy feature. Simply select the option “Deploy” and follow the instructions that appear.

Note that if you already have a cluster running on server instances you created in the cloud, you must select “Import Existing Server/Database” instead. If you want ClusterControl to create the instances for you, you can use the “Deploy in the Cloud” feature, which uses an AWS, Google Cloud, or Azure integration to create the VMs, so you don’t need to access the cloud provider management console at all.

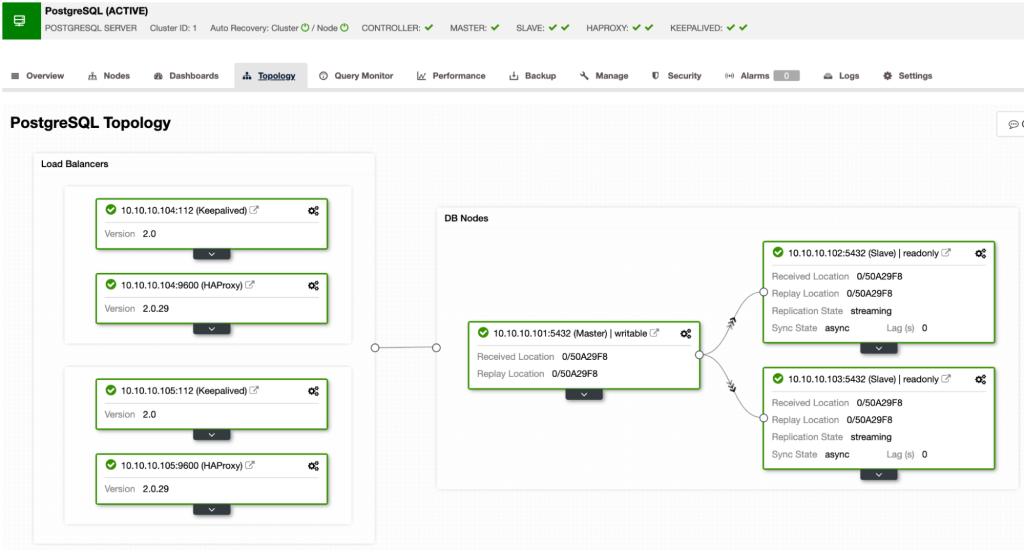

After you have the cluster managed by ClusterControl, you can easily add new database nodes, load balancers, or even run failover tasks from the same system and change or improve your database topology as you wish.

Split brain

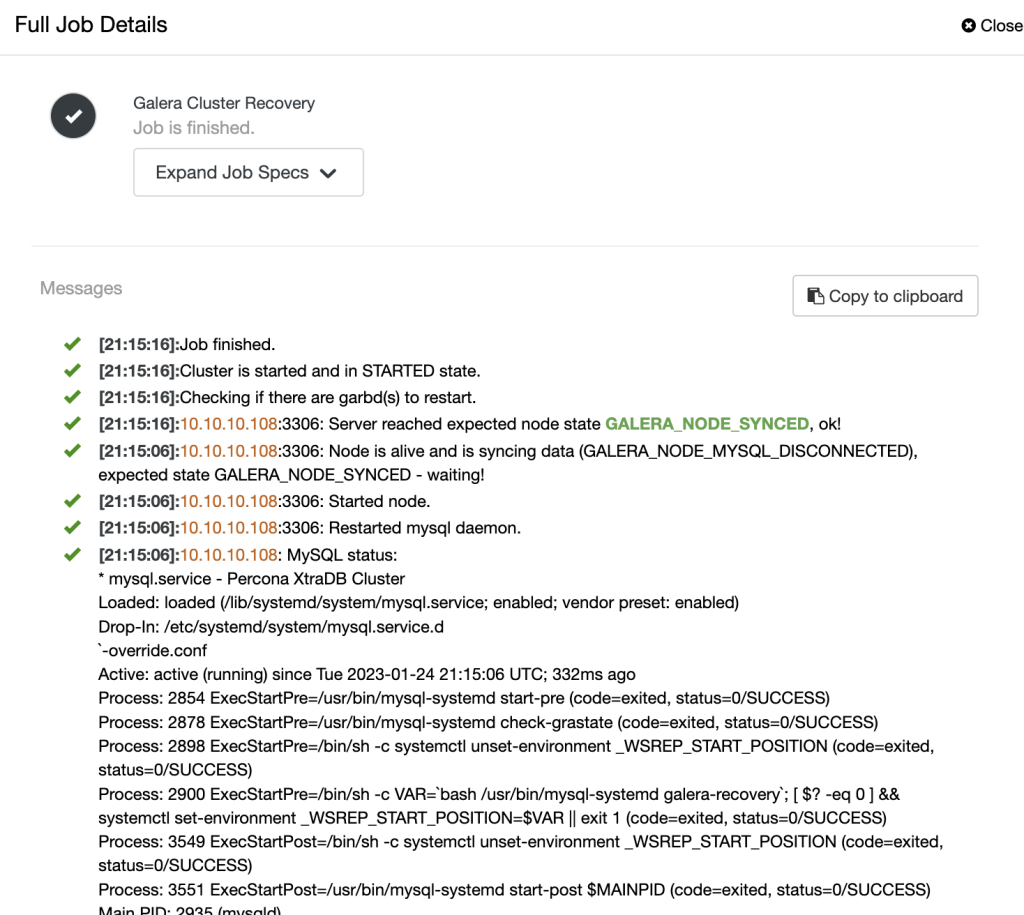

One of the most important features of ClusterControl is Auto-Recovery. It automatically recovers your cluster in case of failure, takes the necessary actions to avoid a split brain, and lets you know through notifications and alarms if one happens.

Backups

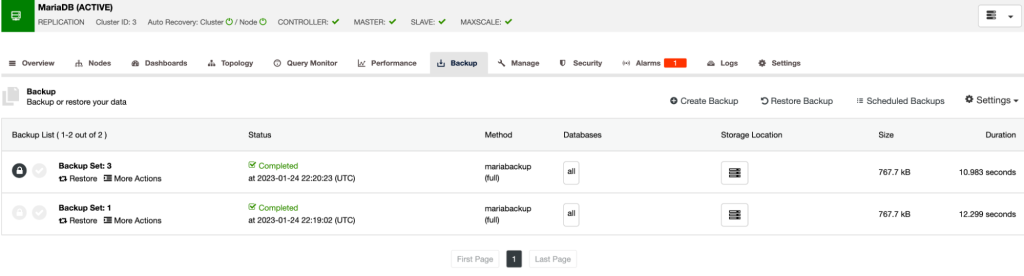

ClusterControl has many advanced backup management features that allow you not only to take different types of backups in different ways, but also to compress, encrypt, and verify them, and more.

The automatic backup verification tool is also useful to make sure that your backups are good to use if needed and avoid problems in the future.

And more…

These features we’ve mentioned are some of the most important ones you can find in ClusterControl, but not the only ones.

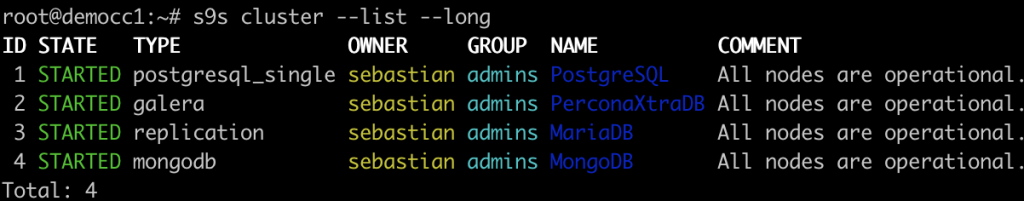

S9S-TOOLS

S9S-Tool is a command-line tool to interact, control, and manage database clusters using the ClusterControl Database Platform. Starting from version 1.4.1, the installer script will automatically install this package on the ClusterControl node. You can also install it on another computer or workstation to manage the database cluster remotely. Communication between this client and ClusterControl is encrypted and secure through TLS. This command-line project is open source and publicly available on GitHub.

S9S-Tool opens a new door for cluster automation where you can easily integrate it with existing deployment automation tools like Ansible, Puppet, Chef, or Salt.
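
As a small illustration of that kind of integration, the Python sketch below wraps one s9s call so a script or automation job can consume its output. It assumes the `s9s` binary is installed and already configured to reach your ClusterControl instance; `s9s cluster --list --long` is used here as the cluster listing command, and you should confirm the options against the s9s documentation for your version. The function names are hypothetical.

```python
import shutil
import subprocess


def s9s_list_clusters_argv():
    """Command line for listing clusters in long format."""
    return ["s9s", "cluster", "--list", "--long"]


def list_clusters():
    """Return the command output, or None if s9s is not installed."""
    if shutil.which("s9s") is None:
        return None
    result = subprocess.run(
        s9s_list_clusters_argv(),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout
```

The same pattern applies when calling the tool from an Ansible, Puppet, Chef, or Salt task: build the command, run it, and act on the result.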

ClusterControl V2

Since ClusterControl 1.9.5, the automatic ClusterControl installation includes ClusterControl V2, a new ClusterControl web application, by default. This new application will continue to improve the user experience and ease of use that our users are accustomed to, and we hope to improve the quality and workflows further.

Wrapping up

Multi-cloud is a powerful trend that offers many benefits, but it also presents a number of challenges that must be addressed. Businesses need to have a clear understanding of the different cloud providers, their capabilities and limitations, and how they can integrate them to meet their specific business needs.

Using a tool like ClusterControl to manage your multi-cloud environment will allow you to quickly set up replication between different cloud providers for different technologies and manage the setup in an easy and user-friendly way. Also, it is useful for standardizing environments, adopting open-source technologies, and implementing a clear governance and compliance strategy. ClusterControl acts as a single pane of glass over all your instances, making it easier to manage and control infrastructure of any size, and best of all, you can try it commitment-free for 30 days.

Interested in learning more about how you can achieve greater control, redundancy, flexibility, and compliance with a multi-cloud strategy? Check out our comprehensive guide to learn about common use cases, considerations for architecting a multi-cloud database, deploying and maintaining a multi-cloud database, and more. For more content regarding multi-cloud implementations and best practices, follow us on LinkedIn & Twitter and subscribe to our newsletter to get the latest updates as soon as we release them.