
Installing a ClusterControl Standby Server

As you might already know, ClusterControl runs on a dedicated host. A failure of the ClusterControl host (or of the datacenter it runs in) does not affect your running database cluster, but you cannot manage the cluster until the host is back. To guard against this, you can deploy a standby ClusterControl server and increase the availability of your management infrastructure.

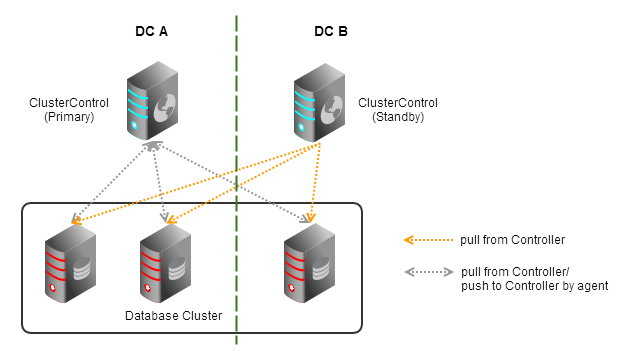

Let’s first have a look at how ClusterControl gathers data from the cluster nodes. As shown in the diagram above, the controller pulls database stats and variables directly from the managed database nodes. Host stats are collected by agents that run on the managed database nodes, and these agents push the stats data to the controller.

It is possible to install a controller on a standby host, and configure the following:

- “enable_autorecovery” should be disabled on the standby side, as we do not want a race condition between the two controllers,

- the standby host must have sufficient privileges to pull database stats from the database hosts and to accept the host stats they report.
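The first requirement can be checked on the standby host before relying on it. The snippet below is only a minimal sketch: the function name `autorecovery_disabled` is hypothetical (it is not part of ClusterControl), and it assumes the setting is stored as an `enable_autorecovery=0` line in /etc/cmon.cnf.

```shell
#!/bin/sh
# Sketch: succeed only if auto recovery is explicitly disabled in the
# controller configuration. autorecovery_disabled is a hypothetical helper.
autorecovery_disabled() {
    conf="${1:-/etc/cmon.cnf}"    # configuration file to inspect
    # Match an active (not commented out) enable_autorecovery=0 line.
    grep -q '^enable_autorecovery=0[[:space:]]*$' "$conf"
}

# Example, on the standby host:
#   autorecovery_disabled && echo "auto recovery is disabled on this standby"
```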

Bear in mind that each ClusterControl agent can only report to one controller at the moment. So if the main controller is down or inaccessible, change the agent’s mysql_hostname value in /etc/cmon.cnf to point to the standby controller host.

Installing Standby Server

We have an automation script to install the standby ClusterControl host, built on top of our bootstrap script available at our download site. On the standby host, do:

$ wget http://severalnines.com/downloads/cmon/cc-bootstrap.tar.gz
$ tar zxvf cc-bootstrap.tar.gz
$ cd cc-bootstrap-*
$ ./s9s_bootstrap --install-standby

Follow the installation wizard, which guides you through the installation process and finishes on the ClusterControl UI page. Advanced users with custom setups who prefer to install manually can follow these instructions for more control over the installation process.

At the end of the installation, you should be able to access the standby ClusterControl UI at http://

Failover Method

At the moment, ClusterControl only supports manual failover to the standby server. To fail over, update the mysql_hostname value in /etc/cmon.cnf on each agent host:

mysql_hostname=

Then, restart the cmon agent to apply the changes:

$ /etc/init.d/cmon restart
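When there are several agent hosts, the edit above can be scripted. The following is a minimal sketch rather than an official ClusterControl tool: the function name `point_agent_at` and the address `192.168.1.20` are hypothetical, and it assumes that mysql_hostname is stored as a plain `key=value` line in the configuration file.

```shell
#!/bin/sh
# Sketch: repoint a cmon agent at the standby controller.
# point_agent_at is a hypothetical helper, not part of ClusterControl.
point_agent_at() {
    new_host="$1"                 # address of the standby controller
    conf="${2:-/etc/cmon.cnf}"    # agent configuration file

    # Keep a backup so the change can be reverted once the primary is back.
    cp "$conf" "$conf.bak" || return 1

    # Rewrite only the mysql_hostname line; every other line is copied as-is.
    sed "s|^mysql_hostname=.*|mysql_hostname=$new_host|" "$conf.bak" > "$conf"
}

# Example (as root, on each agent host), followed by the agent restart:
#   point_agent_at 192.168.1.20 && /etc/init.d/cmon restart
```

The backup copy in /etc/cmon.cnf.bak makes it easy to switch the agent back to the original controller later.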

If you want to enable auto recovery on the standby server, comment out (as shown below) or remove the enable_autorecovery line in /etc/cmon.cnf on the standby host:

#enable_autorecovery=0
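The same change can be made non-interactively. This is a rough sketch under the assumption that the active setting appears as an `enable_autorecovery=0` line; the function name `enable_standby_autorecovery` is hypothetical.

```shell
#!/bin/sh
# Sketch: comment out enable_autorecovery=0 in the controller configuration.
# enable_standby_autorecovery is a hypothetical helper name.
enable_standby_autorecovery() {
    conf="${1:-/etc/cmon.cnf}"    # configuration file on the standby host

    # Keep a backup of the original configuration.
    cp "$conf" "$conf.bak" || return 1

    # Prefix the active line with '#'; an already commented line does not
    # match the pattern and is therefore left untouched.
    sed 's|^enable_autorecovery=0[[:space:]]*$|#&|' "$conf.bak" > "$conf"
}

# Example (as root, on the standby host):
#   enable_standby_autorecovery && /etc/init.d/cmon restart
```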

Do not forget to restart the cmon service after making changes to /etc/cmon.cnf. You should then see that the standby server has taken over the primary role, and that the agents are reporting host statistics directly to the newly promoted primary node.

That’s it for now. We are working on an exciting new feature to automate this process so please stay tuned.